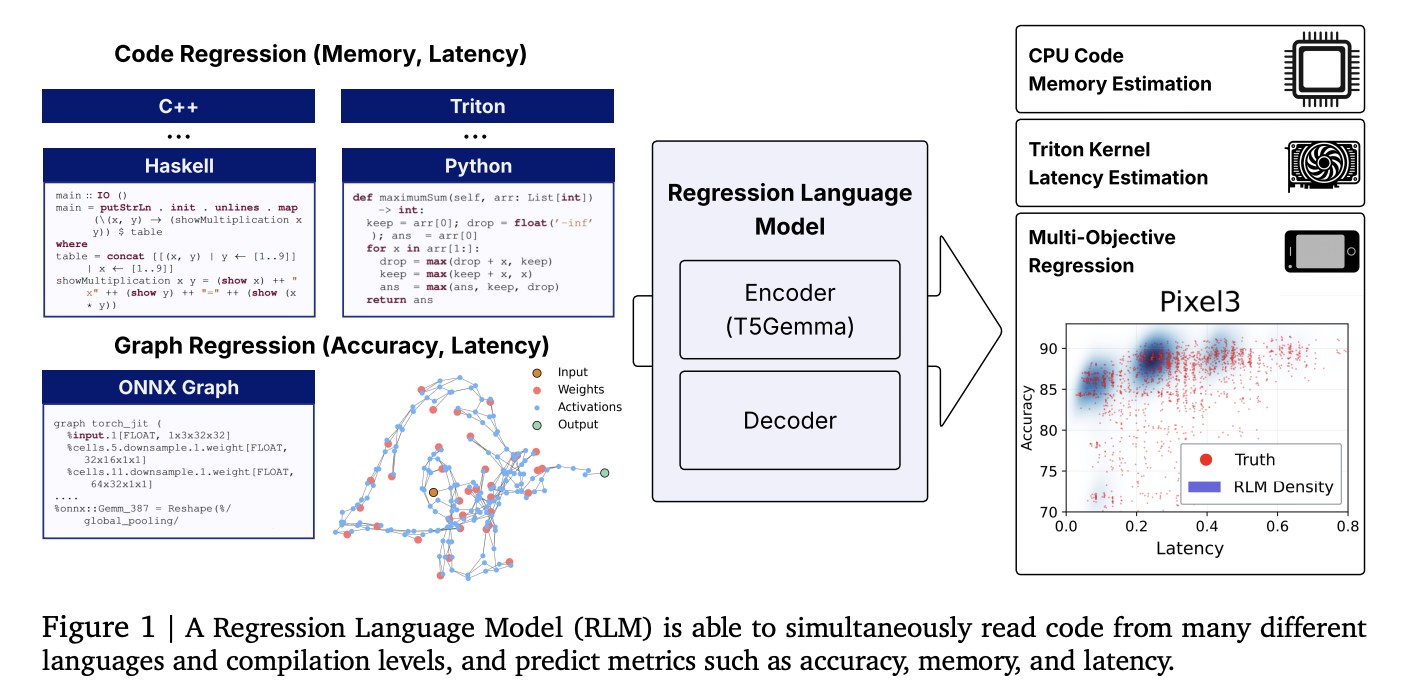

Can a Small Language Model Predict Kernel Latency, Memory, and Model Accuracy from Code? A New Regression Language Model (RLM) Says Yes

Researchers from Cornell and Google introduce a unified Regression Language Model (RLM) that predicts numeric outcomes directly from code strings. It covers GPU kernel latency, program memory usage, and even neural network accuracy and latency, without hand-engineered features. A ~300M-parameter encoder-decoder initialized from T5-Gemma achieves strong rank correlations across heterogeneous tasks and languages, using a single text-to-number decoder that emits digits under constrained decoding.

What's new?

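- Unified code-to-metric regression: One RLM predicts (i) peak memory from high-level code (Python, C, C++, and other languages), (ii) latency of Triton GPU kernels, and (iii) accuracy and hardware-specific latency from ONNX graphs, by reading raw text representations and decoding numeric outputs. No feature engineering, graph encoders, or zero-cost proxies are required.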

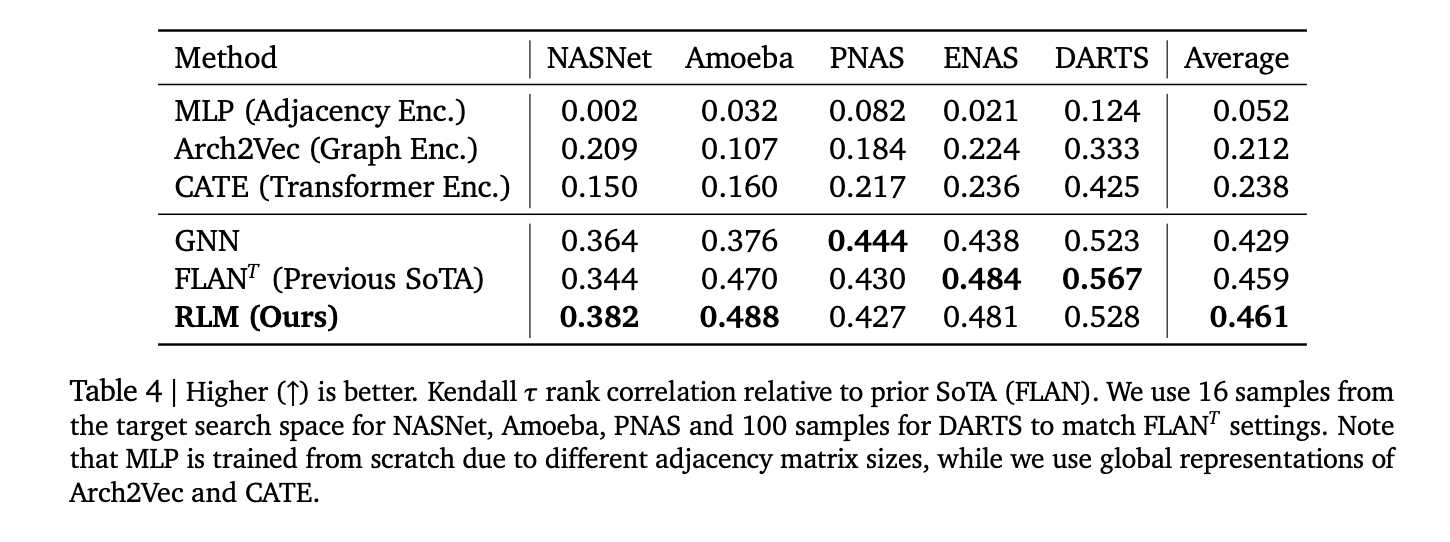

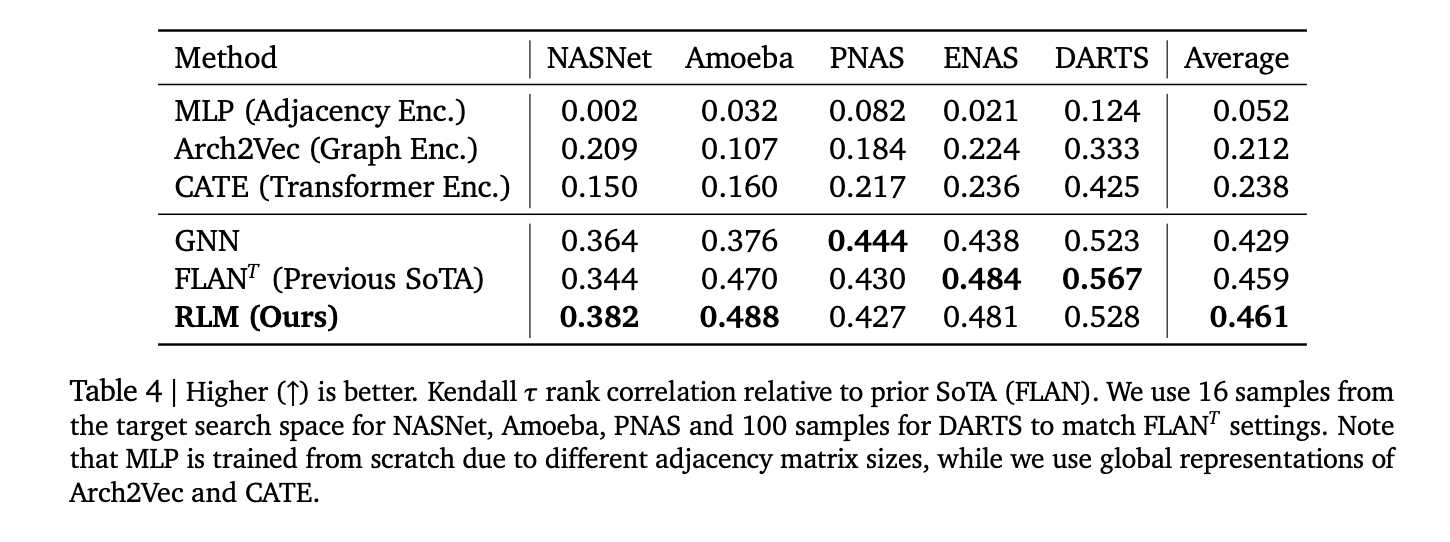

- Concrete results: Reported correlations include Spearman ρ ≈ 0.93 on APPS (LeetCode) memory, ρ ≈ 0.52 for Triton kernel latency, ρ > 0.5 on average across 17 CodeNet languages, and Kendall τ ≈ 0.46 across five classic NAS spaces. These results are competitive with, and in some cases surpass, graph-based predictors.

- Multi-objective decoding: Because the decoder is autoregressive, the model conditions later metrics on earlier ones (e.g., accuracy first, then per-device latencies).

Why is this important?

Performance-prediction pipelines, such as those used for compilers, GPU kernel selection, and NAS, usually depend on bespoke features, syntax trees, or graph encoders that are brittle to new ops and languages. Treating regression as next-token prediction over numbers standardizes the stack: feed inputs as plain text (source code, Triton IR, ONNX), then decode the measured values as numeric strings, digit by digit, with constrained sampling. This reduces maintenance cost and improves transfer to new tasks.
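To make "regression as next-token prediction" concrete, the sketch below turns a measured value into a short token sequence and back. It assumes a hypothetical sign / exponent / four-digit-mantissa format with token spellings such as `<+>`, `<E-2>`, and `<1>`; the exact vocabulary, ordering, and precision used by RLM or the regress-lm library may differ. The point is that values spanning many orders of magnitude (sub-millisecond latencies, multi-megabyte footprints) map onto one small, fixed vocabulary.

```python
import math

MANTISSA_DIGITS = 4         # hypothetical precision: y ~= +/- d0.d1d2d3 x 10^e
MIN_EXP, MAX_EXP = -10, 10  # hypothetical exponent range covered by the vocabulary


def float_to_tokens(y: float) -> list[str]:
    """Encode y as [sign, exponent, mantissa digits...] tokens."""
    sign = "<->" if y < 0 else "<+>"
    y = abs(y)
    if y == 0.0:
        return [sign, "<E0>"] + ["<0>"] * MANTISSA_DIGITS
    exponent = math.floor(math.log10(y))
    # Keep MANTISSA_DIGITS significant digits, e.g. 0.01234 -> 1234 with exponent -2.
    scaled = round(y / 10.0 ** exponent * 10 ** (MANTISSA_DIGITS - 1))
    if scaled >= 10 ** MANTISSA_DIGITS:  # rounding carried into a new digit, e.g. 9.99996
        scaled //= 10
        exponent += 1
    if not MIN_EXP <= exponent <= MAX_EXP:
        raise ValueError(f"{y!r} is outside the representable range")
    digits = str(scaled).zfill(MANTISSA_DIGITS)
    return [sign, f"<E{exponent}>"] + [f"<{d}>" for d in digits]


def tokens_to_float(tokens: list[str]) -> float:
    """Invert float_to_tokens (up to the rounding it applied)."""
    sign = -1.0 if tokens[0] == "<->" else 1.0
    exponent = int(tokens[1][2:-1])                        # "<E-2>" -> -2
    mantissa = int("".join(t[1:-1] for t in tokens[2:]))  # "<1>", "<2>", ... -> 1234
    return sign * mantissa * 10.0 ** (exponent - (MANTISSA_DIGITS - 1))


tokens = float_to_tokens(0.01234)
print(tokens)                   # ['<+>', '<E-2>', '<1>', '<2>', '<3>', '<4>']
print(tokens_to_float(tokens))  # approximately 0.01234
```

Putting the exponent right after the sign means the decoder commits to the order of magnitude before refining digits, which is one reasonable design choice for targets with a wide dynamic range.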

Data and benchmarks

- Code-Regression dataset (HF): Curated to support code-to-metric tasks spanning APPS/LeetCode runs, Triton kernel latencies (derived from KernelBook), and CodeNet memory footprints.

- NAS/ONNX suite: Architectures from NASBench-101/201, FBNet, Once-for-All (MB/PN/RN), TwoPath, HiAML, Inception, and NDS are exported to ONNX text to predict accuracy and device-specific latency.

How does this work?

- Backbone: An encoder-decoder with a T5-Gemma encoder initialization (~300M params). Inputs are raw strings (code or ONNX). Outputs are numbers emitted as sign/exponent/mantissa digit tokens; constrained decoding enforces valid numerals and supports uncertainty estimates via sampling.

- Ablations: (i) language pretraining speeds convergence and improves Triton latency prediction; (ii) emitting numbers as decoded tokens outperforms regression heads, even with y-normalization; (iii) …; (iv) longer contexts help; (v) ….

- Training code: The regress-lm library provides text-to-text regression utilities, constrained decoding, and pretraining/fine-tuning recipes.
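The constrained-decoding step can be sketched as a grammar mask over the vocabulary: at each position, tokens that would make the number malformed get zero probability before sampling. This is a minimal sketch under stated assumptions: the sign, then exponent, then fixed-length mantissa grammar, the token spellings, and `make_toy_model` (random logits standing in for a trained decoder) are all hypothetical and not the regress-lm API.

```python
import math
import random

# Hypothetical vocabulary: number tokens plus tokens that must never appear inside a number.
SIGNS = ["<+>", "<->"]
EXPONENTS = [f"<E{e}>" for e in range(-10, 11)]
DIGITS = [f"<{d}>" for d in range(10)]
VOCAB = SIGNS + EXPONENTS + DIGITS + ["<eos>", "<pad>"]
MANTISSA_DIGITS = 4


def allowed_tokens(step: int) -> set[str]:
    """Grammar for one number: a sign, then an exponent, then MANTISSA_DIGITS digits."""
    if step == 0:
        return set(SIGNS)
    if step == 1:
        return set(EXPONENTS)
    return set(DIGITS)


def constrained_sample(logits_fn, rng: random.Random, temperature: float = 1.0) -> list[str]:
    """Sample one well-formed number, masking disallowed tokens at every step."""
    tokens: list[str] = []
    for step in range(2 + MANTISSA_DIGITS):
        logits = logits_fn(tokens)  # one score per VOCAB entry, given the prefix
        allowed = allowed_tokens(step)
        masked = [x if tok in allowed else -math.inf for tok, x in zip(VOCAB, logits)]
        peak = max(masked)  # subtract the max for a numerically stable softmax
        weights = [math.exp((x - peak) / temperature) for x in masked]  # masked -> 0.0
        tokens.append(rng.choices(VOCAB, weights=weights, k=1)[0])
    return tokens


def make_toy_model(seed: int):
    """Hypothetical stand-in for a trained decoder: ignores its input, returns noise."""
    noise = random.Random(seed)
    return lambda prefix: [noise.gauss(0.0, 3.0) for _ in VOCAB]


sampler_rng = random.Random(1)
number = constrained_sample(make_toy_model(seed=0), sampler_rng)
print(number)  # always a sign, an exponent, then four digits, whatever the logits say
```

Because each call samples rather than taking the argmax, repeated calls return different well-formed numbers for the same input; the spread of those samples is what makes sampling-based uncertainty possible.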

Key statistics

- APPS (Python) memory: Spearman ρ > 0.9.

- CodeNet (17 languages) memory: average ρ > 0.5; the strongest languages include C/C++ (~0.74-0.75).

- Triton Kernels (A6000) Latency: ρ ≈ 0.52.

- NAS ranking: average Kendall τ ≈ 0.46 across NASNet, Amoeba, PNAS, ENAS, and DARTS; competitive with FLAN and GNN baselines.
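Spearman ρ and Kendall τ score how well a predictor ranks programs or architectures, not how close its raw numbers are, which is what matters when picking the fastest kernel or pruning a NAS space. The sketch below computes both from scratch for clarity. It uses the tie-corrected Kendall τ-b (the variant `scipy.stats.kendalltau` computes by default); the paper's exact tie handling may differ, and the five prediction/measurement pairs in the example are made up.

```python
from itertools import combinations
from math import sqrt


def average_ranks(values: list[float]) -> list[float]:
    """1-based ranks; tied values share the mean of the positions they occupy."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        for k in range(start, end + 1):
            ranks[order[k]] = (start + end) / 2 + 1
        start = end + 1
    return ranks


def spearman_rho(x: list[float], y: list[float]) -> float:
    """Pearson correlation between the rank vectors of x and y."""
    rx, ry = average_ranks(x), average_ranks(y)
    mean = (len(x) + 1) / 2  # average ranks always have this mean
    cov = sum((a - mean) * (b - mean) for a, b in zip(rx, ry))
    var_x = sum((a - mean) ** 2 for a in rx)
    var_y = sum((b - mean) ** 2 for b in ry)
    return cov / sqrt(var_x * var_y)


def kendall_tau_b(x: list[float], y: list[float]) -> float:
    """(P - Q) / sqrt((P + Q + ties_x) * (P + Q + ties_y)), P/Q = concordant/discordant."""
    concordant = discordant = ties_x = ties_y = 0
    for i, j in combinations(range(len(x)), 2):
        dx, dy = x[i] - x[j], y[i] - y[j]
        if dx == 0 and dy == 0:
            continue  # tied in both: counted in neither term
        if dx == 0:
            ties_x += 1
        elif dy == 0:
            ties_y += 1
        elif (dx > 0) == (dy > 0):
            concordant += 1
        else:
            discordant += 1
    n_pairs = concordant + discordant
    return (concordant - discordant) / sqrt((n_pairs + ties_x) * (n_pairs + ties_y))


measured = [1.0, 2.0, 3.0, 4.0, 5.0]   # made-up ground-truth latencies
predicted = [2.1, 1.9, 4.2, 3.8, 5.5]  # made-up model predictions
print(round(spearman_rho(predicted, measured), 3))   # 0.8
print(round(kendall_tau_b(predicted, measured), 3))  # 0.6
```

Two adjacent swaps out of ten pairs drop τ to 0.6 while ρ stays at 0.8, which is why the two metrics are not directly comparable across the tables above.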

Key Takeaways

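- Unified code-to-metric regression works. A single ~300M-parameter T5Gemma-initialized model predicts memory from high-level code, Triton GPU kernel latency, and model accuracy plus device latency from ONNX, directly from text and without hand-engineered features.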

- The research reports Spearman ρ > 0.9 on APPS memory, ≈0.52 on Triton latency, > 0.5 on average across 17 CodeNet languages, and Kendall τ ≈ 0.46 on five NAS spaces.

- Numbers are decoded as text under constraints. Instead of a regression head, RLM emits numeric tokens with constrained decoding, enabling multi-metric autoregressive outputs (e.g., accuracy followed by latencies on multiple devices) and uncertainty estimates via sampling.

- The Code-Regression dataset unifies APPS/LeetCode memory, Triton kernel latency, and CodeNet memory; the regress-lm library provides the training and decoding stack.
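Sampling-based uncertainty can be summarized by drawing several decoded numbers for the same input and reading off a median and a central quantile interval. This is a minimal sketch: `toy_latency_samples` is a hypothetical stand-in (log-normal noise around 2 ms) for repeated constrained decoding from the model, and the 80% coverage is an arbitrary choice.

```python
import math
import random


def quantile(sorted_values: list[float], q: float) -> float:
    """Empirical quantile with linear interpolation between order statistics."""
    pos = q * (len(sorted_values) - 1)
    lo, hi = math.floor(pos), math.ceil(pos)
    frac = pos - lo
    return sorted_values[lo] * (1 - frac) + sorted_values[hi] * frac


def summarize(samples: list[float], coverage: float = 0.8) -> tuple[float, float, float]:
    """Return (median, lower, upper) for a central interval with the given coverage."""
    s = sorted(samples)
    tail = (1 - coverage) / 2
    return quantile(s, 0.5), quantile(s, tail), quantile(s, 1 - tail)


def toy_latency_samples(n: int, rng: random.Random) -> list[float]:
    """Hypothetical stand-in for n decoded latency predictions (ms) for one kernel."""
    return [rng.lognormvariate(math.log(2.0), 0.1) for _ in range(n)]


median, lower, upper = summarize(toy_latency_samples(200, random.Random(0)))
print(f"latency ~ {median:.2f} ms (80% interval {lower:.2f} to {upper:.2f})")
```

A wide interval flags inputs where the point prediction deserves less trust, for example when deciding which kernel candidates still need to be benchmarked.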

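It is interesting how this work reframes performance prediction as text-to-number generation: a compact T5Gemma-initialized RLM reads source code, Triton kernels, or ONNX graphs and emits numbers through constrained decoding. The reported correlations (APPS memory ρ > 0.9, Triton latency ρ ≈ 0.52, NAS Kendall τ ≈ 0.46) come without bespoke features or GNNs, and the open dataset and library lower the barrier to fine-tuning on new hardware or languages.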


Check out the Paper, GitHub Page and Dataset Card.

Asif Razzaq is the CEO of Marktechpost Media Inc. As an entrepreneur and engineer, Asif is committed to harnessing the potential of artificial intelligence for social good. His most recent endeavor is the launch of an artificial intelligence media platform, Marktechpost, which stands out for its in-depth coverage of machine learning and deep learning news that is both technically sound and easily understandable by a wide audience. The platform has over two million monthly views, illustrating its popularity among audiences.
