How to Run Your ML Notebook on Databricks

Databricks is one of the leading platforms for building and deploying machine learning models at scale. It combines the power of Apache Spark with a notebook-friendly interface, experiment tracking, and integrated data management. In this article, I will guide you step by step through hosting your ML notebook on Databricks. Databricks offers several plans, but I will be using the free plan, as it is suitable for learning, testing, and small projects.

Understanding Databricks plans

Before we begin, let's quickly go through the Databricks plans available.

1. Free Edition

The Free Edition (formerly Community Edition) is the easiest way to get started.

You can sign up at databricks.com/learn/free-edition.

It has:

- A single-user workspace

- Access to a small compute cluster

- Support for Python, SQL, and Scala

- MLflow integration for experiment tracking

It's completely free and hosted. The main limitations are that compute shuts down after a period of inactivity, resources are limited, and certain enterprise features are disabled. However, it is well suited to new users or anyone trying Databricks for the first time.

2. Standard plan

The Standard plan is suited to small teams.

It offers better performance, larger compute clusters, and integration with your cloud storage (such as an AWS or Azure data lake).

This tier lets you connect to your own databases and scale compute manually when needed.

3. Premium plan

The Premium plan adds advanced security, role-based access control (RBAC), and compliance capabilities.

It is common among mid-sized teams that need user management, audit logging, and integration with enterprise systems.

4. Enterprise / Professional plan

The Enterprise or Professional plan (depending on your cloud provider) includes everything in the Premium plan, plus advanced management capabilities such as Unity Catalog, Delta Live Tables, and scheduled jobs.

This is often used in production environments with multiple teams running workloads at scale. For this tutorial, I will be using Databricks Free Edition.

Hands-on

You can use the Free Edition to try Databricks at no cost and see how it works.

Here's how to follow along.

Step 1: Sign up for Databricks Free Edition

- Visit the Databricks Free Edition sign-up page.

- Sign up with your email, Google, or Microsoft account.

- After logging in, Databricks automatically creates a workspace.

The dashboard you see is your command center: you can manage notebooks, clusters, and data all from here.

No local installation is required.

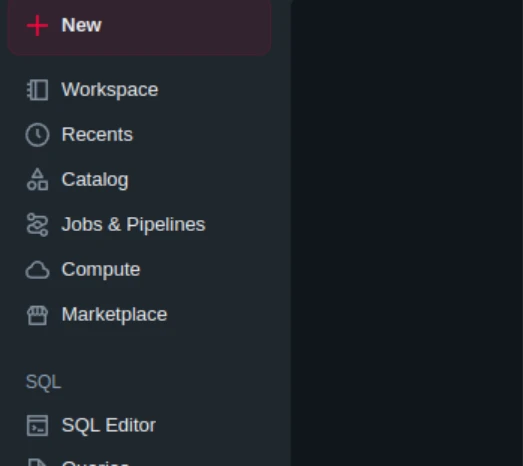

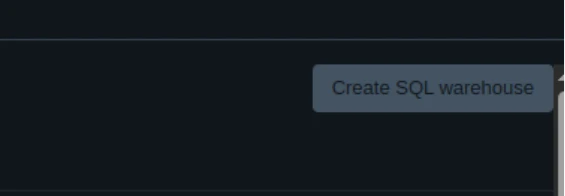

Step 2: Create a cluster

Databricks runs your code on a cluster, a managed compute environment. You need one to run your notebook.

- In the sidebar, navigate to Compute.

- Click Create compute (or Create cluster).

- Name your cluster.

- Choose the default runtime (preferably Databricks Runtime for Machine Learning).

- Click Create and wait for the cluster to start.

When the status shows the cluster is running, you are ready to use it from your notebook.

In the free plan, clusters can shut down automatically after inactivity. You can restart them whenever you want.

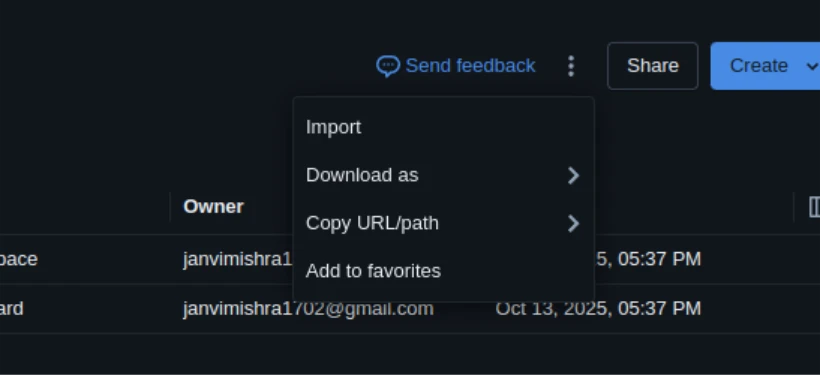

Step 3: Import or create a notebook

You can use your own ML Notebook or create a new one from scratch.

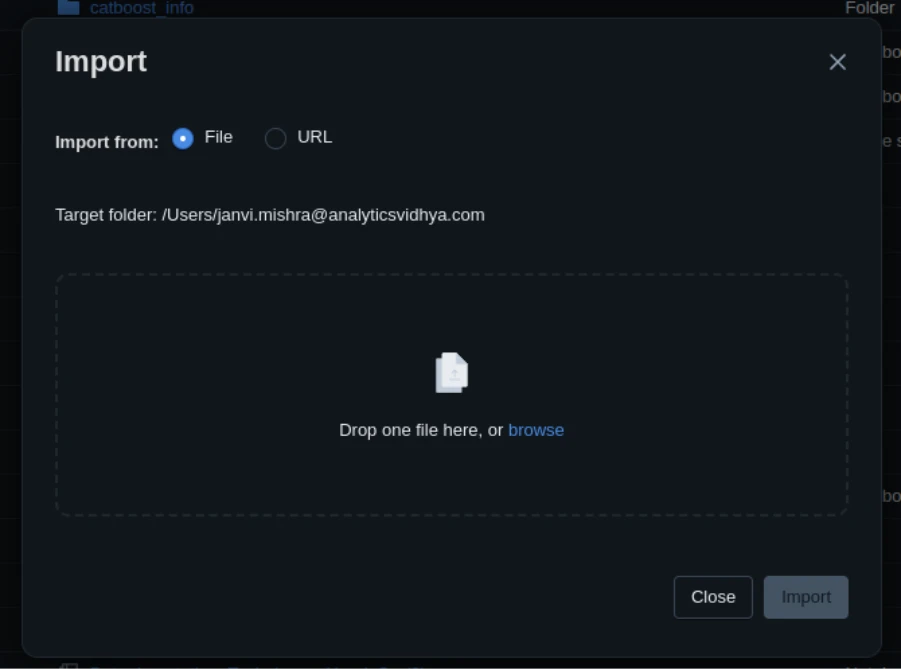

To import a notebook:

- Go to Workspace.

- Open the drop-down menu next to your folder → Import → File.

- Upload your .ipynb or .py file.

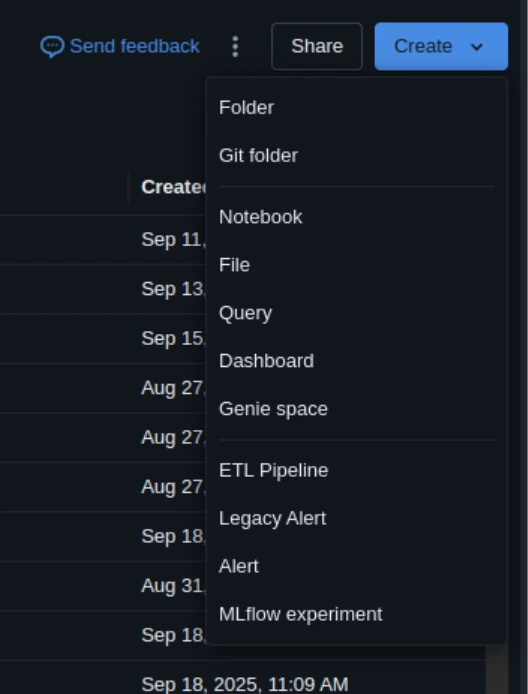

To create a new notebook:

- Click Create → Notebook.

After creating it, attach the notebook to your running cluster using the compute drop-down.

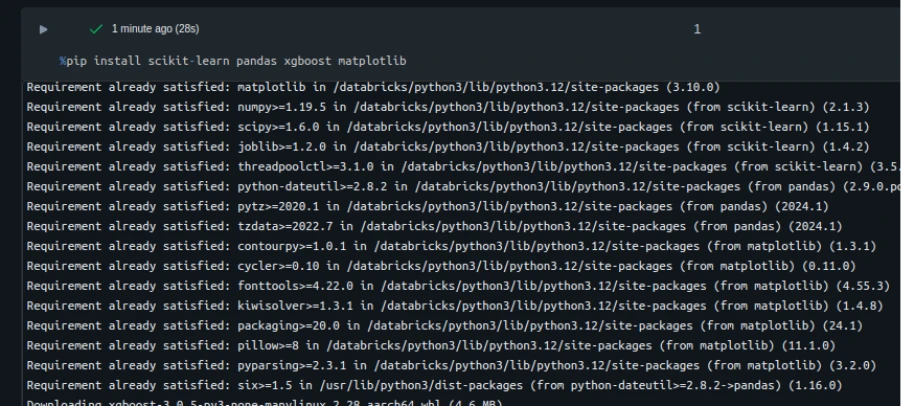

Step 4: Add dependencies

If your notebook depends on libraries like scikit-learn, pandas, or xgboost, install them in a notebook cell.

Use:

```
%pip install scikit-learn pandas xgboost matplotlib seaborn
```

Databricks may restart the Python environment after installation; this is expected.

Note: You may need to restart the Python process with %restart_python or dbutils.library.restartPython() before the updated packages can be used.
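To confirm that several packages are installed after the restart, one option is a small helper built on the standard library's `importlib.metadata`. This is a minimal sketch, not a Databricks feature: the helper name `installed_versions` is hypothetical, and the package list simply mirrors the `%pip` command above. Note that it takes distribution names (`scikit-learn`), not import names (`sklearn`).

```python
from importlib import metadata


def installed_versions(packages):
    """Return {distribution name: installed version, or None if missing}."""
    versions = {}
    for name in packages:
        try:
            # Looks up the installed distribution by its pip name
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            # Not installed in the current Python environment
            versions[name] = None
    return versions


# Hypothetical list matching the %pip command above
required = ["scikit-learn", "pandas", "xgboost", "matplotlib", "seaborn"]
for pkg, ver in installed_versions(required).items():
    print(f"{pkg}: {ver or 'NOT INSTALLED'}")
```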

You can also install everything listed in a requirements.txt file:

```
%pip install -r requirements.txt
```

To confirm the setup:

```python
import sys

import sklearn

print(sys.version)
print(sklearn.__version__)
```

Step 5: Run the notebook

Now you can run your code.

Each cell runs on your Databricks cluster.

- Press Shift + Enter to run a single cell.

- Click Run all to run the entire notebook.

You will get the same results as in Jupyter.

Databricks also provides a preconfigured Spark session (available as spark), so if your notebook includes big data operations you can use Spark without any extra setup, even in the Free Edition.

You can monitor resource usage and job progress in the Spark UI (available under the cluster details).

Step 6: Coding on Databricks

Now that your cluster and environment are set up, let's write and run an ML notebook on Databricks.

We'll go through a full example, an NPS regression study, which uses regression modeling to predict customer satisfaction (NPS scores).
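For context, the standard Net Promoter Score groups 0 to 10 "likelihood to recommend" ratings into promoters (9 to 10), passives (7 to 8), and detractors (0 to 6), and reports NPS as the percentage of promoters minus the percentage of detractors. The example below models the individual `NPS_Rating` values; assuming they are such 0 to 10 ratings, a minimal sketch of the aggregate score is shown here (the function name `net_promoter_score` is hypothetical).

```python
def net_promoter_score(ratings):
    """Compute NPS (from -100 to 100) from 0-10 likelihood-to-recommend ratings."""
    ratings = list(ratings)
    if not ratings:
        raise ValueError("need at least one rating")
    promoters = sum(1 for r in ratings if r >= 9)   # ratings 9-10
    detractors = sum(1 for r in ratings if r <= 6)  # ratings 0-6
    # Passives (7-8) count toward the total but not the numerator
    return 100 * (promoters - detractors) / len(ratings)


# 4 promoters and 3 detractors out of 10 responses
print(net_promoter_score([10, 9, 8, 7, 6, 3, 10, 9, 5, 8]))  # 10.0
```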

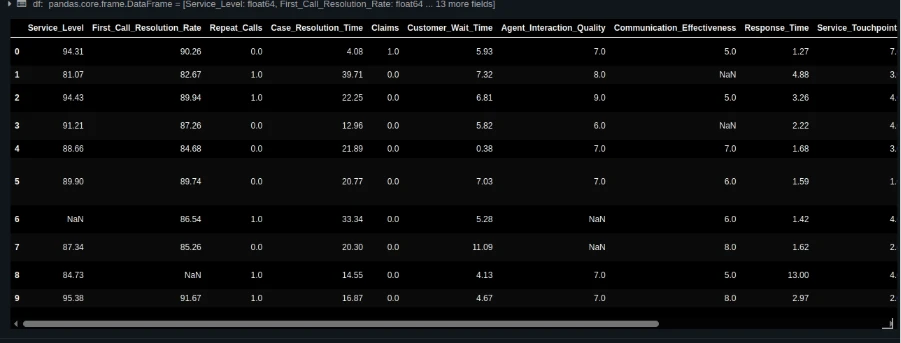

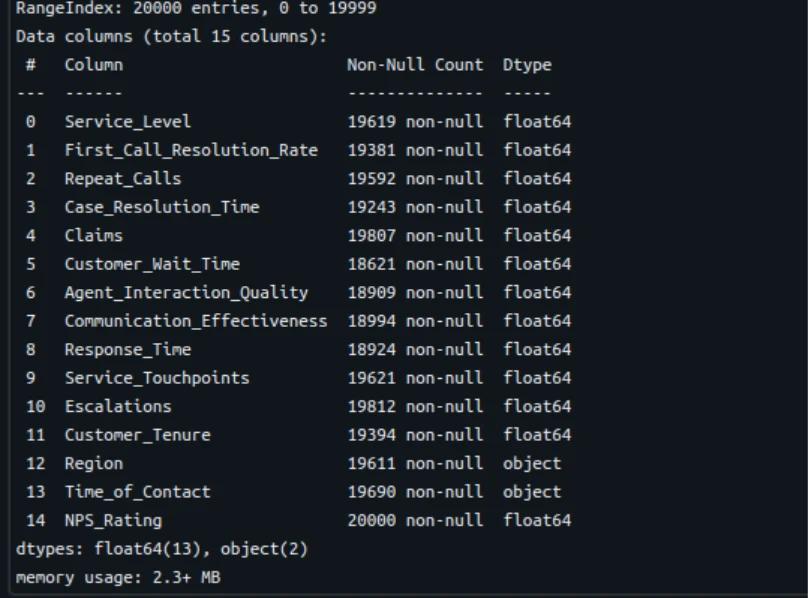

1: Load and check the data

Import your CSV file into your workspace and load it with pandas:

```python
from pathlib import Path

import pandas as pd

# Replace <your-email> with your own workspace user folder
DATA_PATH = Path("/Workspace/Users/<your-email>/nps_data_with_missing.csv")

df = pd.read_csv(DATA_PATH)
df.head()
```

Check the data:

```python
df.info()
df.describe().T
```

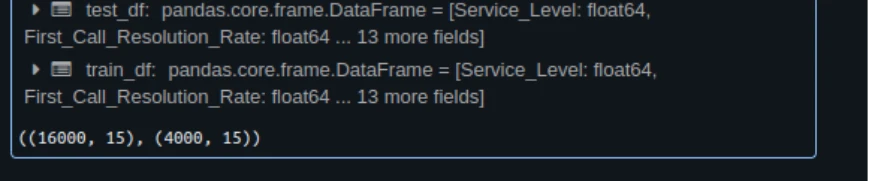
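Because the file name suggests the data contains missing values, it is worth quantifying them per column before building the pipeline. A minimal sketch on a small hypothetical DataFrame (the helper `missing_summary` and the toy columns are made up for illustration); on Databricks you would call the same function on `df`:

```python
import numpy as np
import pandas as pd


def missing_summary(frame):
    """Count and percentage of missing values per column, most missing first."""
    counts = frame.isna().sum()
    summary = pd.DataFrame({
        "missing": counts,
        "pct": (counts / len(frame) * 100).round(1),
    })
    return summary.sort_values("missing", ascending=False)


# Hypothetical toy data standing in for the NPS dataset
toy = pd.DataFrame({
    "Age": [25, np.nan, 40, 33],
    "Region": ["North", "South", np.nan, np.nan],
    "NPS_Rating": [9, 6, 10, 7],
})
print(missing_summary(toy))
```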

2: Train/test split

```python
from sklearn.model_selection import train_test_split

TARGET = "NPS_Rating"

# Hold out 20% of rows for testing; random_state makes the split repeatable
train_df, test_df = train_test_split(df, test_size=0.2, random_state=42)
train_df.shape, test_df.shape
```
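A common variant separates features and target before splitting, so the same `X_train`/`y_train` objects are reused for fitting and evaluation instead of calling `drop(columns=...)` repeatedly. A minimal sketch on synthetic data (the `demo` frame and its `feature` column are hypothetical stand-ins for the NPS data):

```python
import numpy as np
import pandas as pd
from sklearn.model_selection import train_test_split

TARGET = "NPS_Rating"

# Synthetic stand-in: 100 rows, one feature plus a 0-10 integer target
rng = np.random.default_rng(0)
demo = pd.DataFrame({
    "feature": rng.normal(size=100),
    TARGET: rng.integers(0, 11, size=100),
})

X = demo.drop(columns=[TARGET])
y = demo[TARGET]

# Rows stay aligned between X and y because both are split together
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=42
)
print(X_train.shape, X_test.shape)  # (80, 1) (20, 1)
```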

3: Quick EDA

```python
import matplotlib.pyplot as plt
import seaborn as sns

# Distribution of the target in the training split
sns.histplot(train_df["NPS_Rating"], bins=10, kde=True)
plt.title("Distribution of NPS Ratings")
plt.show()
```

4: Prepare the data and build pipelines

```python
from sklearn.compose import ColumnTransformer
from sklearn.impute import KNNImputer, SimpleImputer
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import OneHotEncoder, StandardScaler

# Split feature columns by type (the target is excluded from the numeric list)
num_cols = train_df.select_dtypes("number").columns.drop("NPS_Rating").tolist()
cat_cols = train_df.select_dtypes(include=["object", "category"]).columns.tolist()

# Numeric: fill gaps from the 5 nearest neighbours, then standardize
numeric_pipeline = Pipeline([
    ("imputer", KNNImputer(n_neighbors=5)),
    ("scaler", StandardScaler()),
])

# Categorical: mark missing values as "Unknown", then one-hot encode
categorical_pipeline = Pipeline([
    ("imputer", SimpleImputer(strategy="constant", fill_value="Unknown")),
    ("ohe", OneHotEncoder(handle_unknown="ignore", sparse_output=False)),
])

preprocess = ColumnTransformer([
    ("num", numeric_pipeline, num_cols),
    ("cat", categorical_pipeline, cat_cols),
])
```

5: Train the model

```python
from sklearn.linear_model import LinearRegression
from sklearn.metrics import mean_squared_error, r2_score

# Chain preprocessing and the regressor so both are fit together
lin_pipeline = Pipeline([
    ("preprocess", preprocess),
    ("model", LinearRegression()),
])
```

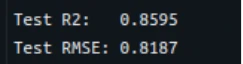

```python
lin_pipeline.fit(train_df.drop(columns=["NPS_Rating"]), train_df["NPS_Rating"])
```

6: Evaluate model performance

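```python
import numpy as np

y_pred = lin_pipeline.predict(test_df.drop(columns=["NPS_Rating"]))

r2 = r2_score(test_df["NPS_Rating"], y_pred)
# squared=False is deprecated in newer scikit-learn releases and later removed,
# so take the square root of the MSE explicitly
rmse = np.sqrt(mean_squared_error(test_df["NPS_Rating"], y_pred))

print(f"Test R2: {r2:.4f}")
print(f"Test RMSE: {rmse:.4f}")
```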
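For reference, R2 is 1 minus the ratio of the residual sum of squares to the total sum of squares, and RMSE is the square root of the mean squared residual. A small check that computes both by hand and compares them with scikit-learn, using made-up numbers rather than the article's results:

```python
import numpy as np
from sklearn.metrics import mean_squared_error, r2_score

# Made-up actual and predicted NPS ratings, for illustration only
y_true = np.array([9.0, 6.0, 10.0, 7.0, 3.0])
y_hat = np.array([8.0, 6.0, 9.0, 8.0, 4.0])

residuals = y_true - y_hat
ss_res = np.sum(residuals ** 2)                 # residual sum of squares
ss_tot = np.sum((y_true - y_true.mean()) ** 2)  # total sum of squares
r2_manual = 1 - ss_res / ss_tot
rmse_manual = np.sqrt(np.mean(residuals ** 2))

print(f"R2:   {r2_manual:.4f} vs {r2_score(y_true, y_hat):.4f}")
print(f"RMSE: {rmse_manual:.4f} vs {np.sqrt(mean_squared_error(y_true, y_hat)):.4f}")
```

Here the residuals square to 4 in total against a total sum of squares of 30, so R2 is 1 - 4/30 and RMSE is the square root of 0.8.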

7: Visualize the prediction

plt.scatter(test_df["NPS_Rating"], y_pred, alpha=0.7)

plt.xlabel("Actual NPS")

plt.ylabel("Predicted NPS")

plt.title("Predicted vs Actual NPS Scores")

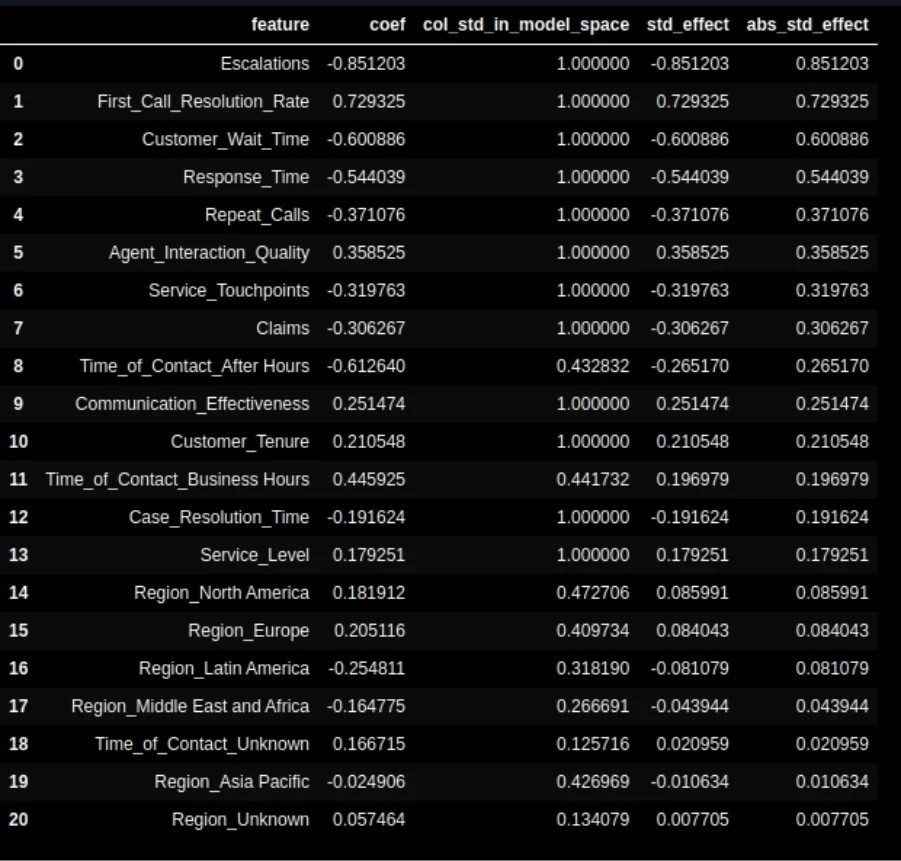

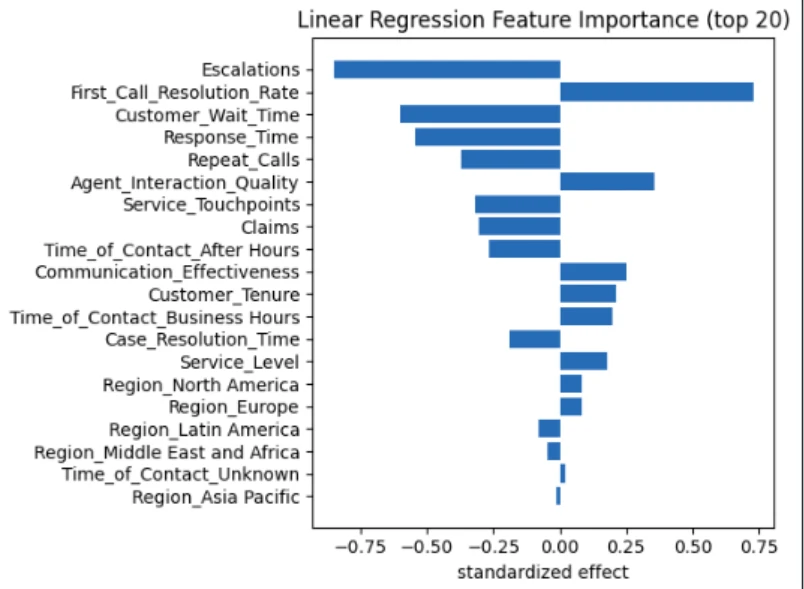

plt.show() 8: The importance of the feature

```python
# Recover the output column names of the preprocessing step
ohe = lin_pipeline.named_steps["preprocess"].named_transformers_["cat"].named_steps["ohe"]
feature_names = num_cols + ohe.get_feature_names_out(cat_cols).tolist()

coefs = lin_pipeline.named_steps["model"].coef_.ravel()

# Rank by absolute coefficient so strong negative effects are not buried at the bottom
imp_df = (
    pd.DataFrame({"feature": feature_names, "coefficient": coefs})
    .sort_values("coefficient", key=abs, ascending=False)
)
imp_df.head(10)
```

Visualize:

```python
top = imp_df.head(15)
plt.barh(top["feature"][::-1], top["coefficient"][::-1])
plt.xlabel("Coefficient")
plt.title("Top Features Influencing NPS")
plt.tight_layout()
plt.show()
```

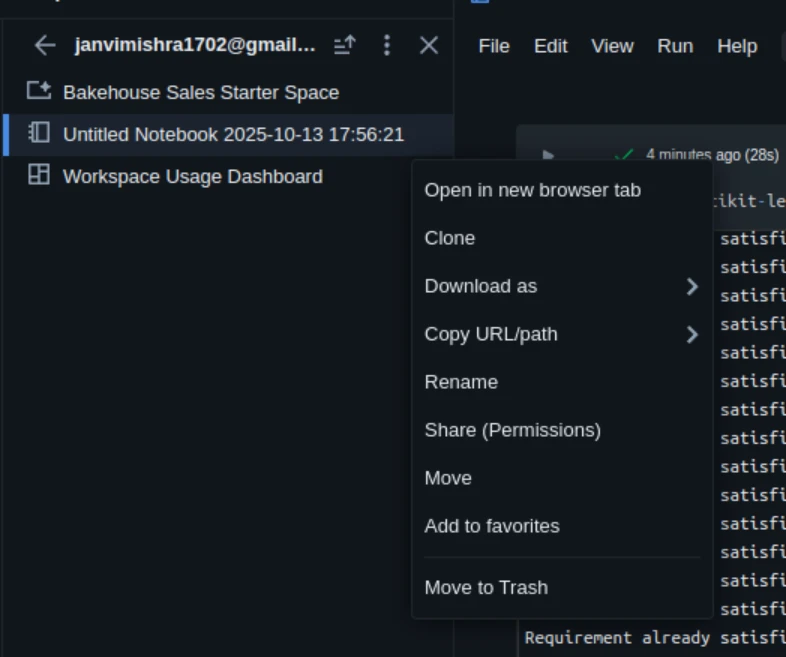
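Linear coefficients are only directly comparable when inputs share a scale, and the one-hot columns here are not standardized. A model-agnostic alternative (a different technique from the coefficient ranking above) is scikit-learn's `permutation_importance`, which measures how much the score drops when one input column is shuffled. A minimal sketch on synthetic data; with the article's objects you would pass `lin_pipeline` and the held-out test features and target instead, in which case shuffling happens on the raw input columns and each categorical column gets a single score.

```python
import numpy as np
import pandas as pd
from sklearn.inspection import permutation_importance
from sklearn.linear_model import LinearRegression

# Synthetic data: "signal" drives the target, "noise" does not
rng = np.random.default_rng(0)
X_demo = pd.DataFrame({
    "signal": rng.normal(size=200),
    "noise": rng.normal(size=200),
})
y_demo = 3 * X_demo["signal"] + rng.normal(scale=0.1, size=200)

model = LinearRegression().fit(X_demo, y_demo)

# Shuffle each column n_repeats times and record the drop in R2
# (R2 is the default score for scikit-learn regressors)
result = permutation_importance(model, X_demo, y_demo, n_repeats=10, random_state=0)
perm_df = pd.DataFrame({
    "feature": X_demo.columns,
    "importance_mean": result.importances_mean,
    "importance_std": result.importances_std,
}).sort_values("importance_mean", ascending=False)
print(perm_df)
```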

Step 7: Save and share your work

Databricks notebooks are automatically saved to your workspace.

You can also export them to share with others or keep as a backup:

- Open the File menu (or the three-dot menu) and click Export.

- Choose .ipynb, .dbc, or .html.

For version control, you can connect your GitHub repository through Git folders (formerly Repos).

Things to Know About the Free Edition

The Free Edition is great, but keep the following in mind:

- Clusters shut down after an idle period (about 2 hours).

- Storage capacity is limited.

- Certain enterprise capabilities are not available (such as Delta Live Tables and job scheduling).

- It is not intended for production workloads.

Still, it's a perfect place to learn ML, try Spark, and test models.

Conclusion

Databricks makes it easy to run ML notebooks in the cloud, with no local installation or infrastructure to manage. You can start with the free plan, develop and test your models, and upgrade to a paid plan later if you need additional capabilities or collaboration features. Whether you're a student, data scientist, or ML developer, Databricks provides a seamless path from prototype to production.

If you have never used it before, sign up for the Free Edition and start running your ML notebooks today.

Frequently Asked Questions

A. Sign up for Databricks Free Edition at databricks.com/learn/free-edition. It gives you a single-user workspace, a small compute cluster, and built-in MLflow support.

A. No. The free plan is fully hosted. You can create clusters, import notebooks, and run ML code directly in your browser.

A. Use %pip install library_name inside a notebook cell. You can also install from a requirements.txt file using %pip install -r requirements.txt.
