Microsoft Releases VibeVoice-ASR: An Integrated Speech-to-Text Model Designed to Capture 60-Minute Long-Form Audio in a Single Pass.

Microsoft has released VibeVoice-ASR as part of VibeVoice, its family of open-source voice AI models. VibeVoice-ASR is described as an integrated speech-to-text model that handles 60-minute long-form audio in a single pass and outputs structured transcripts encoding who spoke, when, and what was said, with support for customized hotwords.

The VibeVoice repository hosts Text-to-Speech, real-time TTS, and Automatic Speech Recognition models under the MIT license. VibeVoice uses continuous speech tokenizers operating at 7.5 Hz and a next-token diffusion framework, in which a Large Language Model reasons over text and dialogue while a diffusion head generates acoustic detail. This framework is documented primarily for TTS, but it describes the overall design context in which VibeVoice-ASR sits.

Long-form ASR with one global context

Unlike conventional ASR (Automatic Speech Recognition) systems, which first cut audio into short segments and then run diarization and alignment as separate components, VibeVoice-ASR is designed to accept up to 60 minutes of continuous audio input within a 64K token length budget. The model maintains a single global representation of the complete session, so it can keep track of speaker identity and topic context across an entire hour instead of resetting every few seconds.
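A quick back-of-the-envelope check shows why an hour of audio can fit. The sketch below assumes that VibeVoice-ASR consumes audio at the same 7.5 Hz token rate as the VibeVoice tokenizers (the article states this rate for VibeVoice generally, not for the ASR model specifically) and reads "64K" as 65,536 tokens. Under those assumptions, 60 minutes of audio needs 3,600 s × 7.5 = 27,000 tokens, leaving roughly 38K tokens for prompt text and the generated transcript.

```python
# Assumptions (not confirmed for VibeVoice-ASR): audio is tokenized at the
# 7.5 Hz rate used by the VibeVoice tokenizers, and "64K" means 65,536 tokens.
FRAME_RATE_HZ = 7.5          # speech tokens per second of audio (assumed)
CONTEXT_TOKENS = 64 * 1024   # total context window in tokens (assumed)


def audio_tokens(minutes: float, frame_rate_hz: float = FRAME_RATE_HZ) -> int:
    """Number of speech tokens needed to cover `minutes` of audio."""
    return round(minutes * 60 * frame_rate_hz)


def remaining_budget(minutes: float, context: int = CONTEXT_TOKENS) -> int:
    """Tokens left over for prompt text (e.g. hotwords) and the transcript."""
    return context - audio_tokens(minutes)


if __name__ == "__main__":
    for m in (1, 10, 60):
        print(f"{m:>3} min -> {audio_tokens(m):>6} audio tokens, "
              f"{remaining_budget(m):>6} tokens left for text")
```

The low frame rate is what makes this arithmetic work: at a typical 50 Hz encoder rate, the same hour would need 180,000 audio tokens on its own.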

60 Minutes Single-Pass Processing

The first key feature is 60-minute single-pass processing. Most standard ASR systems process long audio by cutting it into short segments, which can lose global context. VibeVoice-ASR instead takes up to 60 minutes of continuous audio within a 64K token window, so it can maintain consistent speaker tracking and semantic context throughout the recording.

This matters for tasks such as meeting transcription, lectures, and long support calls. A single pass over the complete sequence simplifies the pipeline: there is no need for custom logic to merge partial hypotheses or reconcile speaker labels at the boundaries between audio segments.
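To make the contrast concrete, here is a hedged sketch of the kind of glue code a chunked pipeline typically needs, and that single-pass processing avoids: shifting chunk-relative timestamps onto a global timeline and mapping each chunk's local speaker labels onto global IDs by comparing speaker embeddings. The input format, the `stitch_chunks` helper, and the 0.8 cosine-similarity threshold are all hypothetical illustrations; none of this is part of VibeVoice.

```python
import math
from dataclasses import dataclass


@dataclass
class Segment:
    start: float   # seconds, chunk-relative before stitching, global after
    end: float
    speaker: str   # chunk-local label before stitching, global ID after
    text: str


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two embedding vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def stitch_chunks(chunks, threshold: float = 0.8) -> list[Segment]:
    """Merge independently diarized chunks into one global transcript.

    Hypothetical input: each chunk is (offset_seconds, segments, embeddings),
    where `embeddings` maps each chunk-local speaker label to an embedding.
    """
    references: dict[str, list[float]] = {}  # global ID -> first-seen embedding
    merged: list[Segment] = []
    for offset, segments, embeddings in chunks:
        mapping: dict[str, str] = {}
        for local, emb in embeddings.items():
            # Two local speakers in one chunk must not share a global ID.
            candidates = [g for g in references if g not in mapping.values()]
            best = max(candidates, key=lambda g: cosine(emb, references[g]), default=None)
            if best is not None and cosine(emb, references[best]) >= threshold:
                mapping[local] = best
            else:
                new_id = f"SPK{len(references)}"
                references[new_id] = emb
                mapping[local] = new_id
        for s in segments:
            # Shift chunk-relative timestamps onto the global timeline.
            merged.append(Segment(s.start + offset, s.end + offset, mapping[s.speaker], s.text))
    return merged


if __name__ == "__main__":
    chunk1 = (0.0, [Segment(0.0, 4.0, "A", "Let's start."), Segment(4.0, 9.0, "B", "Sure.")],
              {"A": [1.0, 0.0], "B": [0.0, 1.0]})
    # In chunk 2 the local labels happen to be swapped relative to chunk 1.
    chunk2 = (30.0, [Segment(0.0, 3.0, "A", "As I said."), Segment(3.0, 6.0, "B", "Right.")],
              {"A": [0.1, 0.99], "B": [0.98, 0.05]})
    for seg in stitch_chunks([chunk1, chunk2]):
        print(seg)
```

Every heuristic here, such as the similarity threshold and keeping only the first-seen embedding per speaker, is a place where labels can drift across chunk boundaries. A model that sees the whole recording at once does not need this step.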

Customized hotwords for domain accuracy

Customized hotwords are the second main feature. Users can supply hotwords such as product names, organization names, technical terms, or background context, and the model uses them to guide recognition.

This helps the model recognize and correctly spell domain-specific terms without retraining. For example, a developer can pass internal project names or customer-specific terminology at inference time. This is useful when the same base model is deployed across several products that share similar acoustic conditions but use very different vocabularies.
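The exact format in which hotwords reach the model is defined by the VibeVoice repository and is not described in this article. The sketch below only illustrates the general idea of normalizing a user's term list (trimming, case-insensitive de-duplication, capping its length) into a text context that could condition decoding. The `build_hotword_context` name, the `Context:`/`Hotwords:` layout, and the `max_terms` cap are hypothetical.

```python
def build_hotword_context(hotwords, background=None, max_terms=50):
    """Format hotwords (and optional background text) as a context string.

    Hypothetical helper: the real prompt format used by VibeVoice-ASR may differ.
    """
    seen = set()
    terms = []
    for word in hotwords:
        word = word.strip()
        key = word.casefold()          # "VibeVoice" and "vibevoice" count once
        if word and key not in seen:   # skip blanks and duplicates
            seen.add(key)
            terms.append(word)
    terms = terms[:max_terms]          # keep the context short (assumed cap)
    parts = []
    if background:
        parts.append(f"Context: {background.strip()}")
    if terms:
        parts.append("Hotwords: " + ", ".join(terms))
    return "\n".join(parts)


if __name__ == "__main__":
    print(build_hotword_context(
        ["VibeVoice", "vibevoice", " LoRA ", "", "tcpWER"],
        background="Weekly ASR team sync",
    ))
```

Keeping the list short and de-duplicated matters because any prompt text shares the same context window as the audio tokens.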

Microsoft also ships a finetuning-asr directory with LoRA-based fine-tuning scripts for VibeVoice-ASR. Together, hotwords and LoRA fine-tuning cover both lightweight, inference-time adaptation and deeper domain specialization.
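Why LoRA counts as lightweight comes down to parameter counts. LoRA freezes a weight matrix W and learns a low-rank update, so the layer computes y = W x + (alpha / r) · B (A x). The sketch below shows this general arithmetic, not the repository's fine-tuning scripts; the 4096 hidden size and rank 16 are illustrative assumptions, not VibeVoice-ASR's actual configuration. With those numbers, one adapted layer trains 131,072 parameters instead of 16,777,216, about 0.78%.

```python
def full_params(d_in: int, d_out: int) -> int:
    """Trainable parameters when fully fine-tuning a d_out x d_in weight."""
    return d_in * d_out


def lora_params(d_in: int, d_out: int, rank: int) -> int:
    """Trainable parameters for a LoRA adapter: B (d_out x r) plus A (r x d_in)."""
    return rank * (d_in + d_out)


def lora_forward(W, A, B, x, alpha: float, rank: int):
    """Compute y = W x + (alpha / rank) * B (A x) with nested lists as matrices.

    W stays frozen; only A and B would be trained.
    """
    def matvec(M, v):
        return [sum(m * vi for m, vi in zip(row, v)) for row in M]

    base = matvec(W, x)               # frozen path
    delta = matvec(B, matvec(A, x))   # low-rank update path
    scale = alpha / rank
    return [b + scale * d for b, d in zip(base, delta)]


if __name__ == "__main__":
    d, r = 4096, 16                   # hypothetical layer size and rank
    full, lora = full_params(d, d), lora_params(d, d, r)
    print(f"full: {full:,}  lora: {lora:,}  ratio: {lora / full:.2%}")
```

In common LoRA practice B starts at zero, so the adapted model initially behaves exactly like the base model and drifts only as far as training pushes it.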

Rich transcription with diarization and timestamps

The third feature is rich transcription of Who, When, and What. The model jointly performs ASR, diarization, and timestamping, and returns structured output indicating who said what and when.
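The article does not document the output schema, so the following is only a sketch of how downstream code might consume such output. It assumes a hypothetical JSON list of records with `speaker`, `start`, `end` (seconds), and `text` fields, sorts them by start time, and renders a readable "who, when, what" transcript.

```python
import json
from dataclasses import dataclass


@dataclass
class Utterance:
    speaker: str
    start: float  # seconds from the beginning of the recording
    end: float
    text: str


def parse_transcript(raw: str) -> list[Utterance]:
    """Parse a hypothetical JSON list of {speaker, start, end, text} records."""
    items = json.loads(raw)
    utts = [Utterance(str(i["speaker"]), float(i["start"]), float(i["end"]), i["text"])
            for i in items]
    return sorted(utts, key=lambda u: u.start)


def fmt_time(seconds: float) -> str:
    """Format seconds as HH:MM:SS (fractional seconds are truncated)."""
    s = int(seconds)
    return f"{s // 3600:02d}:{s % 3600 // 60:02d}:{s % 60:02d}"


def render(utts: list[Utterance]) -> str:
    """One line per utterance: [start-end] Speaker N: text."""
    return "\n".join(
        f"[{fmt_time(u.start)}-{fmt_time(u.end)}] Speaker {u.speaker}: {u.text}"
        for u in utts
    )


if __name__ == "__main__":
    raw = ('[{"speaker": 1, "start": 65.2, "end": 68.0, "text": "Next item is the hotword list."},'
           ' {"speaker": 0, "start": 0.0, "end": 3.5, "text": "Welcome, everyone."}]')
    print(render(parse_transcript(raw)))
```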

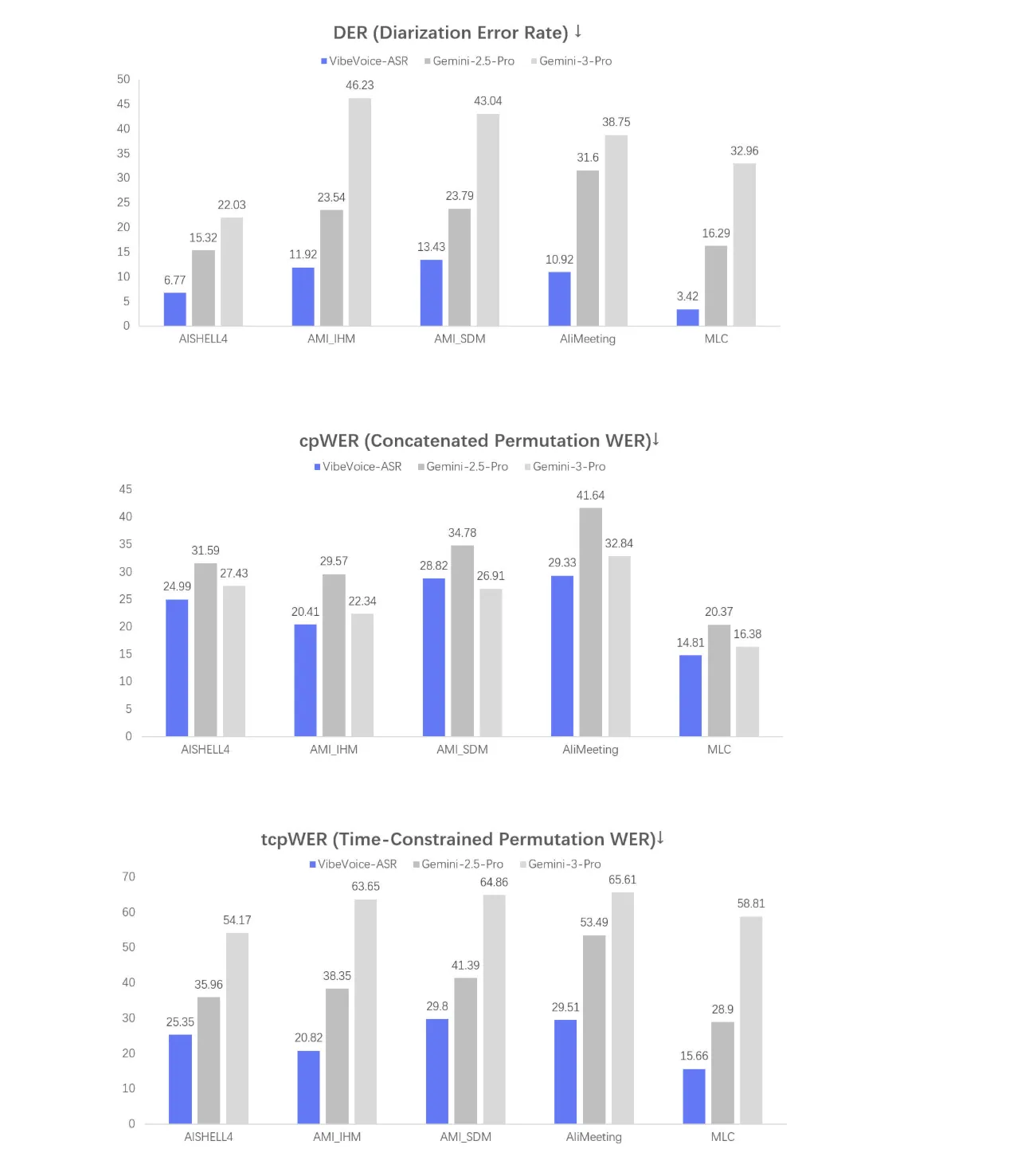

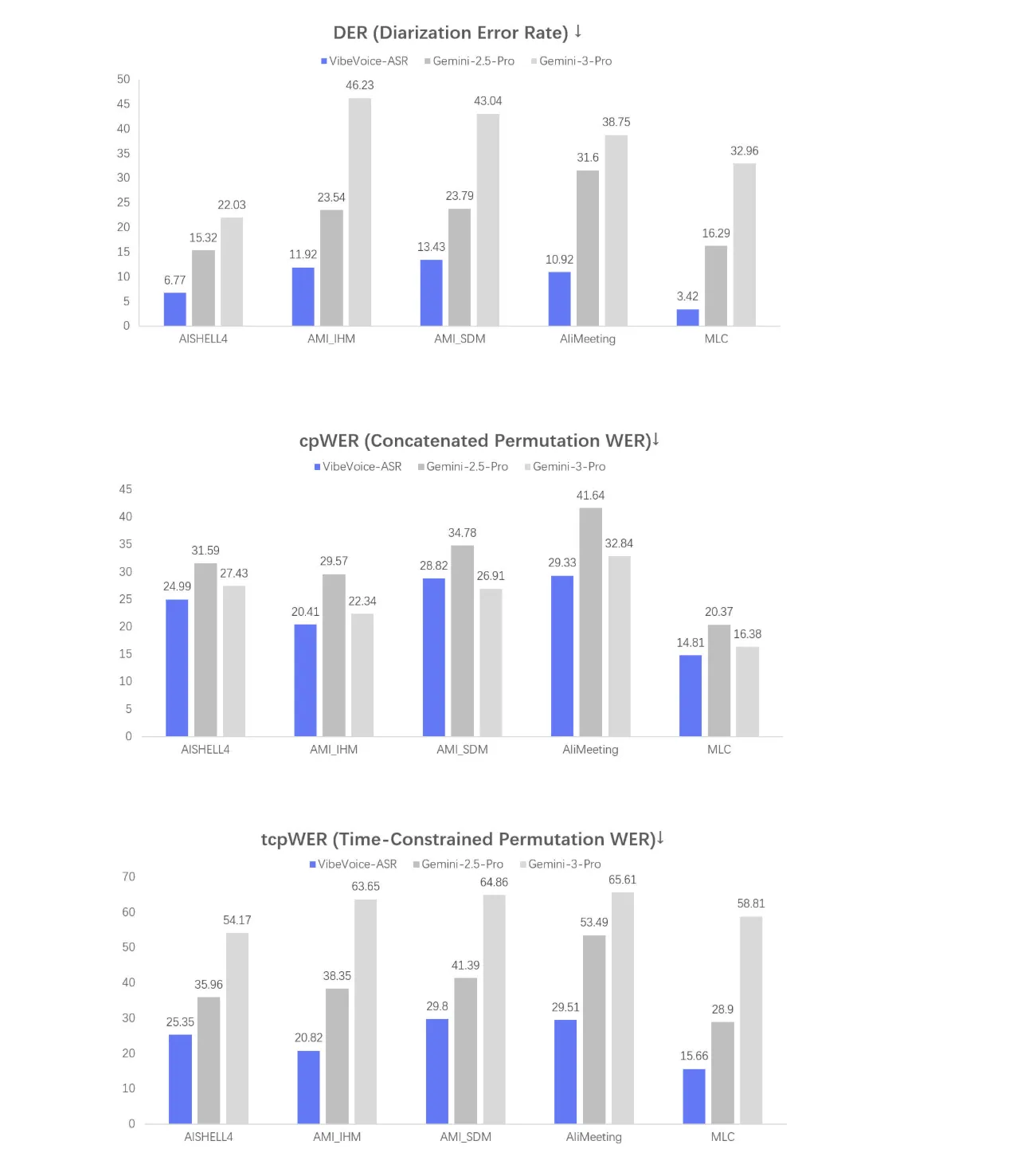

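The charts above report three evaluation metrics: DER, cpWER, and tcpWER.

- DER (Diarization Error Rate) measures how well the model attributes speech segments to the correct speaker.

- cpWER (concatenated minimum-permutation word error rate) and tcpWER (its time-constrained variant) are word error rates computed in multi-speaker conversational settings, so they penalize both recognition errors and speaker attribution errors.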

These graphs summarize how well the model performs on multi-speaker long-form data, which is the primary target setting for this ASR system.
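For readers who want the metric definitions in executable form, here is a minimal sketch of DER as an error-time ratio and of cpWER as the best-permutation word error rate over per-speaker concatenated transcripts. It is a simplified illustration, not official scoring tooling: real DER scoring also finds an optimal speaker mapping and may apply a forgiveness collar, and tcpWER additionally requires matched words to fall within a time collar, which this sketch omits.

```python
from itertools import permutations


def der(missed: float, false_alarm: float, confusion: float, total_ref: float) -> float:
    """DER = (missed speech + false alarm + speaker confusion) / reference speech time."""
    return (missed + false_alarm + confusion) / total_ref


def edit_distance(ref: list[str], hyp: list[str]) -> int:
    """Word-level Levenshtein distance (substitutions + deletions + insertions)."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, 1):
        cur = [i]
        for j, h in enumerate(hyp, 1):
            cur.append(min(prev[j] + 1,               # deletion
                           cur[j - 1] + 1,            # insertion
                           prev[j - 1] + (r != h)))   # substitution or match
        prev = cur
    return prev[-1]


def cp_wer(ref: dict[str, str], hyp: dict[str, str]) -> float:
    """cpWER: concatenate each speaker's words, then score the speaker mapping
    with the lowest total edit distance, normalized by reference word count."""
    refs = [t.split() for t in ref.values()]
    hyps = [t.split() for t in hyp.values()]
    n = max(len(refs), len(hyps))
    refs += [[]] * (n - len(refs))   # pad with empty "speakers" so counts match
    hyps += [[]] * (n - len(hyps))
    best = min(sum(edit_distance(r, h) for r, h in zip(refs, perm))
               for perm in permutations(hyps))
    return best / sum(len(r) for r in refs)


if __name__ == "__main__":
    ref = {"alice": "hello there how are you", "bob": "fine thanks"}
    hyp = {"spk0": "fine thanks", "spk1": "hello their how are you"}
    print(f"cpWER = {cp_wer(ref, hyp):.3f}")
    print(f"DER   = {der(30.0, 20.0, 50.0, 1000.0):.3f}")
```

Trying every permutation is O(n!) in the number of speakers, which is fine for a handful of speakers; dedicated tools solve the same assignment problem with the Hungarian algorithm.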

The structured output format is well suited to downstream processing such as speaker-specific summaries, action-item extraction, or analytics dashboards. Because segments, speakers, and timestamps all come from a single model, downstream code can treat the transcript as a time-aligned event log.
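As an illustration of treating the transcript as an event log, the sketch below computes per-speaker talk time, turn counts, and word counts, the kind of numbers an analytics dashboard might display. It assumes the transcript has already been parsed into segments with speaker, start, end, and text fields; that schema and the `speaker_stats` helper are hypothetical, not VibeVoice-ASR's documented output format.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Segment:
    speaker: str
    start: float  # seconds
    end: float
    text: str


def speaker_stats(segments: list[Segment]) -> dict[str, dict]:
    """Aggregate talk time (s), turn count, and word count per speaker."""
    stats = defaultdict(lambda: {"talk_time": 0.0, "turns": 0, "words": 0})
    prev_speaker = None
    for seg in sorted(segments, key=lambda s: s.start):
        entry = stats[seg.speaker]
        entry["talk_time"] += seg.end - seg.start
        entry["words"] += len(seg.text.split())
        if seg.speaker != prev_speaker:  # a new turn starts on each speaker change
            entry["turns"] += 1
        prev_speaker = seg.speaker
    return dict(stats)


if __name__ == "__main__":
    segs = [
        Segment("A", 0.0, 5.0, "Let us review the roadmap"),
        Segment("A", 5.0, 7.5, "starting with ASR"),
        Segment("B", 7.5, 10.0, "Sounds good"),
        Segment("A", 10.0, 12.0, "Great"),
    ]
    for speaker, s in speaker_stats(segs).items():
        print(speaker, s)
```

Consecutive segments from the same speaker are counted as one turn, which is a design choice; a dashboard that cares about pauses might instead start a new turn after a silence gap.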

Key Takeaways

- VibeVoice-ASR is an integrated speech-to-text model that handles 60 minutes of long-form audio in a single pass within a 64K token context.

- The model jointly performs ASR, diarization, and timestamping to produce structured transcripts that encode Who, When, and What in a single decoding pass.

- Customizable hotwords allow users to enter domain-specific terms such as product names or technical jargon to improve recognition accuracy without retraining the model.

- Evaluation with DER, cpWER, and tcpWER focuses on multi-speaker conversational scenarios, which aligns the model with meetings, lectures, and long phone calls.

- VibeVoice-ASR is released as part of the open-source VibeVoice stack under the MIT license, with model weights, fine-tuning documentation, and an online playground for testing.
