EraRAG: A Scalable, Multi-Layered Graph-Based Retrieval Framework

Large language models (LLMs) have transformed many natural language processing tasks, but they still face serious limitations when dealing with current facts, domain-specific details, or complex reasoning. Retrieval-Augmented Generation (RAG) approaches aim to close these gaps by allowing language models to find and integrate information from external sources. However, graph-based RAG systems built for static corpora struggle with efficiency, accuracy, and scalability when the data keeps growing, as it does with news feeds, research content, or online content.

Introducing EraRAG: Efficient Updates for Evolving Data

Recognizing these challenges, researchers from Huawei, The Hong Kong University of Science and Technology, and WeBank have developed EraRAG, a novel retrieval framework built for corpora that keep growing.

Key features:

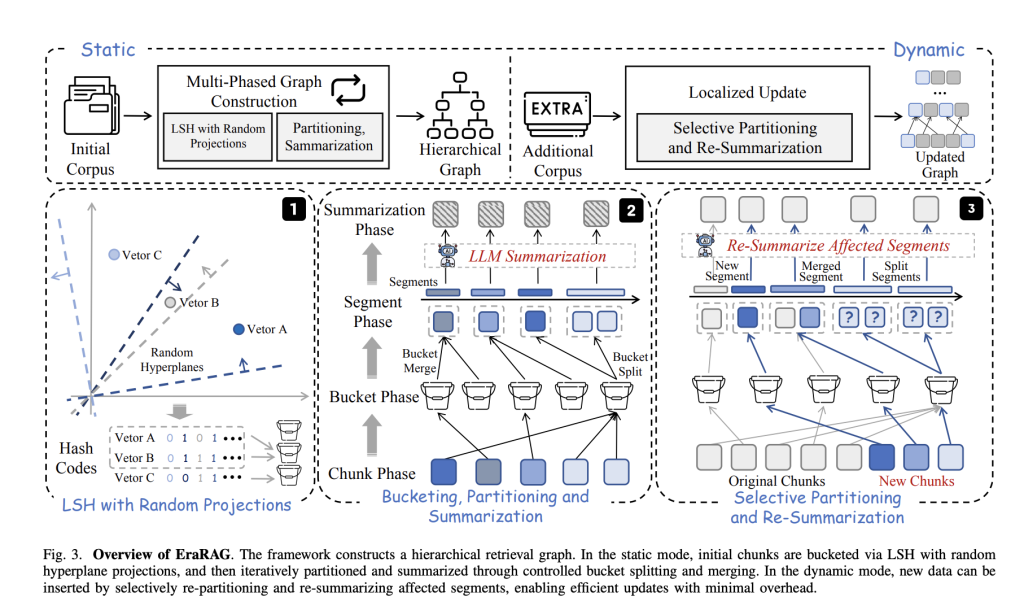

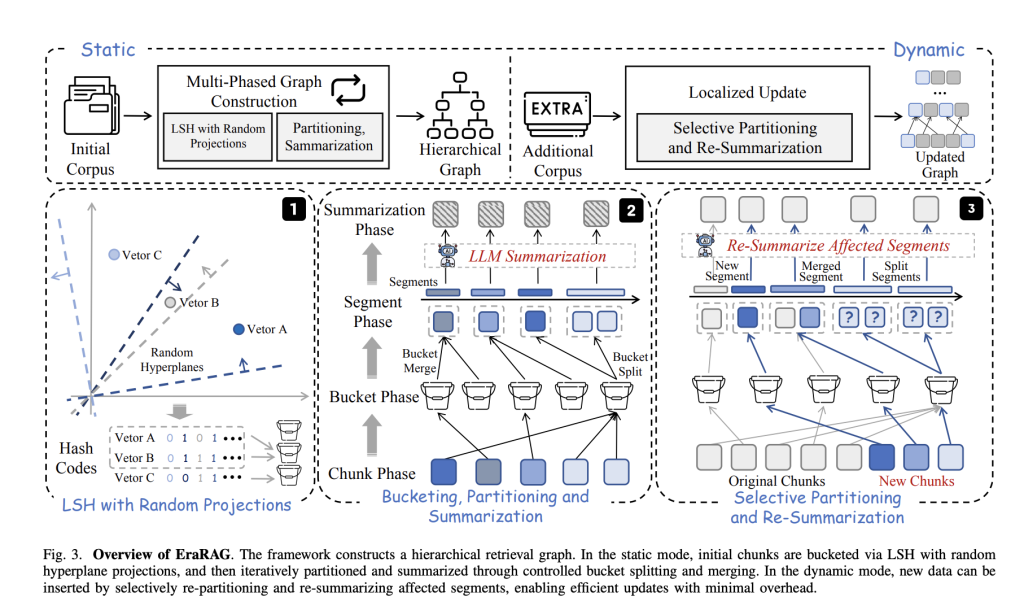

- Hyperplane-based locality-sensitive hashing (LSH):

The whole corpus is split into small chunks of text, each of which is embedded as a vector. EraRAG then uses randomly sampled hyperplanes to project these vectors into binary hash codes, a process that groups semantically similar chunks into the same "bucket." This LSH-based approach preserves semantic coherence while keeping the grouping efficient.

- Hierarchical, multi-layered graph construction:

The core retrieval structure in EraRAG is a multi-layered graph. At each layer, segments (or buckets) of similar text are summarized using a language model. Segments that are too large are split, while those that are too small are merged, which keeps the segments semantically consistent and their granularity balanced. The summarized representations at higher layers enable efficient retrieval for both fine-grained and abstract queries.

- Incremental, localized updates:

When new data arrives, its embedding is hashed with the original hyperplanes, which keeps it consistent with the initial graph construction. Only the buckets or segments directly affected by the new entries are updated, merged, split, or re-summarized, while the rest of the graph remains untouched. The update propagates up the graph hierarchy but stays confined to the affected region, saving significant computation and token cost.

- Reproducibility and determinism:

Unlike standard LSH clustering, EraRAG keeps the set of hyperplanes used during the initial hashing. This makes bucket assignment deterministic and reproducible, which is essential for consistent, efficient updates over time.
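The bucketing and update steps described above can be sketched roughly as follows. This is a minimal illustration in plain Python under stated assumptions, not EraRAG's actual implementation: the names `make_hyperplanes`, `hash_code`, `BucketIndex`, and `rebalance` are hypothetical, the size thresholds are arbitrary, and merging neighbors in sorted hash-code order is an assumed strategy chosen for simplicity.

```python
import random
from collections import defaultdict


def make_hyperplanes(num_planes, dim, seed=0):
    """Sample random hyperplane normals once; the same set is reused for every update."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(num_planes)]


def hash_code(vector, hyperplanes):
    """Return one bit per hyperplane: '1' if the vector lies on its non-negative side."""
    return "".join(
        "1" if sum(v * n for v, n in zip(vector, normal)) >= 0 else "0"
        for normal in hyperplanes
    )


class BucketIndex:
    """Maps binary hash codes to the chunk ids that fall into each bucket."""

    def __init__(self, hyperplanes):
        self.hyperplanes = hyperplanes  # stored so later inserts hash identically
        self.buckets = defaultdict(list)

    def add(self, chunk_id, vector):
        """Insert one chunk and return the code of the only bucket it touched."""
        code = hash_code(vector, self.hyperplanes)
        self.buckets[code].append(chunk_id)
        return code


def rebalance(buckets, min_size, max_size):
    """Merge undersized buckets with neighbors in code order, then split oversized groups.

    Assumed strategy for illustration; a split may still leave a final piece
    smaller than min_size, which this sketch does not correct.
    """
    merged, pending = [], []
    for code in sorted(buckets):
        pending.extend(buckets[code])
        if len(pending) >= min_size:
            merged.append(pending)
            pending = []
    if pending:
        if merged:
            merged[-1].extend(pending)  # fold leftovers into the last group
        else:
            merged.append(pending)
    segments = []
    for group in merged:
        for start in range(0, len(group), max_size):
            segments.append(group[start:start + max_size])
    return segments
```

Because the hyperplanes are generated from a fixed seed and stored on the index, hashing a new chunk later yields the same code it would have received at build time. The code returned by `add` identifies the single bucket that would need re-summarizing, which is the intuition behind keeping updates local.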

Performance and Impact

Comprehensive experiments on a variety of question-answering benchmarks show that EraRAG:

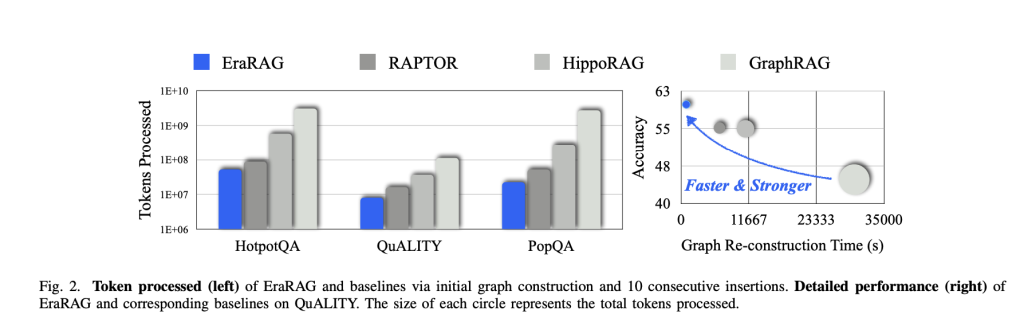

- Reduces update costs: It achieves up to a 95% reduction in graph reconstruction time and token usage compared with leading graph-based RAG methods (e.g., GraphRAG, RAPTOR, HippoRAG).

- Maintains high accuracy: EraRAG stays ahead of other retrieval architectures in both accuracy and recall, with little loss in retrieval quality or multi-hop reasoning ability.

- Supports versatile query needs: The multi-layered graph design allows EraRAG to efficiently retrieve either fine-grained factual details or high-level semantic summaries, adapting its retrieval pattern to each type of query.

Practical Implications

EraRAG provides a scalable and robust retrieval framework for real-world settings where data is added continuously, such as live news feeds or archives. It strikes a balance between retrieval efficiency and adaptability to change, making LLM-backed applications more factual, responsive, and reliable in fast-changing environments.

Nikhil is an intern consultant at Marktechpost. He is pursuing an integrated dual degree at the Indian Institute of Technology, Kharagpur. Nikhil is an AI/ML enthusiast who explores applications in fields such as biomaterials and biomedical science. With a strong background in Material Science, he is exploring new advancements and looking for opportunities to contribute.