A Technical Deep Dive into the Essential Stages of Modern Large Language Model Training, Alignment, and Deployment

Training a modern large language model (LLM) is not a single step but a carefully orchestrated pipeline that transforms raw data into a reliable, aligned, and deployable intelligent system. At its core lies pre-training, the foundational phase where models learn general language patterns, reasoning structures, and world knowledge from massive text corpora. This is followed by supervised fine-tuning (SFT), where curated datasets shape the model's behavior toward specific tasks and instructions. To make adaptation more efficient, techniques such as LoRA (Low-Rank Adaptation) and QLoRA (Quantized LoRA) enable parameter-efficient fine-tuning without retraining the entire model.

Alignment layers such as RLHF (Reinforcement Learning from Human Feedback) further refine outputs to match human preferences, safety expectations, and usability standards. More recently, reasoning-focused optimizations such as GRPO (Group Relative Policy Optimization) have emerged to improve structured thinking and multi-step problem solving. Finally, all of this culminates in deployment, where models are optimized, scaled, and integrated into real-world systems. Together, these stages form the modern LLM training pipeline: an evolving, multi-layered process that determines not just what a model knows, but how it thinks, behaves, and delivers value in production environments.

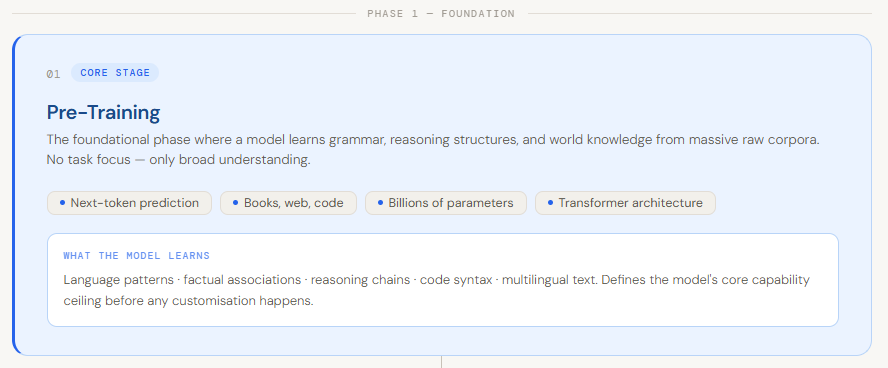

Pre-Training

Pre-training is the first and most foundational stage in building a large language model. It is where the model learns the basics of language (grammar, context, reasoning patterns, and general world knowledge) by training on massive amounts of raw data such as books, websites, and code. Instead of focusing on a specific task, the goal here is broad understanding. The model learns patterns by predicting the next word in a sentence or filling in missing words, which helps it generate meaningful and coherent text later on. This stage essentially turns a randomly initialized neural network into something that “understands” language at a general level.

What makes pre-training especially important is that it defines the model's core capabilities before any customization happens. While later stages such as fine-tuning adapt the model to specific use cases, they build on what was already learned during pre-training. The exact definition of “pre-training” can vary, and sometimes includes newer techniques such as instruction-based learning or synthetic data, but the core idea remains the same: it is the phase where the model develops its fundamental intelligence. Without strong pre-training, everything that follows becomes much less effective.
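To make the next-word objective concrete, here is a minimal sketch of the loss it usually corresponds to: the average cross-entropy between the model's predicted distribution and the token that actually comes next. The toy vocabulary, the logits, and the function names are illustrative assumptions, not taken from any particular training codebase.

```python
import math

def softmax(logits):
    """Convert raw scores into a probability distribution."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def next_token_loss(logits_per_position, token_ids):
    """Average cross-entropy of predicting token t+1 from position t.

    logits_per_position[t] holds the model's scores over the vocabulary
    after it has read token_ids[: t + 1].
    """
    losses = []
    for t in range(len(token_ids) - 1):
        probs = softmax(logits_per_position[t])
        target = token_ids[t + 1]  # the label is simply the next token
        losses.append(-math.log(probs[target]))
    return sum(losses) / len(losses)

# Toy vocabulary of 3 tokens and a 3-token sequence (hypothetical values).
token_ids = [0, 2, 1]
logits = [
    [0.1, 0.2, 3.0],  # after token 0, the model favors token 2 (correct)
    [0.0, 2.5, 0.3],  # after token 2, the model favors token 1 (correct)
    [1.0, 1.0, 1.0],  # last position has no next token, so it is unused
]
loss = next_token_loss(logits, token_ids)
# A model that guesses uniformly scores ln(vocab_size) on this objective.
uniform_loss = next_token_loss([[0.0] * 3] * 3, token_ids)
print(f"loss={loss:.3f}, uniform baseline={uniform_loss:.3f}")
```

A model that has learned nothing sits at the uniform baseline, and pre-training can be read as the long process of pushing this number down across a very large corpus.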

Supervised Finetuning

Supervised Fine-Tuning (SFT) is the stage where a pre-trained LLM is adapted to perform specific tasks using high-quality, labeled data. Instead of learning from raw, unstructured text as in pre-training, the model is trained on carefully curated input-output pairs that have been validated beforehand. This allows the model to adjust its weights based on the difference between its predictions and the correct answers, helping it align with specific goals, business rules, or communication styles. In simple terms, while pre-training teaches the model how language works, SFT teaches it how to behave in real-world use cases.

This process makes the model more accurate, reliable, and context-aware for a given task. It can incorporate domain-specific knowledge, follow structured instructions, and generate responses that match a desired tone or format. For example, a general pre-trained model might answer a user query like:

“I can't log into my account. What should I do?” with a short answer like:

“Try resetting your password.”

After supervised fine-tuning on customer support data, the same model could respond with:

“I'm sorry you're facing this issue. You can try resetting your password using the ‘Forgot Password’ option. If the problem persists, please contact our support team at [email protected]. We're here to help.”

Here, the model has learned empathy, structure, and helpful guidance from labeled examples. That is the power of SFT: it transforms a generic language model into a task-specific assistant that behaves the way you want.
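One way to picture how a curated input-output pair becomes a training signal is sketched below. It assumes a common convention, not something this article prescribes: prompt positions are masked with a sentinel label (here `-100`, the default `ignore_index` of PyTorch's cross-entropy loss) so that only the response tokens contribute to the loss. The token ids and per-token losses are made up, and the one-position label shift that real causal-LM training applies is left out for brevity.

```python
IGNORE_INDEX = -100  # assumed sentinel meaning "do not score this position"

def build_sft_example(prompt_ids, response_ids):
    """Concatenate prompt and response, masking prompt positions in the labels."""
    input_ids = list(prompt_ids) + list(response_ids)
    labels = [IGNORE_INDEX] * len(prompt_ids) + list(response_ids)
    return input_ids, labels

def masked_mean_loss(per_token_losses, labels):
    """Average the per-token losses over the unmasked (response) positions only."""
    kept = [loss for loss, y in zip(per_token_losses, labels) if y != IGNORE_INDEX]
    return sum(kept) / len(kept)

prompt_ids = [11, 12, 13]  # hypothetical ids for the user's question
response_ids = [21, 22]    # hypothetical ids for the curated answer
input_ids, labels = build_sft_example(prompt_ids, response_ids)

# Made-up per-token losses: large on the prompt, small on the response.
per_token_losses = [9.0, 9.0, 9.0, 0.5, 1.5]
print(labels)
print(masked_mean_loss(per_token_losses, labels))
```

Because the prompt positions are ignored, the model is rewarded for reproducing the validated answer rather than for predicting the user's question.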

LoRA

LoRA (Low-Rank Adaptation) is a parameter-efficient fine-tuning technique designed to adapt large language models without retraining the entire network. Instead of updating all of the model's weights, which is extremely expensive for models with billions of parameters, LoRA freezes the original pre-trained weights and introduces small, trainable “low-rank” matrices into specific layers of the model (typically within the transformer architecture). These matrices learn how to adjust the model's behavior for a specific task, dramatically reducing the number of trainable parameters, GPU memory usage, and training time while still maintaining strong performance.

This makes LoRA especially useful in real-world scenarios where deploying multiple fully fine-tuned models would be impractical. For example, imagine you want to fine-tune a large LLM for legal document summarization. With traditional fine-tuning, you would need to retrain billions of parameters. With LoRA, you keep the base model unchanged and train only a small set of additional matrices that “nudge” the model toward legal-specific understanding. So, when given a prompt like:

“Summarize this contract clause…”

A base model might produce a generic summary, but a LoRA-adapted model could produce a more precise, domain-aware response that uses legal terminology and structure. In short, LoRA lets you specialize powerful models without the heavy cost of full retraining.
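The low-rank idea can be sketched in a few lines. The version below follows the usual LoRA formulation, in which a frozen weight matrix `W` is combined with a trainable update `B @ A` scaled by `alpha / r`; the dimensions, the seed, and the helper names are illustrative assumptions, and plain Python lists stand in for tensors.

```python
import random

def matvec(M, v):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]

def lora_forward(x, W, A, B, alpha):
    """Frozen path W @ x plus the scaled low-rank path (alpha / r) * B @ (A @ x)."""
    r = len(A)                        # rank of the adapter
    base = matvec(W, x)               # pre-trained weights, never updated
    update = matvec(B, matvec(A, x))  # only A and B would receive gradients
    return [b + (alpha / r) * u for b, u in zip(base, update)]

def lora_param_counts(d_in, d_out, r):
    """Return (parameters in the full matrix, parameters in the adapter)."""
    return d_in * d_out, r * (d_in + d_out)

random.seed(0)
d_in, d_out, r, alpha = 8, 6, 2, 4
W = [[random.gauss(0, 1) for _ in range(d_in)] for _ in range(d_out)]
A = [[random.gauss(0, 0.01) for _ in range(d_in)] for _ in range(r)]
B = [[0.0] * r for _ in range(d_out)]  # zero init: the adapter starts as a no-op
x = [1.0] * d_in

# Before any training, the adapted layer reproduces the base layer exactly.
assert lora_forward(x, W, A, B, alpha) == matvec(W, x)

full, adapter = lora_param_counts(4096, 4096, 8)
print(f"full={full:,} adapter={adapter:,} ratio={adapter / full:.2%}")
```

For a single 4096 by 4096 projection with rank 8, the adapter holds 65,536 parameters against 16,777,216 in the full matrix, or about 0.39%, which is where the savings in trainable parameters come from.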

QLoRA

QLoRA (Quantized Low-Rank Adaptation) is an extension of LoRA that makes fine-tuning even more memory-efficient by combining low-rank adaptation with model quantization. Instead of keeping the pre-trained model in standard 16-bit or 32-bit precision, QLoRA compresses the model weights down to 4-bit precision. The base model remains frozen in this compressed form and, just as in LoRA, small trainable low-rank adapters are added on top. During training, gradients flow through the quantized model into these adapters, allowing the model to learn task-specific behavior while using a fraction of the memory required by traditional fine-tuning.

This approach makes it possible to fine-tune extremely large models, even those with tens of billions of parameters, on a single GPU, which was previously impractical. For example, suppose you want to adapt a 65B-parameter model for a chatbot use case. With standard fine-tuning, this would require massive infrastructure. With QLoRA, the model is first compressed to 4-bit, and only the small adapter layers are trained. So, when given a prompt like:

“Explain quantum computing in simple terms”

A base model might give a generic explanation, but a QLoRA-tuned version can provide a more structured, simplified, and instruction-following response tailored to your dataset, while running efficiently on limited hardware. In short, QLoRA makes large-model fine-tuning accessible by sharply reducing memory usage with little loss in performance.
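The sketch below illustrates the two ideas in this section: squeezing weights into 4 bits, and the resulting memory arithmetic for the 65B example. It deliberately uses simple symmetric absmax integer quantization with one shared scale, which is a stand-in chosen for clarity; QLoRA itself uses a 4-bit NormalFloat (NF4) data type with block-wise scales. The function names and sample weights are hypothetical, and the memory figures cover weights only, not activations, adapters, or optimizer state.

```python
import random

def quantize_absmax_4bit(weights):
    """Map floats to signed integer codes in [-7, 7] using one shared scale."""
    scale = max(abs(w) for w in weights) / 7 or 1.0  # fall back if all zeros
    codes = [max(-7, min(7, round(w / scale))) for w in weights]
    return codes, scale

def dequantize(codes, scale):
    """Recover approximate float weights from the integer codes."""
    return [c * scale for c in codes]

def weight_memory_gb(num_params, bits_per_param):
    """Memory needed to store the weights alone, in gigabytes (1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9

random.seed(0)
weights = [random.gauss(0, 0.02) for _ in range(16)]  # made-up weight values
codes, scale = quantize_absmax_4bit(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(f"scale={scale:.5f}, max reconstruction error={max_error:.5f}")

# Weights-only footprint of the 65B-parameter example from the text.
print(weight_memory_gb(65e9, 16), "GB at 16-bit")
print(weight_memory_gb(65e9, 4), "GB at 4-bit")
```

Under these assumptions the weights shrink from 130 GB to 32.5 GB, and since only the small adapters are trained, the gradient and optimizer memory stays small as well.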

RLHF

Reinforcement Learning from Human Feedback (RLHF) is a training stage used to align large language models with human expectations of helpfulness, safety, and quality. After pre-training and supervised fine-tuning, a model may still produce outputs that are technically correct but unhelpful, unsafe, or misaligned with user intent. RLHF addresses this by bringing human judgment into the training loop: humans review and rank multiple model responses, and this feedback is used to train a reward model. The LLM is then further optimized (commonly with algorithms such as PPO) to generate responses that maximize this learned reward, effectively teaching it what humans prefer.

This approach is especially useful for tasks where the rules are hard to define mathematically, such as politeness, humor, or non-toxicity, but easy for humans to evaluate. For example, given a prompt like:

“Tell me a joke about work”

A base model might generate something awkward or even inappropriate. After RLHF, the model learns to produce responses that are more engaging, safe, and aligned with human taste. Similarly, for a sensitive query, instead of giving a blunt or risky answer, an RLHF-trained model would respond more responsibly and helpfully. In short, RLHF bridges the gap between raw intelligence and real-world usability by shaping models to behave in ways humans actually value.
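The article does not say which loss is used to turn human rankings into a reward model. A common choice, assumed here, is a pairwise (Bradley-Terry style) loss: for each pair where annotators preferred one response over another, the reward model is penalized unless it scores the preferred response higher. The reward values below are invented for illustration.

```python
import math

def pairwise_preference_loss(reward_chosen, reward_rejected):
    """Return -log(sigmoid(reward_chosen - reward_rejected)), computed stably."""
    margin = reward_chosen - reward_rejected
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))

# Hypothetical reward-model scores for two jokes answering the same prompt.
agrees_with_humans = pairwise_preference_loss(reward_chosen=2.0, reward_rejected=0.0)
disagrees_with_humans = pairwise_preference_loss(reward_chosen=0.0, reward_rejected=2.0)
undecided = pairwise_preference_loss(reward_chosen=1.0, reward_rejected=1.0)

print(f"agrees={agrees_with_humans:.3f}")
print(f"undecided={undecided:.3f}")
print(f"disagrees={disagrees_with_humans:.3f}")
```

The loss is smallest when the reward model ranks the pair the way the annotators did, which is how their judgments end up encoded in the reward that the policy is later optimized against.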

Reasoning (GRPO)

Group Relative Policy Optimization (GRPO) is a newer reinforcement learning technique designed specifically to improve reasoning and multi-step problem solving in large language models. Unlike traditional methods such as PPO, which evaluate each response individually (typically against a baseline from a separately learned value model), GRPO generates multiple candidate responses for the same prompt and compares them within the group. Each response is assigned a reward, and instead of optimizing on absolute scores, the model learns from which responses are relatively better than the others. This makes training more efficient and better suited to tasks where quality is subjective, such as reasoning, explanations, or step-by-step problem solving.

In practice, GRPO starts with a prompt (often enhanced with instructions such as “think step by step”), and the model generates several possible responses. These responses are then scored, and the model updates itself based on which ones performed best relative to the group. For example, given a prompt like:

“Solve: If a train travels 60 km in 1 hour, how long will it take to travel 180 km?”

A base model might jump straight to an answer, sometimes incorrectly. A GRPO-trained model is more likely to produce structured reasoning like:

“Speed = 60 km/h. Time = Distance / Speed = 180 / 60 = 3 hours.”

By repeatedly learning from the better reasoning paths within each group, GRPO helps models become more consistent, logical, and reliable on complex tasks, especially where step-by-step thinking matters.
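The group comparison at the heart of GRPO can be sketched as follows: each sampled response gets a reward, and its advantage is that reward minus the group mean, divided by the group standard deviation. The sampled answers and the rule-based `correctness_reward` function are hypothetical, and the sketch stops at the advantages; the clipped policy update and KL penalty that complete the method are omitted.

```python
import statistics

def correctness_reward(answer):
    """Hypothetical rule-based reward: 1.0 if the final answer is right, else 0.0."""
    return 1.0 if "3 hours" in answer else 0.0

def group_relative_advantages(rewards, eps=1e-6):
    """Normalize each reward against the mean and spread of its own group."""
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    return [(r - mean) / (std + eps) for r in rewards]

# Four sampled responses to the train problem above (made up for illustration).
group = [
    "Speed = 60 km/h. Time = 180 / 60 = 3 hours.",
    "It takes 2 hours.",
    "180 * 60 = 10800 hours.",
    "Time = Distance / Speed = 3 hours.",
]
rewards = [correctness_reward(a) for a in group]
advantages = group_relative_advantages(rewards)

for answer, adv in zip(group, advantages):
    print(f"{adv:+.2f}  {answer}")
```

Correct answers end up with advantages close to +1 and incorrect ones close to -1, so the update favors whatever reasoning led to the better responses in that group, without needing a separate value model to supply the baseline.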

Deployment

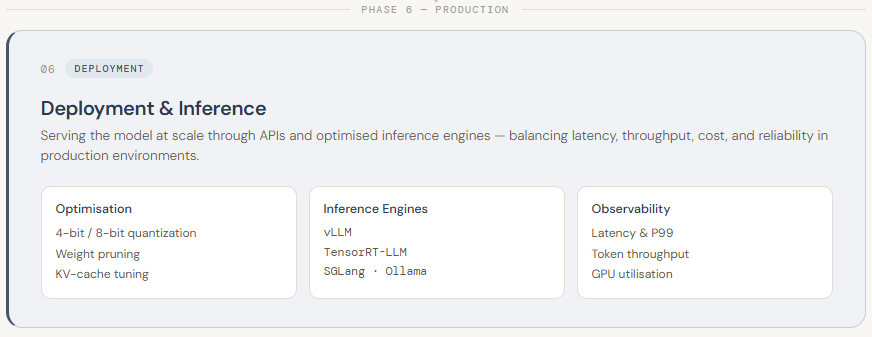

LLM deployment is the final stage of the pipeline, where the trained model is integrated into a real-world environment and made accessible for practical use. This typically involves exposing the model through APIs so that applications can interact with it in real time. Unlike earlier stages, deployment is less about training and more about performance, scalability, and reliability. Because LLMs are large and resource-intensive, deploying them requires careful infrastructure planning, such as using high-performance GPUs, managing memory efficiently, and ensuring low-latency responses for users.

To make deployment efficient, several optimization and serving techniques are used. Models are often quantized (e.g., reduced from 16-bit to 4-bit precision) to lower memory usage and speed up inference. Specialized engines such as vLLM, TensorRT-LLM, and SGLang help maximize throughput and reduce latency. Deployment can be done through cloud-based APIs (such as managed services on AWS/GCP) or through self-hosted setups using tools like Ollama or BentoML for more control over privacy and cost. On top of this, systems are built to monitor performance (latency, GPU usage, token throughput) and to auto-scale resources based on demand. In essence, deployment is about turning a trained LLM into a fast, reliable, production-ready system that can serve users at scale.
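As a small illustration of the monitoring side, the sketch below summarizes a batch of request logs into median latency, tail latency, and token throughput. The log format, the sample numbers, and the function names are assumptions; it also assumes the requests were served one at a time, so the throughput figure would understate a server that batches requests concurrently.

```python
import math

def percentile(values, pct):
    """Nearest-rank percentile: the smallest value with at least pct% at or below it."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]

def summarize_requests(requests):
    """Summarize (latency_seconds, generated_tokens) pairs into serving metrics."""
    latencies = [latency for latency, _ in requests]
    total_tokens = sum(tokens for _, tokens in requests)
    return {
        "p50_latency_s": percentile(latencies, 50),
        "p95_latency_s": percentile(latencies, 95),
        # Assumes sequential serving: total tokens over total busy time.
        "tokens_per_second": total_tokens / sum(latencies),
    }

# Hypothetical request log: (latency in seconds, tokens generated).
requests = [(0.5, 100), (1.0, 200), (1.5, 300), (2.0, 400)]
metrics = summarize_requests(requests)
print(metrics)
```

Tail latency (p95) is usually the more useful alerting signal than the average, since a few slow generations can dominate what users experience.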

I am a Civil Engineering Graduate (2022) from Jamia Millia Islamia, New Delhi, and I have a keen interest in Data Science, especially Neural Networks and their application in various areas.