What is asyncio? Starting with asynchronous Python and uses asyncio in AI app with a LLM

In many AI tests today, working is a big deal. You may have seen that while working in large languages (llms), most of the time is spent waiting – waiting for API response, waiting for many calls to finish, or wait for the / O operations.

This is where Asyncio gets in. Amazingly, many developers use llms without realizing that they can speed up their apps by the asynchronous system.

This guide will travel:

- What is asyncio?

- Starting with Asynchronous Python

- Using asyncio in AI app with a llm

What is asyncio?

Asython's Asyncio Library to the writing of the same code using async / expecting syntax, which allows many I / OS functions to be properly within one cord. At its spine, asyncio works on expected – usually coroutines – that the event of the event Loop's Loop schedules have released without blocking.

With simple terms, code code is valid as one of the same food line, while the asynchronous code is conducting functions and multiple recipients. This applies to the API telephones (eg open, anthropic, anthropic, tie face), where most of the time is spent waiting for answers, enables murder.

Starting with Asynchronous Python

Example: Running activities and without asyncio

In this pattern, we were running a sort of work three times in a way that they agreed. Release indicates that each call_hello () good morning … “, waiting for 2 seconds, print” … … Earth! “. As the calls occur in order, the waiting time adds to the top – 2 seconds × 3 Calls = 6 seconds. Look Full codes here.

import time

def say_hello():

print("Hello...")

time.sleep(2) # simulate waiting (like an API call)

print("...World!")

def main():

say_hello()

say_hello()

say_hello()

if __name__ == "__main__":

start = time.time()

main()

print(f"Finished in {time.time() - start:.2f} seconds")The below code indicates that all three calls to Say_hello () the work started almost at the same time. SOME PRINTS “Hello …” Soon, then wait for 2 seconds at the same time before printing “… earth!”.

Because these tasks ran like the same time in succession, the full time is nearly long-waiting (~ 2 seconds) instead of the sum of all waiting (6 seconds in the synchronous version). This shows the operation of an Asyncio's operation of I / O-Bound. Look Full codes here.

import nest_asyncio, asyncio

nest_asyncio.apply()

import time

async def say_hello():

print("Hello...")

await asyncio.sleep(2) # simulate waiting (like an API call)

print("...World!")

async def main():

# Run tasks concurrently

await asyncio.gather(

say_hello(),

say_hello(),

say_hello()

)

if __name__ == "__main__":

start = time.time()

asyncio.run(main())

print(f"Finished in {time.time() - start:.2f} seconds")Example: Download Curse

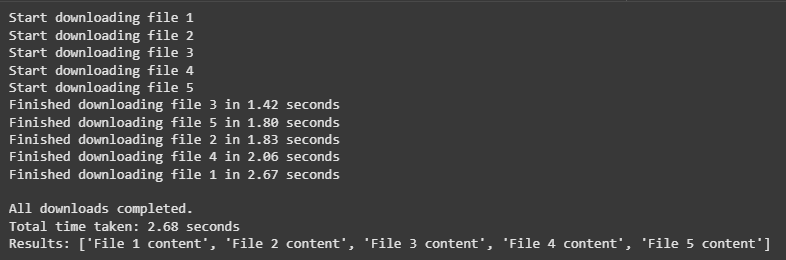

Imagine you need to download a few files. Each download takes time, but during that waiting, your system can work on another download instead of riding the food.

import asyncio

import random

import time

async def download_file(file_id: int):

print(f"Start downloading file {file_id}")

download_time = random.uniform(1, 3) # simulate variable download time

await asyncio.sleep(download_time) # non-blocking wait

print(f"Finished downloading file {file_id} in {download_time:.2f} seconds")

return f"File {file_id} content"

async def main():

files = [1, 2, 3, 4, 5]

start_time = time.time()

# Run downloads concurrently

results = await asyncio.gather(*(download_file(f) for f in files))

end_time = time.time()

print("nAll downloads completed.")

print(f"Total time taken: {end_time - start_time:.2f} seconds")

print("Results:", results)

if __name__ == "__main__":

asyncio.run(main())

- Every download starts almost at the same time, as shown by the “Start Download File X” appearing lines just as soon as possible.

- Each file lasted a different time downloading time (downloading “with asyncio.sleep ()) so they have completed different times – File 3 finally completed in 1.42 seconds.

- As all downloads have been working together once, the full time has been taken on the longest of time to download (2.68 seconds), not a total amount of time.

This shows asyncio's power – where jobs include waiting, it can be done alike, enhance efficiency.

Using asyncio in AI app with a llm

Now as we understand how Asyncio works, let's use it in the real example of AI. Large language models (llms) as Opelai's GPT types of models often include many API calls that take time to finish. If we run these calls in a row, we spend an important time waiting for answers.

In this stage, we will compare running with many explosions and without asyncio uses the Opelai client. We will use 15 short motivation to clearly show performance differences. Look Full codes here.

import asyncio

from openai import AsyncOpenAI

import os

from getpass import getpass

os.environ['OPENAI_API_KEY'] = getpass('Enter OpenAI API Key: ')

import time

from openai import OpenAI

# Create sync client

client = OpenAI()

def ask_llm(prompt: str):

response = client.chat.completions.create(

model="gpt-4o-mini",

messages=[{"role": "user", "content": prompt}]

)

return response.choices[0].message.content

def main():

prompts = [

"Briefly explain quantum computing.",

"Write a 3-line haiku about AI.",

"List 3 startup ideas in agri-tech.",

"Summarize Inception in 2 sentences.",

"Explain blockchain in 2 sentences.",

"Write a 3-line story about a robot.",

"List 5 ways AI helps healthcare.",

"Explain Higgs boson in simple terms.",

"Describe neural networks in 2 sentences.",

"List 5 blog post ideas on renewable energy.",

"Give a short metaphor for time.",

"List 3 emerging trends in ML.",

"Write a short limerick about programming.",

"Explain supervised vs unsupervised learning in one sentence.",

"List 3 ways to reduce urban traffic."

]

start = time.time()

results = []

for prompt in prompts:

results.append(ask_llm(prompt))

end = time.time()

for i, res in enumerate(results, 1):

print(f"n--- Response {i} ---")

print(res)

print(f"n[Synchronous] Finished in {end - start:.2f} seconds")

if __name__ == "__main__":

main()Synchronous Version A consideration of all 15 Prompts One Prempts One Present One, so the amount of time for the request for each application. As each request took time to finish, the time to run together were too long – 49.76 seconds in this case. Look Full codes here.

from openai import AsyncOpenAI

# Create async client

client = AsyncOpenAI()

async def ask_llm(prompt: str):

response = await client.chat.completions.create(

model="gpt-4o-mini",

messages=[{"role": "user", "content": prompt}]

)

return response.choices[0].message.content

async def main():

prompts = [

"Briefly explain quantum computing.",

"Write a 3-line haiku about AI.",

"List 3 startup ideas in agri-tech.",

"Summarize Inception in 2 sentences.",

"Explain blockchain in 2 sentences.",

"Write a 3-line story about a robot.",

"List 5 ways AI helps healthcare.",

"Explain Higgs boson in simple terms.",

"Describe neural networks in 2 sentences.",

"List 5 blog post ideas on renewable energy.",

"Give a short metaphor for time.",

"List 3 emerging trends in ML.",

"Write a short limerick about programming.",

"Explain supervised vs unsupervised learning in one sentence.",

"List 3 ways to reduce urban traffic."

]

start = time.time()

results = await asyncio.gather(*(ask_llm(p) for p in prompts))

end = time.time()

for i, res in enumerate(results, 1):

print(f"n--- Response {i} ---")

print(res)

print(f"n[Asynchronous] Finished in {end - start:.2f} seconds")

if __name__ == "__main__":

asyncio.run(main())

The type of asynchronous has processed everything updated by 15 at the same time, to start them at the same time instead. As a result, fully performance was near the time for one equal application – 8.25 seconds instead of adding all applications.

The main difference is because, in a harmonious murder, each API telephone blocks the program until they finish, so times they can come. In the killing of Asynchlorous with asyncoin, calls are running like, allowing the program to carry many jobs while you are waiting for answers, to reduce the time of complete performance.

Why this is important for AI applications

In the actual AI apps, waiting for each application to finish before the first start to become a bottleneck, especially when you face many questions or data sources. This is very common in activities such as:

- It makes content to many users at the same time – eg, Chatboots, compliment engines, or multiple users.

- Calling the llm a few times in one work travel – such as summarizing, refined, separation, or several times.

- Downloading data from many APIs – for example, to combine the llm with information from information from vector database or external APIs.

Using asyncio in these cases brings great benefits:

- Improved performance – By making parallel api lies instead of waiting in a chronological order, your system can handle a lot of work in a short time.

- Cost efficiency – Fast execution may reduce operational costs, as well as for managing applications where possible may improve the use of paid APIs.

- A better user experience – Concurrency enable the apps to hear more, which is very important in real-time programs such as AI and Chatbots.

- Cribal – Asynchronous patterns allow your application to manage many applications at the same time without resources.

Look Full codes here. Feel free to look our GITHUB page for tutorials, codes and letters of writing. Also, feel free to follow it Sane and don't forget to join ours 100K + ml subreddit Then sign up for Our newspaper.

I am the student of the community engineering (2022) from Jamia Millia Islamia, New Delhi, and I am very interested in data science, especially neural networks and their application at various locations.

🔥[Recommended Read] NVIDIA AI Open-Spaces Vipe (Video Video Engine): A Powerful and Powerful Tool to Enter the 3D Reference for 3D for Spatial Ai