Researchers from MIT, NVIDIA, and Zhejiang University Propose TriAttention: A KV Cache Compression Method That Matches Full Attention at 2.5× Higher Throughput

Long-chain reasoning is one of the most compute-intensive workloads for today's large language models. When a model such as DeepSeek-R1 or Qwen3 works through a complex math problem, it can generate tens of thousands of tokens before reaching an answer. Every one of those tokens must be stored in the KV cache: a memory structure holding the Key and Value vectors the model attends back to during generation. The longer the reasoning chain, the larger the KV cache grows, and in many deployment scenarios, especially on consumer hardware, that growth eventually exhausts GPU memory entirely.
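To make the memory growth concrete, here is a back-of-the-envelope sketch of KV cache size as a function of sequence length. The layer count, KV-head count, head dimension, and 16-bit precision are assumed values chosen to resemble an 8B-class model with grouped-query attention; they are not figures from the article.

```python
def kv_cache_bytes(num_tokens, num_layers=36, num_kv_heads=8,
                   head_dim=128, bytes_per_value=2):
    """Bytes needed to cache Keys and Values for `num_tokens` tokens.

    All defaults are illustrative assumptions, not figures from the article.
    """
    # Factor 2: one Key vector and one Value vector per layer and KV head.
    per_token = 2 * num_layers * num_kv_heads * head_dim * bytes_per_value
    return num_tokens * per_token


if __name__ == "__main__":
    for n in (1_024, 8_192, 32_768):
        gib = kv_cache_bytes(n) / 2**30
        print(f"{n:>6} tokens -> {gib:.3f} GiB per sequence")
```

The cost is linear in the number of cached tokens, which is why capping the cache at a fixed token budget translates directly into a fixed memory ceiling.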

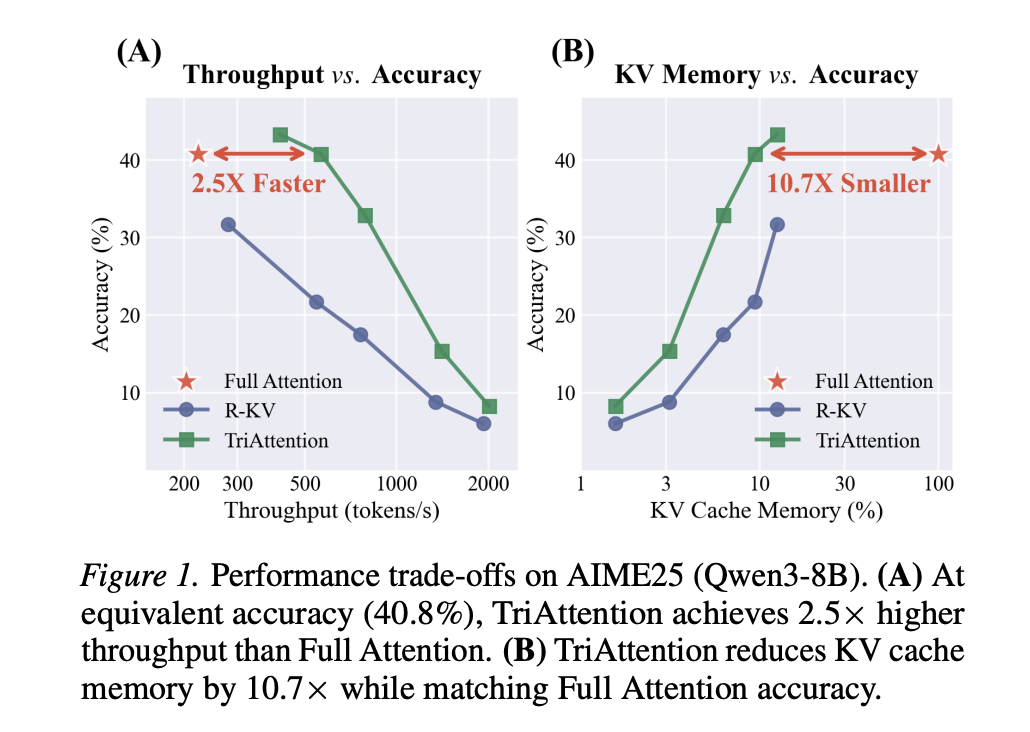

A team of researchers from MIT, NVIDIA, and Zhejiang University has proposed a method called TriAttention that tackles this problem directly. On the AIME25 mathematical reasoning benchmark with 32K-token generation, TriAttention matches Full Attention accuracy while delivering 2.5× higher throughput or a 10.7× reduction in KV memory. Leading baselines reach only a fraction of that accuracy at the same efficiency level.

The Problem with Existing KV Cache Compression

To see why TriAttention matters, it helps to understand the standard approach to KV cache compression. Most existing methods, including SnapKV, H2O, and R-KV, work by estimating which tokens in the KV cache are important and evicting the rest. Importance is usually estimated from attention scores: if a key receives high attention from recent queries, it is considered important and is kept.
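The general idea of attention-score-based eviction can be sketched as follows. This is a generic illustration, not the actual SnapKV, H2O, or R-KV implementation; the function name, the choice of summed softmax mass as the importance measure, and the array shapes are all assumptions.

```python
import numpy as np


def evict_by_recent_attention(keys, recent_queries, budget):
    """Return sorted indices of cached keys to keep (hypothetical helper).

    keys:           (n_keys, d) cached key vectors
    recent_queries: (w, d) the last w queries (the observation window)
    budget:         number of keys to retain
    """
    d = keys.shape[1]
    logits = recent_queries @ keys.T / np.sqrt(d)      # (w, n_keys)
    logits -= logits.max(axis=1, keepdims=True)        # numerical stability
    probs = np.exp(logits)
    probs /= probs.sum(axis=1, keepdims=True)          # softmax per query
    importance = probs.sum(axis=0)                     # attention mass per key
    keep = np.argsort(importance)[-budget:]            # top-`budget` keys
    return np.sort(keep)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    keys = rng.normal(size=(64, 16))
    queries = rng.normal(size=(8, 16))
    print(evict_by_recent_attention(keys, queries, budget=16))
```

Note that the ranking depends entirely on which queries happen to fall inside the observation window: a key that none of them attends to is dropped, whatever its future relevance.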

The catch is that these methods operate in what the research team calls post-RoPE space. RoPE, or Rotary Position Embedding, is the positional encoding scheme used by most modern LLMs, including Llama, Qwen, and Mistral. RoPE encodes position by rotating the Query and Key vectors in a frequency-dependent way. As a result, a query vector at position 10,000 looks very different from the same semantic query at position 100, because its direction has been rotated by the positional encoding.
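A minimal RoPE sketch shows both effects: the same vector points in a different direction at different positions, yet the query-key dot product depends only on the relative distance. The interleaved pairing of dimensions and the base of 10,000 are common conventions assumed here; real models may lay out the pairs differently.

```python
import numpy as np


def rope(x, pos, base=10000.0):
    """Apply rotary position embedding to a 1-D float vector of even length.

    Dimension pairs (2f, 2f+1) are rotated by the angle pos * omega_f,
    with omega_f = base ** (-2f / d). The pairing layout is an assumption.
    """
    d = x.shape[-1]
    omega = base ** (-np.arange(0, d, 2) / d)   # one frequency per band
    angle = pos * omega
    cos, sin = np.cos(angle), np.sin(angle)
    x_even, x_odd = x[0::2], x[1::2]
    out = np.empty_like(x)
    out[0::2] = x_even * cos - x_odd * sin
    out[1::2] = x_even * sin + x_odd * cos
    return out


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    q, k = rng.normal(size=64), rng.normal(size=64)
    a, b = rope(q, 100), rope(q, 10_000)
    cos_sim = a @ b / (np.linalg.norm(a) * np.linalg.norm(b))
    print(f"cosine(q@100, q@10000) = {cos_sim:.3f}")
    # The logit depends only on the relative distance (10 in both cases).
    print(np.isclose(rope(q, 110) @ rope(k, 100), rope(q, 10_010) @ rope(k, 10_000)))
```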

This rotation means that only the most recently generated queries have orientations that are "up to date" for estimating which keys matter right now. Prior work has confirmed this empirically: enlarging the observation window used for importance estimation does not help. Performance peaks at around 25 queries and declines after that. With a window that small, some keys that will become important later are evicted permanently.

The problem is most severe for what the research team calls retrieval heads: attention heads whose job is to retrieve specific factual tokens from long contexts. The tokens relevant to a retrieval head can lie dormant for thousands of tokens before suddenly becoming essential to the reasoning chain. Post-RoPE methods, working from a narrow observation window, see low attention on those tokens during the dormant period and evict them permanently. When the model later needs to recall that information, it is already gone, and the chain of thought breaks.

The Pre-RoPE Observation: Q/K Concentration

The key insight behind TriAttention comes from looking at Query and Key vectors before the RoPE rotation is applied, in pre-RoPE space. When the research team visualized Q and K vectors in this space, they found something consistent and surprising: across the vast majority of attention heads and across multiple model architectures, both Q and K vectors cluster tightly around fixed, non-zero center points. The research team calls this property Q/K concentration and measures it with the Mean Resultant Length R, a standard quantity from directional statistics in which R → 1 means tight clustering and R → 0 means dispersion in all directions.
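As a rough illustration, the textbook definition of the Mean Resultant Length is the norm of the average of the unit-normalized vectors. The sketch below uses that definition on synthetic data; whether the paper computes R over whole head vectors or per frequency band is not stated in the article, so treat the granularity as an assumption.

```python
import numpy as np


def mean_resultant_length(vectors):
    """R = norm of the mean of the unit-normalized rows; lies in [0, 1]."""
    unit = vectors / np.linalg.norm(vectors, axis=1, keepdims=True)
    return float(np.linalg.norm(unit.mean(axis=0)))


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    center = np.array([3.0, -2.0, 1.0, 0.5])
    clustered = center + 0.1 * rng.normal(size=(1000, 4))  # tight around a non-zero center
    scattered = rng.normal(size=(1000, 4))                 # no preferred direction
    print(f"clustered R = {mean_resultant_length(clustered):.3f}")
    print(f"scattered R = {mean_resultant_length(scattered):.3f}")
```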

On Qwen3-8B, roughly 90% of attention heads show R > 0.95, meaning their pre-RoPE Q/K vectors are almost perfectly concentrated around their centers. Crucially, these centers are stable across token positions and across input sequences: they are an intrinsic property of the model's learned weights, not of any particular input. The research team also confirms that Q/K concentration is domain-agnostic: measuring the Mean Resultant Length across Math, Coding, and Chat domains on Qwen3-8B yields nearly identical values of 0.977–0.980.

This stability is exactly what post-RoPE methods cannot exploit. The RoPE rotation smears these concentrated vectors into arc patterns that vary with position. In pre-RoPE space, by contrast, the centers stay fixed.
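The effect can be reproduced on synthetic data for a single frequency band, written as a complex number so that the RoPE rotation becomes a multiplication by a unit phase. The center, noise level, and frequency below are made-up values for illustration only.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 2000
omega = 0.05                                  # assumed frequency of one RoPE band
center = 2.0 + 1.0j                           # assumed non-zero pre-RoPE center
pre = center + 0.1 * (rng.normal(size=n) + 1j * rng.normal(size=n))
positions = np.arange(n)
post = pre * np.exp(1j * omega * positions)   # RoPE rotates the band by omega * position


def mrl(z):
    """Mean resultant length of complex numbers viewed as 2-D directions."""
    return float(np.abs(np.mean(z / np.abs(z))))


print(f"pre-RoPE  R = {mrl(pre):.3f}")
print(f"post-RoPE R = {mrl(post):.3f}")
```

The pre-RoPE samples stay tightly clustered, while the rotated copies spread around the circle and their resultant length collapses toward zero.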

From Concentration to a Trigonometric Series

The research team then shows mathematically that when Q and K vectors are concentrated around their centers, the attention logit (the raw score before softmax that determines how strongly a query attends to a key) simplifies dramatically. Substituting the Q/K centers into the RoPE attention formula reduces the logit to a function that depends only on the Q-K distance (the relative positional gap between query and key), expressed as a trigonometric series in that distance.

In this series, Δ is the positional distance, ω_f are the RoPE frequencies of each band f, and the coefficients a_f and b_f are determined by the Q/K centers. The series yields a characteristic attention-versus-distance curve for each head. Some heads prefer nearby keys (local attention), while others prefer very distant keys (attention sinks). The centers, computed offline from calibration data, fully determine which distances are preferred.
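A form consistent with these definitions can be sketched as follows. The notation is an assumption made for illustration and may differ from the paper's: each frequency band is written as a complex number, $\bar q_f$ and $\bar k_f$ denote the pre-RoPE centers of band $f$, $^{*}$ is complex conjugation, RoPE multiplies band $f$ at position $p$ by $e^{i\omega_f p}$, and the usual $1/\sqrt{d}$ scaling is omitted.

```latex
\begin{aligned}
\text{logit}(m, n)
  &\approx \sum_f \operatorname{Re}\!\left[
       \bigl(\bar q_f\, e^{i\omega_f m}\bigr)
       \bigl(\bar k_f\, e^{i\omega_f n}\bigr)^{*}\right]
   = \sum_f \operatorname{Re}\!\left[\bar q_f\, \bar k_f^{*}\, e^{i\omega_f \Delta}\right],
   \qquad \Delta = m - n, \\[4pt]
  &= \sum_f \bigl[\, a_f \cos(\omega_f \Delta) + b_f \sin(\omega_f \Delta) \,\bigr],
   \qquad
   a_f = \operatorname{Re}\!\left(\bar q_f\, \bar k_f^{*}\right),
   \quad
   b_f = -\operatorname{Im}\!\left(\bar q_f\, \bar k_f^{*}\right).
\end{aligned}
```

The absolute positions $m$ and $n$ cancel, leaving only $\Delta$, which is why fixed centers are enough to predict a head's attention-versus-distance curve.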

The research team validated this experimentally across all 1,152 attention heads in Qwen3-8B and across the Qwen2.5 and Llama3 architectures. The Pearson correlation between the predicted trigonometric curve and the actual attention logits has a mean above 0.5 across all heads, with many heads reaching correlations of 0.6–0.9. The team also validated the result on GLM-4.7-Flash, which uses Multi-head Latent Attention (MLA) rather than standard Grouped-Query Attention (GQA), a meaningfully different attention architecture. On MLA, 96.6% of heads show R > 0.95, compared with 84.7% for GQA, confirming that Q/K concentration is not specific to one attention design but a general property of modern LLMs.
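The kind of check described above can be mimicked on synthetic data: predict the logit-versus-distance curve from the centers alone, then compare it with logits computed from noisy Q/K samples that have been rotated by RoPE. Everything here is hypothetical (random centers, noise level, head dimension, complex-number representation of each band); it is a sketch of the procedure, not a reproduction of the paper's experiment.

```python
import numpy as np

rng = np.random.default_rng(0)
d, base = 16, 10000.0
omega = base ** (-np.arange(0, d, 2) / d)     # RoPE frequency per band

# Assumed pre-RoPE centers, one complex number per band (hypothetical values).
q_center = rng.normal(size=d // 2) + 1j * rng.normal(size=d // 2)
k_center = rng.normal(size=d // 2) + 1j * rng.normal(size=d // 2)

# Series coefficients derived from the centers.
c = q_center * np.conj(k_center)
a, b = c.real, -c.imag

deltas = np.arange(0, 512)
theta = np.outer(deltas, omega)               # (n_deltas, n_bands)
predicted = (a * np.cos(theta) + b * np.sin(theta)).sum(axis=1)

# "Actual" logits: noisy Q/K samples around the centers, rotated by RoPE.
key_pos = 1000
actual = np.empty(len(deltas))
for i, delta in enumerate(deltas):
    q = q_center + 0.1 * (rng.normal(size=d // 2) + 1j * rng.normal(size=d // 2))
    k = k_center + 0.1 * (rng.normal(size=d // 2) + 1j * rng.normal(size=d // 2))
    q_rot = q * np.exp(1j * omega * (key_pos + delta))
    k_rot = k * np.exp(1j * omega * key_pos)
    actual[i] = np.sum((q_rot * np.conj(k_rot)).real)

pearson = np.corrcoef(predicted, actual)[0, 1]
print(f"Pearson r = {pearson:.3f}")
```

The tighter the samples sit around their centers, the closer the correlation gets to 1; heads with low concentration would show a weaker match.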

How TriAttention Uses This

TriAttention is a KV cache compression method that uses these findings to score keys without needing any live query observations. The scoring function has two components:

The Trigonometric Series Score (S_trig) uses the Q center computed offline and the actual cached key representation to estimate how much attention the key will receive, based on its positional distance from future queries. Because a key may be attended to by queries at many future positions, TriAttention averages this score over a set of future offsets chosen with geometric spacing.

The Norm-Based Score (S_norm) handles the minority of attention heads where Q/K concentration is lower. It weights each frequency band by the expected query norm contribution, providing complementary information about token salience beyond distance preference alone.

The two scores are combined using the Mean Resultant Length R as an adaptive weight: when concentration is high, S_trig dominates; when concentration is low, S_norm contributes more. Every 128 generated tokens, TriAttention scores all keys in the cache and keeps only the top-B, evicting the rest.
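The scoring-and-eviction loop described above can be sketched roughly as follows. The article does not give the exact formulas, so the specific form of each score, the linear blend `R * s_trig + (1 - R) * s_norm`, the power-of-two offsets, and all function names are assumptions made for illustration; a real implementation would presumably also normalize the two scores to a common scale.

```python
import numpy as np


def trig_score(keys, key_pos, now, q_center, omega, offsets):
    """Estimated future attention per key, averaged over future query offsets.

    keys: (n, F) complex pre-RoPE keys, one complex number per frequency band.
    """
    dist = (now + offsets)[None, :] - key_pos[:, None]             # (n, n_offsets)
    phase = np.exp(1j * dist[:, :, None] * omega[None, None, :])   # (n, n_offsets, F)
    logits = (q_center * np.conj(keys))[:, None, :] * phase        # per-band terms
    return logits.real.sum(axis=2).mean(axis=1)                    # (n,)


def norm_score(keys, q_norm):
    """Weight each band's key magnitude by the expected query norm in that band."""
    return (np.abs(keys) * q_norm).sum(axis=1)


def select_keys(keys, key_pos, now, q_center, q_norm, omega, R, budget,
                offsets=2 ** np.arange(0, 10)):
    """Indices of the top-`budget` keys under an R-weighted blend (assumed form)."""
    s_trig = trig_score(keys, key_pos, now, q_center, omega, offsets)
    s_norm = norm_score(keys, q_norm)
    score = R * s_trig + (1.0 - R) * s_norm
    return np.sort(np.argsort(score)[-budget:])


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    F, n = 8, 300
    omega = 10000.0 ** (-np.arange(F) / F)
    q_center = rng.normal(size=F) + 1j * rng.normal(size=F)
    keys = rng.normal(size=(n, F)) + 1j * rng.normal(size=(n, F))
    kept = select_keys(keys, np.arange(n), now=n, q_center=q_center,
                       q_norm=np.abs(q_center), omega=omega, R=0.97, budget=64)
    print(len(kept), kept[:8])
```

In a decoding loop, `select_keys` would be called once every 128 generated tokens, with `budget` playing the role of B. No live queries appear anywhere in the scoring: only the cached keys, their positions, and statistics computed offline.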

Results on Mathematical Reasoning

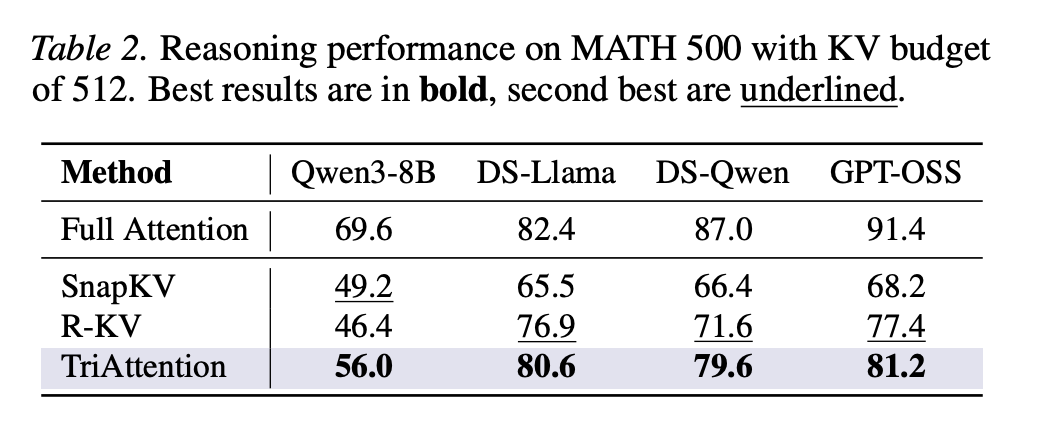

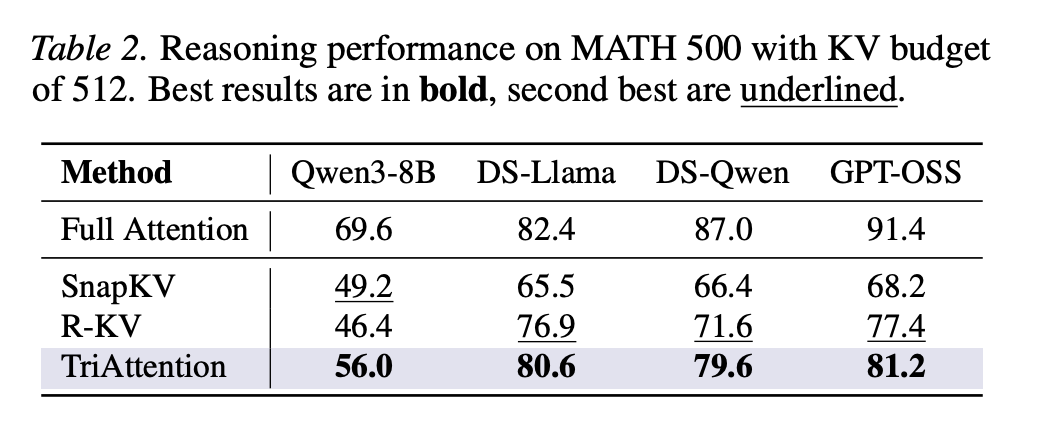

On AIME24 with Qwen3-8B, TriAttention reaches 42.1% accuracy against Full Attention's 57.1%, while R-KV reaches only 25.4% at the same KV budget of 2,048 tokens. On AIME25, TriAttention scores 32.9% versus 17.5% for R-KV, a gap of 15.4 percentage points. On MATH 500, with only 1,024 tokens in the KV cache out of a possible 32,768, TriAttention reaches 68.4% accuracy against Full Attention's 69.6%.

The research team also introduces a Recursive State Query benchmark based on recursive simulation using depth-first search. Recursive tasks stress memory retention because the model must hold intermediate states across long chains and return to them later: if any intermediate state is evicted, the error propagates through all subsequent return values and corrupts the final result. Under moderate memory pressure up to depth 16, TriAttention performs comparably to Full Attention, while R-KV shows catastrophic accuracy degradation, falling from roughly 61% at depth 14 to 31% at depth 16. This indicates that R-KV wrongly evicts intermediate reasoning states.

On throughput, TriAttention reaches 1,405 tokens per second on MATH 500 against Full Attention's 223 tokens per second, a 6.3× speedup. On AIME25, it reaches 563.5 tokens per second against 222.8, a 2.5× speedup at matched accuracy.
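The quoted speedups follow directly from the throughput figures, as this small check shows. The helper name is hypothetical; the numbers are the ones given above.

```python
def speedup(method_tps, baseline_tps):
    """Throughput ratio of a method over a baseline (tokens per second)."""
    return method_tps / baseline_tps


# Figures quoted in the article.
print(f"MATH 500: {speedup(1405, 223):.1f}x")
print(f"AIME25:   {speedup(563.5, 222.8):.1f}x")
```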

Generalization Beyond Mathematical Reasoning

The results extend beyond math benchmarks. On LongBench, a 16-subtask benchmark covering question answering, summarization, few-shot classification, retrieval, counting, and code tasks, TriAttention achieves the highest average score, 48.1, among all compression methods at a 50% KV budget on Qwen3-8B, winning 11 of the 16 subtasks and beating the next-best baseline (Ada-KV-based) by 2.5 points. On the RULER retrieval benchmark at a 4K context length, TriAttention scores 66.1, a 10.5-point lead over SnapKV. These results confirm that the method is not tuned only to mathematical reasoning: the underlying Q/K concentration phenomenon carries over to general language tasks.

Key Takeaways

- Existing KV cache compression methods have a fundamental blind spot: Methods such as SnapKV and R-KV estimate token importance from recent post-RoPE queries, but because RoPE rotates query vectors with position, only a small window of queries is usable. As a result, important tokens, especially those needed by retrieval heads, are permanently evicted before they become critical.

- Pre-RoPE Query and Key vectors cluster around stable, fixed centers across the vast majority of attention heads: This property, called Q/K concentration, holds regardless of input content, token position, or domain, and is consistent across Qwen3, Qwen2.5, Llama3, and even Multi-head Latent Attention architectures such as GLM-4.7-Flash.

- These stable centers make attention patterns mathematically predictable without observing any live queries: When Q/K vectors are concentrated, the attention score between any query and key reduces to a function that depends only on their positional distance, encoded as a trigonometric series. TriAttention uses this to score every cached key with Q centers computed offline from calibration data alone.

- TriAttention matches Full Attention reasoning accuracy at a fraction of the memory and compute cost: On AIME25 with 32K-token generation, it achieves 2.5× higher throughput or a 10.7× reduction in KV memory while matching Full Attention accuracy, and it reaches nearly double R-KV's accuracy at the same memory budget on both AIME24 and AIME25.

- The method generalizes beyond math and works on consumer hardware: TriAttention achieves the highest average score among the baselines on LongBench's 16 general NLP subtasks and leads on the RULER retrieval benchmark, and it enables a 32B reasoning model to run on a single 24GB RTX 4090 via OpenClaw, a workload that causes out-of-memory errors under Full Attention.
