Sakana AI Releases ShinkaEvolve: An Open-Source Framework That Evolves Programs for Scientific Discovery with High Sample Efficiency

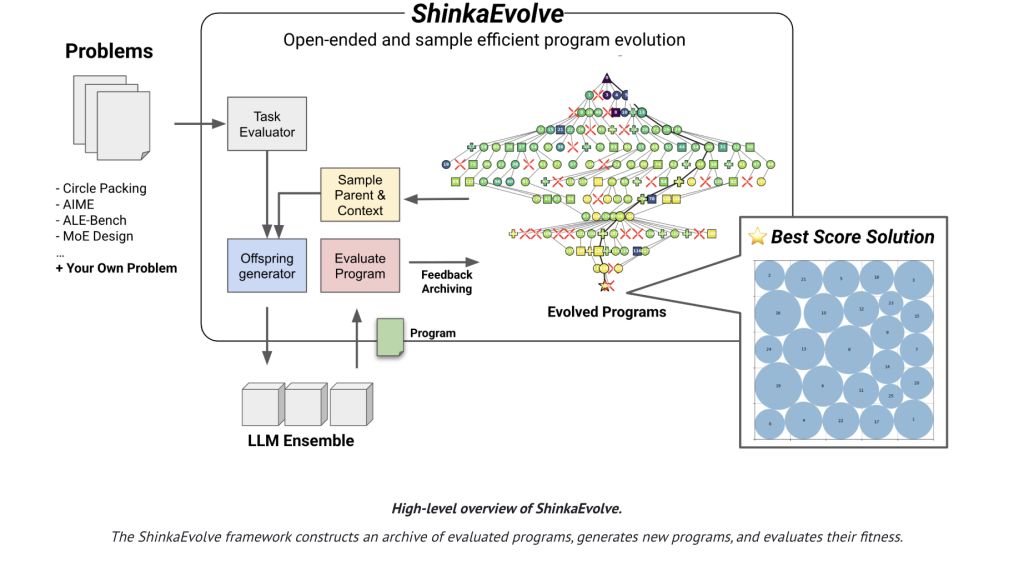

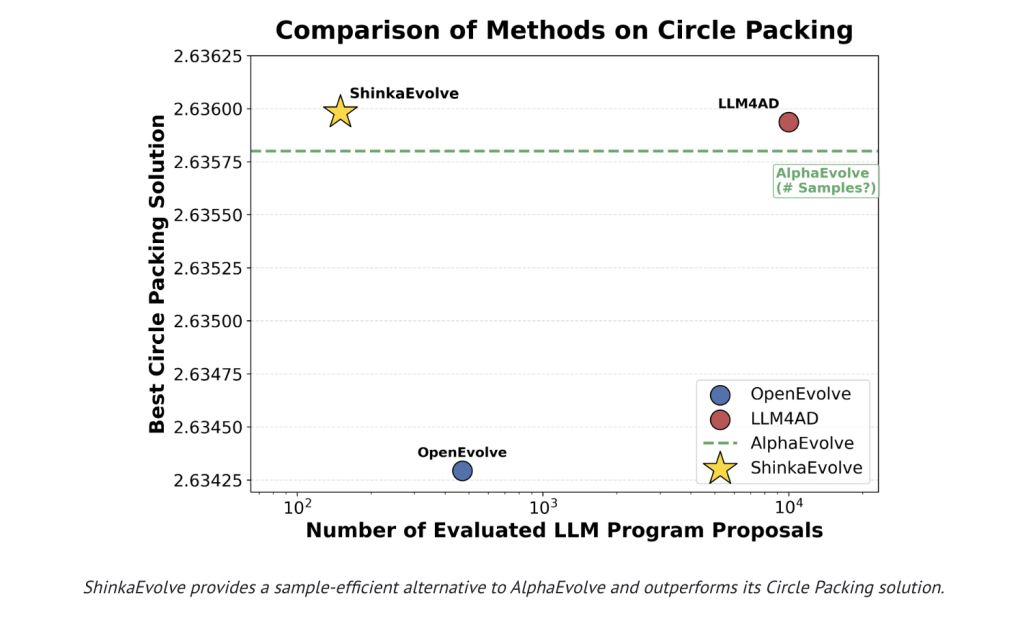

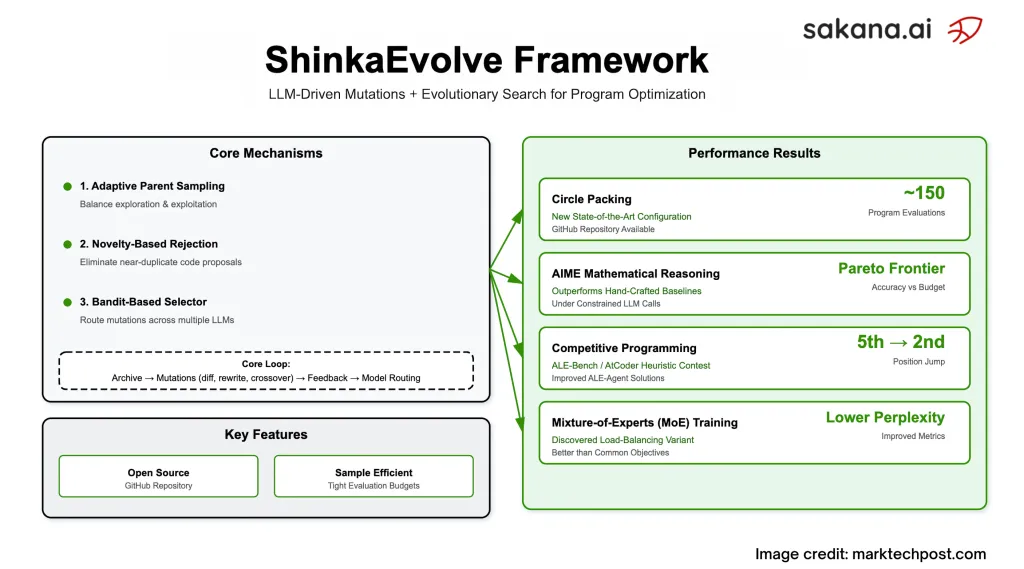

Sakana AI has released ShinkaEvolve, an open-source framework that uses large language models (LLMs) as mutation operators in an evolutionary loop to evolve programs for scientific and engineering problems, while sharply cutting the number of evaluations needed to reach strong solutions. On the canonical circle packing benchmark (n = 26 in a unit square), ShinkaEvolve reports a new SOTA configuration using ~150 program evaluations, where prior systems typically burned thousands. The project ships under Apache-2.0, with a research report and public code.

What problem is it actually solving?

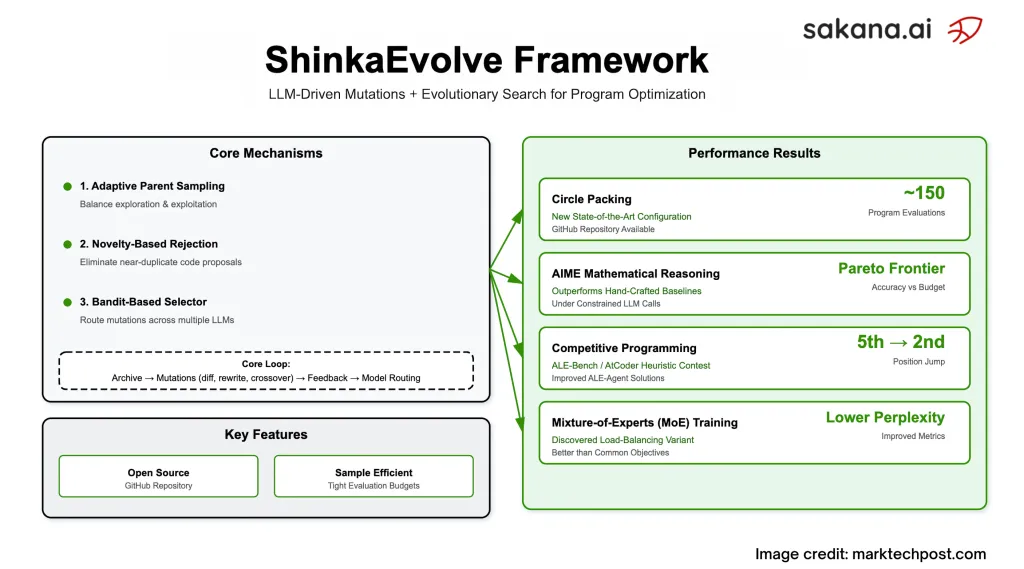

Most "agentic" code-evolution systems explore by brute force: they mutate code, run it, score it, and repeat, consuming enormous sampling budgets. ShinkaEvolve targets that waste explicitly. Three components interact:

- Adaptive parent sampling to balance exploration and exploitation. Parents are drawn from "islands" via fitness- and novelty-aware policies (power-law, or weighted by performance and offspring counts) rather than always climbing from the current best.

- Novelty-based rejection filtering to avoid re-evaluating near-duplicates. Mutable code segments are embedded; if cosine similarity exceeds a threshold, a secondary LLM acts as a "novelty judge" before the candidate is executed.

- Bandit-based LLM ensembling, so the system learns which model (e.g., GPT/Gemini/Claude/DeepSeek families) is yielding the largest relative fitness jumps and routes future mutations accordingly (a UCB1-style update on improvement over the parent/baseline).
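The first two components can be illustrated with a minimal sketch. It is not taken from the ShinkaEvolve codebase: the function names, the rank-based power law, the `alpha` exponent, and the 0.95 threshold are all assumptions chosen for illustration, and the embeddings are plain lists of floats rather than output from a real embedding model.

```python
import math
import random

def power_law_parent(archive, alpha=1.0, rng=random):
    """Pick a parent by fitness rank, with weight proportional to rank**(-alpha).

    Hypothetical helper: larger alpha exploits the best programs harder,
    smaller alpha spreads sampling across the archive.
    """
    ranked = sorted(archive, key=lambda p: p["fitness"], reverse=True)
    weights = [(rank + 1) ** -alpha for rank in range(len(ranked))]
    return rng.choices(ranked, weights=weights, k=1)[0]

def cosine_similarity(u, v):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

def needs_novelty_judge(candidate_vec, archive_vecs, threshold=0.95):
    """True when the candidate is similar enough to an archived program
    that a second opinion (the LLM novelty judge) would be requested.
    The threshold value is an assumption, not the project's setting."""
    return any(cosine_similarity(candidate_vec, v) > threshold for v in archive_vecs)
```

In this sketch a candidate that passes the similarity check goes straight to evaluation, so the expensive judge call is only spent on suspected near-duplicates.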

Does the sample-efficiency claim hold beyond toy problems?

The research team evaluates four distinct domains and shows consistent gains with small budgets:

- Circle packing (n = 26): reaches an improved configuration in roughly 150 evaluations; the research team also validates the result with stricter exact-constraint checking.

- AIME math reasoning (2024 set): evolves agentic scaffolds that trace out a Pareto frontier (accuracy vs. LLM-call budget), outperform hand-built baselines under limited query budgets, and transfer to other AIME years and LLMs.

- Competitive programming (ALE-Bench LITE): starting from ALE-Agent solutions, ShinkaEvolve delivers a ~2.3% mean improvement across 10 tasks and moves one task's solution from 5th → 2nd in an AtCoder leaderboard counterfactual.

- LLM training (Mixture-of-Experts): evolves a new load-balancing loss that improves perplexity and downstream accuracy across multiple regularization strengths vs. the widely used global-batch LBL.
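To make the circle packing item concrete, here is a hedged sketch of what a constraint check and score for this benchmark could look like. It assumes the usual formulation of the task (maximize the sum of radii of non-overlapping circles inside the unit square); the function names and the `tol` parameter are illustrative and are not the project's evaluator.

```python
import math

def is_valid_packing(circles, tol=0.0):
    """circles: list of (x, y, r) tuples.

    Valid when every circle lies inside [0, 1] x [0, 1] and no two circles
    overlap. tol=0.0 is the strict check; a small positive tol tolerates
    floating-point slack.
    """
    for x, y, r in circles:
        if r <= 0 or x - r < -tol or x + r > 1 + tol or y - r < -tol or y + r > 1 + tol:
            return False
    for i in range(len(circles)):
        xi, yi, ri = circles[i]
        for j in range(i + 1, len(circles)):
            xj, yj, rj = circles[j]
            # Overlap when the center distance is smaller than the sum of radii.
            if math.hypot(xi - xj, yi - yj) < ri + rj - tol:
                return False
    return True

def packing_score(circles):
    """Sum of radii for a valid packing, 0.0 otherwise (assumed objective)."""
    return sum(r for _, _, r in circles) if is_valid_packing(circles) else 0.0
```

Running the strict check (`tol=0.0`) on a candidate that was found with a looser tolerance is one simple way to confirm that a reported score does not depend on numerical slack.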

What does the evolutionary loop look like in practice?

ShinkaEvolve maintains an archive of evaluated programs with fitness, public metrics, and textual feedback. For each generation, it samples an island and parent(s); constructs a mutation context with top-K and random "inspiration" programs; and then proposes edits via three operators: diff edits, full rewrites, and LLM-guided crossovers, while protecting immutable code regions with explicit markers. Executed candidates update both the archive and the bandit statistics that steer subsequent LLM/model selection. The system periodically produces a meta-scratchpad that summarizes recently successful strategies; those summaries are fed back into prompts to accelerate later generations.
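The control flow of that loop can be sketched in a few lines. This is a toy under loud assumptions: "programs" are lists of floats, the three operators are numeric stand-ins for LLM-proposed diff edits, full rewrites, and crossovers, and parent selection is a plain top-K pick rather than the adaptive sampling described earlier. The bandit, immutable-region markers, and meta-scratchpad are omitted, and none of the names come from the ShinkaEvolve code.

```python
import random

def evolve(fitness, init, generations=200, n_islands=2, top_k=2, seed=0):
    """Toy island-based evolution loop; returns (best_fitness, best_program)."""
    rng = random.Random(seed)
    # One archive per island; each entry is (fitness, program).
    islands = [[(fitness(init), list(init))] for _ in range(n_islands)]

    def diff_edit(parent, _inspiration):
        child = list(parent)
        i = rng.randrange(len(child))
        child[i] += rng.gauss(0.0, 0.1)  # small local change
        return child

    def full_rewrite(parent, _inspiration):
        return [rng.uniform(-1.0, 1.0) for _ in parent]

    def crossover(parent, inspiration):
        # Take each position from either the parent or the inspiration program.
        return [rng.choice(pair) for pair in zip(parent, inspiration)]

    operators = [diff_edit, full_rewrite, crossover]

    for _ in range(generations):
        island = rng.choice(islands)
        ranked = sorted(island, key=lambda e: e[0], reverse=True)
        parent = rng.choice(ranked[:top_k])[1]   # stand-in for adaptive parent sampling
        inspiration = rng.choice(island)[1]      # a "random" inspiration program
        child = rng.choice(operators)(parent, inspiration)
        island.append((fitness(child), child))   # every evaluated program is archived

    return max((e for isl in islands for e in isl), key=lambda e: e[0])
```

For example, `evolve(lambda p: -sum(x * x for x in p), [1.0, 1.0])` searches for a vector near the origin; because the initial program is archived, the returned fitness is never worse than the starting point.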

What are the concrete results?

- Circle packing: combines structured initialization (e.g., golden-angle patterns), hybrid global-local search (simulated annealing + SLSQP), and escape mechanisms (temperature reheating, ring rotations), all discovered by the system rather than hand-coded a priori.

- AIME scaffolds: a three-stage scaffold (generation → critical peer review → …)

- ALE-Bench: targeted engineering wins (e.g., caching kd-tree subtree statistics; "targeted edge moves" toward misclassified items) that push scores without wholesale rewrites.

- MoE loss: adds an entropy-modulated under-use penalty to the global-batch objective; empirically reduces mis-routing and improves perplexity/benchmarks as layer routing concentrates.
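As an illustration of the "golden-angle pattern" mentioned for circle packing, the sketch below places centers on a sunflower-style spiral inside the unit square. The golden angle itself is a standard constant; the function name, the `max_radius` value, and the exact radial spacing are assumptions and may differ from what the evolved program does.

```python
import math

GOLDEN_ANGLE = math.pi * (3.0 - math.sqrt(5.0))  # about 2.39996 rad (roughly 137.5 degrees)

def golden_angle_centers(n=26, max_radius=0.45):
    """Return n (x, y) centers on a golden-angle spiral around (0.5, 0.5).

    The square-root radial spacing spreads points roughly evenly over the
    disc; max_radius < 0.5 keeps every center inside the unit square.
    """
    centers = []
    for k in range(n):
        rho = max_radius * math.sqrt((k + 0.5) / n)
        theta = k * GOLDEN_ANGLE
        centers.append((0.5 + rho * math.cos(theta), 0.5 + rho * math.sin(theta)))
    return centers
```

A layout like this only provides starting centers; radii would still have to be assigned and refined by a subsequent search step.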

How does this compare to AlphaEvolve and related systems?

AlphaEvolve demonstrated strong closed-source results but at higher evaluation counts. ShinkaEvolve reproduces and surpasses the circle packing result with orders-of-magnitude fewer samples, and it releases all components as open source. The research team also contrasts variants (e.g., single-model vs. fixed ensemble vs. bandit ensemble) and ablates parent selection and novelty filtering, showing that each contributes to the observed efficiency.

Summary

ShinkaEvolve is an Apache-2.0 framework for LLM-driven program evolution that cuts evaluations from thousands to hundreds by combining fitness/novelty-aware parent sampling, embedding-plus-LLM novelty rejection, and a UCB1-style adaptive LLM ensemble. It sets a new SOTA on circle packing (~150 evals), finds stronger AIME scaffolds under a strict query budget, improves ALE-Bench solutions (~2.3% mean gain, 5th → 2nd on one task), and discovers a new MoE load-balancing loss that improves perplexity and downstream accuracy. The code and report are public.

FAQs – ShinkaEvolve

1) What is ShinkaEvolve?

An open-source framework that couples LLM-driven program mutations with evolutionary search to automate algorithm discovery and optimization. The code and report are public.

2) How does it achieve higher sample efficiency than prior evolutionary systems?

Three mechanisms: adaptive parent sampling (explore/exploit balance), novelty-based rejection to avoid duplicate evaluations, and a bandit-based selector that routes mutations to the most promising LLMs.
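The bandit-based selector can be sketched with textbook UCB1. This is an illustration only, not ShinkaEvolve's implementation: the class name, the model labels, and the choice to clip the reward at zero improvement over the parent are assumptions, and classic UCB1 further assumes rewards scaled to the range [0, 1].

```python
import math

class UCB1ModelSelector:
    """Choose which LLM proposes the next mutation using the UCB1 rule."""

    def __init__(self, models, c=math.sqrt(2.0)):
        self.models = list(models)
        self.c = c  # exploration weight; sqrt(2) gives the classic UCB1 bonus
        self.counts = {m: 0 for m in self.models}
        self.mean_reward = {m: 0.0 for m in self.models}

    def select(self):
        # Try every model once before trusting the statistics.
        for m in self.models:
            if self.counts[m] == 0:
                return m
        total = sum(self.counts.values())
        return max(
            self.models,
            key=lambda m: self.mean_reward[m]
            + self.c * math.sqrt(math.log(total) / self.counts[m]),
        )

    def update(self, model, child_fitness, parent_fitness):
        # Reward is the improvement over the parent, clipped at zero (assumption).
        reward = max(0.0, child_fitness - parent_fitness)
        self.counts[model] += 1
        n = self.counts[model]
        self.mean_reward[model] += (reward - self.mean_reward[model]) / n
```

A typical round calls `select()`, asks the chosen model for a mutation, evaluates the child, and then calls `update()` with the child and parent fitness, so models that keep producing improvements are picked more often while the others are still sampled occasionally.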

3) What supports the results?

It reaches state-of-the-art circle packing with ~150 evaluations; on AIME-2024 it evolves scaffolds under a cap of 10 queries per problem; and it improves ALE-Bench solutions over strong baselines.

4) Where can I use it and what is the license?

The GitHub repo provides a WebUI and examples; ShinkaEvolve is released under Apache-2.0.


Asif Razzaq is the CEO of Marktechpost Media Inc. As an entrepreneur and engineer, Asif is committed to harnessing the potential of artificial intelligence for social good. His most recent endeavor is the launch of an artificial intelligence media platform, Marktechpost, known for in-depth coverage of machine learning and deep learning news that is both technically sound and easy to understand. The platform draws more than two million monthly views, reflecting its popularity among readers.
