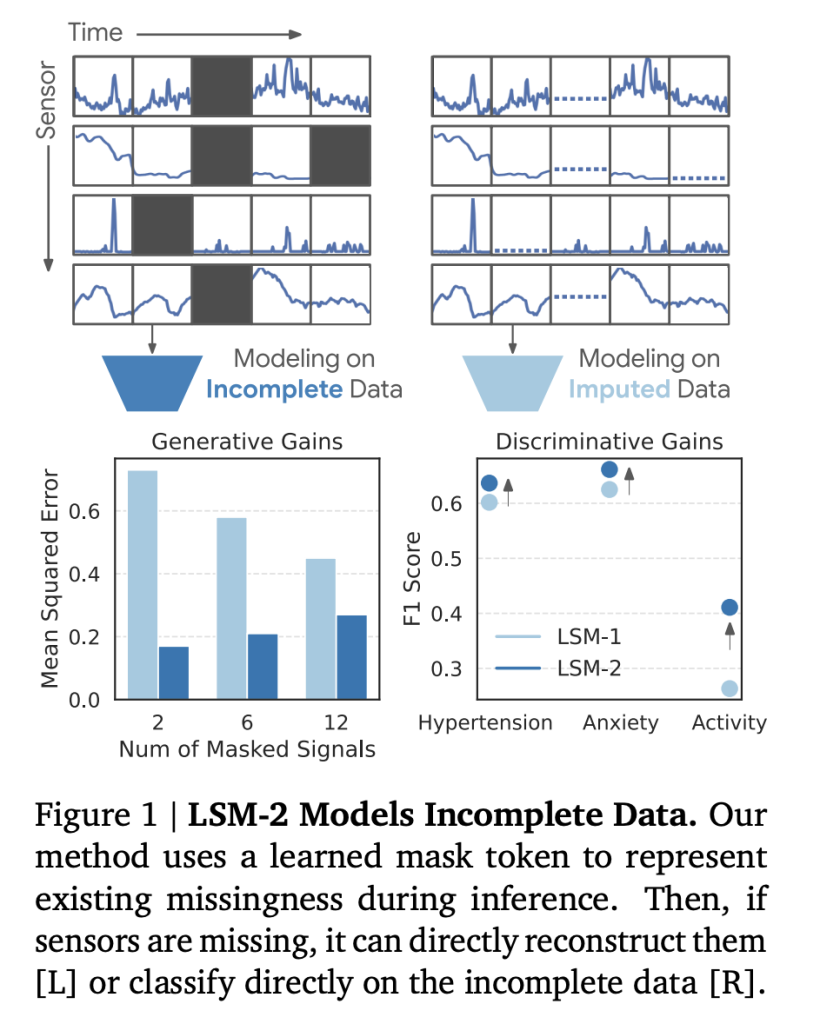

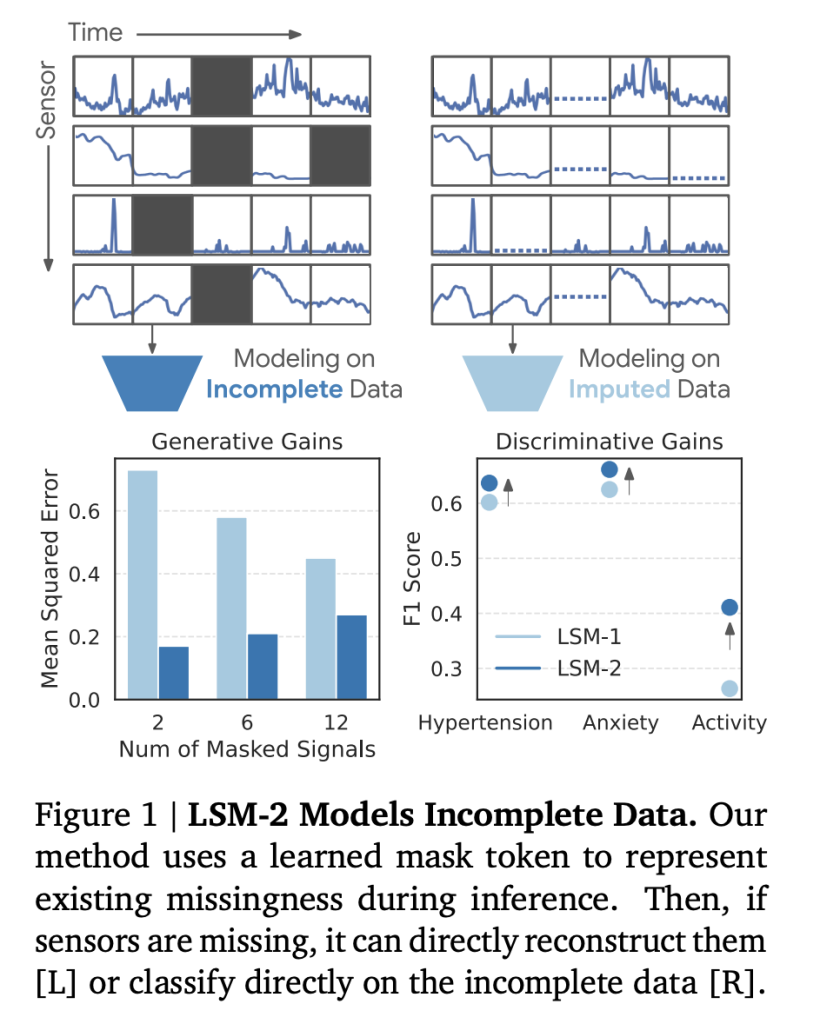

Google Introduces LSM-2 with Adaptive and Inherited Masking (AIM): Enabling Direct Learning from Incomplete Wearable Data

Introduction

Wearable devices are transforming health monitoring by enabling continuous collection of physiological and behavioral signals such as heart rate, activity, temperature, and skin conductance. However, the real-world data these devices generate is highly prone to missingness caused by sensor failures, device removal, charging, motion artifacts, battery-saving modes, and other interruptions. This poses a significant challenge for self-supervised learning (SSL) and foundation models, which typically expect complete, regular data streams. Past solutions often relied on data imputation or on discarding incomplete samples, which risks introducing bias or wasting valuable information.

A team of researchers from Google DeepMind has introduced LSM-2 (Large Sensor Model 2), accompanied by the new Adaptive and Inherited Masking (AIM) strategy, which addresses these problems by learning robust representations from incomplete wearable sensor data without explicit imputation. Below, we examine the technical innovations, empirical results, and key insights from this development.

The Challenge: Wearable Data Missingness

- Data Fragmentation: In a large-scale dataset of 1.6 million day-long (1440-minute) wearable data samples, 0% of the samples were fully complete; missingness is ubiquitous and is often structured into long gaps, not simple random dropouts.

- Missingness Modes: Common causes include:

  - Device off (charging or not worn)

  - Selective sensor deactivation (power-saving or operation-specific)

  - Motion artifacts or environmental noise

  - Out-of-range or physiologically impossible readings that are filtered out during preprocessing

- Impact on Modeling: Many clinically relevant physiological patterns (e.g., circadian rhythms, heart rate variability) require analysis of long sequences, where missingness is nearly guaranteed.
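
To make the idea of structured missingness concrete, the following minimal sketch measures how much of a day-long signal is missing and how the missing minutes cluster into gaps. The NaN convention for missing minutes, the helper names, and the example day are assumptions made for illustration; they are not taken from the LSM-2 work.

```python
import math

MINUTES_PER_DAY = 1440  # day-long sample length stated in the article


def missing_fraction(signal):
    """Fraction of minutes with no reading (NaN marks a missing minute)."""
    return sum(math.isnan(x) for x in signal) / len(signal)


def gap_lengths(signal):
    """Lengths of each contiguous run of missing minutes, in time order."""
    gaps, run = [], 0
    for x in signal:
        if math.isnan(x):
            run += 1
        elif run:
            gaps.append(run)
            run = 0
    if run:  # a gap that extends to the end of the day
        gaps.append(run)
    return gaps


# Hypothetical day: 90 minutes of charging plus two isolated dropouts.
day = [70.0] * MINUTES_PER_DAY
for minute in range(600, 690):
    day[minute] = math.nan
day[100] = math.nan
day[1200] = math.nan

print(missing_fraction(day))
print(gap_lengths(day))
```

In this toy day, most of the missing minutes sit in one long gap, which is the pattern the article describes as structured missingness as opposed to random dropouts.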

Adaptive and Inherited Masking (AIM): Technical Approach

Key Concepts

AIM integrates two masking types for robust learning:

- Inherited Mask: Marks tokens that correspond to real missingness in the sensor data

- Artificial Mask: Randomly masks observed tokens to provide reconstruction targets for self-supervised pretraining

These masks are combined and handled by a transformer-based encoder-decoder architecture, enabling the model to:

- Learn directly from non-imputed, incomplete data

- Adapt dynamically to real-world missingness during inference

- Produce representations that are robust to both partial and systematic data gaps
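
The two mask types described above can be sketched as follows. This is a simplified illustration, not the authors' implementation: it assumes missing values are encoded as NaN, that the masks are combined with a logical OR, and that the reconstruction loss is computed only on artificially masked tokens (the only masked tokens with known ground truth). The function names and the 50% ratio are hypothetical.

```python
import math
import random


def inherited_mask(tokens):
    """True where the sensor value is genuinely missing (NaN)."""
    return [math.isnan(t) for t in tokens]


def artificial_mask(inherited, ratio, rng):
    """Randomly mask a share of the observed (non-missing) tokens only."""
    observed = [i for i, m in enumerate(inherited) if not m]
    chosen = set(rng.sample(observed, int(ratio * len(observed))))
    return [i in chosen for i in range(len(inherited))]


def aim_masks(tokens, ratio=0.5, seed=0):
    """Return (inherited, artificial, union, targets) boolean masks."""
    rng = random.Random(seed)
    inh = inherited_mask(tokens)
    art = artificial_mask(inh, ratio, rng)
    union = [a or b for a, b in zip(inh, art)]  # hidden from the encoder
    targets = art  # assumption: loss only where ground truth exists
    return inh, art, union, targets


# Hypothetical token sequence with three genuinely missing values.
nan = math.nan
tokens = [0.1, nan, nan, 0.4, 0.5, 0.6, nan, 0.8]
inh, art, union, targets = aim_masks(tokens)
print(sum(inh), sum(art), sum(union))
```

Because the artificial mask is drawn only from observed tokens, the two masks never overlap, and the number of hidden tokens is the sum of both.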

Masking Strategies for Pretraining

- Random Imputation: Dropping 80% of tokens, simulating sensor noise

- Temporal Slices: Dropping 50% of temporal windows (all sensors missing during random periods)

- Sensor Slices: Dropping 50% of sensor channels across the entire day (modeling selective sensor-off periods)
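
The three strategies above can be illustrated on a small time-by-sensor grid. The sketch below is a toy version under stated assumptions: each time step counts as one temporal window, each column is one sensor channel, and the function names and grid size are invented for the example. Only the ratios (80%, 50%, 50%) come from the article.

```python
import random


def random_mask(n_time, n_sensors, ratio, rng):
    """Mask individual (time, sensor) cells uniformly at random."""
    cells = [(t, s) for t in range(n_time) for s in range(n_sensors)]
    chosen = set(rng.sample(cells, int(ratio * len(cells))))
    return [[(t, s) in chosen for s in range(n_sensors)] for t in range(n_time)]


def temporal_slice_mask(n_time, n_sensors, ratio, rng):
    """Mask whole time steps: every sensor is hidden at the chosen times."""
    rows = set(rng.sample(range(n_time), int(ratio * n_time)))
    return [[t in rows] * n_sensors for t in range(n_time)]


def sensor_slice_mask(n_time, n_sensors, ratio, rng):
    """Mask whole sensor channels for the entire day."""
    cols = set(rng.sample(range(n_sensors), int(ratio * n_sensors)))
    return [[s in cols for s in range(n_sensors)] for _ in range(n_time)]


def count_masked(mask):
    return sum(sum(row) for row in mask)


rng = random.Random(0)
n_time, n_sensors = 10, 4  # toy grid, far smaller than a real day
rand = random_mask(n_time, n_sensors, 0.8, rng)
temporal = temporal_slice_mask(n_time, n_sensors, 0.5, rng)
sensor = sensor_slice_mask(n_time, n_sensors, 0.5, rng)
print(count_masked(rand), count_masked(temporal), count_masked(sensor))
```

The temporal and sensor variants hide the same number of cells here, but in different shapes: full rows versus full columns. This mirrors the difference between a device being off for a period and a single sensor being disabled all day.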

AIM combines the efficiency of dropout masking (masked tokens are removed from the computation) with the flexibility of attention masking (support for dynamically varying missingness), allowing the model to scale to long input sequences (day-long, >3,000 tokens).
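
One way to picture this combination is sketched below. It assumes that a fixed budget of masked tokens is removed from the encoder input outright, while any remaining masked tokens stay in the sequence but are flagged so that attention ignores them. The fixed-budget split, the function name, and the example values are assumptions for illustration; the article states only that AIM combines the two mechanisms.

```python
def split_for_encoder(tokens, union_mask, drop_budget):
    """Drop up to drop_budget masked tokens; attention-mask the rest.

    Returns (kept_tokens, kept_positions, attend), where attend[i] is
    False for kept tokens that are masked and must not be attended to.
    """
    masked_positions = [i for i, m in enumerate(union_mask) if m]
    dropped = set(masked_positions[:drop_budget])
    kept_positions = [i for i in range(len(tokens)) if i not in dropped]
    kept_tokens = [tokens[i] for i in kept_positions]
    attend = [not union_mask[i] for i in kept_positions]
    return kept_tokens, kept_positions, attend


# Hypothetical sequence of 8 tokens with 5 masked positions.
tokens = [f"t{i}" for i in range(8)]
union_mask = [False, True, True, False, True, False, True, True]
kept_tokens, kept_positions, attend = split_for_encoder(
    tokens, union_mask, drop_budget=3
)
print(kept_tokens)
print(attend)
```

With a fixed budget, every sample that has at least `drop_budget` masked tokens yields an encoder sequence of the same length (`len(tokens) - drop_budget`), which keeps batching simple while the attention flags absorb the sample-to-sample variation in missingness.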

Dataset and Pretraining Details

- Scale: 40 million hours of day-long, multimodal sensor data, collected from 60,440 participants between March and May 2024.

- Sensors: Photoplethysmography (PPG), accelerometer, electrodermal activity (EDA), skin temperature, and altimeter. Each device contributed per-minute aggregated features across a 24-hour window.

- Demographic Diversity: Participants span a wide range of ages (18-96), genders, and BMI classes.

- Downstream Labeled Data:

  - Metabolic Study (hypertension and anxiety prediction; n = 1,250 labeled users)

  - Activity Recognition (20 activity classes, 104,086 events).

Evaluation and Results

Downstream Tasks

AIM-based LSM-2 was assessed on:

- Classification: Binary hypertension, binary anxiety, and 20-class activity recognition

- Regression: Age and BMI

- Generative: Recovery of missing sensor data (random imputation, temporal/signal gaps)

Quantitative Results

| Task | Metric | Best LSM-1 | LSM-2 w/ AIM | Improvement |
|---|---|---|---|---|
| Hypertension | F1 | 0.640 | 0.651 | +1.7% |
| Activity Recognition | F1 | 0.470 | 0.474 | +0.8% |
| BMI (regression) | Corr | 0.667 | 0.673 | +1.0% |
| Random Imputation (80%) | MSE (↓) | 0.30 | 0.20 | +33% lower error |
| 2-Signal Recovery | MSE (↓) | 0.73 | 0.17 | +77% lower error |
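
The improvement column can be checked by computing relative changes from the two score columns. The sketch below uses only the rounded values shown in the table; the function names are invented for the example. For metrics where higher is better, the change is measured relative to the LSM-1 score, and for MSE it is the relative reduction in error.

```python
def relative_gain(baseline, new):
    """Relative improvement in percent for higher-is-better metrics."""
    return 100.0 * (new - baseline) / baseline


def relative_error_reduction(baseline, new):
    """Relative reduction in percent for lower-is-better metrics (MSE)."""
    return 100.0 * (baseline - new) / baseline


recomputed = {
    "Hypertension F1": relative_gain(0.640, 0.651),
    "Activity recognition F1": relative_gain(0.470, 0.474),
    "BMI correlation": relative_gain(0.667, 0.673),
    "Random imputation MSE": relative_error_reduction(0.30, 0.20),
    "2-signal recovery MSE": relative_error_reduction(0.73, 0.17),
}
for task, pct in recomputed.items():
    print(f"{task}: {pct:.1f}%")
```

Recomputing from the rounded table entries gives roughly 1.7%, 0.9%, 0.9%, 33.3%, and 76.7%. Two of these differ from the reported column by about 0.1 percentage points, which would be expected if the reported figures were derived from unrounded scores (an assumption, since the article does not say).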

- Robustness to Targeted Missingness: When specific sensors or time windows were artificially removed, LSM-2 with AIM experienced 73% smaller performance drops than LSM-1. For example, the F1 loss after removing accelerometry for activity recognition was 57% for LSM-2, a smaller drop than for LSM-1, and LSM-2 retained a 47% higher absolute F1 after the ablation.

- Clinical Coherence: The model's performance drops matched domain expectations. Removing biosignals significantly reduced hypertension and anxiety prediction accuracy, reflecting the real-world diagnostic value of those signals.

- Scaling: LSM-2 showed better scaling than LSM-1 with respect to subjects, data, and model size, with no observed saturation in performance gains.

Technical Insights

- Direct Handling of Real-World Missingness: LSM-2 is the first wearable foundation model trained and evaluated directly on incomplete data, without explicit imputation.

- Hybrid Masking Mechanism: Adaptive and inherited masking achieves both computational efficiency (via dropout removal) and flexibility (via attention masking).

- Generalizable Embeddings: Even with a frozen backbone and simple linear probes, LSM-2 achieves state-of-the-art results on clinical and person-level tasks, outperforming SSL baselines.

- Generative and Discriminative Power: LSM-2 is the only evaluated model that can both reconstruct missing signals and produce embeddings applicable across various downstream tasks, which suggests utility for real-world medical and behavioral monitoring applications.
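
The "frozen backbone plus linear probe" setup mentioned above can be illustrated with a toy example: the encoder is never updated, and only a small logistic-regression layer is trained on its output embeddings. Everything in this sketch is hypothetical, including the stand-in `frozen_encoder`, the toy samples, and the training hyperparameters; it shows the general evaluation pattern, not the LSM-2 pipeline.

```python
import math


def frozen_encoder(sample):
    """Hypothetical stand-in for a pretrained backbone; never updated."""
    return [sum(sample) / len(sample), max(sample) - min(sample)]


def train_linear_probe(embeddings, labels, lr=0.5, steps=500):
    """Fit logistic regression on fixed embeddings by gradient descent."""
    dim, n = len(embeddings[0]), len(embeddings)
    w, b = [0.0] * dim, 0.0
    for _ in range(steps):
        grad_w, grad_b = [0.0] * dim, 0.0
        for x, y in zip(embeddings, labels):
            z = sum(wi * xi for wi, xi in zip(w, x)) + b
            p = 1.0 / (1.0 + math.exp(-z))  # predicted probability of class 1
            for j in range(dim):
                grad_w[j] += (p - y) * x[j] / n
            grad_b += (p - y) / n
        w = [wi - lr * g for wi, g in zip(w, grad_w)]
        b -= lr * grad_b
    return w, b


def predict(w, b, x):
    return int(sum(wi * xi for wi, xi in zip(w, x)) + b > 0.0)


# Toy labeled data: class 1 samples have clearly higher signal levels.
samples = [
    [0.1, 0.2, 0.1], [0.2, 0.3, 0.2], [0.0, 0.1, 0.2],
    [0.9, 1.0, 0.8], [1.1, 0.9, 1.0], [0.8, 0.9, 1.0],
]
labels = [0, 0, 0, 1, 1, 1]

embeddings = [frozen_encoder(s) for s in samples]  # computed once, then fixed
w, b = train_linear_probe(embeddings, labels)
accuracy = sum(predict(w, b, x) == y for x, y in zip(embeddings, labels)) / len(labels)
print(accuracy)
```

Because only `w` and `b` are trained, the probe's accuracy reflects how much task-relevant information the fixed embeddings already contain, which is why linear probing is a common way to compare pretrained representations.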

Conclusion

LSM-2 with Adaptive and Inherited Masking is a major step forward for delivering AI-driven health insights from real-world wearable sensor data. By directly embracing ubiquitous, structured missingness and unifying generative and discriminative capabilities in a single efficient, robust foundation model, this approach lays valuable groundwork for wearable and health AI in realistic, imperfect data environments.

Check out the Paper and technical details. All credit for this research goes to the researchers of this project.


Michal Sutter holds a Master of Science in Data Science from the University of Padova. With a solid foundation in mathematics, machine learning, and data engineering, Michal excels at transforming complex information into actionable insights.