New NVIDIA Research Shows Speculative Decoding in NeMo RL Achieves 1.8× Faster Rollout Generation at 8B and Projects a 2.5× End-to-End Speedup at 235B

If you've been running reinforcement learning (RL) post-training on a language model for mathematical reasoning, code generation, or any verifiable task, you've probably stared at the progress bar while your GPU cluster ground through rollout generation. A team of researchers from NVIDIA proposes a fix: integrating speculative decoding into the RL training loop itself, in a way that preserves the target model's output distribution.

The research team integrated speculative decoding directly into NeMo RL v0.6.0 with the vLLM backend, where it delivers lossless rollout acceleration at 8B scale and projected gains at 235B scale. The latest NeMo RL v0.6.0 release officially ships speculative decoding as a supported feature alongside the SGLang backend, the Muon optimizer, and YaRN long-context training.

Why Rollout Generation Is the Bottleneck

To understand the problem, it helps to know how a synchronous RL training step breaks down. In NeMo RL, each step consists of five stages: data loading, weight synchronization and backend preparation (prep), rollout generation (gen), log-probability recomputation (logprob), and policy optimization (train).

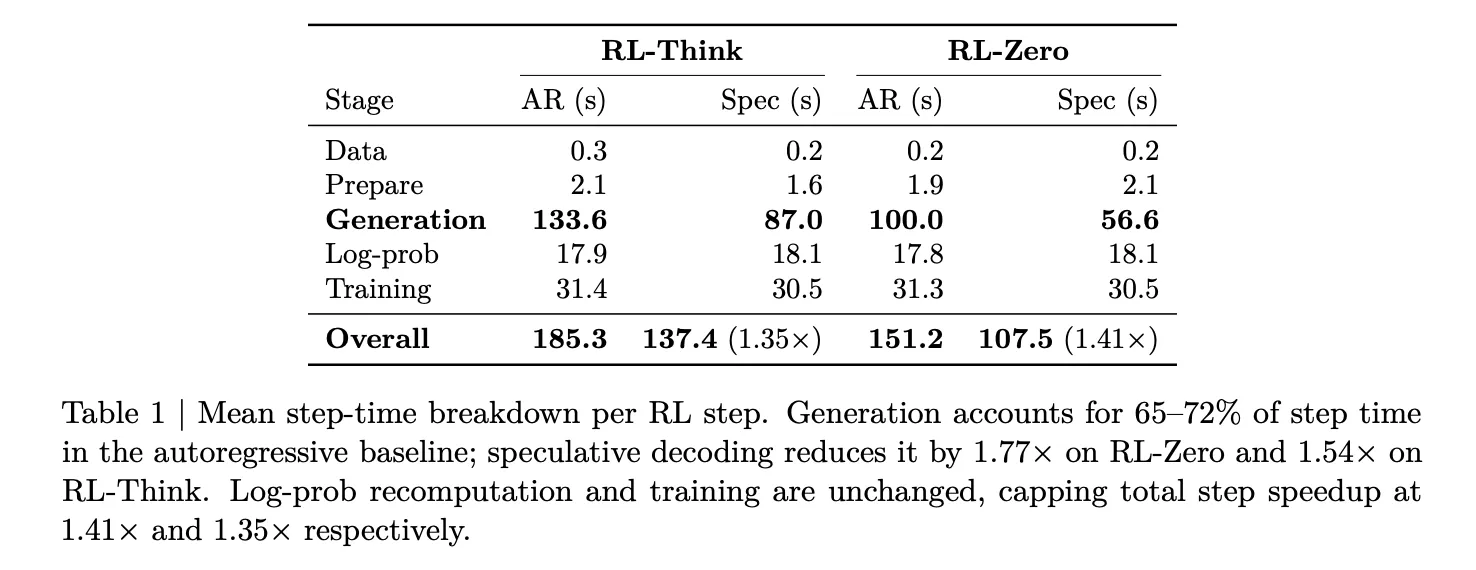

The research team measured this breakdown on Qwen3-8B under two workloads: RL-Think, which continues training a model that can already reason, and RL-Zero, which starts from a base model and learns to reason from scratch. In both cases, rollout generation accounts for 65–72% of total step time. Log-probability recomputation and training together take about 27–33%. This makes generation the stage most worth targeting for acceleration, and the one that sets the ceiling for any rollout-side improvement.
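The ceiling implied by this breakdown can be estimated with Amdahl's law. The sketch below is a back-of-the-envelope model rather than anything taken from the paper or from NeMo RL: the function names are hypothetical, and it assumes the non-generation stages are completely unaffected by a faster generation stage.

```python
def step_speedup(gen_fraction: float, gen_speedup: float) -> float:
    """Amdahl's law: overall step speedup when only generation gets faster."""
    if not 0.0 <= gen_fraction <= 1.0:
        raise ValueError("gen_fraction must be in [0, 1]")
    if gen_speedup <= 0.0:
        raise ValueError("gen_speedup must be positive")
    return 1.0 / ((1.0 - gen_fraction) + gen_fraction / gen_speedup)


def step_speedup_ceiling(gen_fraction: float) -> float:
    """Upper bound on step speedup if generation took no time at all."""
    if not 0.0 <= gen_fraction < 1.0:
        raise ValueError("gen_fraction must be in [0, 1)")
    return 1.0 / (1.0 - gen_fraction)


if __name__ == "__main__":
    # Generation share of step time at both ends of the reported 65-72% range.
    for fraction in (0.65, 0.72):
        ceiling = step_speedup_ceiling(fraction)
        print(f"gen share {fraction:.0%}: step speedup ceiling {ceiling:.2f}x")
```

Under these assumptions, even an infinitely fast generation stage would cap the per-step speedup at roughly 2.9× when generation is 65% of the step and roughly 3.6× when it is 72%.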

What Speculative Decoding Actually Does

Speculative decoding is a technique in which a smaller, faster draft model proposes several tokens at once, and the larger target model (the one you're actually training) verifies them using a rejection sampling procedure. The key property, and the reason it matters in RL, is that the rejection procedure is mathematically guaranteed to produce the same output distribution as if the target model had generated those tokens autoregressively. No distribution mismatch, no off-policy correction required, no change in the training signal.
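The rejection step can be illustrated with the standard speculative sampling rule for a single token: accept the draft's proposal `x` with probability `min(1, p(x)/q(x))`, and on rejection resample from the renormalized residual `max(0, p - q)`. The sketch below is a toy illustration of that textbook rule, not NeMo RL or vLLM code; the function name and the two toy distributions are hypothetical. Real systems verify several drafted tokens in one batched forward pass and stop at the first rejection.

```python
import random


def speculative_sample(p, q, rng):
    """Draw one token distributed exactly as p, using a proposal drawn from q.

    p: target-model probabilities over the vocabulary (sums to 1)
    q: draft-model probabilities over the same vocabulary (sums to 1)
    Returns (token, accepted); accepted is True if the draft's proposal was kept.
    """
    vocab = list(range(len(p)))
    x = rng.choices(vocab, weights=q)[0]       # draft proposes x ~ q
    if rng.random() < min(1.0, p[x] / q[x]):   # keep x with prob min(1, p/q)
        return x, True
    # Rejected: resample from the residual max(0, p - q), renormalized.
    residual = [max(0.0, pi - qi) for pi, qi in zip(p, q)]
    return rng.choices(vocab, weights=residual)[0], False


if __name__ == "__main__":
    rng = random.Random(0)
    p = [0.5, 0.3, 0.2]  # toy target distribution (hypothetical values)
    q = [0.2, 0.2, 0.6]  # toy draft distribution (hypothetical values)
    n = 50_000
    counts = [0] * len(p)
    accepted = 0
    for _ in range(n):
        token, ok = speculative_sample(p, q, rng)
        counts[token] += 1
        accepted += ok
    print("empirical distribution:", [round(c / n, 3) for c in counts])
    print("acceptance rate:", round(accepted / n, 3))
```

With enough samples, the empirical distribution should land close to `p` even though every proposal came from `q`, and the acceptance rate should land close to the sum of `min(p_i, q_i)`, which is 0.6 for these toy values. A draft that matches the target more closely is accepted more often, but the output distribution is the same either way.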

This matters because in RL post-training, the training signal depends on samples drawn from the policy. Methods such as asynchronous execution, off-policy replay, or low-precision rollouts all trade some amount of training fidelity for throughput. Speculative decoding makes no such trade: the output is identical in distribution to what the target model would have produced on its own, just generated faster.

The Challenge of System Integration

Adding a draft model to a serving backend is straightforward. Adding one to an RL training loop is not. Every time the policy updates, the rollout engine must receive the new weights. The draft model must stay aligned with the evolving policy. Rollout log-probabilities, KL penalties, and GRPO policy losses must all be computed against the target policy (the verifier) and not the draft, or training can degrade silently.

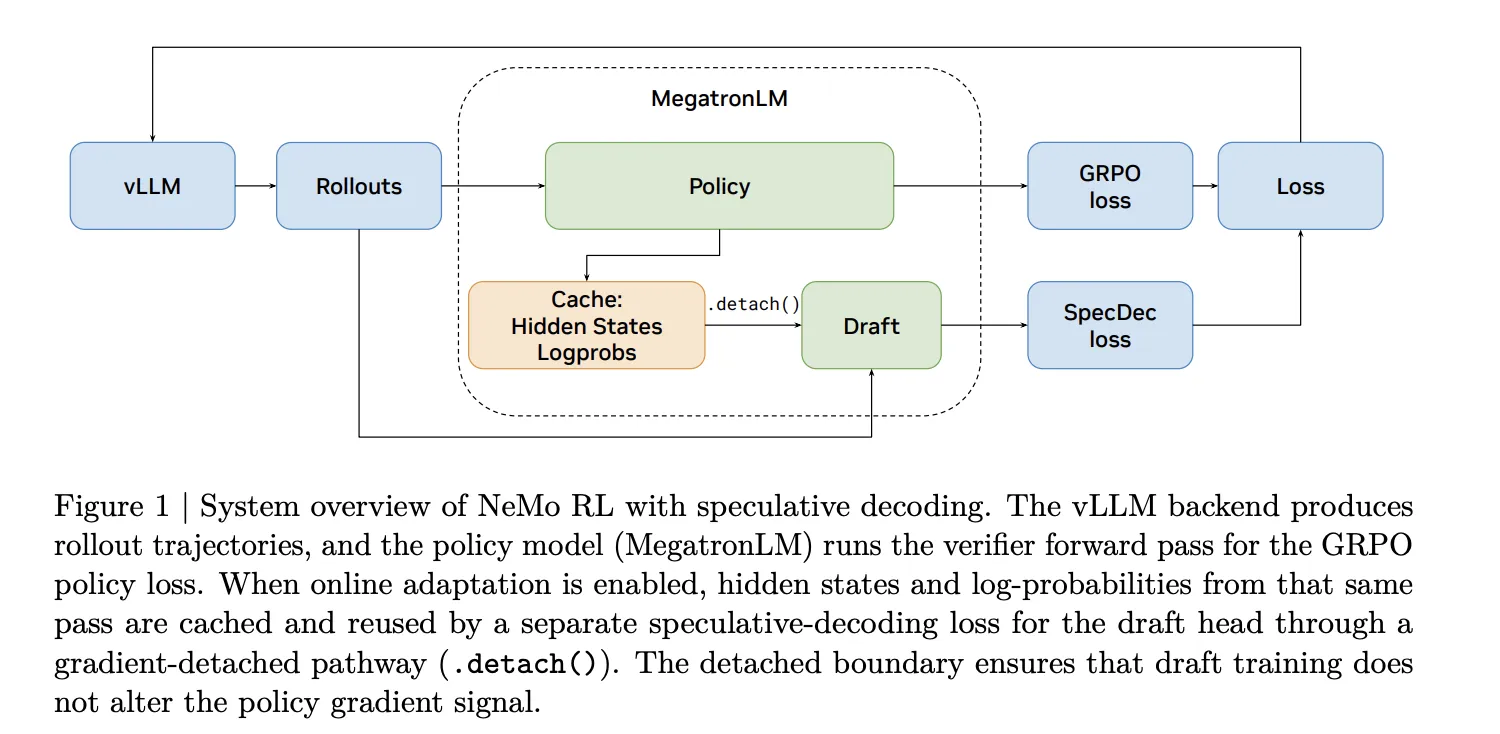

The NVIDIA research team handles this in NeMo RL with a two-path design. The general path uses EAGLE-3, a drafting framework that works with any pre-trained model without requiring native multi-token prediction (MTP) support. A native path is also available for models that ship with built-in MTP heads. When online draft adaptation is enabled, the hidden states and log-probabilities from the MegatronLM verifier forward pass are cached and reused to supervise the draft head through a detached gradient path, so draft training never interferes with the policy gradient signal.

Measured Results at 8B Scale

On 32 GB200 GPUs (8 GB200 NVL72 nodes, 4 GPUs per node), EAGLE-3 reduces generation latency from 100 seconds to 56.6 seconds on RL-Zero, a 1.8× generation speedup. On RL-Think, it drops from 133.6 seconds to 87.0 seconds, a 1.54× speedup. Because log-probability recomputation and training are unchanged, these rollout-side gains translate to an overall step speedup of 1.41× for RL-Zero and 1.35× for RL-Think. Validation accuracy on AIME-2024 evolves equivalently under autoregressive and speculative decoding throughout training, confirming that the lossless guarantee holds in practice.

The research team also evaluates n-gram drafting as a model-free speculative baseline. Despite reaching an acceptance length of 2.47 on RL-Zero and 2.05 on RL-Think, n-gram drafting is slower than the autoregressive baseline in both settings: 0.7× and 0.5× respectively. This is an important finding for practitioners: acceptance length is necessary but not sufficient. If the verification overhead is high enough, speculation makes things worse.
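One way to read the n-gram figures is through a deliberately simple cost model, which is an assumption of this sketch and not an analysis from the paper: if each verification step yields a given number of tokens on average and costs some multiple of a plain decode step, the speedup is roughly the ratio of the two. Inverting that relation gives the per-step cost implied by the reported numbers. The function name is hypothetical, and the reported speedups are rounded, so the results are only indicative.

```python
def implied_step_cost(tokens_per_step: float, speedup: float) -> float:
    """Relative cost of one speculative step, assuming
    speedup = tokens_per_step / step_cost (a simplified model)."""
    if tokens_per_step <= 0.0 or speedup <= 0.0:
        raise ValueError("tokens_per_step and speedup must be positive")
    return tokens_per_step / speedup


if __name__ == "__main__":
    # (tokens per verification step, speedup vs. baseline) as reported above.
    reported = {"RL-Zero": (2.47, 0.7), "RL-Think": (2.05, 0.5)}
    for workload, (tokens, speedup) in reported.items():
        cost = implied_step_cost(tokens, speedup)
        print(f"{workload}: implied step cost {cost:.1f}x a plain decode step")
```

Under this simplified model, each n-gram speculative step would cost roughly 3.5 to 4 times as much as a plain decode step while yielding only about 2 to 2.5 tokens, which is why the net effect is a slowdown.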

Three Configuration Decisions That Determine the Realized Speedup

The research team isolates three operational decisions that practitioners must get right.

Draft initialization matters more than general drafting ability. EAGLE-3 drafts initialized on the in-domain DAPO post-training dataset achieve a generation speedup of 1.77× on RL-Zero, while drafts initialized on the general-purpose UltraChat and Magpie datasets reach only 1.51× at the same draft length. The draft should match the distribution of the rollouts actually encountered during RL, not just a broad chat distribution.

Draft length involves a real trade-off. At draft length k=3, RL-Zero achieves a 1.77× speedup and RL-Think achieves 1.53×. Increasing to k=5 raises the acceptance length but lowers the speedup to 1.44× for RL-Zero and 0.84× for RL-Think; the latter is already slower than autoregressive decoding. At k=7, RL-Zero drops to 1.21× and RL-Think to 0.71×. The difference between the workloads matters: RL-Zero rollouts come from a base model that starts with short outputs, which makes them easier for the draft to predict even at high k. RL-Think's fully developed reasoning traces are harder to speculate on, so a longer draft quickly erases the advantage. The extra speculation work at each step can wipe out the benefit of higher acceptance altogether, especially in generation-heavy regimes.
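The trade-off can be sketched with a toy model. It is not fitted to the paper's measurements, and the acceptance probability and per-token cost below are hypothetical. It assumes each drafted token is accepted independently with probability `alpha`, so a step with draft length `k` emits `(1 - alpha^(k+1)) / (1 - alpha)` tokens in expectation, and that every drafted token adds a fixed relative cost for drafting and verification.

```python
def expected_tokens_per_step(alpha: float, k: int) -> float:
    """Expected tokens emitted per verification step with draft length k.

    Assumes each drafted token is accepted independently with probability
    alpha, and that one extra token (correction or bonus) is always emitted.
    """
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be in [0, 1]")
    if k < 0:
        raise ValueError("k must be non-negative")
    if alpha == 1.0:
        return float(k + 1)
    return (1.0 - alpha ** (k + 1)) / (1.0 - alpha)


def modeled_speedup(alpha: float, k: int, cost_per_draft_token: float) -> float:
    """Toy speedup: tokens per step over a relative step cost of 1 + k * cost."""
    step_cost = 1.0 + k * cost_per_draft_token
    return expected_tokens_per_step(alpha, k) / step_cost


if __name__ == "__main__":
    alpha, cost = 0.6, 0.2  # hypothetical values, chosen only for illustration
    for k in (3, 5, 7):
        tokens = expected_tokens_per_step(alpha, k)
        speedup = modeled_speedup(alpha, k, cost)
        print(f"k={k}: {tokens:.2f} tokens/step, modeled speedup {speedup:.2f}x")
```

With these hypothetical values, tokens per step keep rising as `k` grows, but the modeled speedup falls, because the geometric gain in accepted tokens saturates while the per-step cost keeps growing linearly. That is the same qualitative pattern as the measured k=3, 5, 7 results.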

Online draft adaptation, meaning updating the draft during RL using rollouts generated by the current policy, helps most when the draft is weakly initialized. For DAPO-initialized drafts, the offline and online configurations perform almost identically (1.77× vs. 1.78× on RL-Zero). With an UltraChat-initialized draft, online updating improves the speedup from 1.51× to 1.63× on RL-Zero.

Interaction with asynchronous execution was also tested directly at 8B scale, not just in simulation. The research team ran RL-Think at policy lag 1 in a 16-node non-colocated configuration, with 12 nodes dedicated to generation and 4 to training. In asynchronous mode, most rollout generation is already hidden behind log-probability recomputation and policy updates, so the relevant quantity is the exposed generation time that remains on the critical path. Speculative decoding reduces that exposed generation time from 10.4 seconds to 0.6 seconds per step and reduces the effective step time from 75.0 seconds to 60.5 seconds (1.24×). The benefit is smaller than in synchronous RL, which is expected since asynchronous overlap already hides most of the rollout cost, but it confirms that the two methods are complementary rather than redundant.

Projected Benefits at 235B Scale

Using a GPU performance simulator calibrated to device-level compute, memory, and networking characteristics, the research team projected the benefits of speculative decoding at larger scales. For Qwen3-235B-A22B running synchronous RL on 512 GB200 GPUs, a draft length of k=3 with an acceptance length of 3 tokens yields a 2.72× rollout speedup and a 1.70× end-to-end speedup.

In the most favorable simulated setting, Qwen3-235B-A22B on 2048 GB200 GPUs with asynchronous RL at policy lag 2, the rollout speedup reaches about 3.5×, which translates to a 2.5× end-to-end speedup. Speculative decoding and asynchronous execution are described as complementary: speculation reduces the cost of each rollout, while asynchronous overlap hides the remaining generation time behind training and log-probability computation.

Key Takeaways

- Rollout generation is the major bottleneck in RL post-training, comprising 65–72% of total step time in synchronous RL workloads, which makes it the phase where acceleration has the largest effect on end-to-end training speed.

- Speculative decoding with EAGLE-3 delivers lossless rollout acceleration, achieving a 1.8× generation speedup at 8B scale (1.41× overall step speedup) without changing the target model's output distribution, unlike asynchronous execution, off-policy replay, or low-precision rollouts, all of which trade away some training fidelity.

- Draft initialization quality matters more than draft length: in-domain drafts (initialized on DAPO) outperform general chat-domain drafts by a significant margin, and a longer fixed draft length (k≥5) backfires on reasoning-heavy workloads, making k=3 a reliable default.

- Simulator projections show larger gains at scale, reaching ~3.5× rollout speedup and a projected 2.5× end-to-end training speedup at 235B scale on 2048 GB200 GPUs, and the method is already available in NeMo RL v0.6.0 under Apache 2.0.

Check out the full paper and the NeMo RL repo.