Moonshot AI Unveils Kimi K2 Thinking: An Impressive Thinking Model That Can Make 200-300 Sequential Tool Calls Without Human Intervention

How do we design AI systems that can plan, reason, and act over long sequences of decisions without constant human supervision? Moonshot AI has released Kimi K2 Thinking, an open source thinking agent model that exposes the full reasoning stream of the Kimi K2 Mixture of Experts architecture. It targets workloads that require deep reasoning, long-horizon tool use, and stable agent behavior across many steps.

What is Kimi K2 Thinking?

Kimi K2 Thinking is described as the latest, most capable version of Moonshot's open source thinking model. It is built as a thinking agent that reasons step by step and invokes tools dynamically during inference. The model is designed to interleave chain of thought with function calls, so it can read, think, call a tool, and think again over hundreds of steps.
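The read, think, call a tool, think again cycle can be pictured as a simple loop. The following is a minimal sketch, not Moonshot's implementation: `run_agent`, `fake_model`, `calc`, and the message dictionary layout are all hypothetical names invented for illustration, and the "model" is a toy stand-in that requests one tool call and then answers.

```python
from typing import Callable, Dict, List


def run_agent(model_step: Callable[[List[dict]], dict],
              tools: Dict[str, Callable[[str], str]],
              task: str,
              max_steps: int = 300) -> str:
    """Alternate between model reasoning and tool calls until a final answer."""
    messages = [{"role": "user", "content": task}]
    for _ in range(max_steps):
        # The model reasons over the history, then either requests a tool or answers.
        reply = model_step(messages)
        messages.append({"role": "assistant", **reply})
        if reply.get("tool") is None:
            return reply["content"]  # no tool requested: treat content as final
        # Run the requested tool and feed its output back into the history.
        result = tools[reply["tool"]](reply["arguments"])
        messages.append({"role": "tool", "name": reply["tool"], "content": result})
    return "step limit reached"


def fake_model(messages: List[dict]) -> dict:
    """Toy stand-in for a model (hypothetical): one tool call, then an answer."""
    tool_results = [m for m in messages if m["role"] == "tool"]
    if not tool_results:
        return {"content": "I should compute this.", "tool": "calc", "arguments": "6*7"}
    return {"content": f"The answer is {tool_results[-1]['content']}.", "tool": None}


def calc(expr: str) -> str:
    """Toy tool (hypothetical): multiply two integers written as 'a*b'."""
    a, b = expr.split("*")
    return str(int(a) * int(b))


print(run_agent(fake_model, {"calc": calc}, "What is 6 times 7?"))
# prints "The answer is 42."
```

The `max_steps` cap plays the same role as the step limits used in evaluation: the loop stops even if the model never produces a final answer.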

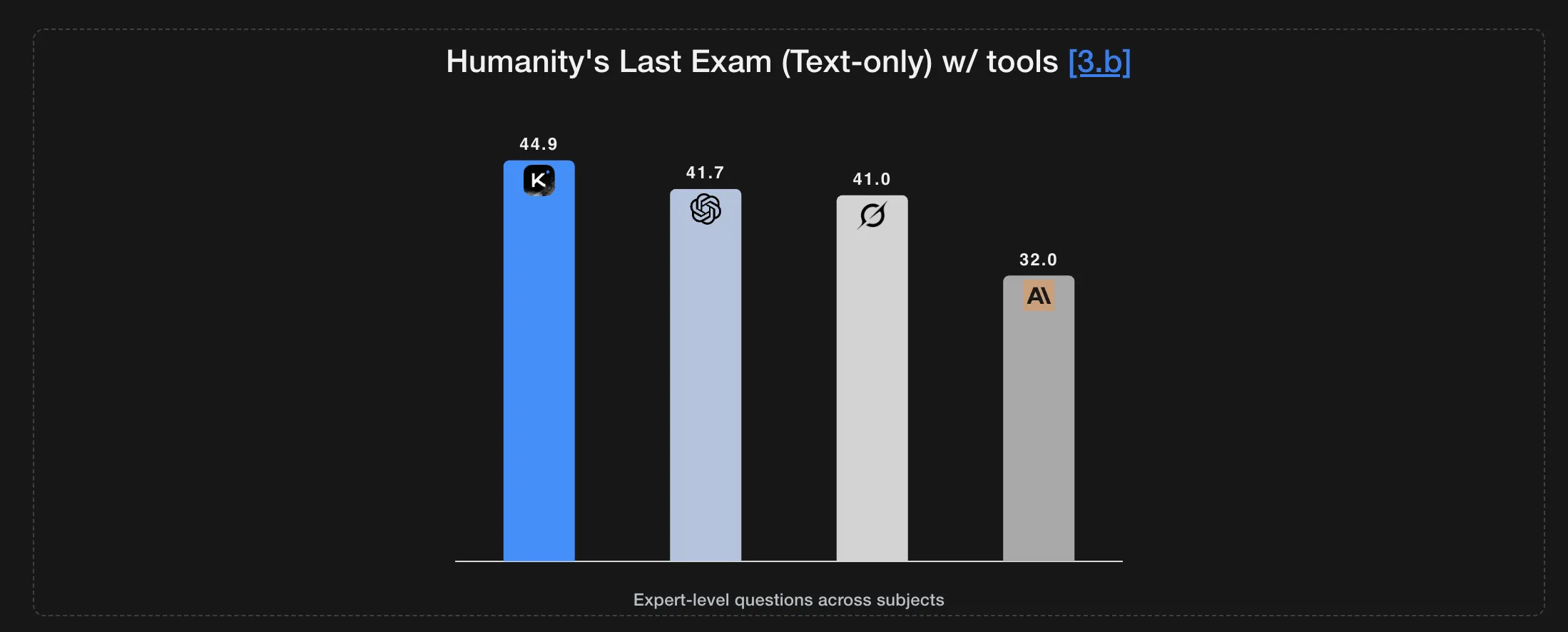

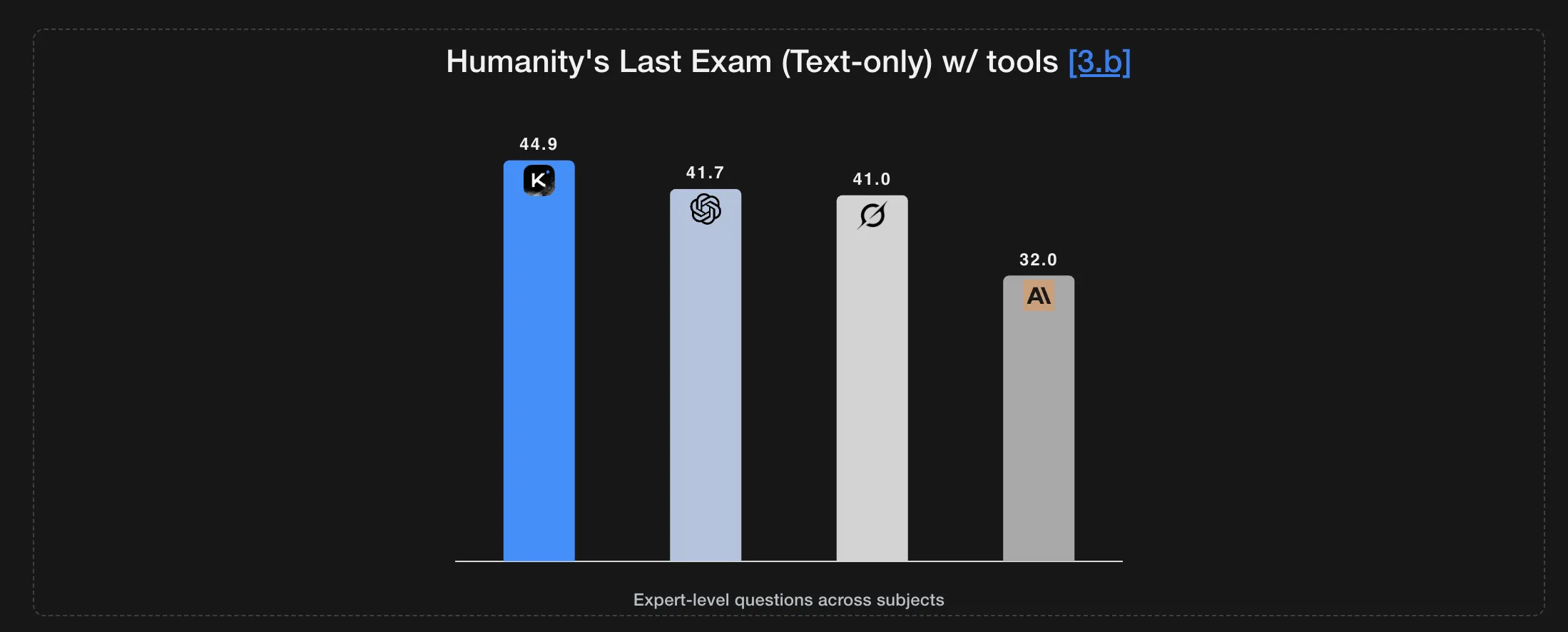

The model sets a new state of the art on Humanity's Last Exam (HLE) and BrowseComp, while maintaining coherent behavior across roughly 200 to 300 sequential tool calls without human interference.

At the same time, K2 Thinking is released as an open-weight model with a 256K-token context window and native INT4 inference, which reduces latency and GPU memory usage while maintaining benchmark performance.

K2 Thinking is already live on kimi.com in chat mode and is available through the Moonshot platform API, with a dedicated agentic mode intended to expose the full tool-use behavior.

Architecture, MoE design, and context length

Kimi K2 Thinking inherits the Kimi K2 Mixture of Experts (MoE) design. The model uses an MoE architecture with 1T total parameters and 32B activated parameters per token. It has 61 layers including 1 dense layer, 384 experts with 8 experts selected per token, 1 shared expert, and an attention hidden dimension of 7168.

The vocabulary size is 160K tokens and the context length is 256K. The attention mechanism is Multi-head Latent Attention (MLA), and the activation function is SwiGLU.
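To make the sparsity concrete, here is a small sketch of top-k expert routing using the counts quoted above (384 routed experts, 8 selected per token). It is illustrative only: the softmax gate over the selected experts and the random router logits are assumptions, not a description of Kimi K2's actual router, and `route` is a hypothetical helper.

```python
import math
import random

NUM_EXPERTS = 384  # routed experts, as quoted in the article
TOP_K = 8          # experts selected per token, as quoted in the article


def route(logits, k=TOP_K):
    """Pick the k highest-scoring experts and softmax-normalize their gate weights.

    Assumption: softmax over the selected logits; the real gating function may differ.
    """
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    peak = max(logits[i] for i in top)
    exps = [math.exp(logits[i] - peak) for i in top]  # subtract peak for stability
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


random.seed(0)
router_logits = [random.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]  # made-up scores
selected = route(router_logits)

print(f"experts chosen for this token: {len(selected)}")
print(f"share of routed experts active: {TOP_K / NUM_EXPERTS:.1%}")
print(f"share of parameters active (32B of 1T): {32e9 / 1e12:.1%}")
```

Only the selected experts (plus the shared expert) run for a given token, which is how a 1T-parameter model can activate about 32B parameters per token.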

Test-time scaling and long-horizon thinking

Kimi K2 Thinking is explicitly optimized for test-time scaling. The model is trained to increase its reasoning length and tool-call depth when faced with harder tasks, rather than relying on a fixed, short chain of thought.

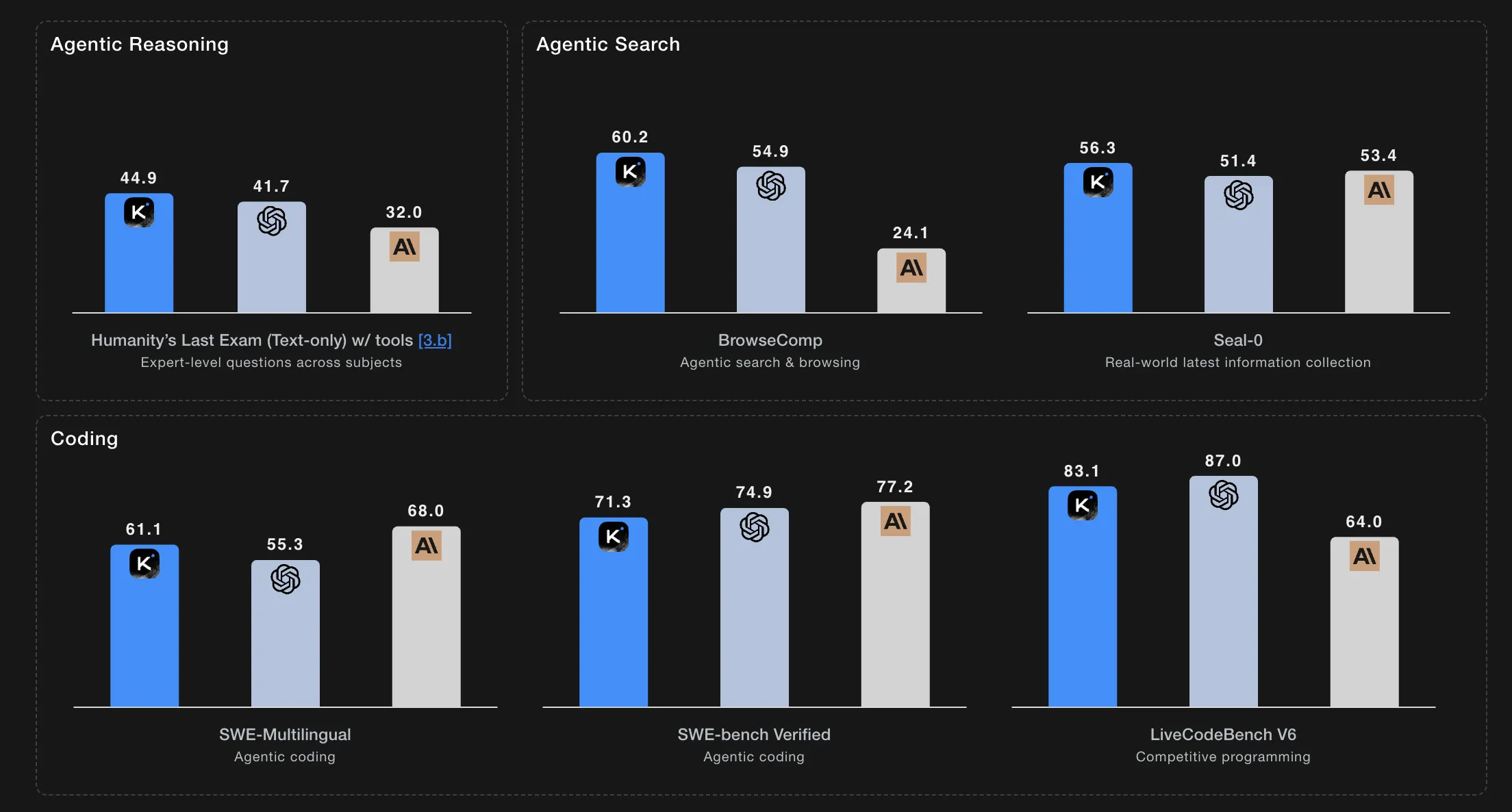

On Humanity's Last Exam in the no-tools setting, K2 Thinking scores 23.9. With tools, the score rises to 44.9, and in the heavy setting it reaches 51.0. On AIME25 with Python, it reports 99.1, and on HMMT25 with Python it reports 95.1. On IMO AnswerBench it scores 78.6, and on GPQA it scores 84.5.

The testing protocol caps thinking-token budgets at 96K for HLE, AIME25, HMMT25, and GPQA. It uses 128K thinking tokens for IMO AnswerBench, LiveCodeBench, and OJ Bench, and 32K completion tokens for Longform Writing. For HLE, the maximum step limit is 120 with a reasoning budget of 48K per step. For agentic search tasks, the limit is 300 steps with a reasoning budget of 24K per step.
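For anyone trying to reproduce these numbers, it can help to write the limits down as configuration. The sketch below simply encodes the budgets quoted above and multiplies out the per-step caps. The names (`StepBudget`, `THINKING_BUDGET`, `STEP_BUDGET`) are hypothetical, and treating "K" as 1,000 tokens is an assumption, since the article does not say whether it means 1,000 or 1,024.

```python
from dataclasses import dataclass

K = 1000  # assumption: "K" = 1,000 tokens (the source does not specify)


@dataclass(frozen=True)
class StepBudget:
    max_steps: int
    tokens_per_step: int

    def max_reasoning_tokens(self) -> int:
        """Upper bound on reasoning tokens if every step uses its full budget."""
        return self.max_steps * self.tokens_per_step


# Thinking-token caps per benchmark, as quoted in the article.
THINKING_BUDGET = {
    "HLE": 96 * K,
    "AIME25": 96 * K,
    "HMMT25": 96 * K,
    "GPQA": 96 * K,
    "IMO AnswerBench": 128 * K,
    "LiveCodeBench": 128 * K,
    "OJ Bench": 128 * K,
}

# Step limits for tool-using runs, as quoted in the article.
STEP_BUDGET = {
    "HLE": StepBudget(max_steps=120, tokens_per_step=48 * K),
    "agentic search": StepBudget(max_steps=300, tokens_per_step=24 * K),
}

for name, budget in STEP_BUDGET.items():
    print(name, budget.max_reasoning_tokens())
# prints "HLE 5760000" then "agentic search 7200000"
```

These products are worst-case ceilings under the stated assumption, not measured token usage.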

Benchmarks in agentic search and coding

On agentic search tasks with tools, K2 Thinking reports 60.2 on BrowseComp, 66.3 on BrowseComp-ZH, 56.3 on Seal-0, 47.4 on FinSearchComp-T3, and 87.0 on Frames.

On general knowledge benchmarks, it reports 84.6 on MMLU-Pro, 94.4 on MMLU-Redux, 73.8 on Longform Writing, and 58.0 on HealthBench.

For coding, K2 Thinking reaches 71.3 on SWE-bench Verified with tools, 41.1 on LiveCodeBenchV6, 48.7 on OJ Bench in the C++ setting, and 47.1 on Terminal-Bench with simulated tools.

The Moonshot team also describes a heavy mode that runs eight trajectories in parallel, then aggregates them to produce the final answer. This is used in some reasoning benchmarks to extract more accuracy from the same base model.
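The general pattern of running several trajectories and combining them can be sketched as follows. This is an illustration under stated assumptions: the article does not specify how the eight trajectories are combined, so a simple majority vote is swapped in here as a stand-in, and `heavy_mode` and `toy_solver` are hypothetical names.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List


def heavy_mode(solve: Callable[[str, int], str], task: str, n: int = 8) -> str:
    """Run n independent trajectories in parallel and combine their answers.

    Assumption: majority vote is used as the combiner; the real aggregation
    step is not described in the article and may work differently.
    """
    with ThreadPoolExecutor(max_workers=n) as pool:
        answers: List[str] = list(pool.map(lambda seed: solve(task, seed), range(n)))
    return Counter(answers).most_common(1)[0][0]


def toy_solver(task: str, seed: int) -> str:
    """Toy trajectory (hypothetical): wrong on seeds 0 and 4, right otherwise."""
    return "41" if seed % 4 == 0 else "42"


print(heavy_mode(toy_solver, "What is 6 times 7?"))
# prints "42" (six of the eight toy trajectories agree)
```

The trade-off is cost: eight trajectories use roughly eight times the inference compute of a single run on the same base model.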

Native INT4 quantization and deployment

K2 Thinking is trained as a native INT4 model. The research team applied Quantization-Aware Training (QAT) during the post-training stage and used INT4 weight-only quantization on the MoE components. This supports INT4 inference with roughly a 2x generation speed improvement in low-latency mode while maintaining state-of-the-art performance. All reported benchmark scores are obtained under INT4 precision.
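To show what INT4 weight-only storage means numerically, here is a minimal sketch of symmetric per-group quantization. It is not Moonshot's scheme: it applies plain round-to-nearest after the fact rather than Quantization-Aware Training, and the group size of 32, the symmetric range, and the function names are all assumptions chosen for illustration.

```python
import random


def quantize_int4(weights, group_size=32):
    """Symmetric per-group quantization to integers in [-8, 7].

    Assumption: one floating-point scale per group of `group_size` weights.
    """
    quantized, scales = [], []
    for start in range(0, len(weights), group_size):
        group = weights[start:start + group_size]
        max_abs = max(abs(w) for w in group)
        scale = max_abs / 7 if max_abs > 0 else 1.0  # map the largest weight to +/-7
        scales.append(scale)
        quantized.extend(max(-8, min(7, round(w / scale))) for w in group)
    return quantized, scales


def dequantize_int4(quantized, scales, group_size=32):
    """Recover approximate weights from 4-bit integers and per-group scales."""
    return [q * scales[i // group_size] for i, q in enumerate(quantized)]


random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(128)]  # made-up weights
q, scales = quantize_int4(weights)
restored = dequantize_int4(q, scales)

max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(f"max round-trip error: {max_error:.6f}")
print(f"half of the largest scale: {max(scales) / 2:.6f}")
```

Each weight is stored in 4 bits plus a shared scale per group, and the round-trip error is bounded by half a quantization step. QAT lets the model adapt to this rounding during training instead of absorbing it only at export time.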

Checkpoints are saved in the compressed-tensors format and can be unpacked into higher-precision formats such as FP8 or BF16 using the official compressed-tensors tools. Recommended inference engines include vLLM, SGLang, and KTransformers.

Key takeaways

- Kimi K2 Thinking is an open-weight thinking agent that extends the Kimi K2 Mixture of Experts architecture with explicit long-horizon reasoning and tool use, not just short chat-style answers.

- The model uses a trillion-parameter MoE design with tens of billions of active parameters per token, and is trained as a native INT4 model with Quantization-Aware Training for faster inference while keeping benchmark performance stable.

- K2 Thinking is optimized for test-time scaling: it can carry out hundreds of sequential tool calls in a single task and is evaluated under large thinking-token budgets and strict step caps, which matters when trying to reproduce its reasoning and agentic results.

- On public benchmarks, it leads or is competitive on reasoning, agentic search, and coding tasks such as HLE with tools, BrowseComp, and SWE-bench Verified with tools.

Kimi K2 Thinking is a strong signal that test-time scaling has become a first-class design target for open source reasoning models. Moonshot AI is not only releasing a 1T-parameter Mixture of Experts system with 32B active parameters and a 256K context window; it is doing so with native INT4 quantization, Quantization-Aware Training, and tool orchestration that runs for hundreds of steps in production-like settings. Overall, Kimi K2 Thinking shows that open-weight reasoning agents with long-horizon planning and tool use are becoming practical infrastructure, not just research demos.


Asif Razzaq is the CEO of Marktechpost Media Inc. As a visionary entrepreneur and engineer, Asif is committed to harnessing the potential of artificial intelligence for social good. His most recent endeavor is the launch of an artificial intelligence media platform, Marktechpost, which stands out for its in-depth coverage of machine learning and deep learning news that is both technically sound and easily understandable by a wide audience. The platform draws more than two million monthly views, which shows its popularity among readers.
