Allen Institute for AI (Ai2) Launches Olmo 3: Open Source 7B and 32B LLM Family Built on Dolma 3 and Dolci Stack

The Allen Institute for AI (Ai2) is releasing Olmo 3 as a fully open model family that exposes the entire 'model flow', from raw data and code to intermediate checkpoints and deployment-ready models.

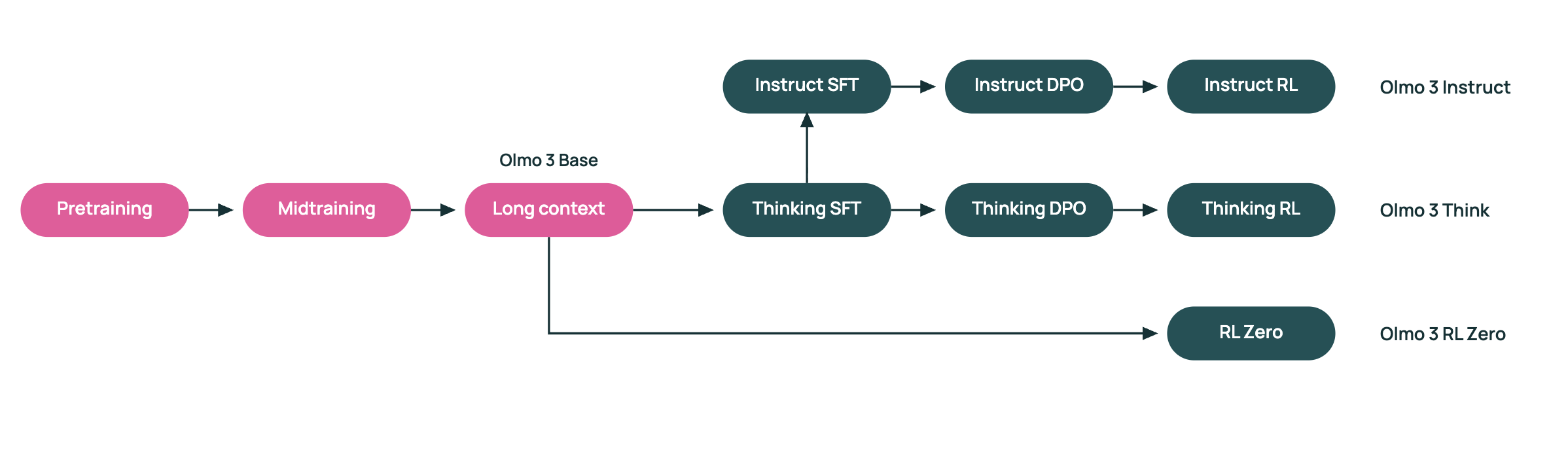

Olmo 3 is a family of dense transformer models at 7B and 32B parameters. The family includes Olmo 3-Base, Olmo 3-Think, Olmo 3-Instruct, and Olmo 3-RL Zero. Both the 7B and 32B variants share a context length of 65,536 tokens and use the same staged training recipe.

Dolma 3 Data Suite

At the core of the training pipeline is Dolma 3, a new data collection designed for Olmo 3. Dolma 3 consists of Dolma 3 Mix, Dolma 3 Dolmino Mix, and Dolma 3 Longmino Mix. Dolma 3 Mix is a 5.9T-token pre-training mix of web text, scientific PDFs, code repositories, and other natural data. The Dolmino and Longmino subsets are built from filtered, higher-quality slices of this pool.

Dolma 3 Mix supports the first phase of Olmo 3-Base pre-training. The Ai2 research team then applies Dolma 3 Dolmino Mix, a 100B-token mid-training set that emphasizes math, code, instruction following, reading comprehension, and reasoning tasks. Finally, Dolma 3 Longmino Mix adds 50B tokens for the 7B model and 100B tokens for the 32B model, focusing on long documents and scientific PDFs processed with the olmOCR pipeline. This staged curriculum is what pushes the context limit to 65,536 tokens while maintaining stability and quality.
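The staged curriculum can be expressed as a small configuration. The sketch below records the per-stage token budgets stated above and sums them for each model size. The `Stage` class and `total_tokens` helper are hypothetical illustrations, not part of any Ai2 codebase. Treating the full 5.9T size of Dolma 3 Mix as the pre-training budget for both sizes is an assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str          # Dolma 3 subset used in this stage
    purpose: str       # what the stage emphasizes, per the article
    tokens_7b: float   # token budget for the 7B model
    tokens_32b: float  # token budget for the 32B model


# Hypothetical config: budgets are the figures quoted in the article, and
# using the 5.9T Dolma 3 Mix size as the pre-training budget is an assumption.
CURRICULUM = [
    Stage("Dolma 3 Mix", "pre-training", 5.9e12, 5.9e12),
    Stage("Dolma 3 Dolmino Mix", "mid-training", 100e9, 100e9),
    Stage("Dolma 3 Longmino Mix", "long-context extension", 50e9, 100e9),
]


def total_tokens(size: str) -> float:
    """Sum the per-stage token budgets for a model size ("7b" or "32b")."""
    attr = f"tokens_{size}"
    return sum(getattr(stage, attr) for stage in CURRICULUM)


if __name__ == "__main__":
    for size in ("7b", "32b"):
        print(f"{size}: {total_tokens(size) / 1e12:.2f}T tokens over {len(CURRICULUM)} stages")
```

Under these assumptions, the later stages add only a few percent on top of pre-training. Their value comes from what the data emphasizes, not from its volume.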

Large Scale Training on H100 Clusters

Olmo 3-Base 7B trains on Dolma 3 Mix using 1,024 H100 GPUs and reaches roughly 7,700 tokens per device per second. Later stages use 128 H100s for Dolmino mid-training and 256 H100s for long-context extension.
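The reported figures support a quick back-of-envelope calculation. The sketch below multiplies per-device throughput by device count and then estimates an idealized wall-clock time for streaming the 5.9T-token Dolma 3 Mix. The constant rate, the absence of restarts or evaluation pauses, and the assumption that the whole mix is consumed are simplifications, not reported facts.

```python
# Back-of-envelope throughput for the Olmo 3-Base 7B pre-training stage.
# Inputs are the article's figures; the duration is an idealization that
# ignores restarts, evaluation pauses, and throughput variation.
NUM_GPUS = 1_024
TOKENS_PER_GPU_PER_SEC = 7_700
PRETRAIN_TOKENS = 5.9e12  # size of Dolma 3 Mix; assumed fully consumed


def aggregate_throughput(num_gpus: int, per_gpu: float) -> float:
    """Cluster-wide tokens processed per second."""
    return num_gpus * per_gpu


def idealized_days(total_tokens: float, tokens_per_sec: float) -> float:
    """Days needed to stream total_tokens at a constant rate."""
    return total_tokens / tokens_per_sec / 86_400


if __name__ == "__main__":
    rate = aggregate_throughput(NUM_GPUS, TOKENS_PER_GPU_PER_SEC)
    print(f"aggregate: {rate:,.0f} tokens/s")  # about 7.9M tokens/s
    print(f"idealized duration: {idealized_days(PRETRAIN_TOKENS, rate):.1f} days")
```

At about 7.9M tokens per second across the cluster, the idealized figure comes to a little under nine days. Real runs take longer once overheads are included.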

Base Model Performance Against Other Open Families

Across standard benchmarks, Olmo 3-Base 32B is positioned as a leading fully open base model. The Ai2 research team reports that it is competitive with prominent open-weight families such as Qwen 2.5 and Gemma 3 at similar sizes. Across a broad suite of tasks, Olmo 3-Base 32B ranks near or above these models while keeping its full data and training configurations open for inspection and reuse.

Reasoning-Focused Olmo 3 Think

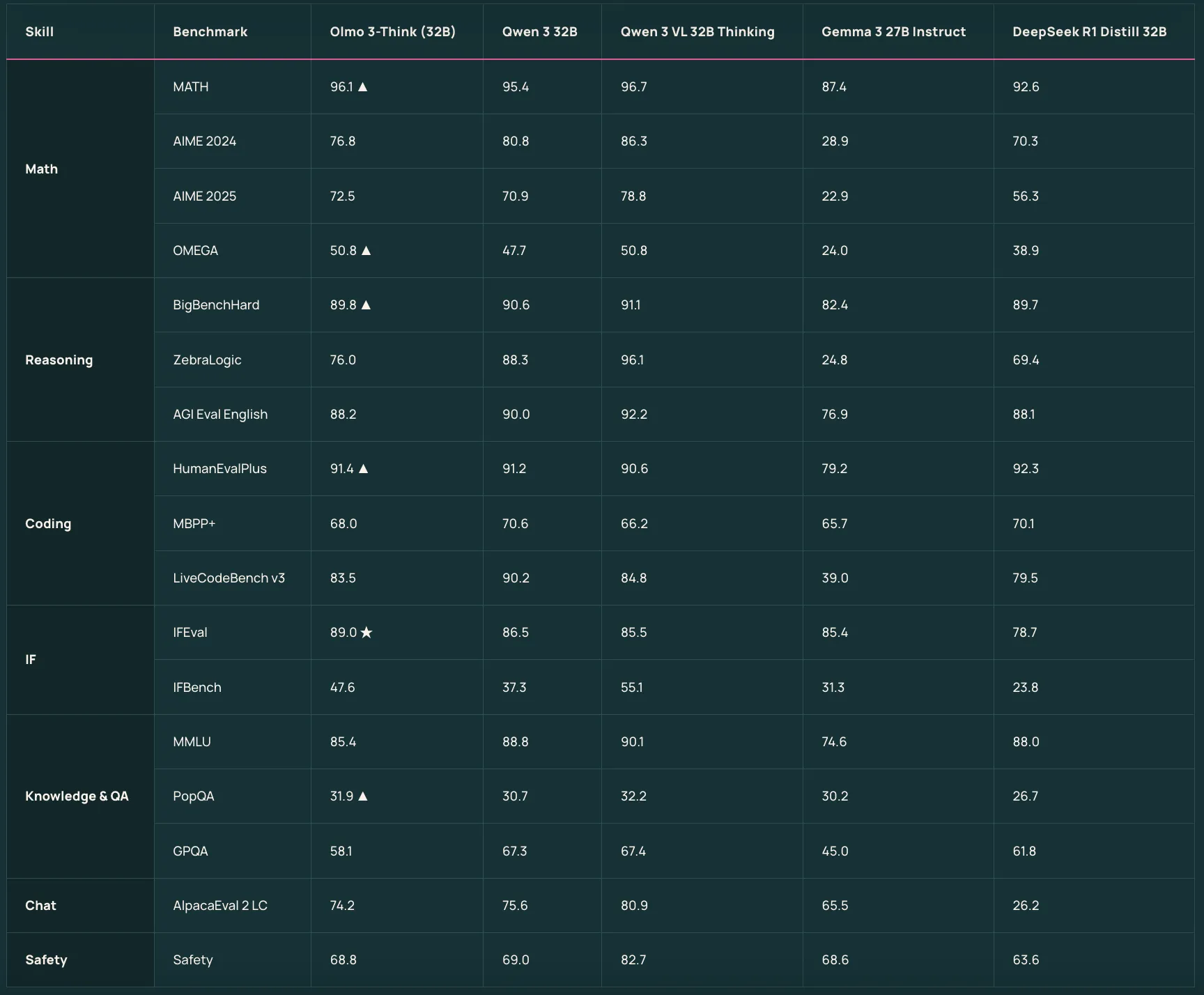

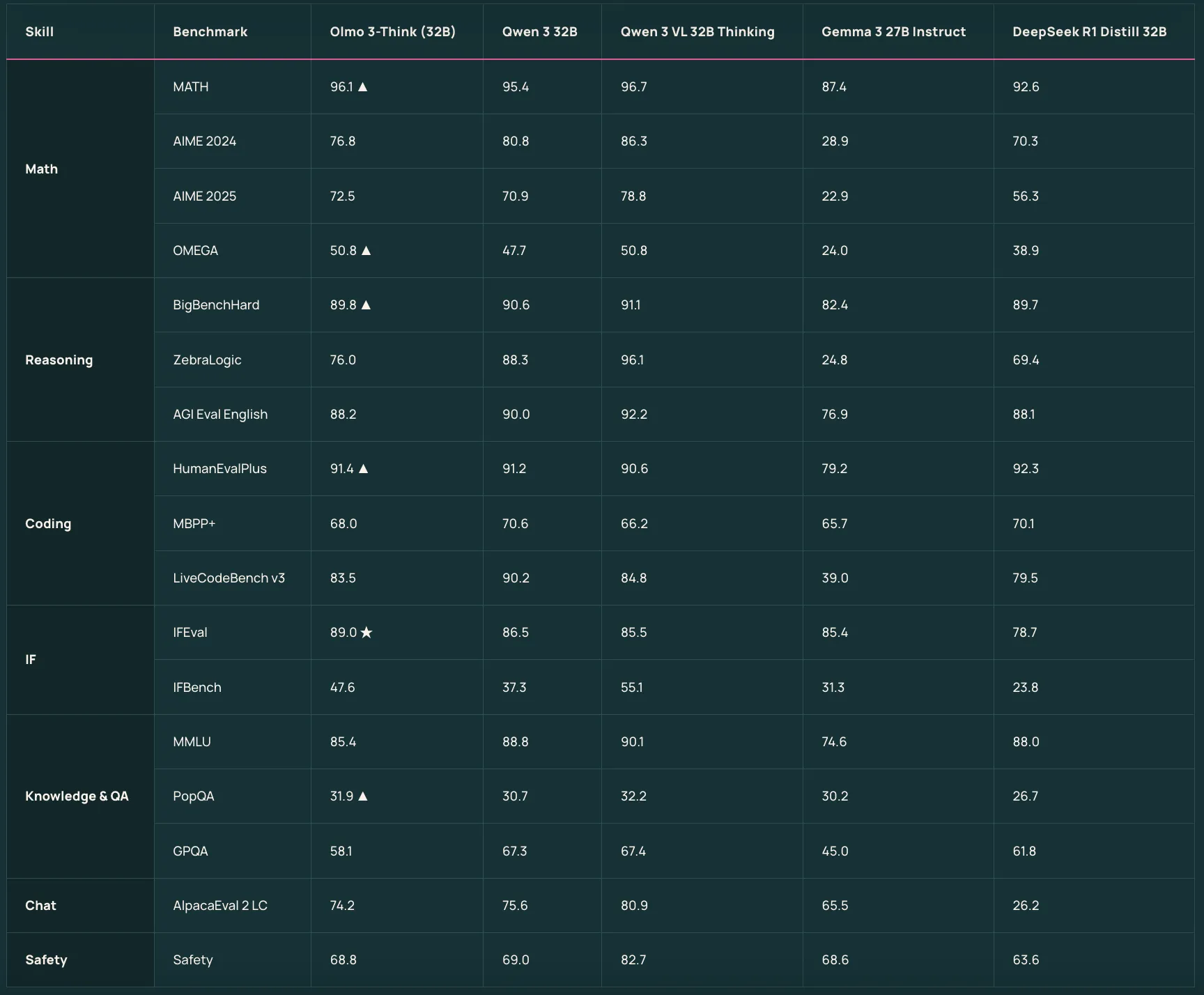

Olmo 3-Think 7B and Olmo 3-Think 32B sit on top of the base models as reasoning-focused variants. They use a three-stage post-training recipe: supervised fine-tuning, Direct Preference Optimization (DPO), and reinforcement learning with verifiable rewards (RLVR) within the OlmoRL framework. Olmo 3-Think 32B is described as the strongest fully open reasoning model. It narrows the gap to Qwen 3 32B thinking models while being trained on roughly 6x fewer tokens.
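To make the middle (DPO) stage concrete, the sketch below computes the standard DPO loss for a single preference pair. It operates on summed response log-probabilities from the trainable policy and a frozen reference model. The article does not describe Ai2's exact objective or hyperparameters, so the formulation here is the generic one and the `beta=0.1` default is a hypothetical choice.

```python
import math


def dpo_loss(policy_chosen_logp: float, policy_rejected_logp: float,
             ref_chosen_logp: float, ref_rejected_logp: float,
             beta: float = 0.1) -> float:
    """Generic DPO loss for one preference pair (beta is a hypothetical default).

    Each argument is the summed log-probability of a full response under
    either the trainable policy or the frozen reference model.
    """
    # Implicit reward margin: how much more the policy favors the chosen
    # response over the rejected one, relative to the reference model.
    margin = ((policy_chosen_logp - ref_chosen_logp)
              - (policy_rejected_logp - ref_rejected_logp))
    z = beta * margin
    # -log(sigmoid(z)) == softplus(-z); the branch keeps exp() from overflowing.
    if z >= 0:
        return math.log1p(math.exp(-z))
    return -z + math.log1p(math.exp(z))


if __name__ == "__main__":
    # Policy identical to the reference: margin is 0, so the loss is log(2).
    print(dpo_loss(-10.0, -10.0, -10.0, -10.0))
    # Policy already prefers the chosen answer more than the reference does.
    print(dpo_loss(-5.0, -15.0, -10.0, -10.0))
```

The loss starts at log 2 when the policy matches the reference. It falls as the policy shifts probability toward preferred responses, and no separate reward model is needed.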

Olmo 3 Instruct for Chat and Tool Use

Olmo 3-Instruct 7B is tuned for fast instruction following, multi-turn chat, and tool use. It starts from Olmo 3-Base 7B and applies a separate Dolci Instruct data and training pipeline that includes supervised fine-tuning, DPO, and RLVR for conversational and function-calling workloads. The Ai2 research team reports that Olmo 3-Instruct matches or outperforms open-weight competitors such as Qwen 2.5, Gemma 3, and Llama 3.1. It is also competitive with Qwen 3 families at similar scale on instruction following and reasoning.
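RLVR replaces a learned reward model with programmatic checks that return a verifiable score. The sketch below shows two toy verifiers of that kind: one compares the last number in a math answer against a reference, and one checks a word-limit instruction. The article does not describe the Dolci verifiers. These functions are hypothetical illustrations, and the number parsing is deliberately naive (for example, it does not handle thousands separators).

```python
import re


def math_reward(completion: str, gold_answer: str) -> float:
    """Hypothetical verifier: 1.0 if the last number in the completion
    equals the reference answer, else 0.0 (naive parsing)."""
    numbers = re.findall(r"-?\d+(?:\.\d+)?", completion)
    if not numbers:
        return 0.0
    return 1.0 if float(numbers[-1]) == float(gold_answer) else 0.0


def max_words_reward(completion: str, max_words: int) -> float:
    """Hypothetical verifier: 1.0 if the response respects a word limit."""
    return 1.0 if len(completion.split()) <= max_words else 0.0


if __name__ == "__main__":
    print(math_reward("Adding them gives 42.", "42"))
    print(max_words_reward("Short and sweet.", max_words=5))
```

Because each reward is a deterministic check, the RL signal is easy to audit. The trade-off is that the verifiers must be robust enough that the policy cannot satisfy them with degenerate outputs.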

RL Zero for Pure RL Research

Olmo 3-RL Zero 7B is designed for researchers who care about reinforcement learning on language models but need a clean separation between pre-training data and RL data. It is built as a fully open RL pathway on top of Olmo 3-Base and uses Dolci RL Zero datasets that are decontaminated with respect to Dolma 3.
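The article states that the RL Zero data is decontaminated against Dolma 3 but does not describe the method. One common approach is word n-gram overlap filtering. The sketch below builds an n-gram index from pre-training documents and drops any RL prompt that shares an n-gram with it. The choice of technique, the default `n=8`, and all function names are hypothetical. At 5.9T-token scale, a real pipeline would use hashing or probabilistic structures rather than an in-memory set. Prompts shorter than `n` words produce no n-grams and are never flagged.

```python
from typing import Iterable, List, Set, Tuple

NGram = Tuple[str, ...]


def ngrams(text: str, n: int) -> Set[NGram]:
    """Set of lowercase word n-grams in text."""
    words = text.lower().split()
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def build_index(pretraining_docs: Iterable[str], n: int = 8) -> Set[NGram]:
    """Collect every n-gram that appears in the pre-training corpus."""
    index: Set[NGram] = set()
    for doc in pretraining_docs:
        index |= ngrams(doc, n)
    return index


def decontaminate(rl_prompts: Iterable[str], index: Set[NGram], n: int = 8) -> List[str]:
    """Keep only prompts that share no n-gram with the pre-training index."""
    return [p for p in rl_prompts if not (ngrams(p, n) & index)]


if __name__ == "__main__":
    docs = ["the quick brown fox jumps over the lazy dog"]
    prompts = ["a quick brown fox jumps high", "solve two plus two"]
    print(decontaminate(prompts, build_index(docs, n=3), n=3))
```

In this toy run, the first prompt shares the 3-gram "quick brown fox" with the corpus and is removed, leaving only the second prompt.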

Comparison Table

| Model variant | Training or post-training data | Primary use case | Reported position vs other open models |
|---|---|---|---|
| Olmo 3 Base 7B | Dolma 3 Mix pre-training, Dolma 3 Dolmino Mix mid-training, Dolma 3 Longmino Mix long context | General foundation model, long-context reasoning, code, math | Strong fully open 7B base, designed as the foundation for Think, Instruct, and RL Zero, evaluated against leading open 7B-scale bases |
| Olmo 3 Base 32B | Same Dolma 3 staging as 7B, with 100B Longmino tokens for long context | High-end base for research, long-context workloads, RL setups | Described as a leading fully open 32B base, comparable to Qwen 2.5 32B and Gemma 3 27B and outperforming Marin, Apertus, LLM360 |
| Olmo 3 Think 7B | Olmo 3 Base 7B, plus Dolci Think SFT, Dolci Think DPO, Dolci Think RL in the OlmoRL framework | Reasoning-focused 7B model with internal chains of thought | Fully open reasoning model at an efficient scale that enables chain-of-thought research and RL experiments on modest hardware |
| Olmo 3 Think 32B | Olmo 3 Base 32B, plus the same Dolci Think SFT, DPO, RL pipeline | Long-horizon reasoning model | Described as the strongest fully open thinking model, competitive with Qwen 3 32B thinking models while trained on about 6x fewer tokens |
| Olmo 3 Instruct 7B | Olmo 3 Base 7B, plus Dolci Instruct SFT, Dolci Instruct DPO, Dolci Instruct RL 7B | Instruction following, chat, function calling, tool use | Reported to outperform Qwen 2.5, Gemma 3, Llama 3 and to narrow the gap to Qwen 3 families at similar scale |
| Olmo 3 RL Zero 7B | Olmo 3 Base 7B, plus Dolci RL Zero math, code, IF, and mixed datasets, decontaminated from Dolma 3 | RLVR research on math, coding, instruction following, mixed tasks | Presented as a fully open path for benchmarking RLVR on top of a base model with fully open pre-training data |

Key Takeaways

- End-to-end transparent pipeline: Olmo 3 exposes the full 'model flow' from Dolma 3 data construction through pre-training, mid-training, post-training, checkpoint release, and evaluation suites, enabling end-to-end reproducible research.

- Dense 7B and 32B models with 65K context: The family includes 7B and 32B dense transformers, all with a 65,536-token context, trained on the staged Dolma 3 curriculum with Dolma 3 Longmino for long-context extension.

- Strong open base and reasoning models: Olmo 3 Base 32B is positioned as a top fully open base model at its scale, competitive with Qwen 2.5 and Gemma 3. Olmo 3 Think 32B narrows the gap to Qwen 3 32B thinking models while using about 6x fewer training tokens.

- Task-oriented Instruct and RL Zero variants: Olmo 3 Instruct 7B targets instruction following, multi-turn chat, and tool use using Dolci data. It is reported to match or outperform Qwen 2.5, Gemma 3, and Llama 3.1 at similar scale. Olmo 3 RL Zero 7B provides a fully open RLVR pathway, with Dolci RL Zero datasets for math, code, instruction following, and general chat that are decontaminated against the pre-training data.

Olmo 3 is an unusual release because it opens up the full stack: Dolma 3 data recipes, staged pre-training, Dolci post-training, RLVR in OlmoRL, and evaluation with OLMES and OlmoBaseEval. This reduces ambiguity around data quality and training choices, and it creates a concrete baseline for extending Olmo 3 Base, Olmo 3 Think, Olmo 3 Instruct, and Olmo 3 RL Zero in controlled experiments. Overall, Olmo 3 sets a strong reference point for transparent, research-grade LLM pipelines.


Michal Sutter is a data scientist with a Master of Science in Data Science from the University of Padova. With a strong foundation in statistical analysis, machine learning, and data engineering, Michal excels at transforming complex datasets into actionable insights.

Follow Marktechpost: Add us as a favorite source on Google.