dMel: Speech Tokenization Made Simple

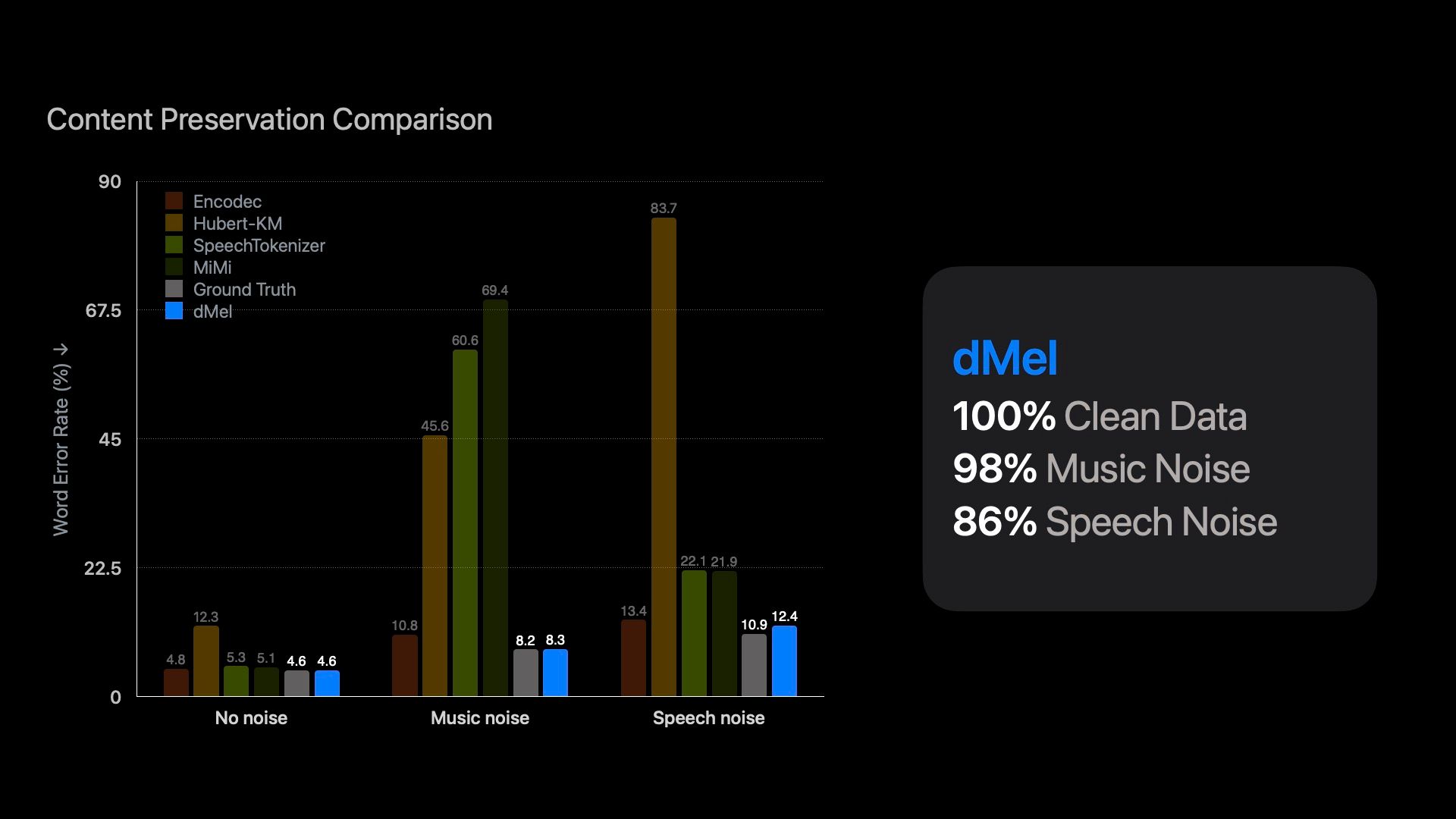

Large language models have revolutionized natural language processing by leveraging self-supervised pretraining on vast amounts of text data. Inspired by this success, researchers have investigated complicated speech tokenization methods that discretize continuous speech signals so that language modeling techniques can be applied to speech data. However, existing approaches either model semantic (content) tokens, which can lose acoustic information, or model acoustic tokens, which risks losing semantic (content) information. Having multiple token types also complicates the architecture and requires additional pretraining. Here we show that discretizing mel-filterbank channels into discrete intensity bins produces a simple representation (dMel) that performs better than other existing speech tokenization methods. Using an LM-style transformer architecture for speech-text modeling, we comprehensively evaluate different speech tokenization methods on speech recognition (ASR) and speech synthesis (TTS). Our results demonstrate the effectiveness of dMel in achieving high performance on both tasks within a unified framework, paving the way for efficient and effective joint modeling of speech and text.
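To make the core idea concrete, the following is a minimal sketch of quantizing log-mel-filterbank values into discrete intensity bins. It is an illustration under stated assumptions, not the implementation evaluated here: the function names `discretize_mel` and `reconstruct_mel`, the use of uniformly spaced bins, the choice of 16 bins, and the value range `[-10, 2]` are all hypothetical, and the input is random data standing in for a real log-mel spectrogram.

```python
import numpy as np


def discretize_mel(log_mel, num_bins, lo, hi):
    """Map each log-mel value to an integer bin index in [0, num_bins - 1].

    Assumption: bins are uniformly spaced between lo and hi; values
    outside that range fall into the first or last bin.
    """
    log_mel = np.asarray(log_mel, dtype=np.float64)
    # num_bins + 1 boundaries; dropping both ends leaves the interior edges.
    interior_edges = np.linspace(lo, hi, num_bins + 1)[1:-1]
    return np.digitize(log_mel, interior_edges)


def reconstruct_mel(tokens, num_bins, lo, hi):
    """Approximate inverse: replace each bin index with its bin centre."""
    edges = np.linspace(lo, hi, num_bins + 1)
    centres = 0.5 * (edges[:-1] + edges[1:])
    return centres[np.asarray(tokens)]


# Synthetic stand-in for a log-mel spectrogram: 50 frames x 80 channels.
rng = np.random.default_rng(0)
log_mel = rng.uniform(-10.0, 2.0, size=(50, 80))

# Each frame becomes 80 small integers, one per mel channel.
tokens = discretize_mel(log_mel, num_bins=16, lo=-10.0, hi=2.0)
approx = reconstruct_mel(tokens, num_bins=16, lo=-10.0, hi=2.0)
max_error = np.max(np.abs(approx - log_mel))
```

In this sketch each frame maps to one integer per mel channel, and the reconstruction error for in-range values is at most half a bin width. In practice, the range and the number of bins would be chosen from the training data rather than fixed by hand as they are here.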