DeepSeek AI Releases DeepSeek-V4: Compressed Sparse Attention and Heavily Compressed Attention Enable Million-Token Context

DeepSeek-AI has released a preview of the DeepSeek-V4 series: two Mixture-of-Experts (MoE) language models designed around one central challenge, making million-token context windows usable and affordable at inference time.

The series consists of DeepSeek-V4-Pro, with 1.6T total parameters and 49B activated per token, and DeepSeek-V4-Flash, with 284B total parameters and 13B activated per token. Both models natively support a context length of one million tokens. DeepSeek-V4-Pro was pre-trained on 33T tokens and DeepSeek-V4-Flash on 32T tokens. Model checkpoints for all four models (DeepSeek-V4-Pro, DeepSeek-V4-Pro-Base, DeepSeek-V4-Flash, and DeepSeek-V4-Flash-Base) are publicly available on Hugging Face.

The Architectural Challenge of Long Context

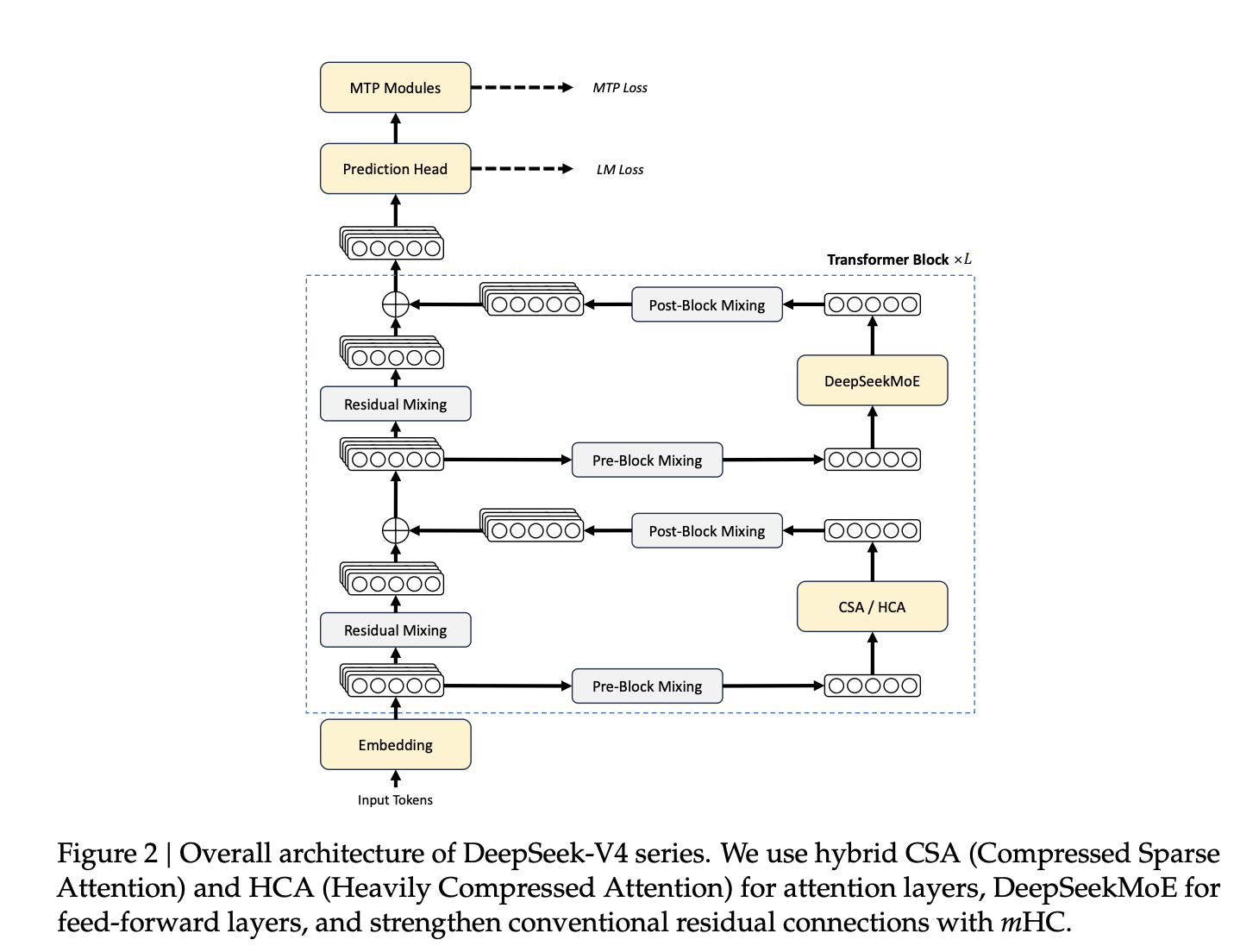

The vanilla attention mechanism in a standard Transformer has quadratic computational complexity with respect to sequence length: doubling the context roughly quadruples attention compute and memory. At a million tokens, this becomes prohibitive without architectural intervention. DeepSeek-V4 addresses it with a set of integrated techniques: a hybrid attention architecture, a new residual connection design, a different optimizer, and FP4 quantization-aware training.
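The quadratic scaling can be checked with a few lines of arithmetic. This is a rough sketch for a single attention head; the head dimension of 128 is an illustrative assumption and is not taken from the DeepSeek-V4 release.

```python
def attention_flops(seq_len: int, head_dim: int = 128) -> int:
    """Approximate FLOPs for one full (non-causal) attention head.

    Counts the two large matrix products, Q @ K^T and weights @ V,
    each costing about 2 * seq_len * seq_len * head_dim FLOPs.
    head_dim = 128 is an assumed value for illustration only.
    """
    return 2 * 2 * seq_len * seq_len * head_dim


# Doubling the context length multiplies the attention cost by four.
ratio = attention_flops(2_000_000) / attention_flops(1_000_000)
print(ratio)
```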

Hybrid Attention: CSA and HCA

The central architectural innovation is a hybrid attention mechanism that combines Compressed Sparse Attention (CSA) and Heavily Compressed Attention (HCA) across the Transformer layers.

CSA compresses the Key-Value (KV) cache of every m tokens into a single entry using a learned token-level compressor, and then applies DeepSeek Sparse Attention (DSA), in which each query token attends only to the top-k selected compressed KV entries. A component called the Lightning Indexer handles this sparse selection by scoring queries against the compressed KV blocks. Both CSA and HCA include a sliding-window attention branch that covers the most recent n_win tokens to model local dependencies.
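A minimal NumPy sketch of one CSA decoding step follows. It is an illustration under loud assumptions, not DeepSeek's implementation: mean pooling stands in for the learned compressor, a plain dot product stands in for the Lightning Indexer, and the sliding-window branch is merged into the same softmax as the selected compressed entries. The function names and default values are hypothetical.

```python
import numpy as np


def compress_blocks(x, m):
    """Stand-in compressor: mean-pool each full block of m tokens.
    (The real compressor is learned; this is an assumption.)"""
    n_blocks = x.shape[0] // m
    return x[: n_blocks * m].reshape(n_blocks, m, x.shape[1]).mean(axis=1)


def csa_decode_step(q, keys, values, m=4, top_k=2, n_win=3):
    """One query attends to top-k compressed KV entries plus a recent window."""
    d = q.shape[0]
    ck, cv = compress_blocks(keys, m), compress_blocks(values, m)

    # Stand-in for the Lightning Indexer: score every compressed block
    # against the query and keep the k best-scoring blocks.
    index_scores = ck @ q
    k = min(top_k, ck.shape[0])
    selected = np.argsort(index_scores)[-k:]

    # Sliding-window branch: the most recent n_win uncompressed tokens.
    k_all = np.concatenate([ck[selected], keys[-n_win:]])
    v_all = np.concatenate([cv[selected], values[-n_win:]])

    logits = k_all @ q / np.sqrt(d)
    weights = np.exp(logits - logits.max())
    weights /= weights.sum()
    return weights @ v_all, selected


rng = np.random.default_rng(0)
keys, values = rng.normal(size=(16, 8)), rng.normal(size=(16, 8))
out, selected = csa_decode_step(rng.normal(size=8), keys, values)
```

With 16 cached tokens and m = 4, the query here touches only 2 compressed entries and 3 recent tokens instead of all 16.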

HCA is more aggressive: it consolidates the KV entries of every m′ tokens, where m′ ≫ m, into a single compressed entry, and then applies dense attention over those compressed representations. No sparse selection step is needed; the compression ratio alone reduces the size of the KV cache.
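For contrast, an HCA-style step can be sketched the same way: compress with a much larger block size and attend densely over everything that remains. As before, mean pooling is an assumed stand-in for the learned compressor, and the block size and window length are hypothetical values.

```python
import numpy as np


def hca_decode_step(q, keys, values, m_prime=8, n_win=3):
    """Dense attention over heavily compressed KV entries plus a recent window."""
    d = q.shape[0]
    n_blocks = keys.shape[0] // m_prime
    usable = n_blocks * m_prime

    # Assumed stand-in compressor: mean-pool each block of m_prime tokens.
    ck = keys[:usable].reshape(n_blocks, m_prime, d).mean(axis=1)
    cv = values[:usable].reshape(n_blocks, m_prime, -1).mean(axis=1)

    # No top-k selection: every compressed entry is attended to.
    k_all = np.concatenate([ck, keys[-n_win:]])
    v_all = np.concatenate([cv, values[-n_win:]])

    logits = k_all @ q / np.sqrt(d)
    weights = np.exp(logits - logits.max())
    weights /= weights.sum()
    return weights @ v_all, k_all.shape[0]


rng = np.random.default_rng(0)
keys, values = rng.normal(size=(64, 8)), rng.normal(size=(64, 8))
out, n_entries = hca_decode_step(rng.normal(size=8), keys, values)
```

Here 64 cached tokens shrink to 8 compressed entries plus 3 window tokens, so the dense attention runs over 11 entries.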

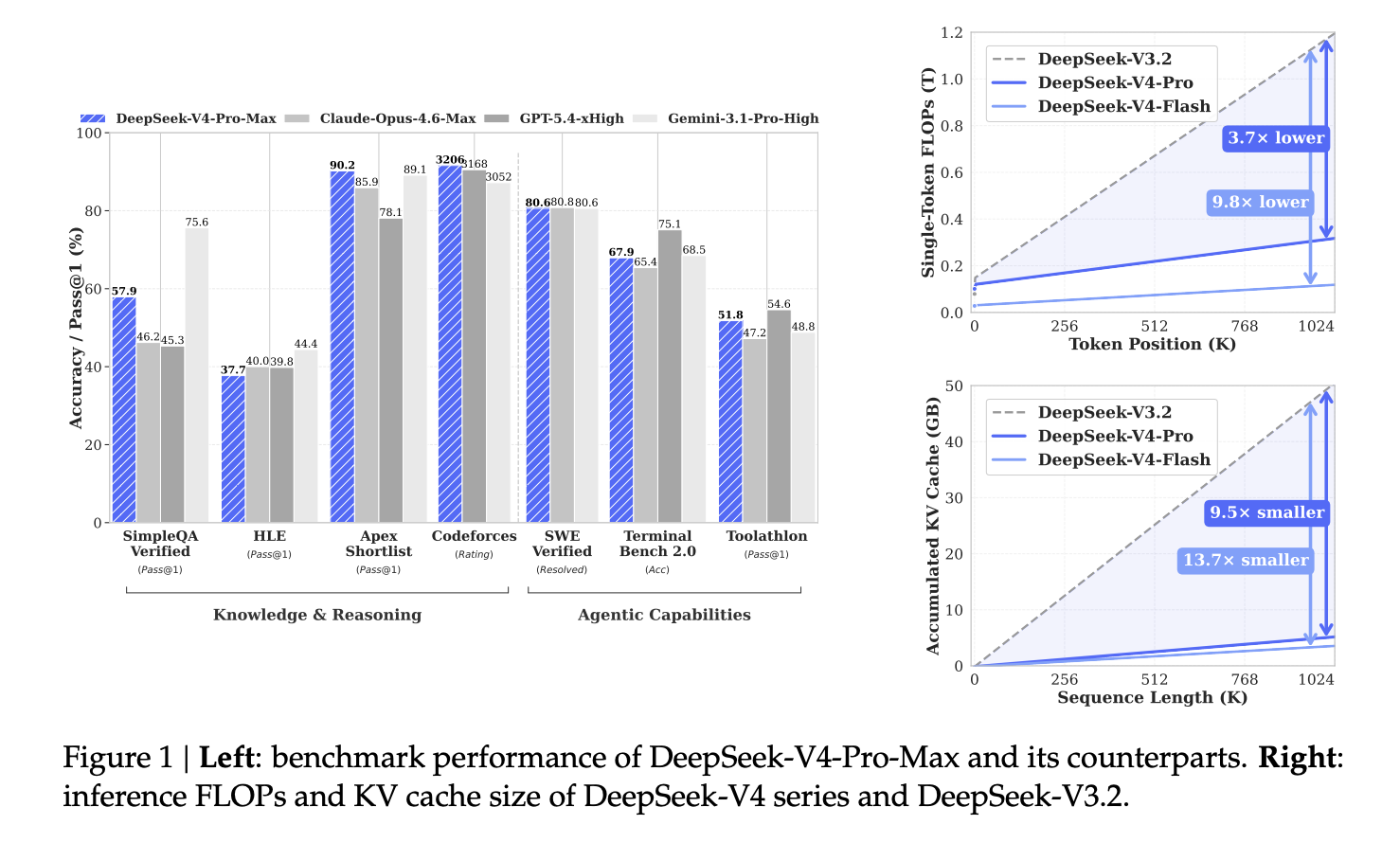

The efficiency gains are substantial. In the one-million-token setting, DeepSeek-V4-Pro requires only 27% of the single-token inference FLOPs (measured in equivalent FP8 FLOPs) and 10% of the KV cache size of DeepSeek-V3.2. DeepSeek-V4-Flash requires 10% of the single-token FLOPs and 7% of the KV cache relative to DeepSeek-V3.2.

Manifold-Constrained Hyper-Connections (mHC)

DeepSeek-V4 replaces traditional residual connections with Manifold-Constrained Hyper-Connections (mHC). Hyper-Connections (HC) generalize residual connections by expanding the residual stream width by a factor of n_hc (set to 4 in both models) and introducing learned input, residual, and output mappings. Naive HC suffers from numerical instability when many layers are stacked.

mHC addresses this by constraining the residual mapping matrix B_l to the Birkhoff polytope, the set of doubly stochastic matrices in which every row and column sums to one and all entries are non-negative. This bounds the spectral norm of the mapping by 1, preventing signal amplification in both the forward pass and backpropagation. The constraint is enforced with the Sinkhorn-Knopp algorithm using t_max = 20 iterations. The mapping parameters are generated dynamically from each input for expressivity.
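The Sinkhorn-Knopp projection is simple enough to sketch directly. The example below alternately normalizes rows and columns of a positive matrix for 20 iterations and then checks the spectral norm. The 4×4 shape (matching a stream expansion of 4) and the use of `exp` to obtain positive entries are assumptions made for illustration.

```python
import numpy as np


def sinkhorn_knopp(logits, n_iters=20):
    """Push a square matrix toward the doubly stochastic set by
    alternating row and column normalization."""
    m = np.exp(logits - logits.max())  # strictly positive entries (assumed parameterization)
    for _ in range(n_iters):
        m = m / m.sum(axis=1, keepdims=True)  # each row sums to 1
        m = m / m.sum(axis=0, keepdims=True)  # each column sums to 1
    return m


rng = np.random.default_rng(0)
B = sinkhorn_knopp(0.5 * rng.normal(size=(4, 4)))

row_sums = B.sum(axis=1)
col_sums = B.sum(axis=0)
spectral_norm = np.linalg.norm(B, 2)  # largest singular value
```

Because the rows and columns of a doubly stochastic matrix all sum to one, its largest singular value is 1, so repeated application of such a mapping cannot amplify the signal.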

Muon Optimizer and FP4 QAT

DeepSeek-V4 adopts the Muon optimizer for most of its parameters. Muon uses Newton-Schulz iterations to approximately orthogonalize the gradient update matrix before applying it as a weight update. The implementation uses a two-stage hybrid schedule: 8 iterations with coefficients (3.4445, −4.7750, 2.0315) for fast convergence, then 2 iterations with coefficients (2, −1.5, 0.5) for stabilization. AdamW is reserved for the embedding module, the prediction head, the static biases and gating factors of the mHC modules, and all RMSNorm weights.
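The two-stage schedule can be sketched as a quintic Newton-Schulz iteration on the normalized gradient. The coefficients and iteration counts come from the text above; the Frobenius normalization and the transpose trick for tall matrices follow common Muon implementations and are assumptions here, as are the function and constant names.

```python
import numpy as np

# (iterations, (a, b, c)) for each stage, as described in the text.
STAGE_1 = (8, (3.4445, -4.7750, 2.0315))
STAGE_2 = (2, (2.0, -1.5, 0.5))


def newton_schulz_orthogonalize(grad, eps=1e-7):
    """Approximately map grad to the nearest semi-orthogonal matrix."""
    # Frobenius normalization keeps every singular value at or below 1.
    x = grad / (np.linalg.norm(grad) + eps)
    transposed = x.shape[0] > x.shape[1]
    if transposed:
        x = x.T  # work with the smaller Gram matrix
    for n_iters, (a, b, c) in (STAGE_1, STAGE_2):
        for _ in range(n_iters):
            gram = x @ x.T
            # Acts on each singular value s as a*s + b*s**3 + c*s**5.
            x = a * x + (b * gram + c * (gram @ gram)) @ x
    return x.T if transposed else x


rng = np.random.default_rng(0)
update = newton_schulz_orthogonalize(rng.normal(size=(4, 6)))
singular_values = np.linalg.svd(update, compute_uv=False)
```

The second-stage polynomial 2s − 1.5s³ + 0.5s⁵ has a fixed point at s = 1 with zero slope, which is why two such iterations pull the singular values tightly toward 1 after the faster but looser first stage.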

For deployment efficiency, FP4 (MXFP4) Quantization-Aware Training (QAT) is applied to the MoE expert weights and to the Query-Key (QK) path in CSA's Lightning Indexer. During inference and RL rollouts, real FP4 weights are used directly instead of simulated quantization, reducing memory traffic and sampling latency.
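To make "simulated quantization" concrete, here is a sketch of MXFP4 fake quantization as defined by the OCP Microscaling format: blocks of 32 values share one power-of-two scale, and each value is stored as a 4-bit E2M1 number. How DeepSeek-V4 wires this into training is not described in the article, so treat the function below as a hypothetical illustration. Ties are broken toward the smaller magnitude for simplicity, which differs from round-to-nearest-even.

```python
import numpy as np

# Magnitudes representable by the 4-bit E2M1 element format.
E2M1_GRID = np.array([0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0])


def fake_quant_mxfp4(w, block=32):
    """Quantize to MXFP4 and dequantize again (w.size must be a multiple of block)."""
    flat = w.reshape(-1, block)
    max_abs = np.abs(flat).max(axis=1, keepdims=True)
    safe = np.where(max_abs > 0, max_abs, 1.0)  # avoid log2(0) for all-zero blocks

    # Shared power-of-two scale: floor(log2(max)) minus E2M1's largest exponent (2).
    scale = 2.0 ** (np.floor(np.log2(safe)) - 2)
    scaled = np.clip(flat / scale, -6.0, 6.0)

    # Snap each magnitude to the nearest representable grid point.
    idx = np.abs(np.abs(scaled)[..., None] - E2M1_GRID).argmin(axis=-1)
    quantized = np.sign(scaled) * E2M1_GRID[idx]
    return (quantized * scale).reshape(w.shape)


rng = np.random.default_rng(0)
weights = rng.normal(size=(4, 64))
dequantized = fake_quant_mxfp4(weights)
```

During QAT the forward pass would see `dequantized` while the full-precision weights receive the gradient; at inference the stored 4-bit values and block scales can be used directly, which is the saving the paragraph above refers to.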

Training Stability at Scale

Training MoE models at this scale introduced significant instabilities. Two techniques proved effective. Anticipatory Routing decouples the backbone and routing-network updates: the routing indices at step t are computed using historical parameters θ_{t−Δt}, breaking the cycle in which routing decisions reinforce outliers in the MoE layers. SwiGLU Clamping clamps the linear component of SwiGLU to [−10, 10] and caps the gate component at an upper bound of 10, directly suppressing anomalous activations. Both techniques were used throughout the training of both models.
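The SwiGLU clamp is easy to show in isolation. The sketch below covers only the clamping step, with hypothetical weight shapes, and assumes the clamp is applied to the pre-activation values before the SiLU gate; it does not attempt to model Anticipatory Routing.

```python
import numpy as np


def silu(x):
    # x * sigmoid(x), written with tanh so large negative inputs do not overflow.
    return x * 0.5 * (1.0 + np.tanh(0.5 * x))


def clamped_swiglu(x, w_gate, w_linear, bound=10.0):
    """SwiGLU with the gate capped from above and the linear branch clipped."""
    gate = np.minimum(x @ w_gate, bound)            # upper bound only
    linear = np.clip(x @ w_linear, -bound, bound)   # symmetric clamp
    return silu(gate) * linear


rng = np.random.default_rng(0)
x = 100.0 * rng.normal(size=(5, 8))  # deliberately extreme inputs
w_gate, w_linear = rng.normal(size=(8, 16)), rng.normal(size=(8, 16))
out = clamped_swiglu(x, w_gate, w_linear)
```

With both branches bounded, the output magnitude cannot exceed 10 × 10 regardless of how large the inputs grow.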

Post-Training: Specialist Experts and On-Policy Distillation

The post-training pipeline replaces the mixed RL stage of DeepSeek-V3.2 with On-Policy Distillation (OPD). Independent domain experts are first trained in mathematics, coding, agent tasks, and instruction following via Supervised Fine-Tuning (SFT) followed by Reinforcement Learning using Group Relative Policy Optimization (GRPO). More than ten teacher models are then distilled into a single unified student model by minimizing the reverse KL divergence between the student's and each teacher's output distribution on student-generated trajectories, using full-vocabulary logit distillation to obtain stable gradient estimates.
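The distillation objective can be sketched as a per-token reverse KL computed over the whole vocabulary. In the sketch, both logit arrays are assumed to be evaluated at the same positions of a trajectory sampled from the student; the function names are hypothetical and the toy vocabulary has three entries.

```python
import numpy as np


def log_softmax(logits):
    z = logits - logits.max(axis=-1, keepdims=True)
    return z - np.log(np.exp(z).sum(axis=-1, keepdims=True))


def reverse_kl(student_logits, teacher_logits):
    """KL(student || teacher) at each position, summed over the full vocabulary."""
    log_p_student = log_softmax(student_logits)
    log_p_teacher = log_softmax(teacher_logits)
    return (np.exp(log_p_student) * (log_p_student - log_p_teacher)).sum(axis=-1)


student = np.array([[1.0, 2.0, 3.0], [0.0, 0.0, 0.0]])
teacher = np.array([[3.0, 2.0, 1.0], [0.0, 0.0, 0.0]])
loss_per_token = reverse_kl(student, teacher)
```

Summing over every vocabulary entry, rather than only the sampled token, is what gives a lower-variance training signal than a single-sample estimate.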

The resulting model supports three reasoning modes: Non-Thinking (fast, with no explicit chain of thought), Think High (deliberate reasoning), and Think Max (maximum reasoning effort, with a dedicated system prompt and reduced length penalties during RL training).

Benchmark Results

DeepSeek-V4-Pro-Max achieves a Codeforces rating of 3206, ahead of GPT-5.4-xHigh (3168) and Gemini-3.1-Pro-High (3052). On SimpleQA Verified, it scores 57.9 Pass@1, ahead of Claude Opus 4.6 Max (46.2) and GPT-5.4-xHigh (45.3), although it trails Gemini-3.1-Pro-High (75.6). On SWE-Verified, DeepSeek-V4-Pro-Max reaches 80.6% resolved, slightly behind Claude Opus 4.6 Max (80.8%), while Gemini-3.1-Pro-High also scores 80.6%.

On long-context benchmarks, DeepSeek-V4-Pro-Max achieves 83.5 MMR on OpenAI MRCR 1M and 62.0 accuracy on CorpusQA 1M, surpassing Gemini-3.1-Pro-High (76.3 and 53.8 respectively) but trailing Claude Opus 4.6 Max on both.

Key Takeaways

- Hybrid CSA and HCA attention cuts DeepSeek-V4-Pro's KV cache to 10% of DeepSeek-V3.2's at 1M tokens.

- Manifold-Constrained Hyper-Connections (mHC) replace standard residual connections to keep training stable across deep layer stacks.

- The Muon optimizer replaces AdamW for most parameters, giving faster convergence and better training stability.

- Post-training uses On-Policy Distillation from 10+ domain experts instead of traditional mixed RL.

- DeepSeek-V4-Flash-Base outperforms DeepSeek-V3.2-Base despite having 3x fewer activated parameters.
