Moonshot AI Releases Kimi K2.5: An Open Source Visual Agentic Intelligence Model with Native Swarm Execution

Moonshot AI has released Kimi K2.5 as an open source visual agentic intelligence model. It combines a Mixture of Experts language backbone, a native vision encoder, and a parallel multi-agent system called Agent Swarm. The model targets coding, multimodal reasoning, and deep web research, with strong benchmark results on agentic, vision, and coding suites.

Model Architecture and Training

Kimi K2.5 is a Mixture of Experts model with 1T total parameters and 32B activated parameters per token. The network has 61 layers. It uses 384 experts, with 8 experts selected per token plus 1 shared expert. The attention hidden size is 7168 and there are 64 attention heads.
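To make the routing numbers concrete, here is a minimal sketch of top-k expert selection for a single token. The expert counts come from the article; the random router logits, the `route_token` helper, and the softmax over only the selected logits are assumptions for illustration, not confirmed details of K2.5.

```python
import math
import random

NUM_EXPERTS = 384   # routed experts (from the article)
TOP_K = 8           # routed experts selected per token (from the article)
NUM_SHARED = 1      # shared expert that always runs (from the article)

def route_token(router_logits, top_k=TOP_K):
    """Return (expert_index, weight) pairs for the top_k routed experts.

    Weights are a softmax over the selected logits only. That normalisation
    is an assumption for this sketch, not a documented K2.5 detail.
    """
    top = sorted(range(len(router_logits)),
                 key=lambda i: router_logits[i], reverse=True)[:top_k]
    peak = max(router_logits[i] for i in top)
    exps = [math.exp(router_logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]

# Hypothetical router output for one token.
rng = random.Random(0)
logits = [rng.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]
selected = route_token(logits)

active_experts = len(selected) + NUM_SHARED
print(f"experts run for this token: {active_experts} of {NUM_EXPERTS + NUM_SHARED}")
print(f"active parameter fraction: {32e9 / 1e12:.1%}")
```

Only 9 of the 385 expert networks run for any given token, which is how a 1T parameter model can activate roughly 3.2% of its weights per token.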

The model uses MLA attention and the SwiGLU activation function. The tokenizer vocabulary size is 160K. The maximum context length during training and inference is 256K tokens. This supports long tool traces, long documents, and multi-step research workflows.

Vision is handled by the MoonViT encoder, which has about 400M parameters. Visual tokens are trained together with text tokens in a single multimodal backbone. Kimi K2.5 is obtained by continued pre-training on about 15T tokens of mixed vision and text data on top of Kimi K2 Base. This native multimodal training matters because the model learns the joint structure of images, documents, and language from the start.

The released checkpoints support standard inference stacks such as vLLM, SGLang, and KTransformers with transformers version 4.57.1 or newer. Quantized INT4 variants are available, reusing the method from Kimi K2 Thinking. This allows deployment on commodity GPUs with lower memory budgets.
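A rough back-of-the-envelope estimate shows why INT4 matters at this scale. The sketch below assumes every one of the 1T parameters is stored at the stated bit width and ignores quantization scales, activations, and the KV cache; the 16-bit row is only a comparison baseline, not a statement about how the weights are actually published.

```python
def weight_memory_gb(num_params, bits_per_param):
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9

TOTAL_PARAMS = 1e12  # 1T total parameters (from the article)

# Bit widths are illustrative; real checkpoints may mix precisions.
for name, bits in [("16-bit", 16), ("INT4", 4)]:
    print(f"{name}: ~{weight_memory_gb(TOTAL_PARAMS, bits):,.0f} GB of weights")
```

Under these assumptions INT4 cuts weight storage to a quarter of the 16-bit figure, although the result is still far larger than a single consumer GPU, so multi-GPU or offloading setups remain necessary.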

Coding and Multimodal Capabilities

Kimi K2.5 is positioned as a strong open source coding model, especially when code generation depends on visual context. The model can read UI mockups, design screenshots, or videos, and then generate structured front-end code with layout, styling, and interaction logic.

Moonshot shows examples where the model reads an image of a puzzle, reasons about the shortest path, and then writes code that produces a visualized solution. This demonstrates cross-modal reasoning, where the model combines image understanding, algorithmic planning, and code synthesis in a single flow.

Because K2.5 has a 256K context window, it can keep long specification histories in context. A practical workflow for developers is to combine design assets, product documentation, and existing code in a single prompt. The model can then refactor or extend the codebase while keeping visual constraints consistent with the original design.
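One way to picture this workflow is a small helper that packs several sources into one prompt while staying inside the context budget. Everything here is an assumption for illustration: the `build_prompt` helper, the four-characters-per-token heuristic (a real setup would use the model's tokenizer), the exact value used for "256K", and the output reserve.

```python
CONTEXT_TOKENS = 256_000   # "256K" from the article; the exact limit is an assumption
CHARS_PER_TOKEN = 4        # crude heuristic, not the real tokenizer

def estimate_tokens(text):
    """Very rough token estimate based on character count."""
    return len(text) // CHARS_PER_TOKEN + 1

def build_prompt(sections, reserve_for_output=8_000):
    """Concatenate (title, text) sections in order, skipping any that do not fit."""
    budget = CONTEXT_TOKENS - reserve_for_output
    parts, used, skipped = [], 0, []
    for title, text in sections:
        block = f"## {title}\n{text}\n"
        cost = estimate_tokens(block)
        if used + cost > budget:
            skipped.append(title)
            continue
        parts.append(block)
        used += cost
    return "".join(parts), used, skipped

# Hypothetical inputs standing in for real design notes, docs, and code.
sections = [
    ("Design notes", "Primary button: 8px radius, brand blue."),
    ("Product doc", "Checkout must work without an account."),
    ("Existing code", "export function Checkout() { /* ... */ }"),
]
prompt, used, skipped = build_prompt(sections)
print(f"estimated tokens used: {used}, skipped sections: {skipped}")
```

Listing the sections in priority order means the least important material is what gets dropped when the budget runs out.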

Agent Swarm and Parallel Agent Reinforcement Learning

A key feature of Kimi K2.5 is Agent Swarm, a multi-agent system trained with Parallel Agent Reinforcement Learning (PARL). In this setup, an orchestrator agent decomposes a complex task into many sub-tasks. It then spins up specialized sub agents to work on them in parallel.
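The orchestrator pattern described above can be sketched with plain `asyncio`. This is not Moonshot's implementation: `decompose`, `sub_agent`, and `orchestrate` are hypothetical names, the decomposition is a fixed split rather than a learned policy, and the sleep stands in for model and tool latency.

```python
import asyncio

async def sub_agent(agent_id, sub_task):
    """Hypothetical sub agent; a real one would call the model and tools here."""
    await asyncio.sleep(0.01)  # stand-in for model and tool latency
    return {"agent": agent_id, "task": sub_task, "result": f"findings for {sub_task}"}

def decompose(task, num_parts):
    """Hypothetical fixed split; in K2.5 the trained orchestrator decides this."""
    return [f"{task} / part {i + 1}" for i in range(num_parts)]

async def orchestrate(task, num_parts=4):
    sub_tasks = decompose(task, num_parts)
    # gather runs all sub agents concurrently and returns results in input order
    return await asyncio.gather(
        *(sub_agent(i, t) for i, t in enumerate(sub_tasks))
    )

rows = asyncio.run(orchestrate("survey niche creators"))
for row in rows:
    print(row["agent"], row["task"])
```

Because the sub agents run concurrently, wall-clock time tracks the slowest branch rather than the sum of all branches.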

The Kimi team reports that K2.5 can manage up to 100 sub agents within a task. It supports up to 1,500 coordinated steps or tool calls in a single run. This parallelism yields about 4.5 times faster completion than a single-agent pipeline on wide search tasks.

PARL introduces a metric called Critical Steps. The system rewards policies that reduce the number of serial steps required to solve a task. This discourages naive sequential planning and pushes the agent to split work into parallel branches while maintaining consistency.
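One plausible reading of the serial-step metric described above is the length of the longest dependency chain in a run. The sketch below assumes a run is a sequence of stages, each with some orchestrator steps followed by parallel branches; the stage layout and the step counts are made up for illustration, and the exact definition used in PARL may differ.

```python
def serial_steps(stages):
    """Steps on the longest chain: each stage costs its orchestrator steps
    plus the slowest parallel branch launched in that stage."""
    return sum(main + max(branches, default=0) for main, branches in stages)

def total_steps(stages):
    """Steps executed overall, as if everything ran one after another."""
    return sum(main + sum(branches) for main, branches in stages)

# (orchestrator steps, [steps taken by each parallel sub agent]); made-up numbers
stages = [
    (1, [5, 7, 6, 4]),
    (1, [3, 3]),
    (2, []),
]
print("serial steps:", serial_steps(stages))
print("total steps:", total_steps(stages))
```

In this toy run the same 32 steps of work sit on a chain of only 14 serial steps, so a reward tied to the serial count favors plans that fan out instead of plans that simply do less work.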

One example from the Kimi team is a research workflow in which the system needs to discover many niche creators. The orchestrator uses Agent Swarm to spawn a large number of researcher agents. Each agent explores a different region of the web, and the system merges the results into a structured table.

Benchmark Performance

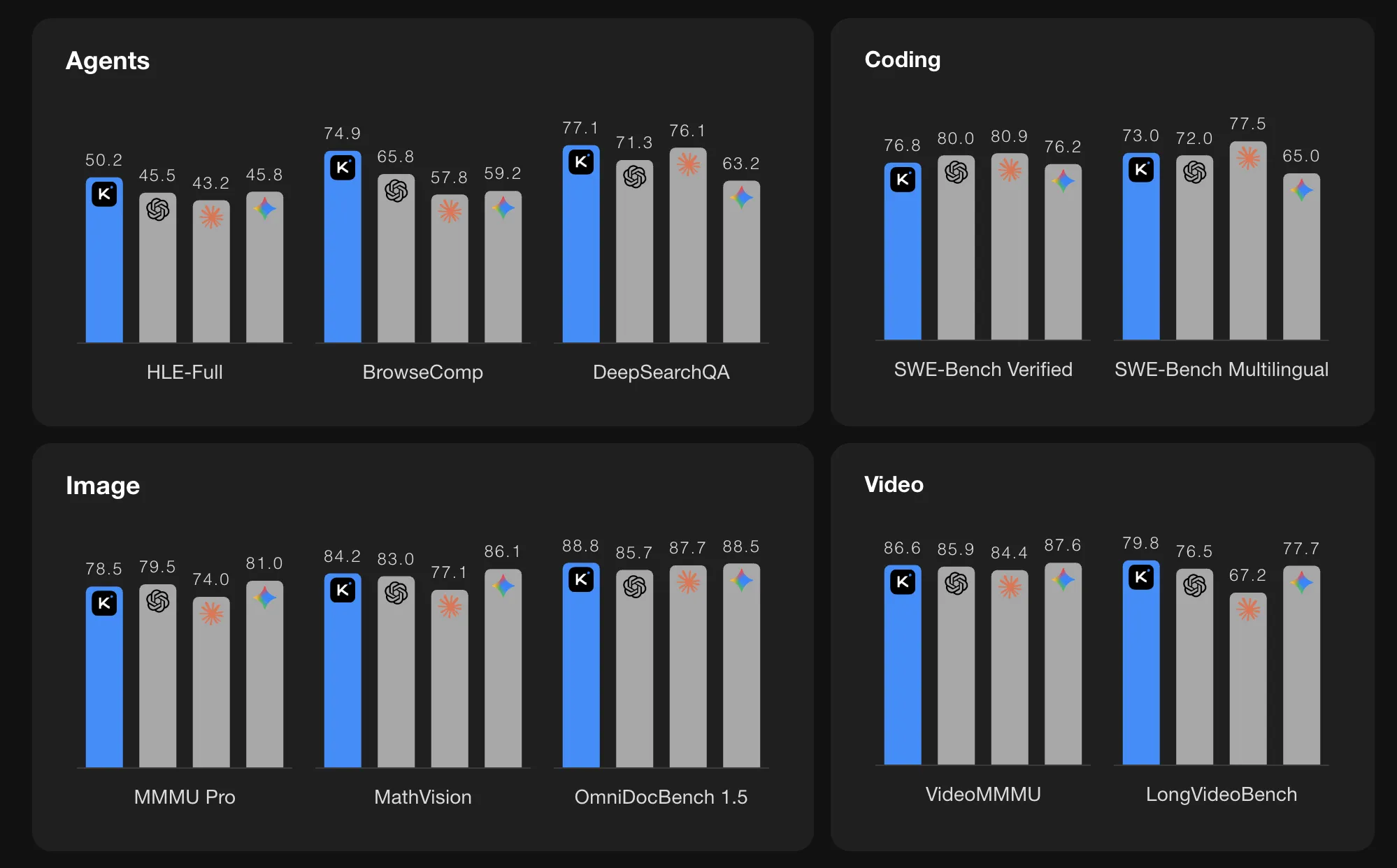

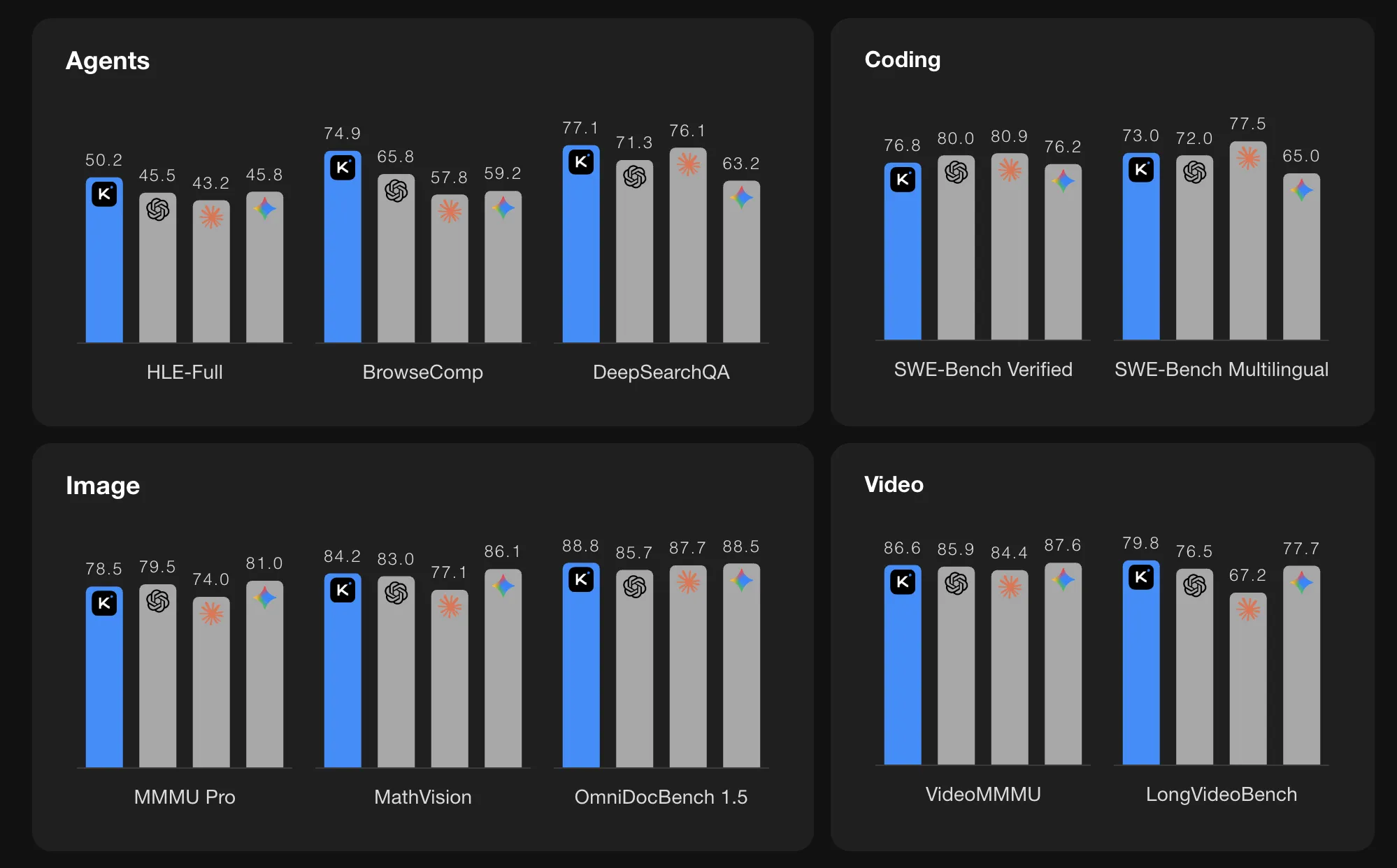

On agentic benchmarks, Kimi K2.5 reports strong numbers. On HLE Full with tools, it scores 50.2. On BrowseComp with context management, it scores 74.9. In Agent Swarm mode, the BrowseComp score rises to 78.4 and the WideSearch metrics also improve. The Kimi team compares these values with GPT 5.2, Claude 4.5, Gemini 3 Pro, and DeepSeek V3, and K2.5 shows the highest scores among the listed models on these specific agentic suites.

On vision and video benchmarks, K2.5 also reports high scores: 78.5 on MMMU Pro and 86.6 on VideoMMMU. The model performs well on OmniDocBench, OCRBench, WorldVQA, and other document and scene understanding tasks. These results suggest that the MoonViT encoder and long context training are effective for real-world multimodal problems, such as reading complex documents and reasoning over video.

On coding benchmarks, it scores 76.8 on SWE Bench Verified, 50.7 on SWE Bench Pro, 73.0 on SWE Bench Multilingual, 50.8 on Terminal Bench 2.0, and 85.0 on LiveCodeBench v6. These numbers place K2.5 among the strongest open source coding models reported on these tasks.

On long context language benchmarks, K2.5 reaches 61.0 on LongBench V2 and 70.0 on AA LCR under standard evaluation settings. On reasoning benchmarks, it scores high on AIME 2025, HMMT 2025 February, GPQA Diamond, and MMLU Pro when used in thinking mode.

Key Takeaways

- Mixture of Experts at trillion scale: Kimi K2.5 uses a Mixture of Experts architecture with 1T total parameters and 32B active parameters per token, 61 layers, 384 experts, and a 256K context length, optimized for long multimodal and tool-heavy workflows.

- Native multimodal training with MoonViT: The model integrates a MoonViT vision encoder of about 400M parameters and is trained on about 15T mixed vision and text tokens, so images, documents, and language are handled in a single unified backbone.

- Parallel Agent Swarm with PARL: Agent Swarm, trained with Parallel Agent Reinforcement Learning, can coordinate up to 100 sub agents and up to 1,500 tool calls per task, giving about 4.5 times faster execution than a single agent on wide search tasks.

- Strong benchmark results in coding, vision, and agents: K2.5 reports 76.8 on SWE Bench Verified, 78.5 on MMMU Pro, 86.6 on VideoMMMU, 50.2 on HLE Full with tools, and 74.9 on BrowseComp, matching or exceeding the listed closed models on several agentic and multimodal suites.
