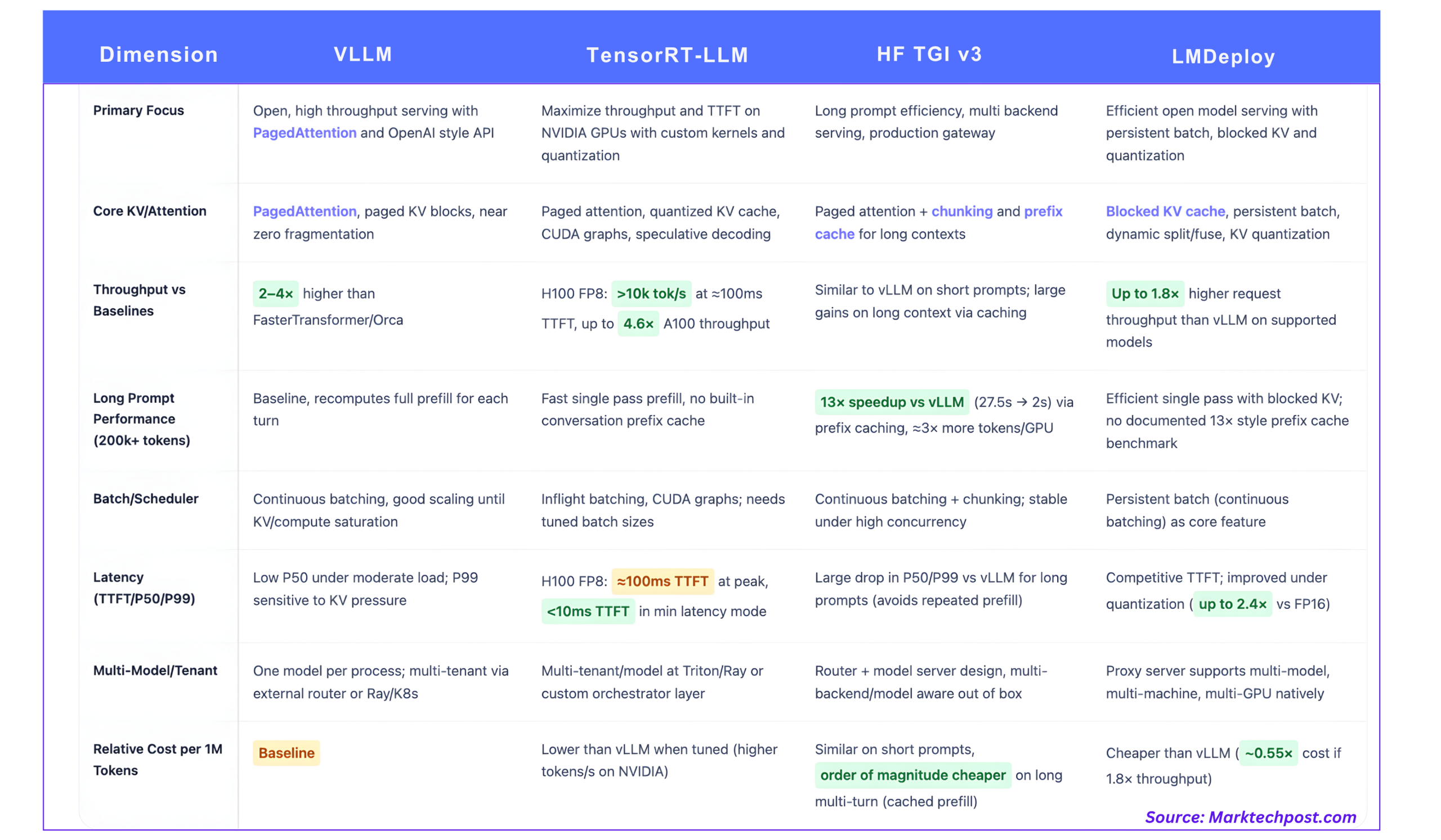

vllm vs tensort-llm vs hf tgi vs lmdeplobhishe, deep technology comparison for generating LLM detection

llm production is now a programming problem, not generate() loop. For real jobs, the choice of stack is up to you tokens per second, tail latencyand finally The cost is in one million tokens For the given fields of GPU.

This comparison focuses on the 4 most widely used stocks:

- vllm

- Nvidia tensort-llm

- Kissing Faces Under Text (TGI V3)

- Fill him up

1

Great idea

VLLM is built around Be informedAn implementation of attention that treats the KV cache as virtual memory is taken rather than having a single sequential pin.

Instead of providing one large KV circuit per request, vllm:

- Divides the KV cache into blocks of fixed size

- It stores a block table that contains logical tokens in physical blocks

- Blocks are shared between sequences wherever computers are started

This reduces external fragmentation and allows the application to program many of the same data in the same vram.

Interruption and latency

VLLM develops the release by 2-4× On top of programs like Fastertransformer and ORCA in the same way, with great benefits for long sequences.

Key supplier properties:

- An ongoing encounter (Also called Fright Batching) merges incoming requests into existing GPU batches instead of waiting for scheduled batch windows.

- In typical conversational tasks, usage scales almost linearly with cocurring recurrences until the KV memory or compute is full.

- The P50 Latenct remains relatively low, but the P99 can expose at once to the lines for a long time or the KV Memory is strong, especially for heavy queries.

vllm reveals an Opelai compatible http api and it integrates well with Ray Khonzani and other orchestrators, which is why it is widely used as an open source.

KV and Multi Tenant

- PenfatTattention gives near zero kv waste and dynamic sharing within and across applications.

- Each VLLM process is active one modelMulti-model and multi-model setups are often built with external routers or API Gateway followers in most VLLM scenarios.

2. Tensorrt-LLM, High-end hardware on NVIDIA GPUS

Great idea

Tensorrt-LLM is a Nvidia Library optimized for their GPUS. The library provides custom attention processing, trigger binding, KV grounding, grounding in FP4 and IT4, and imaginary decoration.

It is tightly integrated into Nvidia Hardware, including FP8 tensor cores in hopper and blackwell.

Performance measurement

NVIDIA test H100 VS A100 A100 is the most concrete public reference:

- For H100 with FP8, Tensorrt-LLM reaches more than 10,000 tokens / s In Peak Foot for 64 similar requestswith ~ 100 ms the time of the first sign.

- The H100 FP8 reaches up to 4.6 × Top Up and 4.4 × Quick Start Token Latency than a100 in the same models.

For latency critical modes:

- Tensorrt-LLM on H100 can drive TTFT less than 10 ms In order of batch 1, at the cost of the lower output.

These numbers are the model and exact design, but they give the power they have.

FURTIll vs Conmode

Tensorrt-llm extends both categories:

- FILFIll benefits from the advanced management of FP8 Attention and Tensor parallelism

- Explore benefits from cuda graphs, assumed transforms, scaled instruments and KV, and kernel fusion

The result is very high tokens for various input and output lengths, especially when the engine is programmed with that batch profile.

KV and Multi Tenant

Tensort-LLM offers:

- KV cache With adjustable layout

- Long sequence support, KV reuse and reload

- Passionate awakening and the importance of strategic planning

Nvidia both do this with ray based or triton orchestration patterns based on multi intern curtains. Support for multiple models is done at the orchestrator level, not within a single instance of tensorrt-LLM.

3

Great idea

Textration Generation Indepecce (TGI) is a Rust and Python based stack that adds:

- Http and grpc apis

- Continuous cleaning schedule

- Recognition and autosculaling hooks

- Pluggable Bacsends, including VLLM-style engines, Tensorrt-LLM, and other instances

Version 3 focuses on several faster processing Wrapping and prefix prefix.

Long Prompt Benchmark vs vllm

The TGI V3 documentation provides a clear benchmark:

- With more and more heights 200,000 tokensinterview response that takes 27.5 s in vllm can be moved approx 2 s in TGI v3.

- This is reported as 13 × Suppep in that work.

- The TGI V3 is capable of processing approx 3 × more tokens in the same GPU memory by reducing memory footprint and exploiting chunking and caching.

The machine is:

- TGI maintains the context of the original discussion at Prefix name saverso the next conversion only pays for the increasing tokens

- The cache is to look up the order cell soldiersrelated to the neglect of correct naming

This is the intended use of tasks where the Prompts are very long and are reused across pipelines, for example pipelines and analytic summaries.

The construction and behavior of latency

Key elements:

- Wrapping uppromoting a very long time divided into manageable parts of KV and planning

- Prefix prefixdata structure to allocate a long rotating context

- An ongoing encounterIncoming requests join sequential batches

- PeantaThention and installed kernels All GPU Back

For short chat style operations, throughput and latency are in the same ballpark as vllm. In the old, fixed state, both P50 and P99 Latency Improve by an order of magnitude because the engine avoids repeated Reventer.

Multiple regressions and multiple models

TGI is designed as Router Plus Model Server of construction. It can:

- Routing applications across multiple models and graphics

- Different Target Backends, for example TonsRort-LLM on H100 Plus CPU or small GPU for low traffic

This makes it good as a middle serving tier in most rental sites.

4. LMDEARK, turbom with blocked KV and power rage

Great idea

LMDEDH from the Interlm Ecosystem Interlm is a compression and service tool for LLMS, focused around He's a fan engine. It focuses on:

- A high pass request is in effect

- Blocked KV cache

- Continuous bankruptcy (continuous discrimination)

- Instrumentation and KV saver

Related to pass vs vllm

The project is:

- 'LMDEPH YELVERWENI TO PREP 1.8 × Top Application Prep', with support from persistent batch, block KV, dynamic split and fuse, tensor matching and optimized ceda kernels.

KV, size and latency

LMDEDH includes:

- Blocked KV cachewhich is like KV taken, which helps to pack more sequences in vram

- Support for KV Cache Racheusually int8 or IT4, to cut KV memory and bandwidth

- The only way to get power is to use 4 bit awq

- A benchmarking integration that reports token throughput, token usage request, and token initialization latency

This makes LMDEARK attractive when you want to use large open models like interlm or Qwen on mid-range GPUs with aggressive compression while keeping good tokens.

Multiple model submissions

LMDEDH provides a proxy server You can manage:

- Multiple model submissions

- More machines, more GPU setups

- The concept of selection is to select models based on the metadata of the application

So Architactaly sits closer to TGI than one engine.

What should you use there?

- If you want high saturation and very low TTFT on nvidia gpus

- Tensorrt-llm the main choice

- It uses FP8 with low precision, low conch and decoration thought to compress tokens/s and keep TTFT below 100 ms with high turrency and below 10 ms with low conch

- If you are controlled by long arguments with reuse, like rag on big situations

- TGI v3 strong default

- Its a prefix saver and chunking ake ake 3 × Token capacity and 13 × lower bottom than the vllm in published long front benches, without additional configuration

- If you want an open, simple engine with basic functionality and an Opelai style API

- vllm remains a standard cover

- PenfatThention and continuous movement do 2-4 × fast there are old stacks at the same time, and it comes together cleanly with ray and k.

- If you target open models like Interllm or QWWEN with aggressive value with Multi Model Proving

- Fill him up it's okay

- Blocked KV saver, continuous betting and Int8 or Int4 KV Up to 1.8 × Higher Application Speed than VLLM on supported models, with the router layer installed

In fact, many Dev teams integrate these systems, for example using Tensorrt-LLM for high-volume analysis, TGI v3 for long-term analysis, VLLM or LMDEPWERY for LEARNING AND MODELING. The key is to synchronize the transfer, latency tails, and KV behavior with the actual token transfer to your traffic, then the cost of each counter in the measured tokens.

Progress

- vllm / petatatuntion

- Tensorrt-LLM performance and overview

- HF HF Tolerance Index (TGI V3) Longitudinal Behavior

- Lmdeploy / turbomind

Michal Sutter is a data scientist with a Master of Science in Data Science from the University of PADOVA. With a strong foundation in statistical analysis, machine learning, and data engineering, Mikhali excels at turning complex data into actionable findings.

Follow Marktechpost: Add us as a favorite source on Google.