Google DeepMind's WeatherNext 2 Uses Functional Generative Networks for 8x Faster Probabilistic Weather Forecasts

Google DeepMind has launched WeatherNext 2, an AI-based global weather forecasting system that now powers improved forecasts in Google Search, Gemini, Pixel Weather, and Google Maps Platform, with Google Maps integration to follow. It is built around a new Functional Generative Network (FGN) architecture that delivers probabilistic forecasts that are faster, more accurate, and higher resolution than the previous WeatherNext system, and it is offered as an early access model on Vertex AI.

From deterministic grids to functional ensembles

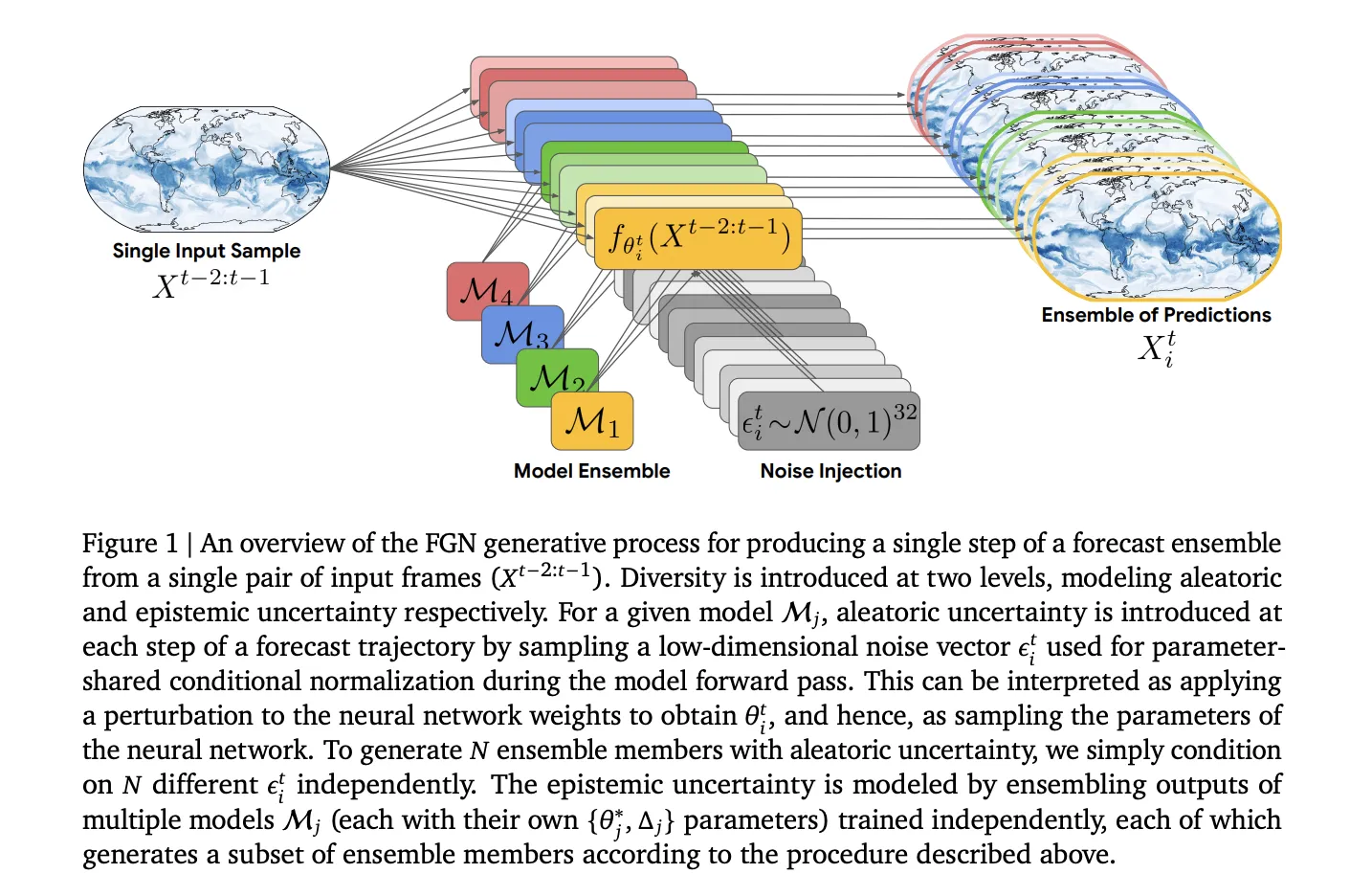

At the core of WeatherNext 2 is the FGN model. Instead of predicting a single deterministic future field, the model directly samples from the joint distribution over 15-day global weather trajectories. The model learns to approximate 𝑝(𝑋ₜ | 𝑋ₜ₋₂:ₜ₋₁), the distribution of the next weather state 𝑋ₜ given the two preceding states, and runs autoregressively from two initial analysis frames to generate an ensemble of trajectories.
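The autoregressive sampling loop can be sketched as follows. This is a toy illustration, not the FGN implementation: `toy_step` is a hypothetical stand-in for the learned sampler, states are short lists of floats rather than global fields, and the step count of 60 assumes 15 days at the 6 hour timestep.

```python
import random

def toy_step(prev2, prev1, rng):
    """Hypothetical stand-in for one model step: sample X_t given (X_{t-2}, X_{t-1})."""
    eps = rng.gauss(0.0, 1.0)  # one noise draw shared by the whole state
    return [1.6 * b - 0.7 * a + 0.1 * eps for a, b in zip(prev2, prev1)]

def rollout(x0, x1, n_steps, rng):
    """Run the sampler autoregressively from two initial analysis frames."""
    traj = [x0, x1]
    for _ in range(n_steps):
        traj.append(toy_step(traj[-2], traj[-1], rng))
    return traj[2:]  # keep forecast states only

def sample_ensemble(x0, x1, n_members, n_steps, seed=0):
    """Each member is an independent rollout from the same initial frames."""
    rng = random.Random(seed)
    return [rollout(x0, x1, n_steps, rng) for _ in range(n_members)]

STEPS_15_DAYS = 15 * 24 // 6  # 60 six-hour steps
ensemble = sample_ensemble([0.0, 1.0, 2.0], [0.1, 1.1, 2.1],
                           n_members=4, n_steps=STEPS_15_DAYS)
```

Every member starts from the same two frames, so all differences between trajectories come from the noise drawn at each step.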

Architecturally, each FGN instance follows a layout similar to the GenCast denoiser. A graph neural network encoder and decoder map between the regular grid and a latent representation defined on a 6-times-refined icosahedral mesh. A graph transformer operates on the mesh nodes. The production FGN used for WeatherNext 2 is larger than GenCast, with about 180 million parameters per model seed, a latent dimension of 768, and 24 transformer layers, compared with 57 million parameters, a latent dimension of 512, and 16 layers for GenCast. FGN also runs at a 6 hour timestep, where GenCast used 12 hour steps.

Modeling epistemic and aleatoric uncertainty in function space

FGN separates epistemic and aleatoric uncertainty in a way that is practical for large-scale forecasting. Epistemic uncertainty, which arises from limited data and imperfect learning, is handled by a deep ensemble of 4 independently initialized and trained models. Each model seed uses the architecture described above, and the system generates an equal number of ensemble members from each seed when producing forecasts.
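A minimal sketch of the deep-ensemble idea, with members split evenly across seeds. The toy models here are hypothetical stand-ins: each "trained model" is reduced to a seed-dependent bias, which is only meant to illustrate disagreement between independently trained networks.

```python
import random

def make_toy_model(seed):
    """Hypothetical stand-in for one independently initialized and trained model."""
    bias = random.Random(seed).gauss(0.0, 0.2)  # differs per seed: epistemic disagreement

    def forecast(x, noise_rng):
        # The noise term plays the role of per-sample (aleatoric) variability.
        return x + bias + noise_rng.gauss(0.0, 1.0)

    return forecast

def deep_ensemble_forecast(x, n_members, n_seeds=4, noise_seed=0):
    """Draw the same number of ensemble members from every model seed."""
    if n_members % n_seeds:
        raise ValueError("n_members must be divisible by n_seeds")
    models = [make_toy_model(s) for s in range(n_seeds)]
    noise_rng = random.Random(noise_seed)
    per_seed = n_members // n_seeds
    return [(s, model(x, noise_rng))
            for s, model in enumerate(models)
            for _ in range(per_seed)]

members = deep_ensemble_forecast(10.0, n_members=8)  # list of (seed index, value)
```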

Aleatoric uncertainty, which represents the inherent variability of the atmosphere and unresolved processes, is handled with functional perturbations. At each forecast step, the model samples a 32-dimensional Gaussian noise vector 𝜖ₜ and feeds it through parameter-shared conditional normalization layers inside the network. This effectively samples a new set of weights 𝜃ₜ for that forward pass. Different values of 𝜖ₜ give different but dynamically coherent forecasts from the same initial condition, so ensemble members look like distinct plausible weather outcomes, not independent noise at each grid point.
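To make the mechanism concrete, here is a toy sketch of a noise-conditioned normalization layer. It assumes, purely for illustration, that the scale and shift are linear functions of the noise vector. The actual FGN layers are not specified in this article, and `make_cond_norm` and `forward` are hypothetical names.

```python
import math
import random

NOISE_DIM = 32  # noise dimension reported in the article

def layer_norm(h):
    """Plain layer normalization over one node's feature vector."""
    mean = sum(h) / len(h)
    var = sum((v - mean) ** 2 for v in h) / len(h)
    return [(v - mean) / math.sqrt(var + 1e-6) for v in h]

def make_cond_norm(n_features, rng):
    """Hypothetical conditional norm: scale and shift are linear in eps (an assumption)."""
    w_scale = [[rng.gauss(0, 0.1) for _ in range(NOISE_DIM)] for _ in range(n_features)]
    w_shift = [[rng.gauss(0, 0.1) for _ in range(NOISE_DIM)] for _ in range(n_features)]

    def apply(h, eps):
        out = []
        for k, v in enumerate(layer_norm(h)):
            scale = 1.0 + sum(w * e for w, e in zip(w_scale[k], eps))
            shift = sum(w * e for w, e in zip(w_shift[k], eps))
            out.append(scale * v + shift)
        return out

    return apply

def forward(nodes, eps, cond_norm):
    """Apply the same eps-modulated layer to every mesh node (toy forward pass)."""
    return [cond_norm(h, eps) for h in nodes]

rng = random.Random(0)
cond_norm = make_cond_norm(n_features=4, rng=rng)
nodes = [[rng.gauss(0, 1) for _ in range(4)] for _ in range(5)]  # 5 toy mesh nodes
eps_a = [rng.gauss(0, 1) for _ in range(NOISE_DIM)]
eps_b = [rng.gauss(0, 1) for _ in range(NOISE_DIM)]
member_a = forward(nodes, eps_a, cond_norm)  # one ensemble member
member_b = forward(nodes, eps_b, cond_norm)  # a different, equally valid member
```

Because one noise vector modulates the shared layer parameters, a new draw changes the function applied at every node at once. That is the sense in which the perturbation acts on the network's function, not on individual grid points.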

Training on marginals with CRPS, learning joint structure

A key design choice is that FGN is trained only on per-location, per-variable marginals, not on explicit multivariate targets. The model uses the Continuous Ranked Probability Score (CRPS) as its training loss, computed with a fair estimator on ensemble samples at each grid point and averaged over variables, levels, and time. CRPS rewards sharp, well-calibrated predictive distributions for each scalar quantity. In the later stages of training, the authors introduce short autoregressive rollouts of up to 8 steps and backpropagate through the rollout, which improves long-range stability but is not strictly required for good joint behavior.
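For reference, the standard fair CRPS estimator for an ensemble of M members can be written in a few lines. This is the textbook estimator, shown as a sketch. The helper `mean_fair_crps` and its flat list layout are illustrative assumptions, and any weighting the authors apply when averaging is not reproduced here.

```python
def fair_crps(members, obs):
    """Fair CRPS estimate for one scalar from M >= 2 ensemble members.

    mean|x_i - y|  -  sum_{i,j}|x_i - x_j| / (2 * M * (M - 1))
    """
    m = len(members)
    if m < 2:
        raise ValueError("fair CRPS needs at least two members")
    skill = sum(abs(x - obs) for x in members) / m
    spread = sum(abs(xi - xj) for xi in members for xj in members) / (2 * m * (m - 1))
    return skill - spread

def mean_fair_crps(ensemble, target):
    """Average the per-point score; ensemble[i][k] is member i at point/variable k."""
    n = len(target)
    return sum(fair_crps([member[k] for member in ensemble], target[k])
               for k in range(n)) / n

score = mean_fair_crps([[1.0, 0.0], [3.0, 0.0]], [2.0, 1.0])
```

The score is computed independently for each scalar, which is why this loss alone places no explicit constraint on correlations between grid points or variables.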

Despite using only marginal supervision, the low-dimensional noise and shared functional perturbations force the model to learn realistic joint structure. With a single 32-dimensional noise vector influencing the entire global field, the easiest way to reduce CRPS everywhere is to encode spatial and cross-variable correlations in that noise, rather than independent fluctuations. Experiments confirm that the resulting ensembles capture realistic regional aggregates and derived quantities.

Measured gains over GenCast and traditional baselines

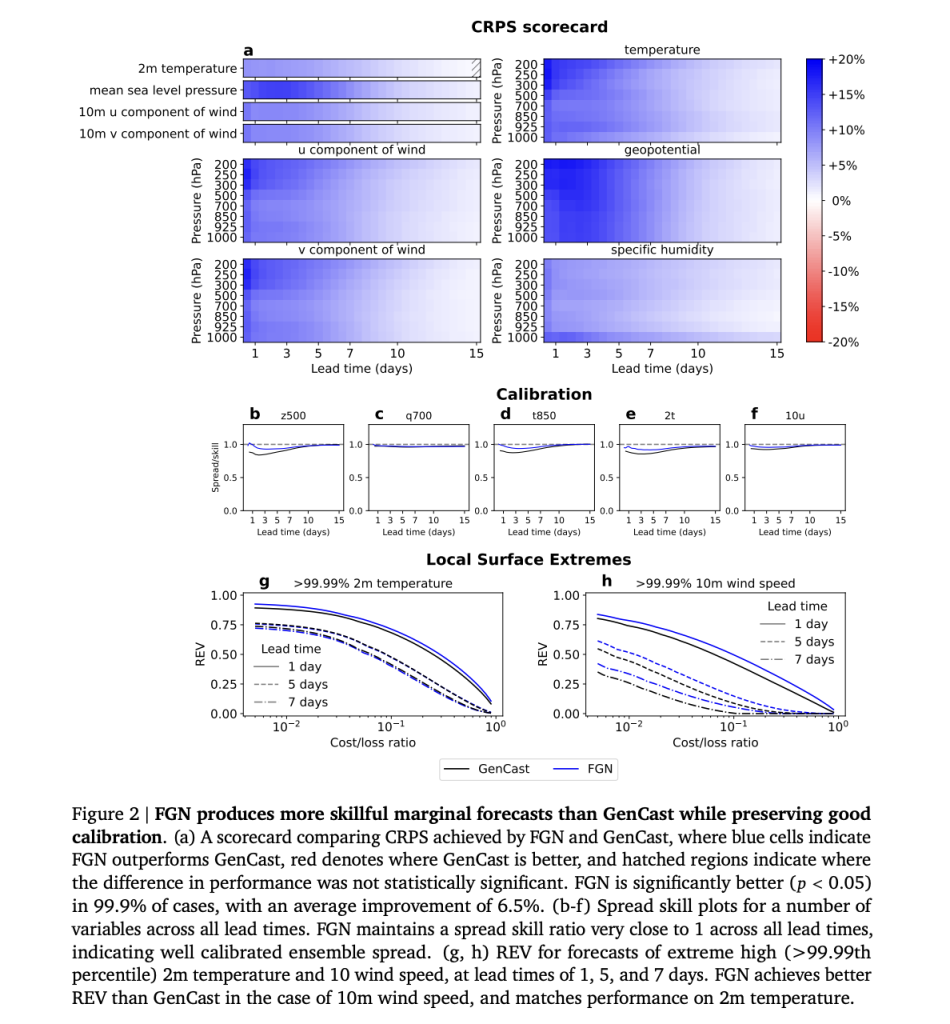

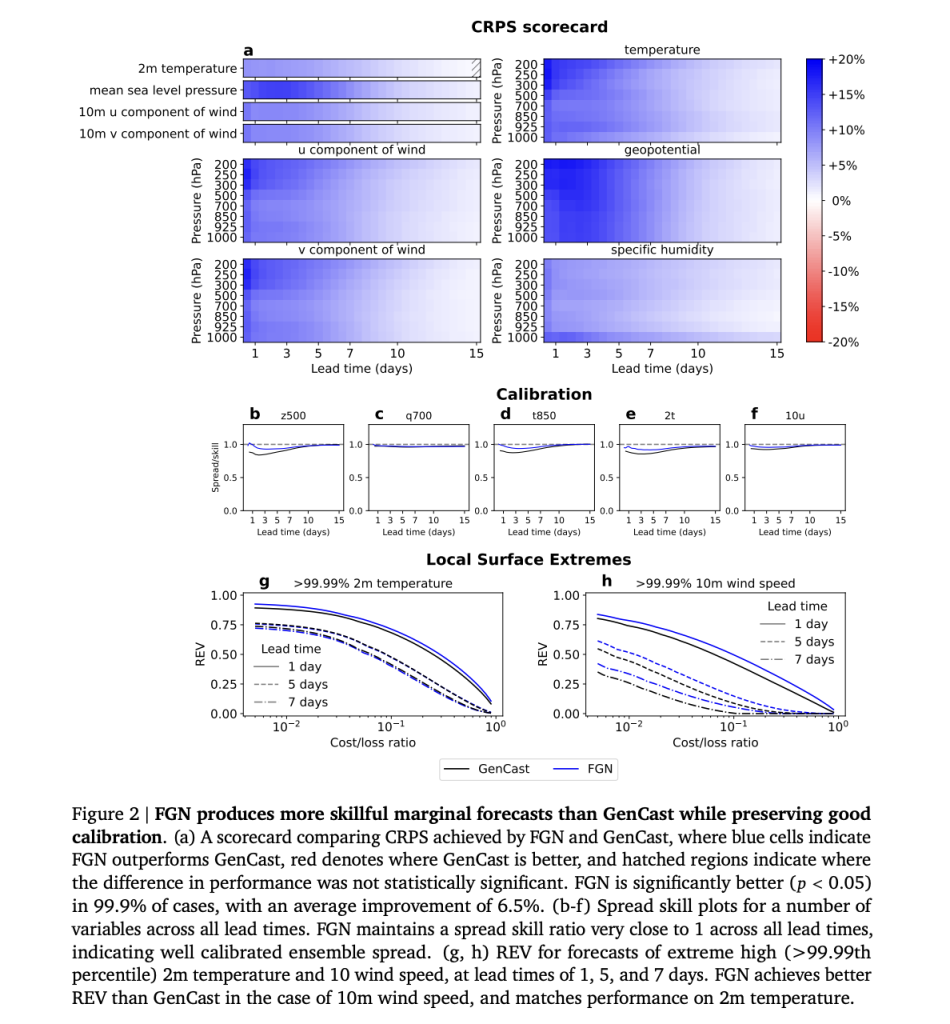

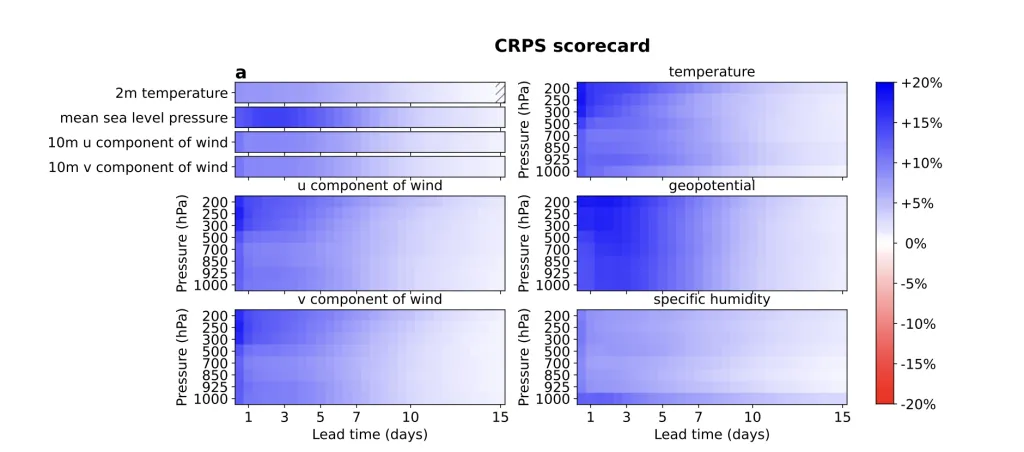

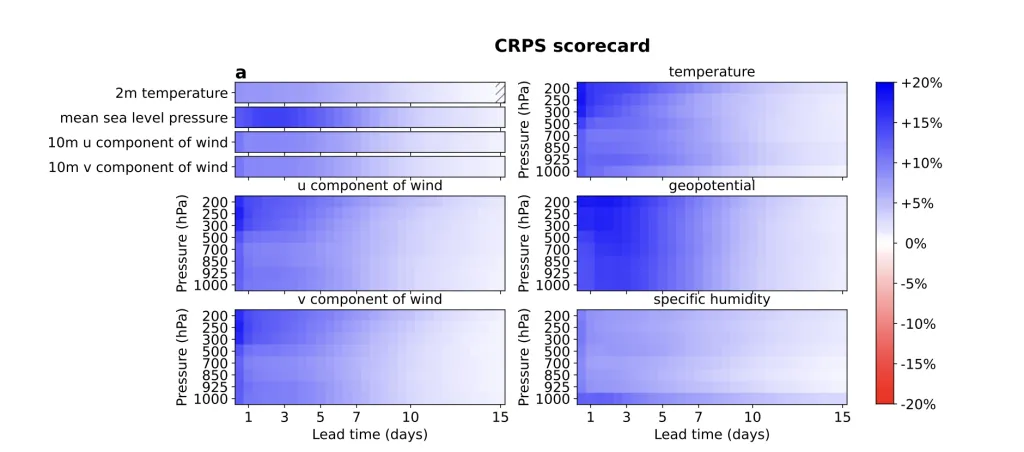

On marginal metrics, WeatherNext 2's FGN ensemble clearly outperforms GenCast. FGN achieves better CRPS in 99.9% of cases with statistically significant gains, with an average improvement of about 6.5% and maximum gains near 18% in some cases. Ensemble mean root mean squared error also improves while maintaining a good spread-skill relationship, which indicates that ensemble spread is consistent with forecast error out to 15 days.
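One common way to check the spread-skill relationship is the ratio of ensemble spread to the RMSE of the ensemble mean, where values near 1 suggest a well-calibrated ensemble. The sketch below uses a widely used definition with a finite-ensemble correction factor. Whether the paper uses exactly this form is an assumption.

```python
import math

def spread_skill_ratio(forecasts, observations):
    """forecasts[c] holds the ensemble member values for case c."""
    n = len(observations)
    m = len(forecasts[0])
    mse = 0.0
    mean_var = 0.0
    for members, obs in zip(forecasts, observations):
        mean = sum(members) / m
        mse += (mean - obs) ** 2 / n                                    # ensemble-mean error
        mean_var += sum((x - mean) ** 2 for x in members) / (m - 1) / n  # unbiased variance
    rmse = math.sqrt(mse)
    spread = math.sqrt(mean_var)
    # sqrt((M + 1) / M) corrects for the finite ensemble size.
    return math.sqrt((m + 1) / m) * spread / rmse

ratio = spread_skill_ratio([[0.0, 2.0], [1.0, 3.0]], [2.0, 1.0])
```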

To evaluate joint structure, the research team computes CRPS after pooling over spatial windows at different scales, and on derived quantities such as 10 meter wind speed and the difference in geopotential height between 300 hPa and 500 hPa. FGN improves both average-pooled and max-pooled CRPS relative to GenCast, indicating that it better models region-level aggregates and multivariate relationships, not just pointwise values.
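The pooled evaluation can be illustrated with a small sketch: pool each ensemble member and the target over the same windows, then score the pooled values. The 1D non-overlapping windows and helper names below are simplifying assumptions for illustration, since the real evaluation pools over 2D spatial regions.

```python
import math

def fair_crps(members, obs):
    """Fair CRPS estimate for one scalar from M >= 2 ensemble members."""
    m = len(members)
    skill = sum(abs(x - obs) for x in members) / m
    spread = sum(abs(xi - xj) for xi in members for xj in members) / (2 * m * (m - 1))
    return skill - spread

def mean(values):
    return sum(values) / len(values)

def pool(field, window, reduce_fn):
    """Non-overlapping 1D pooling, a toy stand-in for 2D spatial windows."""
    return [reduce_fn(field[i:i + window])
            for i in range(0, len(field) - window + 1, window)]

def pooled_crps(ensemble, target, window, reduce_fn):
    """Pool every member and the target identically, then average the CRPS."""
    pooled_members = [pool(member, window, reduce_fn) for member in ensemble]
    pooled_target = pool(target, window, reduce_fn)
    return mean([fair_crps([pm[k] for pm in pooled_members], pooled_target[k])
                 for k in range(len(pooled_target))])

def wind_speed(u, v):
    """Derived quantity: wind speed from its two horizontal components."""
    return math.hypot(u, v)

ensemble = [[0.0, 2.0, 1.0, 1.0], [2.0, 0.0, 3.0, 3.0]]
target = [1.0, 1.0, 2.0, 2.0]
avg_pooled = pooled_crps(ensemble, target, window=2, reduce_fn=mean)
max_pooled = pooled_crps(ensemble, target, window=2, reduce_fn=max)
```

In this toy case the window averages of both members are scored perfectly while the window maxima are not, which shows why pooled scores are sensitive to how values co-vary inside a region.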

Tropical cyclone tracking is a particularly important use case. Using an external tracker, the research team computes ensemble mean track errors. FGN achieves position errors that correspond to roughly one extra day of useful forecast skill compared with GenCast. Even when restricted to a 12 hour timestep version, FGN still outperforms GenCast beyond 2-day lead times. Relative economic value analysis on track probability fields also favors FGN over GenCast across a range of cost-loss ratios, which matters for decision makers planning evacuations and asset protection.
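Relative economic value is usually defined through the classic cost-loss model: a user pays a cost C to protect, or suffers a loss L if an unprotected event occurs. The sketch below implements that standard formulation with expenses normalized by L. It is an illustration of the metric, not the paper's evaluation code, and the function name and arguments are assumptions.

```python
def relative_economic_value(hit_rate, false_alarm_rate, base_rate, cost_loss_ratio):
    """REV = (E_climate - E_forecast) / (E_climate - E_perfect), expenses in units of L."""
    a, s = cost_loss_ratio, base_rate
    if not (0.0 < a < 1.0 and 0.0 < s < 1.0):
        raise ValueError("cost_loss_ratio and base_rate must lie strictly in (0, 1)")
    e_climate = min(a, s)   # best of always protecting or never protecting
    e_perfect = s * a       # protect only when the event occurs
    e_forecast = (false_alarm_rate * (1 - s) * a  # false alarms cost C
                  + hit_rate * s * a              # hits cost C
                  + (1 - hit_rate) * s)           # misses cost L
    return (e_climate - e_forecast) / (e_climate - e_perfect)

rev_perfect = relative_economic_value(1.0, 0.0, base_rate=0.1, cost_loss_ratio=0.2)
rev_never_warn = relative_economic_value(0.0, 0.0, base_rate=0.1, cost_loss_ratio=0.2)
```

A value of 1 matches a perfect forecast and 0 matches using climatology alone. Sweeping `cost_loss_ratio` shows which users benefit from a given forecast system.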

Key takeaways

- Functional Generative Network core: WeatherNext 2 is built on the Functional Generative Network, a graph transformer ensemble that predicts full 15-day global trajectories on a 0.25° grid with a 6 hour timestep, with atmospheric variables on 13 pressure levels.

- Explicit modeling of epistemic and aleatoric uncertainty: The system combines 4 independently trained FGN seeds for epistemic uncertainty with a shared 32-dimensional noise input that perturbs the network for aleatoric uncertainty, so each sample is a coherent alternative forecast, not pointwise noise.

- Trained on marginals, improves joint structure: FGN is trained only on per-location marginals using the fair CRPS, yet it improves joint spatial and cross-variable structure over the previous WeatherNext Gen model, including on derived variables such as 10 meter wind speed and geopotential thickness.

- Consistent accuracy gains over GenCast and WeatherNext Gen: WeatherNext 2 achieves better CRPS than the earlier GenCast-based WeatherNext model in 99.9% of evaluated combinations, with average CRPS gains of around 6.5 percent, along with better relative economic value for extreme event thresholds.


Michal Sutter is a data scientist with a Master of Science in Data Science from the University of Padova. With a strong foundation in statistical analysis, machine learning, and data engineering, Michal excels at turning complex data into actionable insights.
