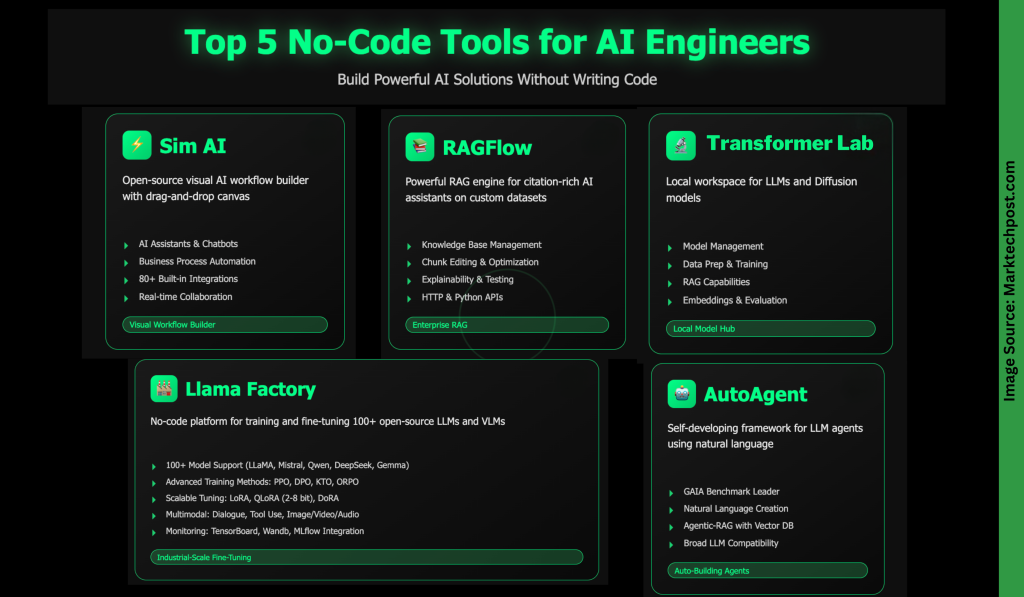

Top 5 No-Code Tools for AI Engineers/Developers

In today's AI-driven world, no-code tools are changing how people build and deploy intelligent apps. They empower anyone, whether or not they can code, to release solutions quickly and effectively. From building enterprise-grade systems to designing multi-agent workflows or working with hundreds of models, these platforms significantly reduce development time and effort. In this article, we will examine five no-code tools that make building AI solutions faster and more accessible to everyone.

Sim AI is an open-source platform for visually building and deploying AI agent workflows, no code required. Using its drag-and-drop canvas, you can connect AI models, APIs, databases, and business tools to create:

- AI assistants and chatbots: Agents that search the web, access calendars, send emails, and interact with business apps.

- Business process automation: Take over tasks such as data entry, report generation, customer support, and content creation.

- Data processing and analysis: Extract insights, analyze datasets, create reports, and sync data across systems.

- API integration workflows: Orchestrate complex logic, coordinate services, and automate event handling.

Key features:

- Visual canvas with "smart blocks" (AI, API, logic, output).

- Multiple triggers (chat, API, webhooks, schedules, Slack/GitHub events).

- Real-time team collaboration with permission controls.

- 80+ built-in integrations (AI models, communication tools, productivity apps, dev platforms, search services, and databases).

- MCP support for custom integrations.
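One way to picture the canvas model described above is as a trigger feeding a chain of blocks, each reading and extending a shared state. The sketch below is purely illustrative: `Block`, `Workflow`, and the three block functions are hypothetical names invented for this example, not Sim AI's API, and the "AI" block is a stand-in that calls no real model.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Block:
    """Hypothetical block: a name plus a function from state to state updates."""
    name: str
    run: Callable[[dict], dict]


@dataclass
class Workflow:
    """Hypothetical workflow: one trigger type and an ordered list of blocks."""
    trigger: str
    blocks: List[Block] = field(default_factory=list)

    def execute(self, payload: dict) -> dict:
        # Start from the trigger payload, then let each block add to the state.
        state = {"trigger": self.trigger, **payload}
        for block in self.blocks:
            state.update(block.run(state))
        return state


def fake_model(state: dict) -> dict:
    # Stand-in for an AI block: "summarizes" by keeping the first sentence.
    return {"summary": state["text"].split(".")[0] + "."}


def route(state: dict) -> dict:
    # Logic block: choose a channel based on a keyword.
    urgent = "outage" in state["text"].lower()
    return {"channel": "urgent" if urgent else "general"}


def format_output(state: dict) -> dict:
    # Output block: build the final message from earlier results.
    return {"message": f"[{state['channel']}] {state['summary']}"}


workflow = Workflow(
    trigger="webhook",
    blocks=[
        Block("ai", fake_model),
        Block("logic", route),
        Block("output", format_output),
    ],
)

result = workflow.execute(
    {"text": "Outage reported in region A. Engineers are investigating."}
)
print(result["message"])
```

In a visual builder the same wiring is done by dragging blocks onto the canvas; the code only shows the data flow such a canvas represents.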

Deployment options:

- Cloud-hosted (managed infrastructure with scaling and monitoring).

- Self-hosted (via Docker, with local model support for data privacy).

RAGFlow is a powerful retrieval-augmented generation (RAG) engine that helps you build grounded, citation-rich AI assistants on top of your own data. It runs on x86 CPUs or NVIDIA GPUs (with optional ARM builds) and provides full or slim Docker images for quick deployment. After starting a local server, you can connect an LLM, via API or a local runtime such as Ollama, to handle chat, embedding, or image-to-text tasks. RAGFlow supports most popular language models and lets you set defaults or customize models for each assistant.

Key capabilities include:

- Knowledge base management: Upload and parse files (PDF, Word, CSV, images, slides, and more) into datasets, select an embedding model, and organize content for retrieval.

- Chunk editing and optimization: Inspect parsed chunks, add keywords, or manually correct content to improve search accuracy.

- AI chat assistants: Create chats linked to one or more knowledge bases, configure fallback answers, and fine-tune prompts or model settings.

- Explainability and testing: Use built-in tools to verify retrieval quality, monitor performance, and view real-time citations.

- Integration and extensibility: Leverage HTTP and Python APIs for app integration, with an optional sandbox for safe code execution within chats.
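To make the chunk, retrieve, and cite steps above concrete, here is a minimal, library-free sketch. It is not RAGFlow's API: the function names, the 12-word chunk size, and the sample documents are assumptions for illustration, and simple word overlap stands in for the embedding-based search a real RAG engine would use.

```python
import re
from collections import Counter


def chunk(text, source, size=12):
    """Split text into fixed-size word windows, remembering the source file."""
    words = text.split()
    return [
        {"source": source, "chunk_id": i // size, "text": " ".join(words[i:i + size])}
        for i in range(0, len(words), size)
    ]


def tokens(text):
    """Lowercased word counts, ignoring punctuation."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query, chunks, top_k=1):
    """Rank chunks by word overlap with the query (stand-in for vector search)."""
    q = tokens(query)
    scored = [(sum((q & tokens(c["text"])).values()), c) for c in chunks]
    scored = [(score, c) for score, c in scored if score > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [c for _, c in scored[:top_k]]


# Made-up documents standing in for an uploaded knowledge base.
docs = {
    "refunds.txt": "Refunds are issued within 14 days of purchase. "
                   "Contact support to start a refund.",
    "shipping.txt": "Standard shipping takes 3 to 5 business days. "
                    "Express shipping takes 1 day.",
}

knowledge_base = [c for name, text in docs.items() for c in chunk(text, name)]
hits = retrieve("How long does shipping take?", knowledge_base)

# Each retrieved passage carries a citation back to its file and chunk.
for hit in hits:
    print(f"{hit['text']} [{hit['source']}#{hit['chunk_id']}]")
```

Keeping the source and chunk index alongside each passage is what makes citation-backed answers possible: the assistant can point to exactly where a statement came from.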

Transformer Lab is a free, open-source workspace for large language models (LLMs) and diffusion models, designed to run on your local machine (including Apple M-series Macs) or in the cloud. It lets you download, chat with, and evaluate LLMs, generate images using diffusion models, and compute embeddings, all from one flexible environment.

Key capabilities include:

- Model management: Download and interact with LLMs, or generate images using state-of-the-art diffusion models.

- Data preparation and training: Create datasets and fine-tune or train models, including support for RLHF and preference tuning.

- Retrieval-augmented generation (RAG): Use your own documents to power intelligent, grounded conversations.

- Embeddings and evaluation: Calculate embeddings and evaluate model performance across different inference engines.

- Extensibility and community: Create plugins, contribute to the core app, and take part in the active Discord community.
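As a small illustration of what working with embeddings involves, the sketch below compares vectors by cosine similarity. The four-dimensional vectors are made up for the example; in practice they would come from an embedding model, and nothing here reflects Transformer Lab's own interface.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # Treat a zero vector as unrelated to everything.
    return dot / (norm_a * norm_b)


# Made-up vectors standing in for real model embeddings.
embeddings = {
    "cat": [0.9, 0.1, 0.0, 0.2],
    "kitten": [0.8, 0.2, 0.1, 0.3],
    "invoice": [0.0, 0.9, 0.7, 0.1],
}

query = "cat"
ranked = sorted(
    (name for name in embeddings if name != query),
    key=lambda name: cosine_similarity(embeddings[query], embeddings[name]),
    reverse=True,
)
print(ranked)
```

Items whose vectors point in similar directions rank first, which is the basic operation behind semantic search and retrieval.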

LLaMA-Factory is a powerful no-code platform for training and fine-tuning open-source large language models (LLMs) and vision-language models (VLMs). It supports more than 100 models, multimodal fine-tuning, advanced optimization algorithms, and scalable resource configurations. Designed for researchers and practitioners, it provides tools for pre-training, supervised fine-tuning, reward modeling, and reinforcement learning methods such as PPO and DPO, along with simple experiment tracking and fast inference.

Key highlights include:

- Wide model support: Works with LLaMA, Mistral, Qwen, DeepSeek, Gemma, ChatGLM, Phi, Mixtral-MoE, and many others.

- Training methods: Supports pre-training, multimodal SFT, reward modeling, PPO, DPO, KTO, ORPO, and more.

- Flexible tuning options: Full-parameter tuning, freeze-tuning, LoRA, QLoRA (2-8 bit), DoRA, and other resource-efficient methods.

- Advanced algorithms & optimizations: Includes GaLore, BAdam, APOLLO, Muon, FlashAttention-2, RoPE scaling, NEFTune, rsLoRA, and others.

- Tasks & modalities: Handles dialogue, tool use, image/video/audio understanding, visual grounding, and more.

- Monitoring and inference: Integrates with LlamaBoard, TensorBoard, Wandb, MLflow, and SwanLab, and offers inference through OpenAI-style APIs, a Gradio UI, or vLLM/SGLang backends.

- Flexible infrastructure: Compatible with PyTorch, Hugging Face Transformers, DeepSpeed, and BitsAndBytes, and supports CPU/GPU setups with memory-efficient quantization.
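To see why LoRA-style methods from the list above are considered resource-efficient, a little arithmetic helps. LoRA freezes a weight matrix of shape `d_out x d_in` and trains two small matrices of shapes `d_out x r` and `r x d_in` instead. The sketch below counts trainable parameters for one layer; the 4096 dimension and rank 8 are illustrative assumptions, not LLaMA-Factory defaults.

```python
def full_params(d_out: int, d_in: int) -> int:
    """Trainable parameters when the whole weight matrix is updated."""
    return d_out * d_in


def lora_params(d_out: int, d_in: int, rank: int) -> int:
    """Trainable parameters for a rank-r update B @ A,
    where B is (d_out x rank) and A is (rank x d_in)."""
    return rank * (d_out + d_in)


d = 4096   # assumed hidden size, for illustration only
rank = 8   # assumed LoRA rank, for illustration only

full = full_params(d, d)
lora = lora_params(d, d, rank)
ratio = lora / full

print(f"full fine-tuning: {full:,} parameters")
print(f"LoRA (r={rank}):  {lora:,} parameters")
print(f"fraction trained: {ratio:.4%}")
```

For this single layer the adapter trains 1/256 of the parameters a full update would, which is where most of the memory savings come from; QLoRA additionally stores the frozen weights in quantized form.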

AutoAgent is a fully automated, self-developing framework that lets you create and deploy powerful LLM agents using only natural language. Designed to simplify complex workflows, it allows you to build, customize, and run intelligent tools and assistants without writing a single line of code.

Key features include:

- High performance: Achieves top-tier results on the GAIA benchmark, rivaling advanced deep research agents.

- Agent & workflow creation: Build tools, agents, and workflows with simple natural language prompts, no code required.

- Agentic-RAG with a native vector database: Comes with a self-managing vector database, offering better retrieval than traditional solutions such as LangChain.

- Broad LLM compatibility: Integrates out of the box with leading models and providers such as OpenAI, Anthropic, DeepSeek, vLLM, Grok, Hugging Face, and more.

- Flexible interaction modes: Supports both function-calling and ReAct-style reasoning for a variety of use cases.

- Lightweight and extensible: A personal AI assistant that is easy to customize and extend while remaining efficient.
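The function-calling mode mentioned above follows a common pattern: the model emits a structured request naming a tool and its arguments, and the runtime looks up and runs the matching function. The sketch below shows that pattern only. It is not AutoAgent's API; `tool`, `dispatch`, and `scripted_model` are hypothetical names, and the "model" is a hard-coded stand-in rather than a real LLM.

```python
import json

TOOLS = {}


def tool(fn):
    """Register a plain Python function as a callable tool."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def add(a: float, b: float) -> float:
    return a + b


@tool
def word_count(text: str) -> int:
    return len(text.split())


def scripted_model(user_request: str) -> str:
    """Stand-in for an LLM: returns a JSON tool call instead of free text."""
    if "sum" in user_request.lower():
        return json.dumps({"name": "add", "arguments": {"a": 2, "b": 3}})
    return json.dumps({"name": "word_count", "arguments": {"text": user_request}})


def dispatch(call_json: str):
    """Parse the model's tool call and run the matching registered function."""
    call = json.loads(call_json)
    fn = TOOLS.get(call["name"])
    if fn is None:
        raise ValueError(f"unknown tool: {call['name']}")
    return fn(**call["arguments"])


answer = dispatch(scripted_model("What is the sum of 2 and 3?"))
print(answer)
```

A no-code agent framework hides this loop behind natural language prompts, but the registry-plus-dispatch structure is what lets an agent act on a request rather than only describe an answer.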

I am a civil engineering graduate (2022) from Jamia Millia Islamia, New Delhi, and I have a keen interest in data science, especially neural networks and their applications in various areas.