Supertone Releases Supertonic v3: An On-Device Text-to-Speech Model With 31-Language Support, Fewer Reading Failures, and Expression Tags

Supertone has released Supertonic 3, the third generation of its on-device, ONNX-based text-to-speech system. Supertonic 3 ships with support for 31 languages, improved reading accuracy, fewer repetition and skipping failures, and public ONNX assets that remain compatible with v2. It is positioned as lightning-fast, on-device, multilingual, and accurate TTS.

What Changed from v2 to v3

Compared to Supertonic 2, Supertonic 3 reduces repetition and skipping failures, improves speaker similarity on the shared language set, and expands language coverage from 5 to 31 languages. Version 2 supports English, Korean, Spanish, Portuguese, and French. Version 3 adds Japanese, Arabic, Bulgarian, Czech, Danish, German, Greek, Estonian, Finnish, Croatian, Hungarian, Indonesian, Italian, Lithuanian, Latvian, Dutch, Polish, Romanian, Russian, Slovak, Slovenian, Swedish, Turkish, Ukrainian, and Vietnamese, for a total of 31 ISO language codes. There is also a special `na` code that serves as a fallback for text whose language is unknown or outside the supported set.

The model has grown modestly to accommodate more languages. At about 99M parameters across the public ONNX assets, Supertonic 3 is still significantly smaller than open TTS systems in the 0.7B to 2B range. The small model size is a practical advantage for download size, startup time, and on-device inference. The update also brings the total on-disk size of the public ONNX assets to 404 MB. In addition, Supertone has recently introduced a system called Voice Builder, which allows developers to create custom, native TTS models from their voice recordings.
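As a rough sanity check on those two figures, the sketch below relates parameter count to on-disk size. It assumes the weights are stored as 32-bit floats (4 bytes each), which the release notes quoted here do not state; under that assumption the raw weights would account for most of the reported 404 MB.

```python
# Back-of-the-envelope check: parameter count vs. on-disk size.
# ASSUMPTION: weights are stored as float32 (4 bytes per parameter).
# The source only reports ~99M parameters and a 404 MB total.
PARAMS = 99_000_000
BYTES_PER_PARAM = 4  # float32 (assumed, not confirmed by the source)
REPORTED_MB = 404


def weights_size_mb(params: int, bytes_per_param: int) -> float:
    """Return the raw weight size in megabytes (1 MB = 10**6 bytes)."""
    return params * bytes_per_param / 1e6


estimated_mb = weights_size_mb(PARAMS, BYTES_PER_PARAM)
print(f"Estimated weights: {estimated_mb:.0f} MB (reported total: {REPORTED_MB} MB)")
```

If the assets were instead stored at lower precision, the same function would give a smaller estimate, so the match is suggestive rather than conclusive.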

One new capability in v3 that wasn't in v2 is expression tag support. Supertonic 3 supports simple expression tags written inline in the input text.

Architecture and Runtime

The core architecture carries over from previous versions: a speech autoencoder that encodes waveforms into continuous latent representations, a flow-matching text-to-latent module that maps text to audio features, and a duration predictor that controls natural timing. Flow matching is a generative modeling technique that learns a vector field to transform a simple distribution into a target distribution. It samples faster than diffusion models because it needs fewer computational steps, which is why Supertonic can produce usable output in just 2 inference steps. To further refine the output, v3 includes Length-Aware Rotary Position Embedding (LARoPE) for improved text-speech alignment and uses a Self-Purifying Flow Matching method during training to remain robust against noisy data labels.
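To make the "few steps" point concrete, here is a toy sketch of flow-matching sampling with Euler integration. It is not Supertonic's model: the learned, text-conditioned network is replaced by a closed-form velocity field for straight-line paths toward a single fixed target, and all names (`velocity`, `euler_sample`) are hypothetical.

```python
# Toy illustration of few-step flow-matching sampling with Euler integration.
# ASSUMPTION: this is NOT Supertonic's model. The learned network is replaced
# by the closed-form velocity for straight-line paths toward one fixed target.
from typing import List


def velocity(x: List[float], t: float, target: List[float]) -> List[float]:
    """Velocity that carries x along a straight line, reaching target at t=1."""
    return [(x1 - xi) / (1.0 - t) for xi, x1 in zip(x, target)]


def euler_sample(x0: List[float], target: List[float], steps: int) -> List[float]:
    """Integrate dx/dt = velocity(x, t) from t=0 to t=1 using Euler steps."""
    x = list(x0)
    dt = 1.0 / steps
    for k in range(steps):
        t = k * dt  # t stays below 1, so the division in velocity() is safe
        v = velocity(x, t, target)
        x = [xi + dt * vi for xi, vi in zip(x, v)]
    return x


noise = [0.8, -1.3, 0.1]     # stand-in for a sample from the simple distribution
latent = [0.25, 0.5, -0.75]  # stand-in for a target latent
print(euler_sample(noise, latent, steps=2))
```

Because the paths in this toy are exactly straight, two Euler steps land on the target up to floating-point rounding. A trained model only approximates such paths, so a small step count trades some accuracy for speed.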

In terms of performance, Supertonic 3 runs fast on CPU, even when compared with larger baselines measured on an A100 GPU, and uses very little memory. It doesn't require a GPU, which makes local, browser, and resource-constrained deployments much easier.

Reading Accuracy

For all languages measured, Supertonic 3 stays within a WER/CER range that is competitive with larger TTS models such as VoxCPM2, while keeping a lightweight on-device footprint. WER (Word Error Rate) and CER (Character Error Rate) are standard metrics for TTS reading accuracy: you synthesize a passage, run ASR over the output, and compare the transcription to the original text. CER is used for languages that do not have clear word boundaries; others use WER. The system's efficiency shows most clearly on extreme edge hardware: it reaches an average RTF of 0.3x on an Onyx Boox Go 6 (an E-ink e-reader) in airplane mode. In addition, the ecosystem has been expanded to include Flutter (with macOS support), .NET 9, and Go, while web usage relies on onnxruntime-web for purely client-side operation.
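The metrics above can be sketched in a few lines. This is a minimal illustration, not the tooling used for the published benchmark: it assumes whitespace tokenization for WER, a non-empty reference, and the common definition of real-time factor (RTF) as processing time divided by audio duration. The function names are hypothetical.

```python
# Minimal WER / CER / RTF helpers (illustrative; not the benchmark's tooling).
# ASSUMPTIONS: whitespace tokenization for WER, non-empty references, and
# RTF defined as processing time divided by generated audio duration.
from typing import Sequence


def edit_distance(ref: Sequence[str], hyp: Sequence[str]) -> int:
    """Levenshtein distance between two token sequences."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]


def wer(reference: str, hypothesis: str) -> float:
    """Word error rate: word-level edits divided by reference word count."""
    ref_words = reference.split()
    return edit_distance(ref_words, hypothesis.split()) / len(ref_words)


def cer(reference: str, hypothesis: str) -> float:
    """Character error rate: character-level edits divided by reference length."""
    return edit_distance(list(reference), list(hypothesis)) / len(reference)


def rtf(processing_seconds: float, audio_seconds: float) -> float:
    """Real-time factor: values below 1 mean faster than real time."""
    return processing_seconds / audio_seconds


# The ASR transcript has one substituted word and one dropped word.
print(wer("the cat sat on the mat", "the cat sit on mat"))
# 10 seconds of audio generated in 3 seconds of compute.
print(rtf(3.0, 10.0))
```

Under this definition, an RTF of 0.3x means ten seconds of speech take about three seconds to generate, and a system described as N times faster than real time has an RTF of roughly 1/N.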

Text Normalization

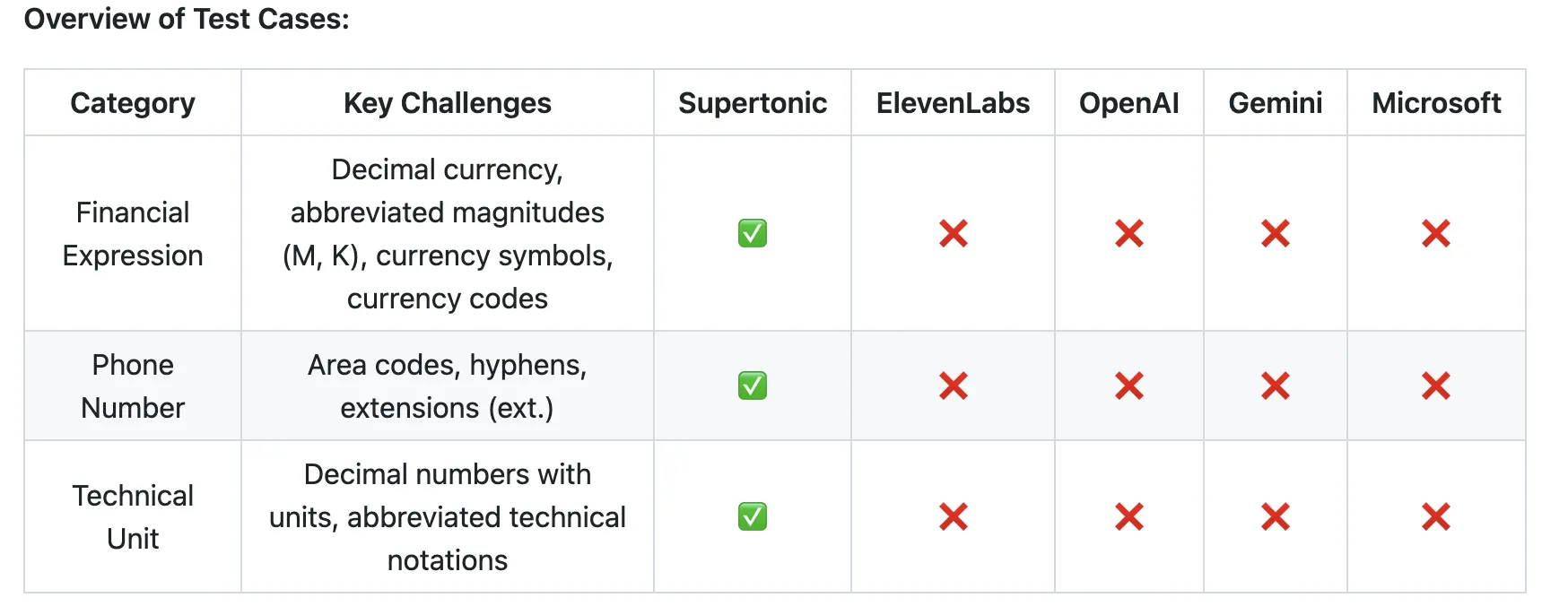

A distinguishing feature carried forward from v2 is built-in text normalization. Supertonic handles complex forms (financial expressions such as $5.2M, phone numbers with area codes and extensions such as (212) 555-0142 ext. 402, time and date formats such as 4:45 PM on Wed, Apr 3, 2024, and technical units like 2.3h and 30kph) without any pre-processing pipeline or phonetic annotations. The financial expression “$5.2M” should be read as “five point two million dollars,” and “$450K” as “four hundred fifty thousand dollars.” All four competing systems failed this. The technical unit “2.3h” should be read as “two point three hours” and “30kph” as “thirty kilometers per hour.” All four competitors failed this as well. Competing systems tested include ElevenLabs Flash v2.5, OpenAI TTS-1, Gemini 2.5 Flash TTS, and Microsoft.
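For contrast, the sketch below shows the kind of rule-based pre-processing that a TTS front end without built-in normalization would typically need. The rules and names (`MAGNITUDES`, `UNITS`, `normalize`) are hypothetical and say nothing about how Supertonic handles these cases internally. The sketch also leaves digits as digits; spelling them out would be a further stage.

```python
# Toy rule-based normalizer showing the kind of pre-processing a TTS front end
# would otherwise need. ASSUMPTION: hypothetical rules for illustration only;
# this does not describe Supertonic's internals.
import re

MAGNITUDES = {"K": "thousand", "M": "million", "B": "billion"}
UNITS = {"h": "hours", "kph": "kilometers per hour"}


def normalize(text: str) -> str:
    """Expand currency magnitudes and a couple of unit suffixes."""
    # "$5.2M" -> "5.2 million dollars"
    text = re.sub(
        r"\$(\d+(?:\.\d+)?)([KMB])\b",
        lambda m: f"{m.group(1)} {MAGNITUDES[m.group(2)]} dollars",
        text,
    )
    # "30kph" -> "30 kilometers per hour", "2.3h" -> "2.3 hours"
    text = re.sub(
        r"\b(\d+(?:\.\d+)?)(kph|h)\b",
        lambda m: f"{m.group(1)} {UNITS[m.group(2)]}",
        text,
    )
    return text


print(normalize("Revenue hit $5.2M after a 2.3h ride at 30kph."))
```

Even this small rule set has gaps (singular units, other currencies, ambiguous suffixes), which is the maintenance burden that built-in normalization is meant to remove.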

Getting Started

Install the Python SDK with `pip install supertonic`. On first run, the SDK downloads the model assets from Hugging Face by default. A minimal example:

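```python
from supertonic import TTS

# Create the engine; auto_download=True fetches the model assets on first run
tts = TTS(auto_download=True)

# Look up a voice style by name
style = tts.get_voice_style(voice_name="M1")

text = "A gentle breeze moved through the open window while everyone listened to the story."

# Synthesize English speech; returns the waveform and its duration
wav, duration = tts.synthesize(text, voice_style=style, lang="en")

# Write the waveform to disk
tts.save_audio(wav, "output.wav")

print(f"Generated {duration:.2f}s of audio")
```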

Key Takeaways

- Supertonic 3 extends language support from 5 (v2) to 31 languages, growing from 66M to ~99M parameters with a total ONNX asset size of 404 MB

- New in v3: expression tags

- The public ONNX interface is compatible with v2, so existing integrations can upgrade without changing the inference code

- Reading accuracy is benchmarked against VoxCPM2; v3 stays within a competitive WER/CER range while being much smaller

- RTF/throughput numbers specific to v3 have not been published; the 167× faster than real-time figure is a v2 benchmark and should not be assumed to hold for v3

- Native WAV output is 16-bit, ensuring reliable audio for engineering applications
