NVIDIA and AI Mistral AI Bring 10x Faster Detection of Mistral 3 Family to GB200 NVL72 GPU

Nvidia announced today a significant expansion of its strategic partnership with arbitrary AI. This collaboration coincides with the release of the new open 3 model family, marking a pivotal moment in which Hardware acceleration and open line modeling combined to redefine performance benchmarks.

This collaboration is a great way for Speence to infence speed: new models now run up to 10x Faster on NVIDIA GB200 NVL72 Systems compare to previous H200 plans H200. This breakthrough unlocks the efficiency revealed by Enterprise-grade AI, which promises to solve the latency and expensive bottlenecks that have plagued large-scale deployments of high-end models.

Standard jump: 10x faster on Blackwell

As Enterprise needs move from simple Chatbots to highly interactive, contextual agents, the usability of anfence has become a critical bottleneck. The collaboration between Nvidia and Mistral AI looks at this head by expanding the family of 3 exclusively for Nvidia Blackwell architecture.

Where AI AI systems must deliver a solid user experience (UX) with a fair standard, the NVIDIA GB200 NVL72 achieves 10x higher performance than the previous H200 H200. This is not just an advantage in green speed; it translates into higher energy efficiency. The program passes 5,000,000 tokens per megawatt (MW) second At interactive levels users are 40 tokens per second.

In data centers facing energy problems, this efficiency gain is important as performance is encouraged. This productivity leap ensures a low token cost while maintaining the high throughput required for real-time applications.

New New Family 3

The engine driving this performance is the newly released three-year-old family. This suite of models delivers industry-leading accuracy, efficiency, and customization capabilities, covering the spectrum from big data operations to enterprise devices.

Most Inappropriate 3: Flagship MOE

On the position that resides in the 3rd largest setting, the sparse model is multi-sparse and multi-language hybrid.

- Absolute parameters: 675 billion

- Active parameters: 41 billion

- Context Window: 256K tokens

Trained on NVIDIA HIPPER GPUS, MISTRALENG CORT GRACH is designed to handle complex computing tasks, providing compatibility with high-tier closed models while maintaining the flexibility of open weight.

3: Power is dense at the edges

Compatibility is a great model 3 Series, a suite of compact, compact, high-performance models designed for speed and flexibility.

- Sizes: 3B, 8B, and 14b parameters.

- Miscellaneous: Base, order, and consult each size (nine models).

- Context Window: 256k tokens fall on the board.

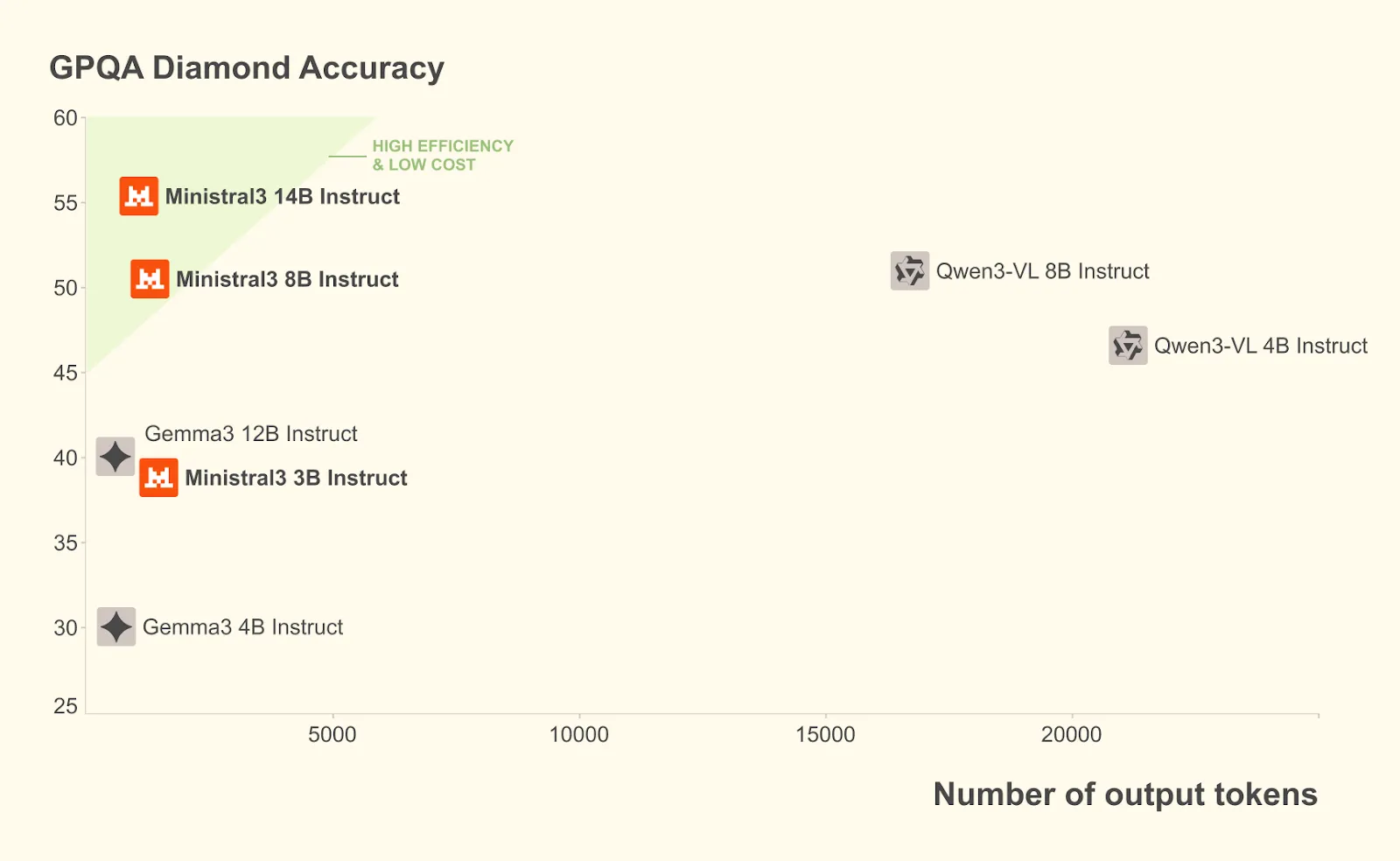

This page 3 Series of Excel Exclackark bent for GPQA by using 100 small tokens while high delivery:

Great Engineering behind Speed: A comprehensive stack for efficiency

The “10x” performance claim is driven by a whole bunch of optimizations made by poor developers with Nvidia. The teams have adopted a “consolidation of capital” approach, combining hardware capabilities with the optimization of the construction model.

Tensort-LLM Broad Scholar (Broad-EP)

To fully exploit the large scale of the GB200 NVL72, Nvidia was recognized for its extensive technology within Tensorrt-LLM. This technology provides Moe Groupgemm Kernels, expert distribution, and load balancing.

Obviously, EP-width exploits NVL72's NVL72 parallel memory and nvlink fabric. It is highly resistant to structural breakdown in all major pores. For example, Critical Risk-3 uses approximately 128 sensors per layer, about half as many as comparable models like the Deepseek-R1. In addition to this difference, the wide eP enables the model to realize the high-bandwidth, low-latency, non-blocking advantages of the nvlink fabric, ensuring that the large size of the nvlink does not cause communication bottlenecks.

Native NVFP4 size

One of the most important technical improvements in this release is the support for NVFP4, a benchmarking format that belongs to Blackwell's invention.

With 3 major personalities, developers can use a fully optimized NVFP4 test environment offline using the Open-Source LLM-Compressor Library.

This approach reduces the computational and memory costs while maintaining accuracy. It achieves high FPFP4 NVFP4 high FP8 features for measurement and manipulation of measurement to control the error of using values. The recipe specifically targets the MOE instruments while keeping other parts with original accuracy, allowing the model to seamlessly transfer to the GB200 NVL72 with minimal loss of accuracy.

Disabled serving with nvidia dynamo

You're a big boss 3 using Nvidia Dynamo, a low-end framework, so you don't have to protect your READS and STORAGE.

In a traditional setup, the initial phase (fast processing of the input) and the call phase (producing the output) compete for resources. By matching the ratio and blocking these sections, the dynamo greatly strengthens the performance of long context work areas, such as 8k / 1K output editing. This ensures high response even when using the 256K model mode window.

From cloud to edge: Ninist 3 performance

Efficiency efforts extend beyond large data centers. Recognizing the growing demand for local AI, the Nininyi Laminyile series will increase the deployment of EDDLEs, providing flexibility for various needs.

RTX and jetson acceleration

Many Mini models are optimized for platforms such as NVIDIA GEFORE RTX AI PC and NVIDIA Jetson Robotic Modules.

- RTX 5090: The Ministerund-3B Variants can reach the permissible speed of 385 tokens per second On NVIDIA RTX 5090 GPU. This brings World-Class AI performance to local PCs, enabling fast iTeation and Great Data privacy.

- Jetson Thor: For robotics and ai, developers can use vllm container on nvidia jetson thor. The Ministring-3-3b-Model I'm teaching reaches 52 tokens per second for one consensus, scaling up to 273 tokens per second for the 8th meeting.

Comprehensive framework support

Nvidia has partnered with the open source community to ensure these models are used everywhere.

- Llama.cpp and ollama: Nvidia collaborated with these popular frameworks to ensure fast scaling and low latency for local development.

- Slang: Nvidia teamed up with Suglang to create a big personality implementation that supported both non-conformist and assumed assumptions.

- vllm: Nvidia worked with vllm to increase support for kernel integration, including prediction compression (Eagle), Blackwell support, and similar extensions.

Production – Good for Nvidia Nim

To move business adoption, new models will be available Nvidia nim microservices.

Illegal Three and Tutorial 14B are currently available through the NVIDIA API catalog and API preview. Soon, enterprise developers will be able to use Nvidia Nim microservices. This provides a leading, production-ready solution that allows enterprises to deploy the Mitral 3 host family with minimal setup on any GPU-accelerated infrastructure.

This discovery ensures that the specific “10X” benefit of the GB200 NVL72 can be realized in production environments without complex engineering, democratizing access to Frontier class intelligence.

Conclusion: A new measure of open intelligence

The release of the NVIDIA-accelerated open source model 3 represents a giant leap for AI in the open source community. By offering front-end performance under an open source license, and paying for it with a powerful hardware stack, it's true that nvidia meets developers where they are.

From the large scale of the GB200 NVL72 using wide-ep and NVFP4, to the depth of the lunar mass on the RTX 5090, this interactive work brings a method of great intelligence. With future functions such as multitoken prediction (MTP) and Eagle-3 expected to force more performance, the Mistral 3 host family is poised to become the foundation of the next generation of AI Applications.

Available for testing!

If you are a developer who wants to document these performance benefits, you can download the Mistral 3 models directly from the surface immersion or testing of the uncaught versions that are sent to build structures.

Check out the models at Face binding. You can get details Company blog and A technical / engineering blog.

Thanks to the Nvidia AI team for thought leadership / resources for this article. Nvidia AI team supported this content / article.

Jean-Marc is the company's AI business manager. He leads and accelerates the development of powerful AI solutions and started a computer company founded in 2006. He is a featured speaker at AI conferences and has an MBA from Stanford.

Follow Marktechpost: Add us as a favorite source on Google.