Google's Gemini 3 Pro turns sparse MoE and a 1M token context into an efficient engine for multimodal agentic tasks

How do we move from language models that only answer prompts to systems that can reason over million-token contexts, understand real-world signals, and act reliably as agents? Google recently released the Gemini 3 family, with Gemini 3 Pro positioned as a major step up from conventional AI systems. Google describes Gemini 3 as its most intelligent model to date, with state-of-the-art reasoning, strong multimodal understanding, and improved agentic and coding capabilities. Gemini 3 Pro launches in preview and is already wired into the Gemini app, AI Mode in Search, the Gemini API, Vertex AI and the Google Antigravity agentic development platform.

Sparse MoE transformer with a 1M token context

Gemini 3 Pro is a sparse mixture-of-experts (MoE) transformer model with native multimodal support for text, image, audio and video inputs. Sparse MoE layers route each token to a small subset of experts, so the model can scale its total parameter count without paying a proportional compute cost per token. Inputs can reach 1M tokens and the model can generate up to 64k output tokens, which matters for codebases, long documents or multi-hour transcripts. The model is trained from scratch rather than as a fine-tune of Gemini 2.5.
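To make the routing idea concrete, here is a minimal sketch of top-k expert gating in plain Python. Everything in it is an illustrative assumption: the function names `top_k_route` and `moe_layer` are hypothetical, the router is a simple dot product, k=2, and the "experts" are toy scaling functions. The article does not describe Gemini 3 Pro's expert count, router design or k, so this shows the general technique only, not Google's implementation.

```python
import math
import random


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_route(logits, k):
    """Pick the k highest-scoring experts and renormalize their gate weights."""
    chosen = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    gates = softmax([logits[i] for i in chosen])
    return list(zip(chosen, gates))


def moe_layer(token, router, experts, k=2):
    """Route one token vector through only k of the available experts.

    router: one weight vector per expert (hypothetical dot-product router).
    experts: callables mapping a vector to a vector of the same length.
    """
    logits = [sum(w * x for w, x in zip(row, token)) for row in router]
    routes = top_k_route(logits, k)
    out = [0.0] * len(token)
    for idx, gate in routes:
        expert_out = experts[idx](token)  # only the selected experts are evaluated
        out = [o + gate * e for o, e in zip(out, expert_out)]
    return out, routes


if __name__ == "__main__":
    random.seed(0)
    dim, num_experts = 4, 8
    router = [[random.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(num_experts)]
    calls = []

    def make_expert(i):
        def expert(x):
            calls.append(i)  # record which experts actually ran
            return [(i + 1) * v for v in x]
        return expert

    experts = [make_expert(i) for i in range(num_experts)]
    out, routes = moe_layer([0.5, -1.0, 0.25, 2.0], router, experts, k=2)
    print("routes:", routes)
    print("experts evaluated:", calls, "of", num_experts)
```

Because only k experts run per token, per-token compute grows with k rather than with the total number of experts, which is the trade-off that lets a sparse model hold many more parameters than it activates for any single token.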

Training data includes large-scale web text, code in many languages, images, audio and video, combined with licensed data, user interaction data and synthetic data. Post-training uses multimodal instruction tuning and reinforcement learning from human and critic feedback to improve multi-step reasoning, problem-solving and theorem-proving behavior. The system runs on Google Tensor Processing Units (TPUs), with training implemented in JAX and ML Pathways.

Reasoning benchmarks and academic-style tasks

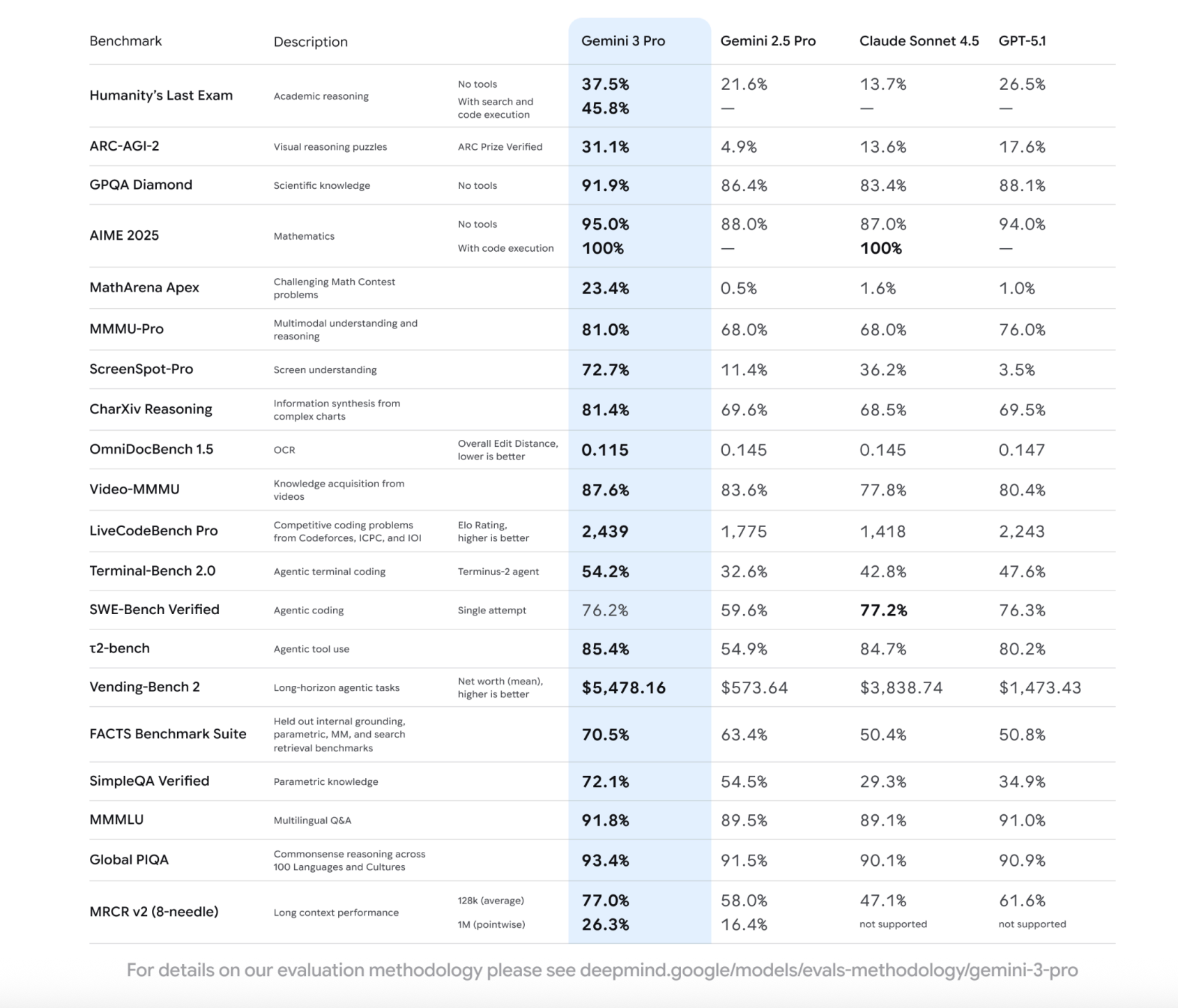

On academic benchmarks, Gemini 3 Pro clearly outperforms Gemini 2.5 Pro and competes with other frontier models such as GPT 5.1 and Claude Sonnet 4.5. On Humanity's Last Exam, which aggregates PhD-level questions across many scientific and humanities domains, Gemini 3 Pro scores 37.5 percent without tools, compared with 21.6 percent for Gemini 2.5 Pro, 26.5 percent for GPT 5.1 and 13.7 percent for Claude Sonnet 4.5. With search and code execution enabled, Gemini 3 Pro reaches 45.8 percent.

On ARC AGI 2 visual reasoning puzzles, Gemini 3 Pro reaches 31.1 percent, up from 4.9 percent for Gemini 2.5 Pro and ahead of GPT 5.1 at 17.6 percent. On scientific question answering with GPQA Diamond, Gemini 3 Pro reaches 91.9 percent, ahead of GPT 5.1 at 88.5 percent and Claude Sonnet 4.5 at 83.4 percent. In mathematics, the model reaches 95.0 percent on AIME 2025 without tools and 100.0 percent with code execution, while scoring 23.4 percent on MathArena Apex, a challenging competition-style benchmark.

Multimodal understanding and long-context behavior

Gemini 3 Pro is designed as a natively multimodal model rather than a text model with add-ons. On MMMU Pro, which measures multimodal reasoning across many university-level subjects, it scores 81.0 percent, compared with 68.0 percent for both Gemini 2.5 Pro and Claude Sonnet 4.5 and 76.0 percent for GPT 5.1. On Video MMMU, which tests knowledge acquisition from videos, Gemini 3 Pro reaches 87.6 percent, ahead of Gemini 2.5 Pro at 83.6 percent and the other models.

User interface and document understanding are also improved. On ScreenSpot Pro, a benchmark for locating elements on a screen, Gemini 3 Pro scores 72.7 percent, compared with 11.4 percent for Gemini 2.5 Pro and 36.2 percent for Claude Sonnet 4.5. On OmniDocBench 1.5, which reports an overall edit distance for OCR and structured document understanding (lower is better), Gemini 3 Pro reaches 0.115, the lowest of all models in the comparison table.
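An edit-distance score rewards outputs that need fewer character edits to match the reference, so 0.0 is a perfect transcription and lower is better. The sketch below computes a normalized Levenshtein distance and averages it over samples. The helper names are hypothetical, and the averaging in `overall_edit_distance` is an assumption for illustration; OmniDocBench's exact normalization and aggregation may differ.

```python
def levenshtein(a: str, b: str) -> int:
    """Classic dynamic-programming edit distance (insert, delete, substitute = 1)."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,          # delete ca
                            curr[j - 1] + 1,      # insert cb
                            prev[j - 1] + cost))  # substitute or match
        prev = curr
    return prev[-1]


def normalized_edit_distance(pred: str, ref: str) -> float:
    """Edit distance scaled to [0, 1]; 0.0 means an exact match."""
    if not pred and not ref:
        return 0.0
    return levenshtein(pred, ref) / max(len(pred), len(ref))


def overall_edit_distance(pairs):
    """Hypothetical aggregate: mean normalized distance over (prediction, reference) pairs."""
    return sum(normalized_edit_distance(p, r) for p, r in pairs) / len(pairs)


if __name__ == "__main__":
    print(levenshtein("kitten", "sitting"))
    print(round(normalized_edit_distance("kitten", "sitting"), 4))
```

Dividing by the longer string's length keeps each sample in [0, 1], so short and long documents contribute on the same scale to the average.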

For long context, Gemini 3 Pro was evaluated on MRCR v2 with 8-needle retrieval. At the 128k average setting it scores 77.0 percent, and at the 1M token setting it still reaches 26.3 percent, ahead of Gemini 2.5 Pro at 16.4 percent, while competing models do not support that context length in the published comparison.

Coding, agents and Google Antigravity

For software developers, the key story is coding and agentic behavior. Gemini 3 Pro tops the LMArena leaderboard with an Elo score of 1501 and reaches 1487 Elo on WebDev Arena, which evaluates web development tasks. On Terminal Bench 2.0, which tests an agent's ability to operate a computer through a terminal, it reaches 54.2 percent, ahead of GPT 5.1 at 47.6 percent, Claude Sonnet 4.5 at 42.8 percent and Gemini 2.5 Pro at 32.6 percent. On SWE Bench Verified, which measures single-attempt code changes across GitHub repositories, Gemini 3 Pro scores 76.2 percent, compared with 59.6 percent for Gemini 2.5 Pro, 76.3 percent for GPT 5.1 and 77.2 percent for Claude Sonnet 4.5.
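Arena Elo scores are only meaningful as differences between models on the same leaderboard, so the 1501 (LMArena) and 1487 (WebDev Arena) figures come from separate leaderboards and should not be compared with each other. The sketch below shows how a rating gap maps to an expected head-to-head score under the standard Elo logistic formula. LMArena fits its ratings with a Bradley-Terry model; treating the reported numbers as following the usual 400-point Elo scale is an assumption here, and the opponent ratings in the demo are hypothetical.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Expected score of A against B under the Elo logistic model (400-point scale)."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


if __name__ == "__main__":
    # Hypothetical opponent ratings, chosen only to illustrate how gaps translate.
    for opponent in (1501, 1480, 1450, 1401):
        print(opponent, round(expected_score(1501, opponent), 3))
```

Equal ratings give 0.5, and a 100-point gap corresponds to an expected score of about 0.64 for the higher-rated model, which is why gaps of a few points on an arena leaderboard indicate near-parity rather than a clear ranking.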

Gemini 3 Pro also performs well on τ2 Bench for tool use, at 85.4 percent, and on Vending Bench 2, which evaluates long-horizon business planning, where it generates a mean net worth of about 5,478 dollars, compared with 1,473.43 dollars for GPT 5.1.

These capabilities are exposed in Google Antigravity, Google's new agentic development platform. Antigravity combines Gemini 3 Pro with the Gemini 2.5 Computer Use model for browser control and the Nano Banana image model, so agents can plan, write code and verify results within a single workflow.

Key takeaways

- Gemini 3 Pro is a sparse mixture-of-experts transformer with native multimodal support and a 1M token context window, designed for large-scale reasoning over long inputs.

- The model shows a significant advantage over Gemini 2.5 Pro on difficult benchmarks such as Humanity's Last Exam, ARC AGI 2, GPQA Diamond and MathArena Apex, and is competitive with GPT 5.1 and Claude Sonnet 4.5.

- Gemini 3 Pro delivers strong multimodal performance on benchmarks such as MMMU Pro, Video MMMU, ScreenSpot Pro and OmniDocBench, which cover university-level questions, video understanding, screen understanding and complex document comprehension.

- Coding and agentic use cases are a major focus, with high scores on SWE Bench Verified, WebDev Arena, Terminal Bench 2.0 and tool-use and planning benchmarks such as τ2 Bench and Vending Bench 2.

Gemini 3 Pro is a visible expression of Google's push toward AGI, combining a sparse MoE architecture and a 1M token context with strong performance on ARC AGI 2, GPQA Diamond, MathArena Apex, MMMU Pro and WebDev Arena. Its focus on tool use and terminal and browser control, together with evaluation under Google's Frontier Safety Framework, positions it as an API-ready model for production agentic systems. Overall, Gemini 3 Pro is a benchmark-driven, agent-oriented response to the next phase of large-scale multimodal AI.


Max is an AI analyst at Marktechpost, based in Silicon Valley, who is actively shaping the future of technology. He teaches robotics at Brainvyne, fights spam with compreememail, and applies AI every day to translate complex technological advances into clear, understandable content.
