Alibaba Qwen Team Just Released FP8 Builds of Qwen3-Next-80B-A3B (Instruct and Thinking), an 80B-Total / 3B-Active Model

Alibaba's Qwen team recently released FP8-quantized checkpoints for its new Qwen3-Next-80B-A3B models in two post-training variants, Instruct and Thinking, aimed at high-throughput inference with ultra-long context and MoE efficiency. The FP8 repos mirror the BF16 releases but package "fine-grained FP8" weights (block size 128). The benchmarks in the cards remain those of the original BF16 models.

What's in the A3B stack

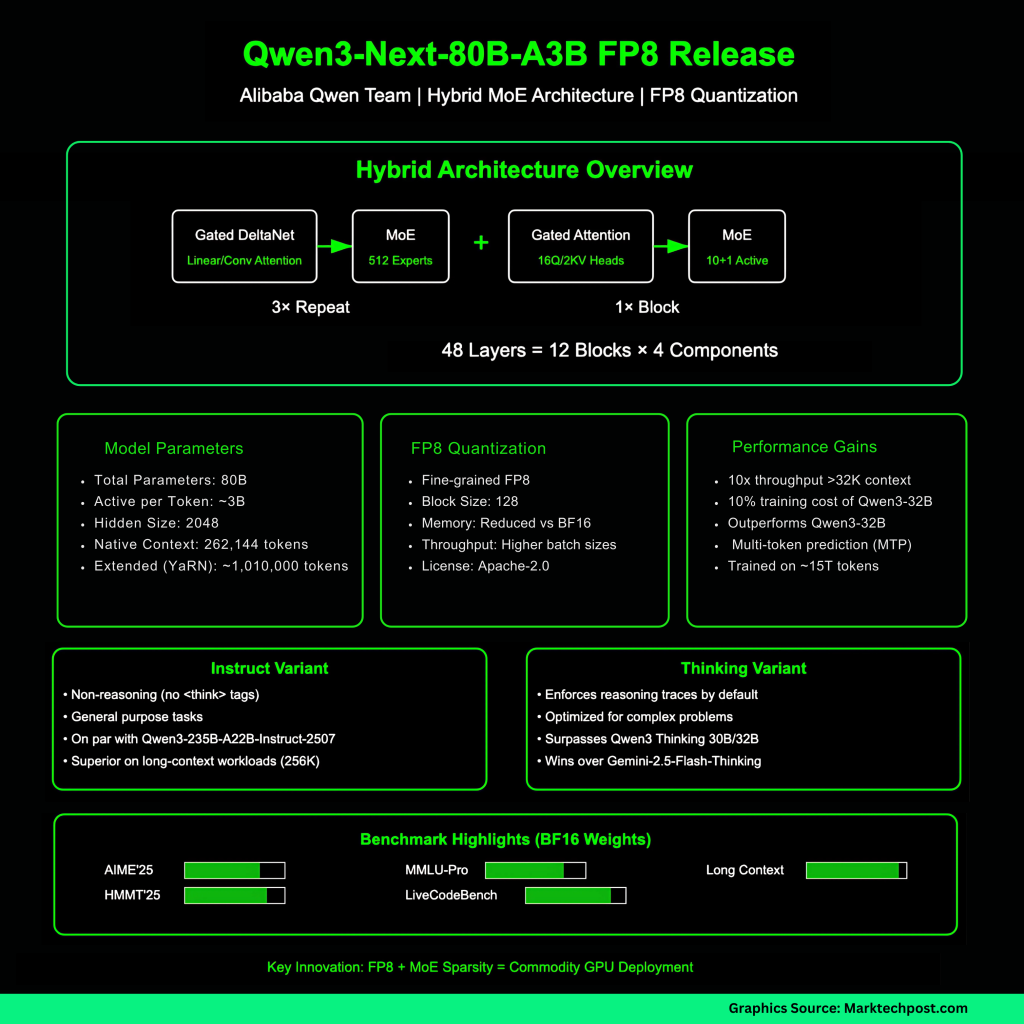

Qwen3-Next-80B-A3B is a hybrid architecture that combines Gated DeltaNet (a linear/conv-style attention surrogate) with Gated Attention, interleaved with an ultra-sparse Mixture-of-Experts (MoE). The 80B total parameter budget activates ~3B parameters per token via 512 experts (10 routed + 1 shared). The layout is specified as 48 layers organized into 12 blocks: 3×(Gated DeltaNet → MoE) followed by 1×(Gated Attention → MoE). Native context is 262,144 tokens, validated up to roughly 1,010,000 tokens using RoPE scaling (YaRN). Hidden size is 2048; attention uses 16 Q heads and 2 KV heads at head dim 256; DeltaNet uses 32 V heads and 16 QK linear heads at head dim 128.
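The layer layout and head counts above lend themselves to a quick back-of-envelope check. The sketch below rebuilds the 48-layer schedule from the 12-block pattern and estimates the KV-cache size of the full-attention layers at native context. The layer names and helper functions are illustrative, a BF16 (2-byte) KV cache is an assumption, and the Gated DeltaNet layers are not counted because they keep a recurrent state rather than a per-token cache.

```python
# Layer schedule and KV-cache arithmetic for the configuration described above.
# Figures come from the article; the 2-byte (BF16) KV-cache dtype is an assumption.

NUM_BLOCKS = 12
# Each entry is the token mixer of one layer; every layer is followed by MoE.
BLOCK_PATTERN = ["gated_deltanet"] * 3 + ["gated_attention"]


def build_schedule(num_blocks=NUM_BLOCKS, pattern=BLOCK_PATTERN):
    """Return the token-mixer type of every layer, in order."""
    return [mixer for _ in range(num_blocks) for mixer in pattern]


def kv_cache_bytes(num_tokens, attn_layers, kv_heads=2, head_dim=256,
                   bytes_per_value=2):
    """KV-cache size of the full-attention layers only (K and V tensors)."""
    per_token = attn_layers * 2 * kv_heads * head_dim * bytes_per_value
    return num_tokens * per_token


schedule = build_schedule()
attn_layers = schedule.count("gated_attention")
total = kv_cache_bytes(262_144, attn_layers)

print("layers:", len(schedule), "full-attention layers:", attn_layers)
print("KV cache at 262,144 tokens:", total / 2**30, "GiB per sequence")
```

Under these assumptions only one layer in four contributes to the per-token cache, which is a large part of why long contexts stay affordable in this design.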

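The Qwen team reports that the 80B-A3B base model outperforms Qwen3-32B on downstream tasks at roughly 10% of its training cost and delivers roughly 10× the inference throughput beyond 32K context.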

FP8 releases: what actually changed

The FP8 model cards state that the quantization is "fine-grained FP8" with block size 128. The Thinking FP8 card also recommends a reasoning parser flag (e.g., --reasoning-parser deepseek-r1 in SGLang, deepseek_r1 in vLLM). These releases retain Apache-2.0 licensing.
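To make "fine-grained FP8 with block size 128" concrete, here is a minimal pure-Python sketch of block-wise quantization: each block of 128 values gets its own scale, and the scaled values are rounded to the FP8 E4M3 grid. This is an illustration of the general technique, not Qwen's actual quantizer. The 1-D blocking, the E4M3 format choice, and the helper names (`round_to_e4m3`, `quantize_blockwise`, `dequantize_blockwise`) are all assumptions; real checkpoints may tile weights differently.

```python
import math

E4M3_MAX = 448.0      # largest finite value in FP8 E4M3
E4M3_MIN_EXP = -6     # exponent of the smallest normal value
MANTISSA_BITS = 3


def round_to_e4m3(x):
    """Round a float to the nearest E4M3-representable value (saturating)."""
    if x == 0.0:
        return 0.0
    mag = min(abs(x), E4M3_MAX)
    # Exponent in 1.xxx * 2**exp form, clamped so tiny values become subnormal.
    exp = max(math.frexp(mag)[1] - 1, E4M3_MIN_EXP)
    step = 2.0 ** (exp - MANTISSA_BITS)   # spacing of representable values
    return math.copysign(min(round(mag / step) * step, E4M3_MAX), x)


def quantize_blockwise(values, block_size=128):
    """Return (codes, scales): E4M3-rounded values plus one scale per block."""
    codes, scales = [], []
    for start in range(0, len(values), block_size):
        block = values[start:start + block_size]
        amax = max(abs(v) for v in block)
        scale = amax / E4M3_MAX if amax > 0 else 1.0
        scales.append(scale)
        codes.extend(round_to_e4m3(v / scale) for v in block)
    return codes, scales


def dequantize_blockwise(codes, scales, block_size=128):
    """Multiply each code by the scale of the block it belongs to."""
    return [c * scales[i // block_size] for i, c in enumerate(codes)]


# Synthetic data: one low-magnitude block, one block containing an outlier.
small = [1e-4 * math.sin(i + 1) for i in range(128)]
large = [math.sin(i + 1) for i in range(127)] + [8.0]
weights = small + large


def max_abs_error(block_size):
    """Worst reconstruction error on the low-magnitude block only."""
    codes, scales = quantize_blockwise(weights, block_size)
    restored = dequantize_blockwise(codes, scales, block_size)
    return max(abs(w - r) for w, r in zip(small, restored))


print("per-block scales (128):", max_abs_error(128))
print("one shared scale (256):", max_abs_error(256))
```

The comparison at the end shows the motivation for small blocks: with a single shared scale, the outlier in the second block pushes the low-magnitude values into the coarse subnormal range, while per-block scales keep each block inside the format's useful range.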

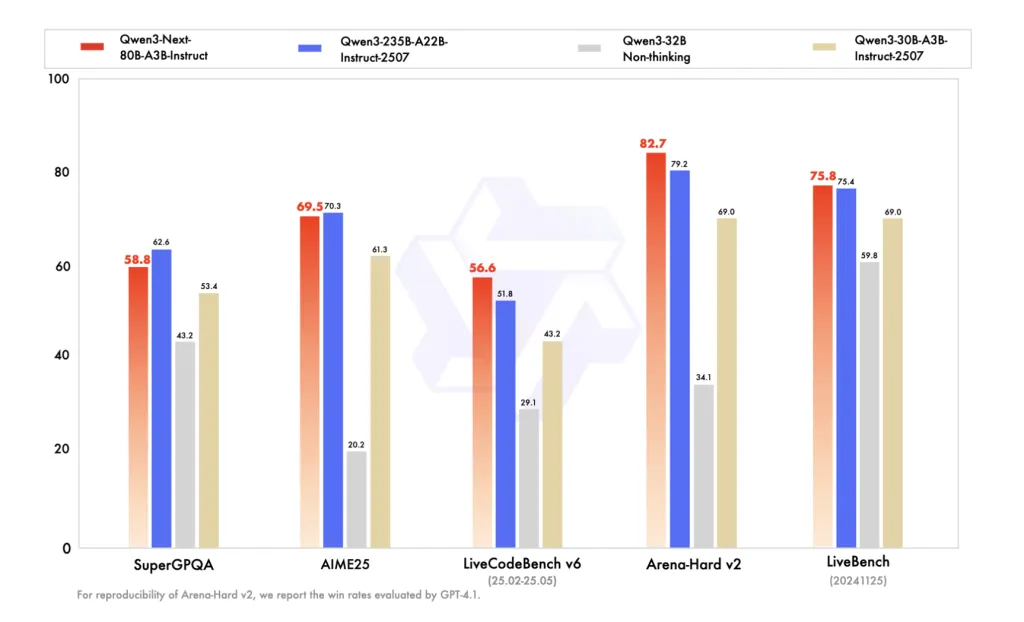

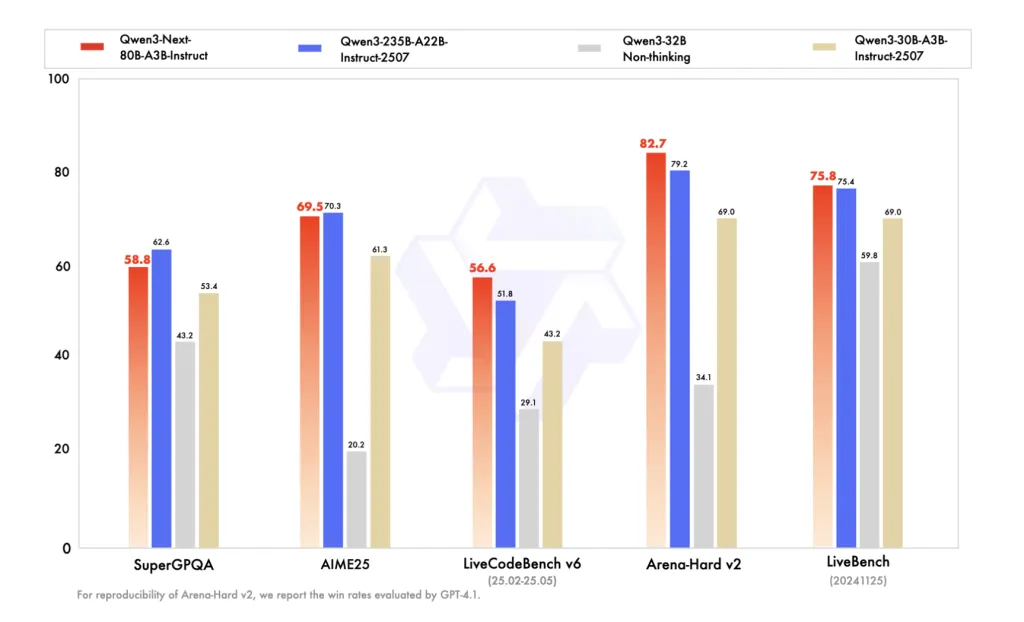

Benchmarks (reported on BF16 weights)

The Instruct FP8 card reproduces Qwen's BF16 comparison table, which sets Qwen3-Next-80B-A3B-Instruct against Qwen3-235B-A22B-Instruct-2507; the Thinking FP8 card carries its own benchmark suite.

Training and post-training signals

The series is trained on ~15T tokens before post-training. Qwen highlights stability additions (zero-centered, weight-decayed layer norm, etc.) and uses GSPO in RL post-training for the Thinking model. MTP (multi-token prediction) is used to speed up inference and improve the pretraining signal.

Why FP8 matters

On modern accelerators, FP8 activations and weights reduce memory bandwidth pressure and resident footprint relative to BF16, allowing larger batch sizes or longer sequences at similar latency. Because A3B routes only ~3B parameters per token, the combination of FP8 and MoE sparsity compounds throughput gains in long-context regimes, especially when paired with speculative decoding via MTP. That said, quantization interacts with routing and attention variants; real-world acceptance rates for speculative decoding and end-task accuracy can vary with the engine and kernel implementation, hence Qwen's guidance to use current SGLang/vLLM builds and to tune speculative settings.
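The two effects described above can be put into rough numbers. The sketch below compares weight footprint at 2 bytes (BF16) versus 1 byte (FP8) per parameter, and estimates how many tokens a speculative-decoding step yields for a given acceptance rate. It ignores per-block scales, any tensors kept in higher precision, activations, and KV cache, and it treats the 80B and ~3B figures as exact. The acceptance model (each drafted token accepted independently with the same probability) is a simplifying assumption, and the function names and sample values are illustrative only.

```python
TOTAL_PARAMS = 80e9    # total parameter budget from the article
ACTIVE_PARAMS = 3e9    # approximate parameters routed per token


def weight_bytes(num_params, bytes_per_param):
    """Raw weight storage, ignoring scales and non-quantized tensors."""
    return num_params * bytes_per_param


def expected_tokens_per_step(accept_prob, draft_len):
    """Expected tokens emitted per verification step.

    Assumes each of the draft_len drafted tokens is accepted independently
    with probability accept_prob; the verifier always contributes one token.
    """
    if accept_prob >= 1.0:
        return float(draft_len + 1)
    return (1 - accept_prob ** (draft_len + 1)) / (1 - accept_prob)


for name, width in (("BF16", 2), ("FP8", 1)):
    resident_gb = weight_bytes(TOTAL_PARAMS, width) / 1e9
    active_gb = weight_bytes(ACTIVE_PARAMS, width) / 1e9
    print(f"{name}: {resident_gb:.0f} GB resident weights, "
          f"~{active_gb:.0f} GB of active weights per token")

# Hypothetical acceptance rates, chosen only to show the sensitivity.
for accept_prob in (0.5, 0.8, 0.9):
    tokens = expected_tokens_per_step(accept_prob, draft_len=2)
    print(f"acceptance {accept_prob:.1f}, 2 drafted tokens: "
          f"{tokens:.2f} tokens per step")
```

Because the speed-up from speculative decoding depends so strongly on the acceptance rate, measuring that rate on the FP8 checkpoint with your own prompts is more informative than reusing a figure obtained on BF16.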

Summary

Qwen's FP8 releases make the 80B/3B-active A3B stack practical to serve at 256K context on mainstream engines while preserving the hybrid-MoE design and the MTP path for high throughput. The model cards keep their benchmarks from BF16, so teams should validate FP8 accuracy and latency on their own stacks, especially with reasoning parsers and speculative settings. The net effect: lower memory bandwidth and improved concurrency without architectural regressions, positioned for long-context production workloads.


Asif Razzaq is the CEO of Marktechpost Media Inc. As a visionary entrepreneur and engineer, Asif is committed to harnessing the potential of artificial intelligence for social good. His most recent endeavor is the launch of an artificial intelligence media platform, Marktechpost, which stands out for its in-depth coverage of machine learning and deep learning news that is both technically sound and easily understandable. The platform draws more than two million monthly views, illustrating its popularity among its audience.
