Alibaba Qwen Unveils Qwen3-4B-Instruct-2507 and Qwen3-4B-Thinking-2507: Refreshing the Importance of Small Language Models

Smaller, Efficient Models with 256K Context Support

Alibaba's Qwen team has introduced two powerful additions to its small language model lineup: Qwen3-4B-Instruct-2507 and Qwen3-4B-Thinking-2507. Despite having only 4 billion parameters, these models deliver strong capabilities across general-purpose and expert-level tasks. Both are designed with native 256K-token context windows, meaning they can process very long inputs such as large codebases, multi-document archives, and extended dialogues without external modifications.

Architecture and Core Design

Both models have 4 billion total parameters (3.6B excluding embeddings) built across 36 transformer layers. They use Grouped Query Attention (GQA) with 32 query heads and 8 key/value heads, which improves efficiency and memory management for very large contexts. They are dense transformer architectures, not mixture-of-experts, which ensures consistent task performance. Long-context support up to 262,144 tokens is baked directly into the model architecture, and each model is pretrained extensively before undergoing alignment and safety post-training to ensure responsible, high-quality outputs.
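To make the memory benefit of GQA concrete, here is a rough back-of-the-envelope sketch of key/value cache size at the full 262,144-token context. The layer count and head counts come from the figures above; the per-head dimension (128) and 16-bit cache entries are assumptions for illustration only and are not stated in this article. The 4x ratio between the two results depends only on the 32-to-8 head ratio, not on those assumptions.

```python
def kv_cache_bytes(tokens, layers, kv_heads, head_dim, bytes_per_value):
    """Bytes needed to cache keys and values for one sequence."""
    # Factor of 2: one tensor for keys and one for values in every layer.
    return 2 * layers * kv_heads * head_dim * bytes_per_value * tokens


CONTEXT = 262_144   # maximum context length stated above
LAYERS = 36         # transformer layers stated above
QUERY_HEADS = 32    # query heads stated above
KV_HEADS = 8        # key/value heads stated above
HEAD_DIM = 128      # ASSUMPTION: not given in the article
BYTES_FP16 = 2      # ASSUMPTION: 16-bit cache entries

# Cache size with grouped-query attention (8 KV heads).
gqa = kv_cache_bytes(CONTEXT, LAYERS, KV_HEADS, HEAD_DIM, BYTES_FP16)
# Hypothetical cache size if every query head had its own KV head.
mha = kv_cache_bytes(CONTEXT, LAYERS, QUERY_HEADS, HEAD_DIM, BYTES_FP16)

print(f"GQA cache at full context: {gqa / 2**30:.0f} GiB")
print(f"Full multi-head cache:     {mha / 2**30:.0f} GiB")
print(f"Reduction factor:          {mha // gqa}x")
```

Under these assumptions a single full-length sequence still needs a large cache, which is why shrinking the number of key/value heads matters most at long context lengths.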

Qwen3-4B-Instruct-2507 – A Multilingual, Instruction-Following Generalist

The Qwen3-4B-Instruct-2507 model is optimized for speed, clarity, and user-aligned instruction following. It is designed to deliver direct answers without explicit step-by-step reasoning.

Multilingual coverage spans more than 100 languages, making it well suited to global deployments in chatbots, customer support, education, and cross-language search. Its native 256K context support enables it to handle tasks such as analyzing large legal documents, processing multi-hour transcripts, or summarizing large volumes of material without splitting the content.
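As a small illustration of what "without splitting the content" means in practice, the sketch below checks whether a set of documents fits in the 262,144-token window in a single pass. The function name, the reserved output budget, and the example token counts are hypothetical; real token counts would come from the model's own tokenizer.

```python
CONTEXT_WINDOW = 262_144  # tokens, the limit stated in this article


def fits_in_context(doc_token_counts, reserved_for_output=4_096,
                    window=CONTEXT_WINDOW):
    """Return (fits, tokens_left) for a batch of documents.

    doc_token_counts: token count of each document, measured by the caller.
    reserved_for_output: ASSUMED budget kept free for the model's reply.
    """
    used = sum(doc_token_counts) + reserved_for_output
    return used <= window, window - used


# Hypothetical example: three long documents sent in one prompt.
fits, left = fits_in_context([120_000, 95_000, 30_000])
print(fits, left)
```

A negative `tokens_left` indicates how many tokens would have to be trimmed or moved to a second request.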

Performance benchmarks:

| Benchmark | Score |
|---|---|
| General Knowledge (MMLU-Pro) | 69.6 |
| Reasoning (AIME25) | 47.4 |
| QA (SuperGPQA) | 42.8 |
| Coding (LiveCodeBench) | 35.1 |
| Creative Writing | 83.5 |
| Multilingual Comprehension (MultiIF) | 69.0 |

In practice, this means Qwen3-4B-Instruct-2507 can handle everything from language tutoring in multiple languages to generating rich narrative content, while still offering competent performance in reasoning, coding, and domain-specific knowledge.

Qwen3-4B-Thinking-2507 – Expert-Level Chain-of-Thought Reasoning

Where the Instruct model focuses on concise responses, the Qwen3-4B-Thinking-2507 model is engineered for deep reasoning and problem solving. It automatically generates explicit chains of thought in its outputs, making its decision process visible, which is especially beneficial in complex domains such as mathematics, science, and programming.

This model excels at technical diagnostics, scientific data interpretation, and multi-step logical analysis. It is suited to advanced AI agents, research assistants, and coding companions that need to reason through problems before answering.
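Because the reasoning trace and the final answer arrive in the same output, applications usually want to separate them. The following is a minimal sketch that assumes the reasoning ends with a closing `</think>` marker; the article does not specify the output format, so treat the marker and the helper name `split_reasoning` as assumptions to verify against the model's documentation.

```python
def split_reasoning(text, end_tag="</think>"):
    """Split raw model output into (reasoning, answer).

    ASSUMPTION: the chain of thought ends with `end_tag`, and everything
    after the last occurrence of that tag is the user-facing answer.
    """
    reasoning, sep, answer = text.rpartition(end_tag)
    if not sep:
        # No closing tag found: treat the whole output as the answer.
        return "", text.strip()
    # Drop an opening tag if the runtime happened to include one.
    reasoning = reasoning.replace("<think>", "", 1).strip()
    return reasoning, answer.strip()


raw = "2 + 2 is 4.</think>\n\nThe answer is 4."
reasoning, answer = split_reasoning(raw)
print("reasoning:", reasoning)
print("answer:", answer)
```

Keeping the reasoning separate lets an application log or display it for inspection while showing only the answer to end users.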

Topic benchmarks:

| Benchmark work | Result |

|---|---|

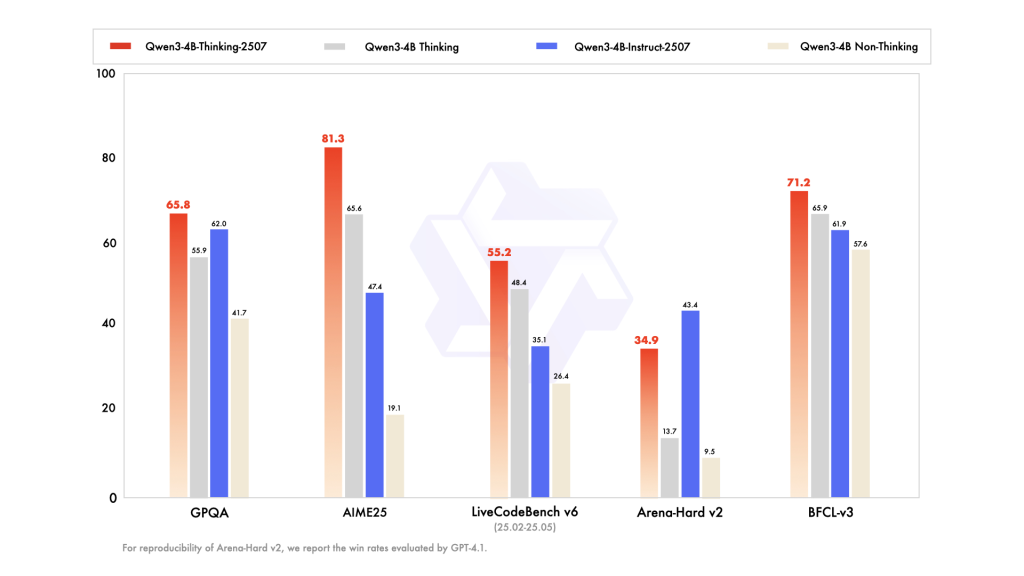

| Math (AIs25) | 81.3% |

| Science (Hmmt25) | 55.5% |

| General Qa (GPQA) | 65.8% |

| Codes (LiveCodebelch) | 55.2% |

| The use of tools (BFCL) | 71.2% |

| Alignment | 87.4% |

These scores indicate that Qwen3-4B-Thinking-2507 can match or exceed much larger models on reasoning-heavy benchmarks, allowing more accurate and explainable results for mission-critical use cases.

Across Both Models

Both the Instruct and Thinking variants share the same key advances. The native 256K context window allows work on extremely long inputs without external memory hacks. They also show improved alignment, producing more natural, coherent, and context-aware responses in creative and multi-turn conversations. Moreover, both are agent-ready, supporting API calling, multi-step reasoning, and workflow orchestration out of the box.

From a deployment perspective, they are highly efficient: they can run on mainstream consumer GPUs, with quantization to lower memory use, and they are fully compatible with modern inference frameworks. This means developers can run them locally or scale them in the cloud without a significant resource investment.
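The effect of quantization on memory can be estimated with simple arithmetic: weight storage is roughly the parameter count times the bits per weight. The sketch below uses the 4 billion parameter figure from this article; the specific bit widths are illustrative assumptions, and the estimate covers weights only (it ignores the KV cache, activations, and any per-format overhead).

```python
def weight_memory_gb(num_params, bits_per_weight):
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


NUM_PARAMS = 4_000_000_000  # total parameters stated in this article

# ASSUMPTION: common precisions chosen for illustration.
for bits in (16, 8, 4):
    print(f"{bits:>2}-bit weights: ~{weight_memory_gb(NUM_PARAMS, bits):.1f} GB")
```

By this estimate, moving from 16-bit to 4-bit weights cuts weight storage from about 8 GB to about 2 GB, which is what brings a model of this size within reach of consumer GPUs.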

Practical Deployment and Applications

Deployment is straightforward, with broad framework compatibility enabling integration into any modern ML pipeline. The models can be used on edge devices and in enterprise virtual assistants, research institutions, coding environments, and creative studios. Example scenarios include:

- Instruction-following mode: customer support bots, multilingual educational assistants, real-time content generation.

- Thinking mode: scientific research analysis, legal reasoning, advanced coding tools, and agentic automation.

Conclusion

Qwen3-4B-Instruct-2507 and Qwen3-4B-Thinking-2507 show that small language models can rival and even outperform larger models in specific domains when they are thoughtfully engineered. Their combination of long-context handling, strong multilingual capabilities, deep reasoning (in Thinking mode), and alignment improvements makes them powerful tools for both everyday and specialist AI applications. With these releases, Alibaba has set a new benchmark in making 256K-ready, high-performance AI models available to developers worldwide.


Michal Sutter is a data science professional with a Master of Science in Data Science from the University of Padova. With a solid foundation in mathematics, machine learning, and data engineering, Michal excels at transforming complex information into actionable insights.