Apple Machine Learning Research at ICLR 2026

Apple is advancing AI and ML with fundamental research, much of which is shared through publications and engagement at conferences in order to accelerate progress in this important field and support the broader community. This week, the Fourteenth International Conference on Learning Representations (ICLR) will be held in Rio de Janeiro, Brazil, and Apple is proud to again participate in this important event for the research community and to support it with sponsorship.

At the main conference and associated workshops, Apple researchers will present new research across a variety of topics, including work unlocking large-scale training for Recurrent Neural Networks, a technique for improving State Space Models, a new approach to unifying image understanding and generation, a method for generating 3D scenes from a single photo, and a new approach to protein folding.

During exhibition hours, attendees will be able to experience demonstrations of Apple's ML research at our booth #204, including local LLM inference on Apple silicon with MLX and Sharp Monocular View Synthesis in Less Than a Second. Apple is also sponsoring and participating in a number of events hosted by affinity groups that support underrepresented groups in the ML community.

A comprehensive overview of Apple's participation in and contributions to ICLR 2026 can be found here, and a selection of highlights follows below.

Recurrent Neural Networks (RNNs) are naturally suited to efficient inference, requiring far less memory and compute than attention-based architectures, but the sequential nature of their computation has historically made it impractical to scale RNNs up to billions of parameters. A new advance from Apple researchers makes RNN training dramatically more efficient, enabling large-scale training for the first time and widening the set of architecture choices available to practitioners designing LLMs, particularly for resource-constrained deployment.

In ParaRNN: Unlocking Parallel Training of Nonlinear RNNs for Large Language Models, a new paper accepted to ICLR 2026 as an Oral, Apple researchers share a new framework for parallelized RNN training that achieves a 665× speedup over the traditional sequential approach (see Figure 1). This efficiency gain enables the training of the first 7-billion-parameter classical RNNs that achieve language modeling performance competitive with transformers (see Figure 2).
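The post does not describe how ParaRNN parallelizes training, so the following is only a generic illustration of why a recurrence is a sequential bottleneck and how a simpler, linear recurrence can be evaluated in logarithmically many rounds with an associative scan. It is not ParaRNN's algorithm, which targets nonlinear RNNs. The function names and the scalar recurrence `h_t = a_t * h_{t-1} + b_t` are assumptions made for this sketch.

```python
def sequential_scan(a, b, h0):
    """Reference loop: step t cannot start until step t-1 has finished."""
    h, out = h0, []
    for a_t, b_t in zip(a, b):
        h = a_t * h + b_t
        out.append(h)
    return out


def combine(first, second):
    """Compose two affine maps h -> a*h + b, applying `first` and then `second`."""
    a1, b1 = first
    a2, b2 = second
    return (a1 * a2, a2 * b1 + b2)


def scan_in_rounds(a, b, h0):
    """Hillis-Steele prefix scan over affine maps.

    Every update inside one round is independent of the others, so each round
    could run in parallel. This pure-Python version runs them one by one and
    only demonstrates the data dependencies.
    """
    elems = list(zip(a, b))
    step = 1
    while step < len(elems):
        elems = [
            elems[i] if i < step else combine(elems[i - step], elems[i])
            for i in range(len(elems))
        ]
        step *= 2
    # elems[t] now maps the initial state h0 directly to h_t.
    return [a_t * h0 + b_t for a_t, b_t in elems]
```

Both functions return the same states. The loop needs one dependent step per token, while the scan needs about log2(T) rounds for a sequence of length T, which is the kind of structure that parallel hardware can exploit.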

To accelerate research in efficient sequence modeling and to enable researchers and practitioners to explore new nonlinear RNN models at scale, the ParaRNN codebase has been released as an open-source framework for automatic training parallelization of nonlinear RNNs.

At ICLR, the paper's first author will also deliver an Expo Talk about this research.

Figure 1: Speedup from Parallel RNN Training

Figure 2: Performance of Large-Scale Classic RNNs

State Space Models (SSMs) such as Mamba have become the leading alternative to Transformers for sequence modeling tasks. Their primary advantage is efficiency in long-context and long-form generation, enabled by fixed-size memory and linear scaling of computational complexity. To Infinity and Beyond: Tool-Use Unlocks Length Generalization in State Space Models, a new Apple paper accepted as an Oral at ICLR, explores the capabilities and limitations of SSMs on long-form generation tasks. The paper shows that the efficiency of SSMs comes at the cost of an inherent performance degradation. In fact, SSMs fail to solve long-form generation tasks once the complexity of the task exceeds the capacity of the model, even if the model is allowed to generate a chain of thought (CoT) of any length. This limitation arises from the model's bounded memory, which limits its expressive power when generating long sequences.
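To make "fixed-size memory and linear scaling" concrete, here is a minimal sketch that contrasts a toy recurrent state with a toy attention cache. The diagonal recurrence, the decay values, and both function names are assumptions for illustration only. They are not Mamba's parameterization or anything taken from the paper.

```python
def ssm_decode(inputs, decays=(0.5, 0.9, 0.99)):
    """Toy diagonal linear recurrence with one scalar state per decay rate."""
    state = [0.0] * len(decays)      # fixed size, whatever the sequence length
    outputs = []
    for x in inputs:                 # one constant-cost update per token
        state = [d * s + x for d, s in zip(decays, state)]
        outputs.append(sum(state))
    return outputs, len(state)


def attention_cache_length(inputs):
    """Toy key-value cache: attention keeps one entry per past token."""
    cache = []
    for x in inputs:
        cache.append(x)              # grows by one entry per token
    return len(cache)
```

The recurrent state stays at three numbers whether the input has ten tokens or ten thousand, while the cache grows with every token. The same bound is also the source of the limitation described above: everything the model remembers has to fit in that fixed state.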

The paper shows that this limitation can be mitigated by giving SSMs interactive access to external tools. Given the right choice of tool access and problem-dependent training data, SSMs can learn to solve any tractable problem and generalize to arbitrary problem length and complexity (see Figure 3). The work demonstrates that tool-augmented SSMs achieve remarkable length generalization on a variety of arithmetic, reasoning, and coding tasks. These findings highlight SSMs as a potentially efficient alternative to Transformers in interactive tool-based and agentic settings.
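As a rough analogy for why tool access helps a bounded-memory model, the sketch below adds two numbers of any length while keeping only a single carry digit as internal state. The operands, the read position, and the answer all live in an external tool. The `DigitTape` class and its methods are hypothetical and are not the tools or tasks used in the paper.

```python
class DigitTape:
    """Hypothetical external tool that stores operands, position, and output."""

    def __init__(self, x, y):
        self._x, self._y = x[::-1], y[::-1]   # least significant digit first
        self._pos = 0
        self._out = []

    def read_next(self):
        """Return the next pair of digits, or None when both operands are used up."""
        if self._pos >= max(len(self._x), len(self._y)):
            return None
        dx = int(self._x[self._pos]) if self._pos < len(self._x) else 0
        dy = int(self._y[self._pos]) if self._pos < len(self._y) else 0
        self._pos += 1
        return dx, dy

    def write(self, digit):
        self._out.append(str(digit))

    def result(self):
        return "".join(reversed(self._out))


def add_with_tool(x, y):
    """Add two decimal strings; the 'model' remembers nothing except the carry."""
    tape = DigitTape(x, y)
    carry = 0                                  # the only internal state
    while (digits := tape.read_next()) is not None:
        carry, digit = divmod(digits[0] + digits[1] + carry, 10)
        tape.write(digit)
    if carry:
        tape.write(carry)
    return tape.result()
```

For example, `add_with_tool("999", "1")` returns `"1000"`, and the same loop handles operands with thousands of digits because the amount of state held between steps never changes.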

Unified multimodal LLMs that can both understand and generate images are appealing not only for their architectural simplicity and efficiency, but also because shared representations can lead to deeper understanding and better vision-language alignment, and can enable unique capabilities such as instruction-based image editing.

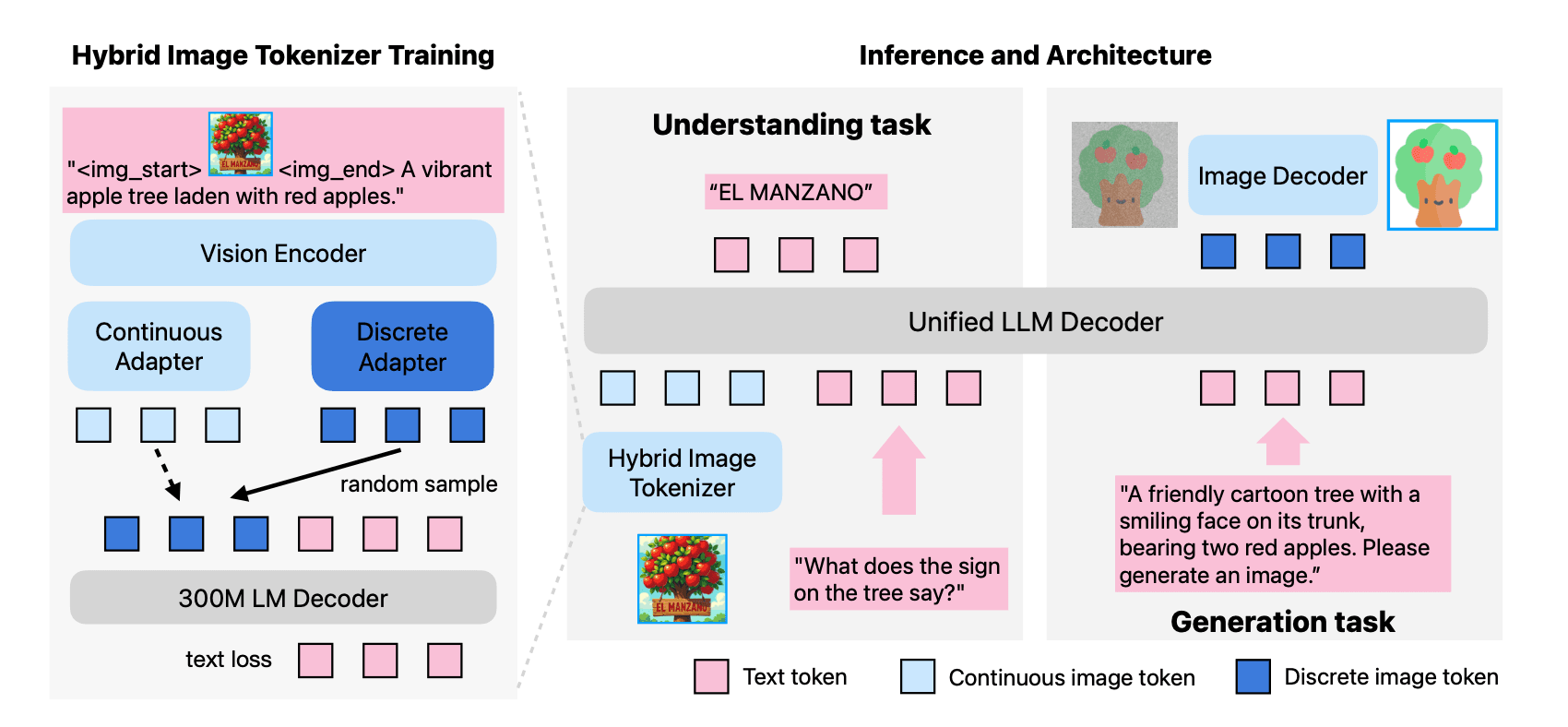

However, existing open-source models often suffer from a trade-off between image understanding and image generation capabilities. At ICLR, Apple researchers will share MANZANO: A Simple and Scalable Unified Multimodal Model with a Hybrid Vision Tokenizer. As described in the paper, Manzano is a unified framework designed to reduce this performance trade-off with a simple architectural idea (see Figure 4) and a training recipe that scales well across model sizes.

Manzano uses a single shared vision encoder to feed two lightweight adapters that produce continuous embeddings for image-to-text understanding and discrete tokens for text-to-image generation, both within a shared semantic space. A unified autoregressive LLM predicts high-level semantics in the form of text and image tokens, and an auxiliary diffusion decoder then translates the image tokens into pixels. This architecture, together with a unified training recipe over understanding and generation data, enables scalable joint learning of both capabilities. Manzano achieves state-of-the-art results among unified models and is competitive with specialist models, particularly on text-rich evaluations.
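The data flow described above, one shared encoder feeding a continuous branch and a discrete branch, can be sketched with toy stand-ins. Everything here is an assumption for illustration: the two-value "patches", the scaling adapter, and the nearest-neighbor codebook lookup are placeholders and not Manzano's actual encoder, adapters, or tokenizer.

```python
# Hypothetical 4-entry codebook living in the same feature space as the encoder output.
CODEBOOK = [[0.0, 0.0], [0.0, 1.0], [1.0, 0.0], [1.0, 1.0]]


def shared_encoder(image):
    """Toy stand-in: split a flat list of pixel values into 2-value patch features."""
    return [image[i:i + 2] for i in range(0, len(image), 2)]


def continuous_adapter(features, scale=0.5):
    """Understanding branch: continuous embeddings (here just a rescaling)."""
    return [[scale * v for v in f] for f in features]


def discrete_adapter(features):
    """Generation branch: map each feature to the index of its nearest code."""
    def nearest(f):
        return min(
            range(len(CODEBOOK)),
            key=lambda k: sum((a - b) ** 2 for a, b in zip(f, CODEBOOK[k])),
        )
    return [nearest(f) for f in features]


def hybrid_tokenize(image):
    """Run the shared encoder once and return both views of the same features."""
    features = shared_encoder(image)
    return continuous_adapter(features), discrete_adapter(features)
```

The point of the sketch is only that both outputs derive from the same encoder features, which is what lets a single LLM consume embeddings for understanding and predict token ids for generation in one semantic space.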

At ICLR, Apple researchers will also share Sharp Monocular View Synthesis in Less Than a Second, which presents a method for generating a 3D Gaussian representation from a single photograph, using one forward pass through a neural network in less than a second on a standard GPU. The resulting representation can then be rendered in real time from nearby viewpoints as a high-resolution, photorealistic 3D scene (see Figure 5).

Called SHARP (Single-image High-Accuracy Real-time Parallax), the approach delivers a representation that is metric, with absolute scale, and supports metric camera movements. Experimental results demonstrate that SHARP delivers robust zero-shot generalization across datasets. It also sets a new state of the art on multiple datasets, reducing LPIPS by 25-34% and DISTS by 21-43% compared with the best prior model, while lowering synthesis time by three orders of magnitude.
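To illustrate what a metric 3D Gaussian representation and a metric camera movement mean in practice, the sketch below stores a Gaussian with the parameters commonly used in 3D Gaussian splatting and projects its center through a simple pinhole camera that has been shifted by a distance in meters. The class layout, the function name, and the 1000-pixel focal length are assumptions. This is not SHARP's released code or its renderer.

```python
from dataclasses import dataclass


@dataclass
class Gaussian3D:
    mean: tuple       # (x, y, z) center in meters, in camera coordinates
    scale: tuple      # per-axis extent in meters
    rotation: tuple   # unit quaternion (w, x, y, z)
    opacity: float    # in [0, 1]
    color: tuple      # RGB in [0, 1]


def project_center(gaussian, camera_offset=(0.0, 0.0, 0.0), focal_px=1000.0):
    """Pinhole projection of the Gaussian center after translating the camera.

    Returns image coordinates in pixels, relative to the principal point.
    """
    x = gaussian.mean[0] - camera_offset[0]
    y = gaussian.mean[1] - camera_offset[1]
    z = gaussian.mean[2] - camera_offset[2]
    if z <= 0:
        raise ValueError("point is behind the camera")
    return (focal_px * x / z, focal_px * y / z)
```

With these assumed values, moving the camera 0.1 m to the right shifts a point 2 m away by about 50 pixels and a point 10 m away by about 10 pixels. That depth-dependent shift is the parallax effect, and it only comes out in correct proportion when the scene has absolute scale.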

To enable the community to further explore and build on this approach, code is available here.

ICLR attendees will be able to experience this work firsthand in a demo at Apple booth #204 during exhibition hours.

Protein folding is a foundational yet notoriously challenging problem in computational biology. At its core, the problem involves predicting the precise three-dimensional coordinates of each atom within a protein structure, based solely on its amino acid sequence (that is, a string of characters with 20 possible values for each character). Predicting the 3D structure of proteins is critically important because a protein's function is intrinsically linked to its spatial configuration. Breakthroughs in this area enable researchers to design and understand proteins more rapidly, with the potential to transform drug discovery, biotechnology, and beyond.
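The input side of this problem is simple to write down. The minimal sketch below turns an amino acid string into integer tokens using the 20 standard one-letter codes. The alphabetical ordering of the alphabet and the function name are assumptions for illustration and are not taken from any particular folding model.

```python
# The 20 standard amino acids, written as one-letter codes.
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"
TOKEN_ID = {aa: i for i, aa in enumerate(AMINO_ACIDS)}


def encode_sequence(sequence):
    """Map an amino acid string to a list of integer tokens in [0, 19]."""
    tokens = []
    for aa in sequence.upper():
        if aa not in TOKEN_ID:
            raise ValueError(f"unknown residue: {aa!r}")
        tokens.append(TOKEN_ID[aa])
    return tokens
```

A folding model consumes a token list like this and has to output an (x, y, z) position for every atom, so the prediction target is far larger and more structured than the input.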

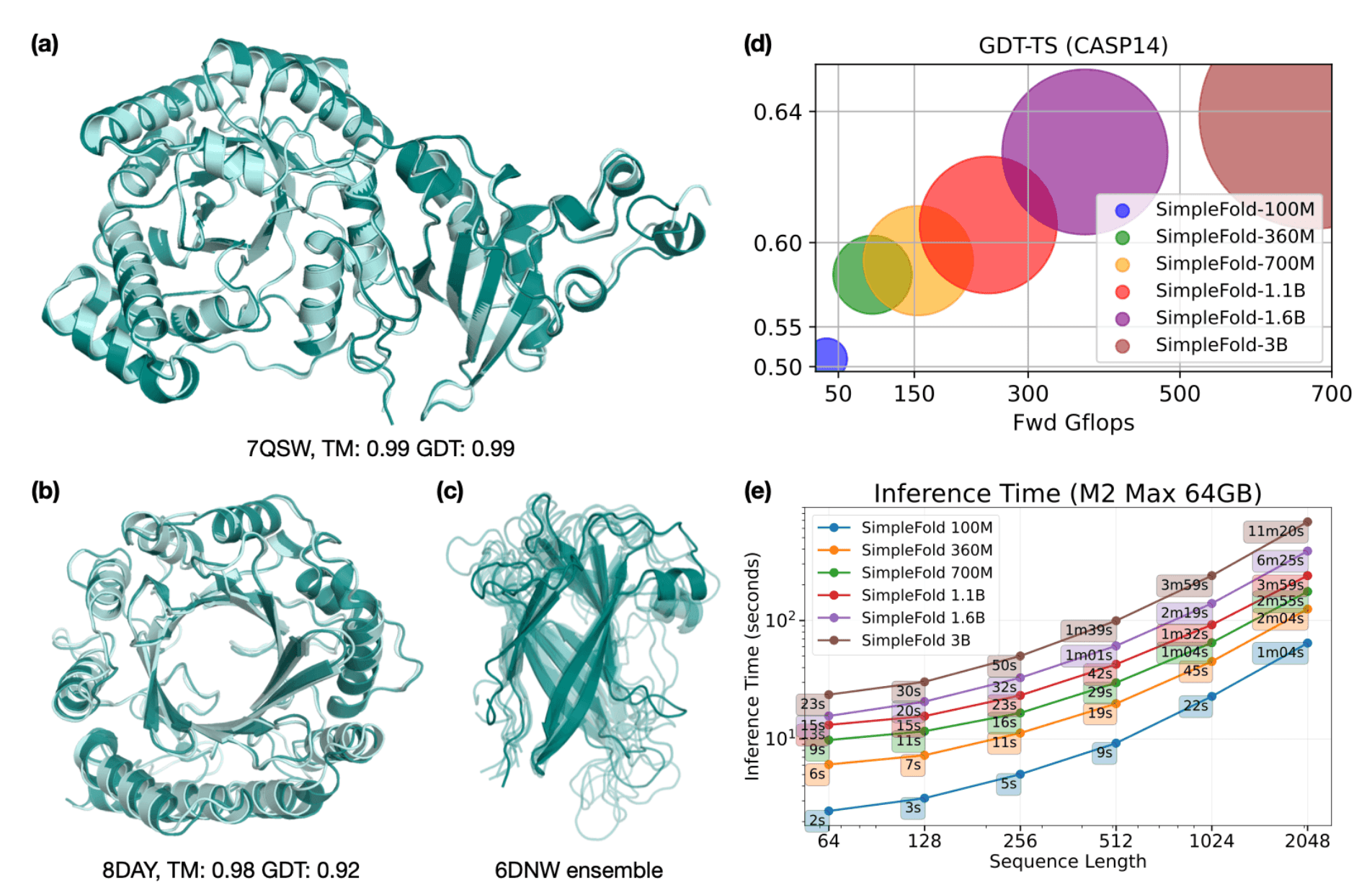

At ICLR, Apple researchers will share SimpleFold: Folding Proteins is Simpler than You Think, which details a new approach that uses a general-purpose architecture based solely on standard transformer blocks (similar to text-to-image or text-to-3D models). This approach allows SimpleFold to dispense with the complex architectural designs of prior methods while maintaining performance (see Figure 6). To enable the research community to build on this method, the paper is accompanied by code and model checkpoints that can be run efficiently and locally on a Mac with Apple silicon using MLX.

During exhibition hours, ICLR attendees will be able to interact with live demos of Apple ML research at booth #204, including:

- SHARP: This demo shows SHARP running on a set of pre-recorded images or on images captured directly by the user during the demo. Visitors will experience the rapid process of selecting an image, processing it with SHARP, and viewing the generated 3D Gaussian point cloud on an iPad Pro with the M5 chip.

- Local LLM inference on Apple silicon with MLX: This demo will showcase on-device LLM inference on a MacBook Pro with M5 Max using MLX, Apple's purpose-built open-source framework for Apple silicon, running a quantized frontier coding model entirely locally within the native Xcode development environment. The full stack (MLX, mlx-lm, and the model weights) is open source, inviting the research community to build on and extend these methods independently.
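For readers unfamiliar with what "quantized" means for a model running locally, here is a minimal sketch of affine quantization for one group of weights: each weight is stored as a small integer code plus a shared scale and offset. The 4-bit width, the group handling, and the function names are assumptions for illustration. This is not MLX's or mlx-lm's actual quantization scheme or API.

```python
def quantize_group(weights, bits=4):
    """Map a group of floats to integer codes in [0, 2**bits - 1]."""
    levels = 2 ** bits - 1
    lo, hi = min(weights), max(weights)
    scale = (hi - lo) / levels if hi > lo else 1.0
    codes = [round((w - lo) / scale) for w in weights]
    return codes, scale, lo


def dequantize_group(codes, scale, lo):
    """Recover approximate floats from the codes and the shared scale and offset."""
    return [lo + c * scale for c in codes]
```

Storing 4-bit codes instead of 16-bit floats cuts weight memory to roughly a quarter, plus a small overhead for the per-group scale and offset, at the cost of a reconstruction error of at most half a quantization step per weight.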

We are proud to again sponsor affinity groups hosting events onsite at ICLR, including Women in Machine Learning (WiML) (social on April 24) and Queer in AI (social on April 25). In addition to supporting these groups with sponsorship, Apple employees will also be participating in these and other events.

ICLR brings together professionals dedicated to the advancement of deep learning, and Apple is proud to again share innovative new research at the event and connect with the community attending it. This post highlights just a selection of the work Apple ML researchers will present at ICLR 2026, and a comprehensive overview and schedule of our participation can be found here.