Alibaba Qwen Team Releases Qwen3.6-27B: A Dense Open-Weight Model That Outperforms a 397B MoE on Agentic Coding Benchmarks

Alibaba's Qwen Team has released Qwen3.6-27B, the first dense open-weight model in the Qwen3.6 family and arguably the most capable 27-billion-parameter model available today for coding agents. It brings major improvements in agentic coding, a new Thinking Preservation mechanism, and a hybrid architecture that combines Gated DeltaNet linear attention with traditional attention, all under the Apache 2.0 license.

The release comes a few weeks after Qwen3.6-35B-A3B, a sparse Mixture-of-Experts (MoE) model with 3B active parameters that itself followed the extensive Qwen3.5 series. Qwen3.6-27B is the second model in the family and the first fully dense variant, and on several key benchmarks it outperforms both Qwen3.6-35B-A3B and the much larger MoE model Qwen3.5-397B-A17B. The Qwen team describes the release as prioritizing “stability and real-world utility,” shaped by direct community feedback rather than benchmark optimization.

The Qwen team has released two weight variants on the Hugging Face Hub: Qwen/Qwen3.6-27B in BF16, and Qwen/Qwen3.6-27B-FP8, a quantized version using fine-grained FP8 quantization with a block size of 128, with performance metrics almost identical to the original model. Both variants are compatible with SGLang (>=0.5.10), vLLM (>=0.19.0), KTransformers, and Hugging Face Transformers.
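As a small illustration of the version floors quoted above, the sketch below compares an installed version string against those minimums. The helper names `parse_version` and `meets_minimum` are hypothetical, and the parsing is deliberately simplified (pre-release suffixes such as `rc1` are ignored); it is not taken from any of the frameworks named.

```python
import re

# Minimum versions as stated in the article.
MINIMUMS = {"sglang": "0.5.10", "vllm": "0.19.0"}


def parse_version(text):
    """Turn '0.5.10' into (0, 5, 10). Hypothetical helper, simplified parsing."""
    parts = []
    for piece in text.split(".")[:3]:
        match = re.match(r"\d+", piece)  # keep leading digits, drop 'rc1' etc.
        parts.append(int(match.group()) if match else 0)
    while len(parts) < 3:  # pad '0.20' to (0, 20, 0)
        parts.append(0)
    return tuple(parts)


def meets_minimum(installed, minimum):
    """True if the installed version is at least the stated minimum."""
    return parse_version(installed) >= parse_version(minimum)


if __name__ == "__main__":
    for package, installed in [("sglang", "0.5.9"), ("vllm", "0.19.1")]:
        ok = meets_minimum(installed, MINIMUMS[package])
        print(f"{package} {installed}: {'ok' if ok else 'too old'}")
```

Comparing integer tuples rather than raw strings matters here: as strings, "0.5.9" sorts after "0.5.10", which would wrongly accept an older SGLang build.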

What's New: Two Main Features

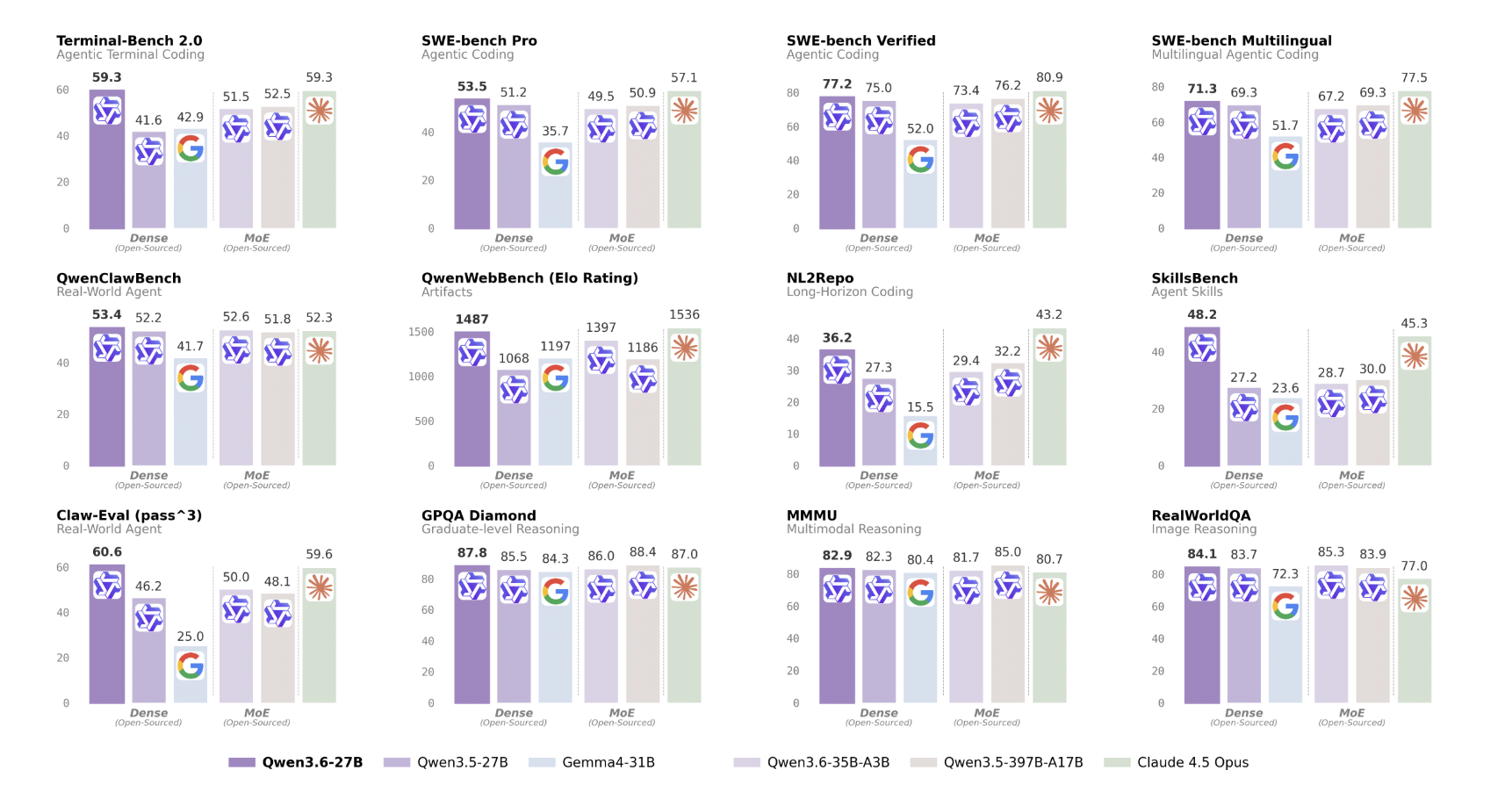

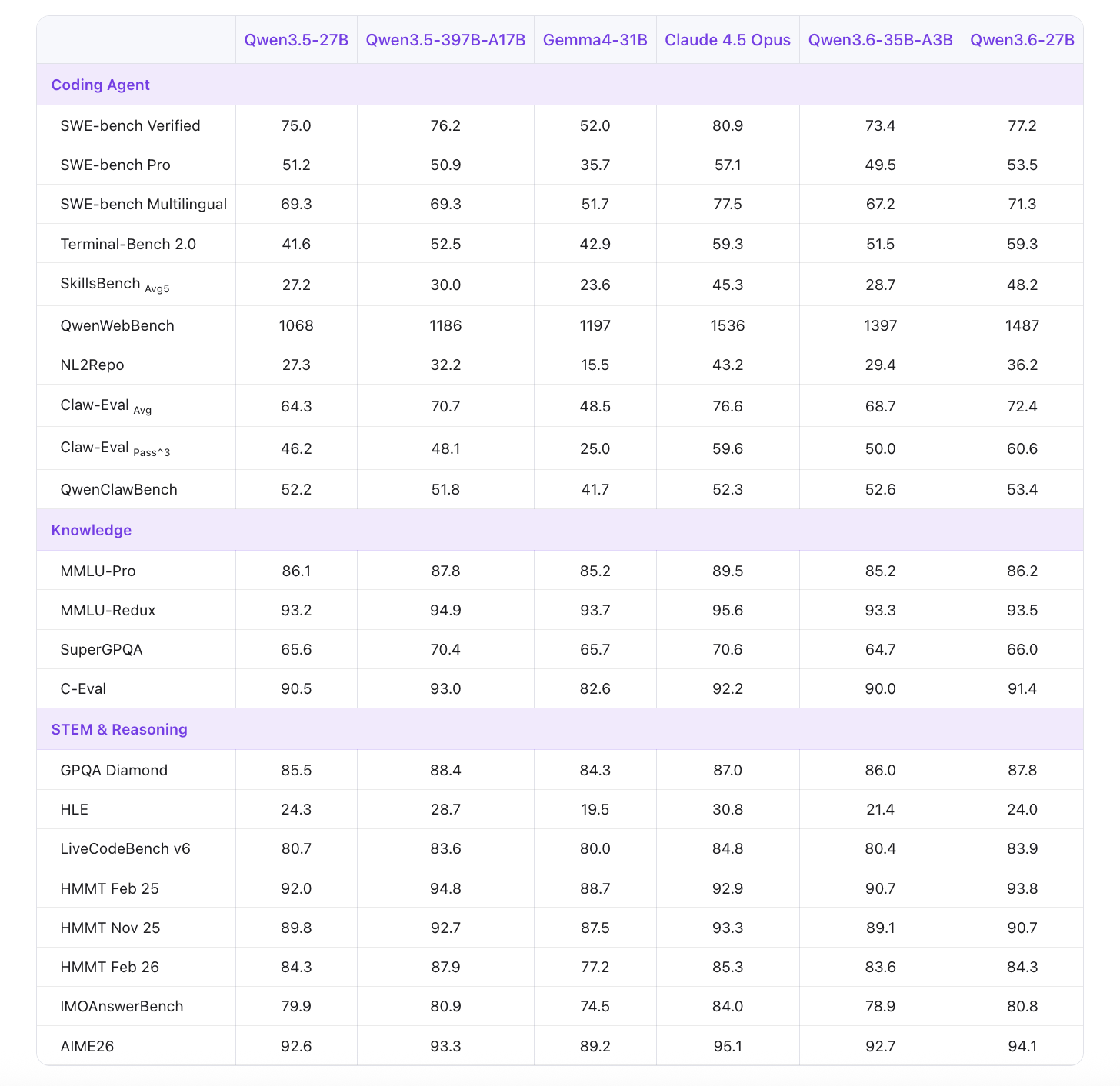

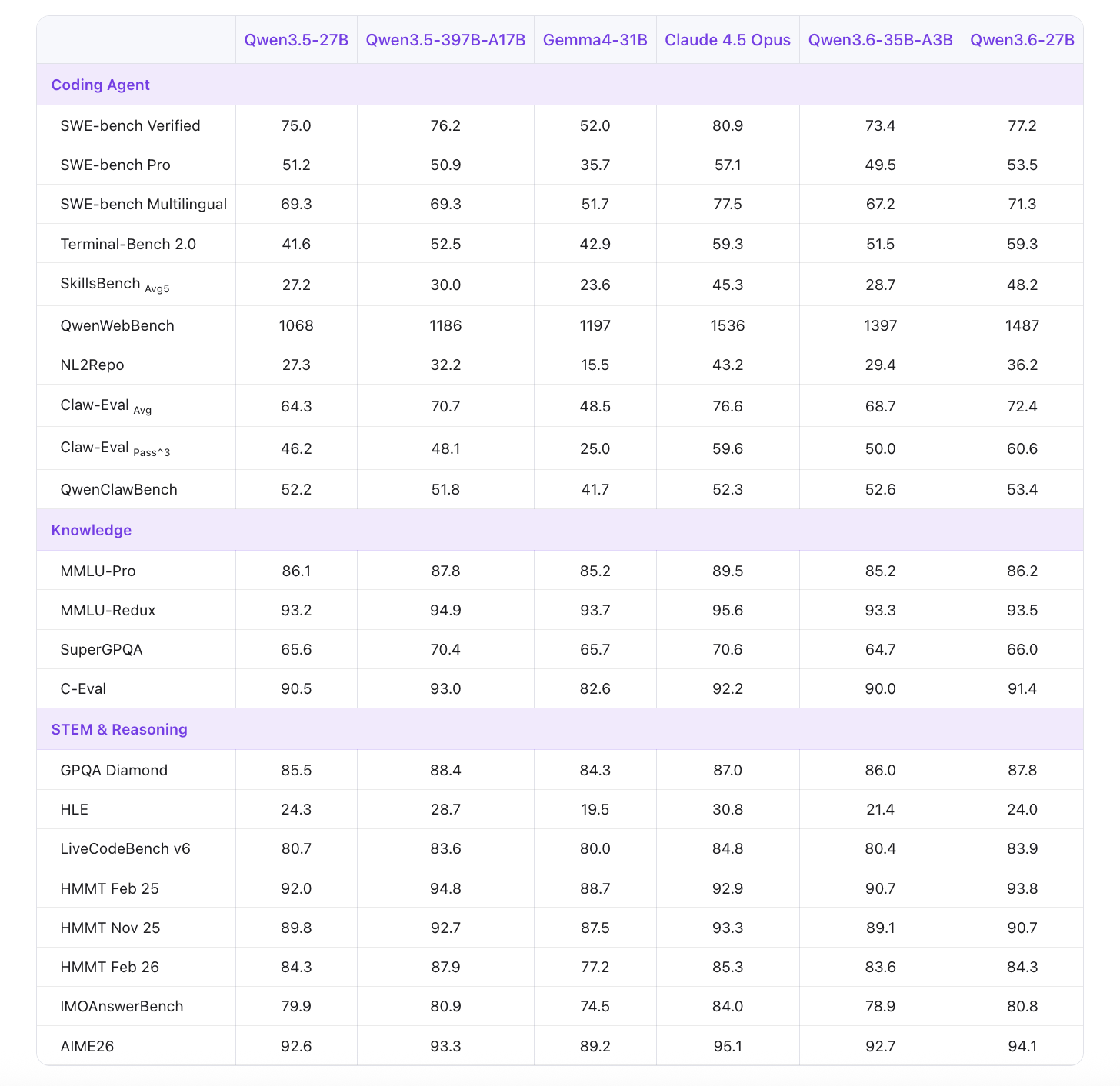

Agentic coding is the first major improvement. The model is specifically tuned to handle front-end workflows and repository-level reasoning: tasks that require understanding a large codebase, navigating file structures, editing across multiple files, and producing consistent, usable output. On QwenWebBench, an internal bilingual (EN/CN) front-end code generation benchmark covering seven categories (Web Design, Web Applications, Games, SVG, Data Visualization, Animation, and 3D), Qwen3.6-27B scores 1487, a significant jump from 1068 for Qwen3.5-27B, and ahead of Qwen3.6-35B-A3B as well. On NL2Repo, which tests repository-level code generation, the model scores 36.2 compared to 27.3 for Qwen3.5-27B. On SWE-bench Verified, the community standard for autonomous software engineering agents, it reaches 77.2, up from 75.0, and is competitive with Claude 4.5 Opus's 80.9.

Thinking Preservation is the second, and arguably more architecturally interesting, addition. By default, most LLMs only keep the chain-of-thought (CoT) generated for the user's current message; reasoning from previous turns is discarded. Qwen3.6 introduces a new option, enabled with "chat_template_kwargs": {"preserve_thinking": True} in the API, to retain and reuse reasoning traces from historical messages throughout the conversation. For iterative agent workflows this is especially important: the model carries forward its previous reasoning context rather than re-deriving it each turn. This can reduce overall token usage by cutting redundant reasoning, and it can improve KV cache utilization.
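A minimal sketch of what such a request body might look like for an OpenAI-compatible chat endpoint is shown below. Only the `chat_template_kwargs` / `preserve_thinking` pair comes from the article; the helper name `build_chat_request`, the placement of the field at the top level of the body, and the example messages are assumptions for illustration.

```python
import json


def build_chat_request(messages, preserve_thinking=True,
                       model="Qwen/Qwen3.6-27B"):
    """Build a chat-completions style request body (hypothetical helper).

    Assumption: the serving framework accepts `chat_template_kwargs`
    as a top-level field of the JSON body.
    """
    return {
        "model": model,
        "messages": messages,
        # The option named in the article: keep reasoning traces from
        # earlier turns instead of dropping them.
        "chat_template_kwargs": {"preserve_thinking": preserve_thinking},
    }


if __name__ == "__main__":
    history = [
        {"role": "user", "content": "Find the failing test in this repo."},
        {"role": "assistant", "content": "The failure is in test_parser.py."},
        {"role": "user", "content": "Now propose a fix."},
    ]
    body = build_chat_request(history)
    # Python's True is serialized as JSON true.
    print(json.dumps(body, indent=2))
```

With a client library that wraps the request, the same field would typically be passed through whatever mechanism the client offers for extra body parameters; check the serving framework's documentation for the exact placement.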

Under the Hood: Hybrid Architecture

Qwen3.6-27B is a causal language model with a vision encoder. It is natively multimodal, supporting text, image, and video input, and was trained through both pre-training and post-training stages.

The model has 27B parameters distributed across 64 layers, with a hidden dimension of 5120 and a vocabulary of 248,320 tokens (padded). The hidden layout follows a distinctive repeating pattern: 16 blocks, each structured as 3 × (Gated DeltaNet → FFN) → 1 × (Gated Attention → FFN). This means that three out of every four sublayers use Gated DeltaNet, a form of linear attention, and only one out of four uses Gated Attention.
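The repeating pattern can be enumerated in a few lines. This is only a sketch of the layout as described above; the function name and the layer labels are made up for illustration and do not correspond to identifiers in the released code.

```python
from collections import Counter


def build_layer_pattern(num_blocks=16, deltanet_per_block=3):
    """List the token-mixing sublayer type for each of the 64 layers.

    Each entry is implicitly followed by an FFN, per the described layout.
    Labels are illustrative, not names from the model's config.
    """
    pattern = []
    for _ in range(num_blocks):
        pattern.extend(["gated_deltanet"] * deltanet_per_block)
        pattern.append("gated_attention")
    return pattern


if __name__ == "__main__":
    layers = build_layer_pattern()
    print(len(layers))        # 64
    print(Counter(layers))    # 48 gated_deltanet, 16 gated_attention
    print(layers[:4])         # first block: three DeltaNet, then attention
```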

What is Gated DeltaNet? Standard attention computes relationships between all pairs of tokens, which scales quadratically (O(n²)) with sequence length and becomes expensive for long contexts. Linear attention methods such as DeltaNet approximate this with linear complexity (O(n)), making them much faster and more memory efficient. Gated DeltaNet adds a gating mechanism on top, essentially learning when to update or retain information, similar in spirit to LSTM gating but applied to the attention computation. In Qwen3.6-27B, Gated DeltaNet sublayers use 48 linear attention heads for values (V) and 16 for queries and keys (QK), with a head dimension of 128.
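To make the idea concrete, here is a single-head, pure-Python sketch of a gated delta-rule update as it is commonly written in the linear attention literature: S_t = α_t · S_{t-1} · (I − β_t k_t k_tᵀ) + β_t v_t k_tᵀ, with output o_t = S_t q_t. Whether Qwen3.6-27B implements exactly this recurrence is an assumption; the names `alpha` (decay gate), `beta` (write strength), and `gated_delta_step` are illustrative.

```python
def gated_delta_step(S, q, k, v, alpha, beta):
    """One recurrent step of a gated delta rule (illustrative sketch).

    S     : d_v x d_k memory matrix as a list of rows
    q, k  : query and key vectors of length d_k
    v     : value vector of length d_v
    alpha : decay gate in [0, 1]; 1 keeps the old memory, 0 forgets it
    beta  : write strength in [0, 1]; 1 fully overwrites the slot for k
    """
    d_v, d_k = len(v), len(k)
    new_S = []
    for i in range(d_v):
        # What the decayed memory currently returns for this key.
        predicted = alpha * sum(S[i][j] * k[j] for j in range(d_k))
        # Delta rule: move the stored value toward v along direction k.
        row = [alpha * S[i][j] + beta * (v[i] - predicted) * k[j]
               for j in range(d_k)]
        new_S.append(row)
    out = [sum(new_S[i][j] * q[j] for j in range(d_k)) for i in range(d_v)]
    return new_S, out


if __name__ == "__main__":
    S = [[0.0, 0.0], [0.0, 0.0]]
    key = [1.0, 0.0]
    # Write a value, then overwrite it under the same key.
    S, out = gated_delta_step(S, key, key, [2.0, 3.0], alpha=1.0, beta=1.0)
    print(out)  # [2.0, 3.0]
    S, out = gated_delta_step(S, key, key, [5.0, 7.0], alpha=1.0, beta=1.0)
    print(out)  # [5.0, 7.0]
```

The memory `S` has a fixed size regardless of how many tokens have been processed, which is where the O(n) cost over a sequence comes from: each token costs one constant-size update instead of a comparison against every earlier token.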

Gated Attention sublayers use 24 attention heads for queries (Q) and only 4 for keys and values (KV), a configuration that greatly reduces KV cache memory at inference time. These layers have a head dimension of 256 and use Rotary Position Embedding (RoPE) with a rotary dimension of 64. The FFN intermediate dimension is 17,408.
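A back-of-the-envelope estimate shows why the 4-KV-head configuration matters. The sketch below assumes that only the 16 Gated Attention layers keep a per-token KV cache, that the cache is stored in BF16 (2 bytes per value), and it ignores the fixed-size state of the Gated DeltaNet layers; the function name is hypothetical and real serving frameworks may allocate memory differently.

```python
def kv_cache_bytes(tokens, layers=16, kv_heads=4, head_dim=256,
                   bytes_per_value=2):
    """Estimated KV cache size: keys and values for every attention layer."""
    return tokens * layers * 2 * kv_heads * head_dim * bytes_per_value


if __name__ == "__main__":
    per_token = kv_cache_bytes(1)
    full_context = kv_cache_bytes(262_144)
    print(per_token / 1024)          # 64.0 KiB per token
    print(full_context / 1024 ** 3)  # 16.0 GiB at the native 262,144 context

    # Hypothetical comparison: 24 KV heads (one per query head) instead of 4.
    print(kv_cache_bytes(1, kv_heads=24) // per_token)  # 6
```

Under these assumptions, sharing 4 KV heads across 24 query heads cuts the cache by a factor of six, and limiting full attention to 16 of the 64 layers cuts it by a further factor of four compared with a model that used attention everywhere.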

The model also uses Multi-Token Prediction (MTP), trained with multi-step prediction. At inference time, this enables speculative decoding, where the model drafts multiple candidate tokens and verifies them in parallel, to improve throughput without compromising quality.
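The draft-then-verify idea can be sketched with toy stand-ins for the two predictors. This is a generic greedy speculative decoding loop, not Qwen's MTP implementation: `draft_next` and `target_next` are hypothetical functions that map a token list to the next token, and the verification loop here runs sequentially where a real system would score all drafted positions in one forward pass.

```python
def speculative_step(prefix, draft_next, target_next, k=4):
    """Draft k tokens cheaply, then keep the prefix the target agrees with.

    Returns the tokens to append. The result always matches what greedy
    decoding with `target_next` alone would have produced.
    """
    # 1. Draft k candidate tokens with the cheap predictor.
    drafted, ctx = [], list(prefix)
    for _ in range(k):
        token = draft_next(ctx)
        drafted.append(token)
        ctx.append(token)

    # 2. Verify: accept drafted tokens until the first disagreement.
    accepted, ctx = [], list(prefix)
    for token in drafted:
        expected = target_next(ctx)
        if token != expected:
            accepted.append(expected)  # take the target's token and stop
            return accepted
        accepted.append(token)
        ctx.append(token)

    # 3. Every draft was accepted: the target supplies one bonus token.
    accepted.append(target_next(ctx))
    return accepted


def toy_target(ctx):
    """Toy 'large model': next token is the context length mod 10."""
    return len(ctx) % 10


def toy_draft(ctx):
    """Toy 'draft head': agrees with the target except at length 3."""
    return 0 if len(ctx) == 3 else len(ctx) % 10


if __name__ == "__main__":
    print(speculative_step([0], toy_draft, toy_target))   # [1, 2, 3]
    print(speculative_step([0], toy_target, toy_target))  # [1, 2, 3, 4, 5]
```

In the first call two drafted tokens are accepted and the third is corrected, so three tokens are produced in one verification round; in the second call the draft is perfect and the round yields five tokens.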

Context Window: 262K Native, 1M with YaRN

By default, Qwen3.6-27B supports a context length of 262,144 tokens, enough to hold a large codebase or a book-length document. For workloads that exceed this, the model supports YaRN (Yet another RoPE extensioN) scaling, which extends the window up to 1,010,000 tokens. The Qwen team advises keeping the context length at a minimum of 128K tokens to preserve the model's thinking capabilities.
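The relationship between the native and extended windows can be worked out directly. The sketch below computes the minimum scaling factor implied by the two figures above and places it in a rope-scaling style dictionary. The key names follow the convention Hugging Face Transformers uses for YaRN settings; whether the Qwen3.6-27B configuration uses exactly these keys, and the choice of rounding the factor up to a whole number, are assumptions.

```python
import math

NATIVE_CONTEXT = 262_144    # from the article
TARGET_CONTEXT = 1_010_000  # from the article


def min_yarn_factor(target, native=NATIVE_CONTEXT):
    """Smallest scaling factor that stretches `native` to cover `target`."""
    return target / native


if __name__ == "__main__":
    factor = min_yarn_factor(TARGET_CONTEXT)
    print(round(factor, 2))  # 3.85

    # Illustrative settings only; the rounded-up factor is an assumption.
    rope_scaling = {
        "rope_type": "yarn",
        "factor": float(math.ceil(factor)),
        "original_max_position_embeddings": NATIVE_CONTEXT,
    }
    print(rope_scaling)
```

Consult the model card for the recommended values before enabling this in a serving framework, since long-context scaling settings can affect quality on shorter inputs.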

Benchmark Performance

On agentic coding benchmarks, the gains over Qwen3.5-27B are substantial. On SWE-bench Pro, the model scores 53.5 compared to 51.2 for Qwen3.5-27B and 50.9 for the much larger Qwen3.5-397B-A17B, meaning the dense 27B model beats the 397B MoE on this task. SWE-bench Multilingual comes in at 71.3 versus 69.3 for Qwen3.5-27B. Terminal-Bench 2.0, tested with a 3-hour timeout, 32 CPUs, and 48 GB RAM, reaches 59.3, matching Claude 4.5 Opus exactly and outperforming Qwen3.6-35B-A3B (51.5). SkillsBench Avg5 shows the most striking gain: 48.2 compared to 27.2 for Qwen3.5-27B, a relative improvement of 77%, and also well above Qwen3.6-35B-A3B's 28.7.
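The 77% figure is a relative gain, not a difference in points. A short check using the scores quoted above (the helper name `relative_gain` is just for illustration):

```python
def relative_gain(old, new):
    """Relative improvement of `new` over `old`, in percent."""
    return (new - old) / old * 100


if __name__ == "__main__":
    # Scores quoted above: Qwen3.5-27B vs Qwen3.6-27B.
    scores = {
        "SkillsBench Avg5": (27.2, 48.2),
        "SWE-bench Pro": (51.2, 53.5),
        "SWE-bench Multilingual": (69.3, 71.3),
    }
    for name, (old, new) in scores.items():
        print(f"{name}: +{new - old:.1f} points, "
              f"{relative_gain(old, new):.1f}% relative")
```

For SkillsBench Avg5 this gives a gain of 21.0 points, or roughly 77% relative to the Qwen3.5-27B baseline, while the SWE-bench gains are a few percent in relative terms.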

On broader reasoning and coding benchmarks, the model scores 87.8 on GPQA Diamond (up from 85.5), 94.1 on AIME26 (up from 92.6), and 83.9 on LiveCodeBench v6 (up from 80.7).

Vision-language benchmarks show consistent parity or improvement over Qwen3.5-27B. The model scores 87.7 on VideoMME (with subtitles), 70.3 on AndroidWorld (visual agent), and 97.0 on VlmsAreBlind, which probes common failure modes in visual perception.

Key Takeaways

- Qwen3.6-27B is Alibaba's first dense open-weight model in the Qwen3.6 family, built to prioritize real-world coding utility over benchmark performance and licensed under Apache 2.0.

- The model introduces Thinking Preservation, a new feature that keeps reasoning traces throughout the conversation history, reducing redundant token generation and improving KV cache efficiency in multi-turn agent workflows.

- Agentic coding performance is a key strength: Qwen3.6-27B scores 77.2 on SWE-bench Verified, 59.3 on Terminal-Bench 2.0 (matching Claude 4.5 Opus), and 1487 on QwenWebBench, outperforming its predecessor Qwen3.5-27B and, on several benchmarks, the much larger Qwen3.5-397B-A17B MoE model.

- The architecture uses a hybrid Gated DeltaNet + Gated Attention layout across all 64 layers: three out of four sublayers use efficient linear attention (Gated DeltaNet), and Multi-Token Prediction (MTP) enables speculative decoding at inference time.

- Two weight variants are available on the Hugging Face Hub, Qwen3.6-27B (BF16) and Qwen3.6-27B-FP8 (fine-grained FP8 with a block size of 128). Both support SGLang, vLLM, KTransformers, and Hugging Face Transformers, with a native context window of 262,144 tokens that extends to 1,010,000 tokens with YaRN.
