The company's smart memory in Amazon Bedrock with Amazon Neptune and Mem0

This post was written by Shawn Tsai of Trend Micro.

Delivering relevant, context-aware responses is critical to customer satisfaction. An enterprise-grade AI chatbot must understand not only the current question but also the organizational context behind it. The company's intelligent memory in Amazon Bedrock, powered by Amazon Neptune and Mem0, gives AI agents a persistent, enterprise-specific context, so that they can learn, adapt, and respond intelligently across multiple interactions. Trend Micro, one of the world's largest anti-virus software companies, has developed Trend's Companion chatbot so that its customers can explore information through natural, conversational interaction.

Trend Micro aims to develop its AI chatbot service to deliver personalized, context-aware support to business customers. To do so, the chatbot needs to keep a conversation history for continuity, reference company-specific information at scale, and ensure that its memory remains accurate, secure, and up to date. The challenge lies in combining the long-term memory of organizational information with the short-term memory of ongoing conversations, while supporting company-wide information sharing. In collaboration with the AWS team, including the AWS Generative AI Innovation Center, Trend Micro tackled this challenge using Amazon Neptune, Amazon OpenSearch Service, and Amazon Bedrock, as detailed in this post.

Solution overview

Trend Micro built the company's smart memory in Amazon Bedrock by integrating multiple AWS services. Amazon Neptune maintains a graph of company-specific information, representing organizational relationships, processes, and data to enable accurate and systematic retrieval. Mem0 holds short-term conversational memory for immediate context and long-term memory for information that persists across sessions. Amazon Bedrock orchestrates the AI agent workflows and is integrated with both Neptune and Mem0 so that agents can acquire and use situational information during inference. This combination allows the chatbot to remember relevant history, retrieve structured company information, and give tailored, detailed responses, helping to improve the user experience.

Memory creation and renewal

The architecture begins by capturing user messages and extracting entities, relationships, and potential memories using Anthropic's Claude model in Amazon Bedrock. These are then embedded with Amazon Titan Text Embeddings in Amazon Bedrock and used to search both Amazon OpenSearch Service and Amazon Neptune. Relevant entities and memories are retrieved and updated by the model before being re-embedded and written back to OpenSearch Service and Neptune. This closed-loop process ensures that business-related memories are continuously updated and that the knowledge graph in Neptune stays current with the details of the conversation.
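The extract, embed, search, update, and write-back loop described above can be sketched in plain Python. This is a minimal illustration under loud assumptions, not Trend Micro's implementation: `toy_extract` stands in for the Claude extraction call, `toy_embed` for the Titan embedding call, and `MemoryStores` for OpenSearch Service and Neptune. All names, the 0.8 similarity threshold, and the rule that a new triple replaces an older one with the same subject and relation are hypothetical.

```python
import math
from collections import Counter
from dataclasses import dataclass, field


def toy_embed(text: str) -> Counter:
    """Stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def toy_extract(message: str):
    """Stand-in for LLM extraction: every sentence is a memory, and a
    three-word sentence is also read as a (subject, relation, object) triple."""
    triples, memories = [], []
    for sentence in message.split("."):
        words = sentence.split()
        if not words:
            continue
        memories.append(" ".join(words))
        if len(words) == 3:
            triples.append((words[0], words[1], words[2]))
    return triples, memories


@dataclass
class MemoryStores:
    """In-memory stand-ins for the vector index and the graph."""
    vectors: dict[str, tuple[str, Counter]] = field(default_factory=dict)  # id -> (text, embedding)
    triples: set[tuple[str, str, str]] = field(default_factory=set)


def update_memory(stores: MemoryStores, message: str, threshold: float = 0.8) -> None:
    triples, memories = toy_extract(message)
    for text in memories:
        emb = toy_embed(text)
        # Search: find the closest memory already stored.
        best_id, best_score = None, 0.0
        for mem_id, (_, stored) in stores.vectors.items():
            score = cosine(emb, stored)
            if score > best_score:
                best_id, best_score = mem_id, score
        # Update in place when similar enough, otherwise create a new entry.
        if best_id is not None and best_score >= threshold:
            target = best_id
        else:
            target = f"mem-{len(stores.vectors)}"
        stores.vectors[target] = (text, emb)  # re-embed and write back
    for subj, rel, obj in triples:
        # Assumption: a new triple supersedes any triple with the same subject and relation.
        stores.triples = {t for t in stores.triples if (t[0], t[1]) != (subj, rel)}
        stores.triples.add((subj, rel, obj))
```

Calling `update_memory` twice with the same message leaves a single stored memory, while a later message such as "Alice manages Support." replaces the earlier triple for the same subject and relation. In a real system the model, rather than a fixed threshold, would decide whether to merge, update, or add a memory.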

Retrieving memory

When handling user queries, the system uses the same Amazon Titan embedding pipeline in Amazon Bedrock to search both the vector embeddings in OpenSearch Service and the business triples in Neptune. The retrieved memories are then reranked using the Amazon Bedrock Rerank or Cohere Rerank models so that the most contextually relevant information is delivered. This dual retrieval strategy provides both semantic flexibility from OpenSearch Service and structured precision from Neptune, allowing the chatbot to deliver highly relevant, context-aware answers.
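The dual retrieval followed by reranking can be illustrated with a small, self-contained sketch. Everything here is an assumption made for illustration: the bag-of-words `toy_embed` stands in for the embedding model, the list scans stand in for OpenSearch Service and Neptune queries, and `toy_rerank` (query-token coverage) stands in for a rerank model. None of these names come from the original solution.

```python
import math
import re
from collections import Counter


def tokens(text: str) -> list[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


def toy_embed(text: str) -> Counter:
    """Stand-in for an embedding model: bag-of-words counts."""
    return Counter(tokens(text))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def vector_search(query: str, memories: list[str], k: int = 3) -> list[str]:
    """Semantic side: top-k memories by cosine similarity, dropping zero scores."""
    q = toy_embed(query)
    scored = [(cosine(q, toy_embed(m)), m) for m in memories]
    scored = [pair for pair in scored if pair[0] > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [m for _, m in scored[:k]]


def graph_search(query: str, triples: list[tuple[str, str, str]]) -> list[tuple[str, str, str]]:
    """Structured side: triples whose subject or object is mentioned in the query."""
    q = set(tokens(query))
    return [t for t in triples if q & (set(tokens(t[0])) | set(tokens(t[2])))]


def toy_rerank(query: str, candidates: list[str], top_n: int = 3) -> list[str]:
    """Stand-in for a rerank model: fraction of query tokens a candidate covers."""
    q = set(tokens(query))

    def score(candidate: str) -> float:
        return len(q & set(tokens(candidate))) / len(q) if q else 0.0

    return sorted(candidates, key=score, reverse=True)[:top_n]


def retrieve(query: str, memories: list[str], triples: list[tuple[str, str, str]], top_n: int = 3) -> list[str]:
    semantic = vector_search(query, memories)
    structured = [f"{s} {r} {o}" for s, r, o in graph_search(query, triples)]
    # Merge both result sets, removing duplicates while keeping order.
    candidates = list(dict.fromkeys(semantic + structured))
    return toy_rerank(query, candidates, top_n)
```

Merging before reranking means a single scoring step decides the final order, so a precise graph fact can outrank a loosely related text memory, and the reverse.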

Response-memory mapping and human-in-the-loop feedback

For each AI response, the system maps the sentences of the response to the memories they draw on, generating a memory test report. Users are then given the option to approve or reject each mapped memory. Approved memories remain part of the knowledge base, while rejected ones are removed from both OpenSearch Service and Neptune. This ensures that only verified and reliable information is carried forward. The human-in-the-loop process strengthens trust, helps continuously improve memory accuracy, and gives business customers direct influence over the development of their AI experience.
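A rough sketch of this mapping and feedback step is shown below. It is only an illustration: the token-overlap matching, the `min_overlap` parameter, and the `Stores` class (an in-memory stand-in for OpenSearch Service and Neptune) are assumptions, and the source does not say how sentences are actually matched to memories.

```python
import re
from dataclasses import dataclass, field
from typing import Optional


def tokens(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


@dataclass
class Memory:
    text: str
    triple: Optional[tuple[str, str, str]] = None  # matching graph edge, if any


@dataclass
class Stores:
    """In-memory stand-ins for the vector index and the graph."""
    vector_index: dict[str, Memory] = field(default_factory=dict)
    graph: set[tuple[str, str, str]] = field(default_factory=set)


def build_report(response: str, stores: Stores, min_overlap: int = 2) -> dict[str, list[str]]:
    """Map each response sentence to the ids of memories sharing enough tokens with it."""
    report = {}
    for sentence in re.split(r"(?<=[.!?])\s+", response.strip()):
        words = tokens(sentence)
        ids = [
            mem_id
            for mem_id, mem in stores.vector_index.items()
            if len(words & tokens(mem.text)) >= min_overlap
        ]
        if ids:
            report[sentence] = ids
    return report


def apply_feedback(stores: Stores, decisions: dict[str, bool]) -> None:
    """Keep approved memories; delete rejected ones from both stores."""
    for mem_id, approved in decisions.items():
        if approved:
            continue
        memory = stores.vector_index.pop(mem_id, None)
        if memory is not None and memory.triple is not None:
            stores.graph.discard(memory.triple)
```

A caller would show the report to the user, collect one approve or reject decision per memory id, and pass those decisions to `apply_feedback`. Deleting from both stores in the same step keeps the vector index and the graph from drifting apart.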

How Amazon Neptune works

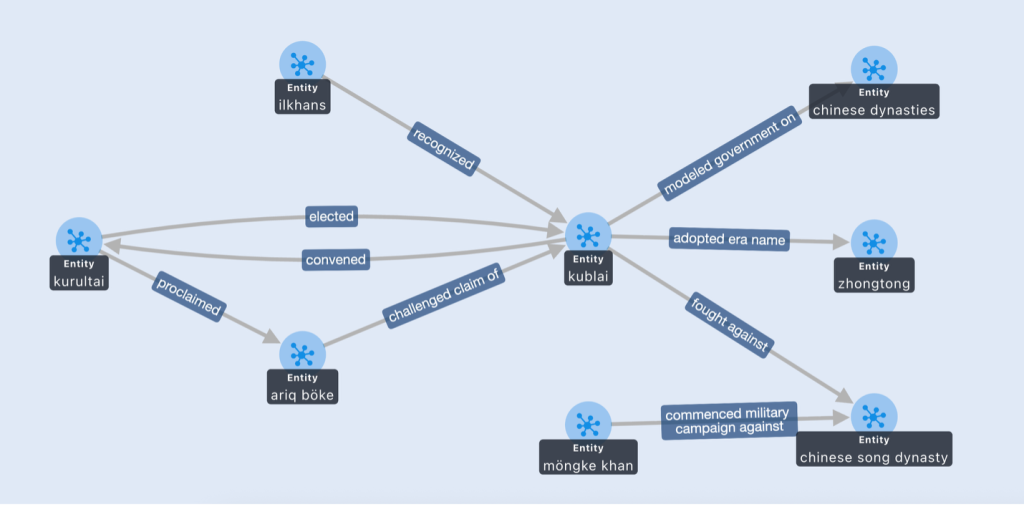

To illustrate how Amazon Neptune improves chatbot memory, consider a customer who asks, “Who saw Kublai as emperor?” Without a knowledge graph, the AI might return a vague answer like: “Kublai was a Mongol emperor who gained recognition from different groups.” This type of response is general and lacks precision.

When the same question is asked, but the business graph in Neptune is queried and the results are placed in the context window of the large language model (LLM), the model can ground its reasoning in structured triples such as (Ilkhans, known, Kublai). The chatbot now answers more precisely: “On the basis of organizational information, Kublai was recognized by the Ilkhans as a ruler.” This before-and-after example shows how structured business relationships in Neptune allow the model to generate contextually relevant and verifiable answers.
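Placing graph results in the context window amounts to formatting the retrieved triples into the prompt. The sketch below shows one possible way to do that. The function name `build_prompt` and the prompt wording are hypothetical, and the triple is the one quoted in the example above.

```python
def build_prompt(question: str, triples: list[tuple[str, str, str]]) -> str:
    """Format graph triples as a fact list and prepend them to the question."""
    if triples:
        facts = "\n".join(f"- ({s}, {r}, {o})" for s, r, o in triples)
    else:
        facts = "- (no matching facts found)"
    return (
        "Answer the question using only the facts below. "
        "If the facts are insufficient, say so.\n\n"
        f"Facts:\n{facts}\n\n"
        f"Question: {question}\n"
        "Answer:"
    )


prompt = build_prompt(
    "Who saw Kublai as emperor?",
    [("Ilkhans", "known", "Kublai")],
)
print(prompt)
```

Keeping each triple on its own line makes it straightforward to trace a sentence in the answer back to the fact that supports it.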

Conclusion and next steps

As described in the AWS Trend Micro case study, Trend Micro uses AWS to help deliver a more secure, scalable, and intelligent customer experience. Building on this foundation, Trend Micro is combining Amazon Bedrock, Amazon Neptune, Amazon OpenSearch Service, and Mem0 to create a persistent, enterprise-specific AI chatbot that delivers intelligent, context-aware conversations at scale. By combining graph-based information with generative AI, Trend Micro expects to improve response quality and deliver clearer, more accurate answers, while establishing a foundation for AI systems that continually adapt to evolving organizational information. This functionality is still being tested and refined to improve the end-user experience.

Looking ahead, Trend Micro is exploring future enhancements such as extensive graphing, additional update pipelines, and multi-language support. For readers who want to go deeper, we recommend the GitHub sample implementation, which includes the source code, and the Amazon Neptune documentation for more technical details and inspiration.

About the authors