Google Launches T5Gemma 2: Decoder Models for Multimodal Inputs with SigLIP and 128K Content

Google has released it T5Gemma 2open family encoder-decoder Transformer checkpoints are built to adapt Gemma 3 pre-trained weights into an encoder-decoder structure, then continues to pre-train with UL2 purpose. Liberation is something pre-trained onlyintended for developers to train for specific tasks, and Google makes that clear not issuing post-training or IT audits in this fall.

The T5Gemma 2 is positioned as the encoder-decoder counterpart to the Gemma 3 that retains the same low-level building blocks, and adds 2 structural changes aimed at better mini-model performance. The models inherit Gemma 3 features that are important for implementation, in particular multimodality, long context up to 128K tokensand extensive coverage in multiple languages, and a blog that mentions it more than 140 languages.

What exactly did Google release?

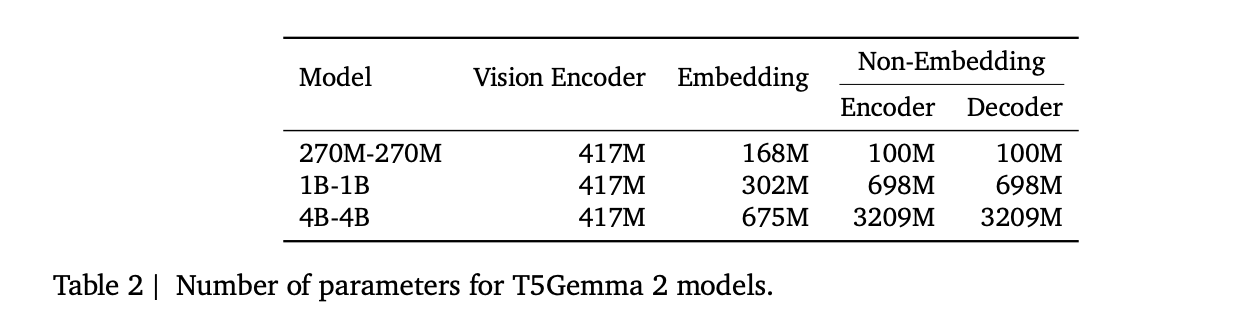

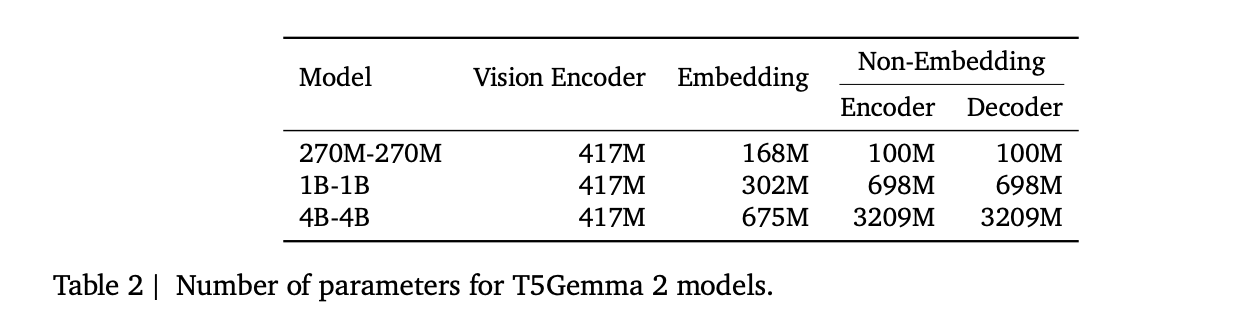

Release includes 3 pre-trained sizes, 270M-270M, 1B-1Bagain 4B-4Bwhere the definition means that the encoder and decoder are the same size. The research team reports almost absolute values without the vision coder, about 370M, 1.7Bagain 7B boundaries. Multimodal accounting calculates a 417M parameter view encoder, and encoder and decoder parameters are broken down into embeddings and non-embeddings.

Adaptation, coder without training from scratch

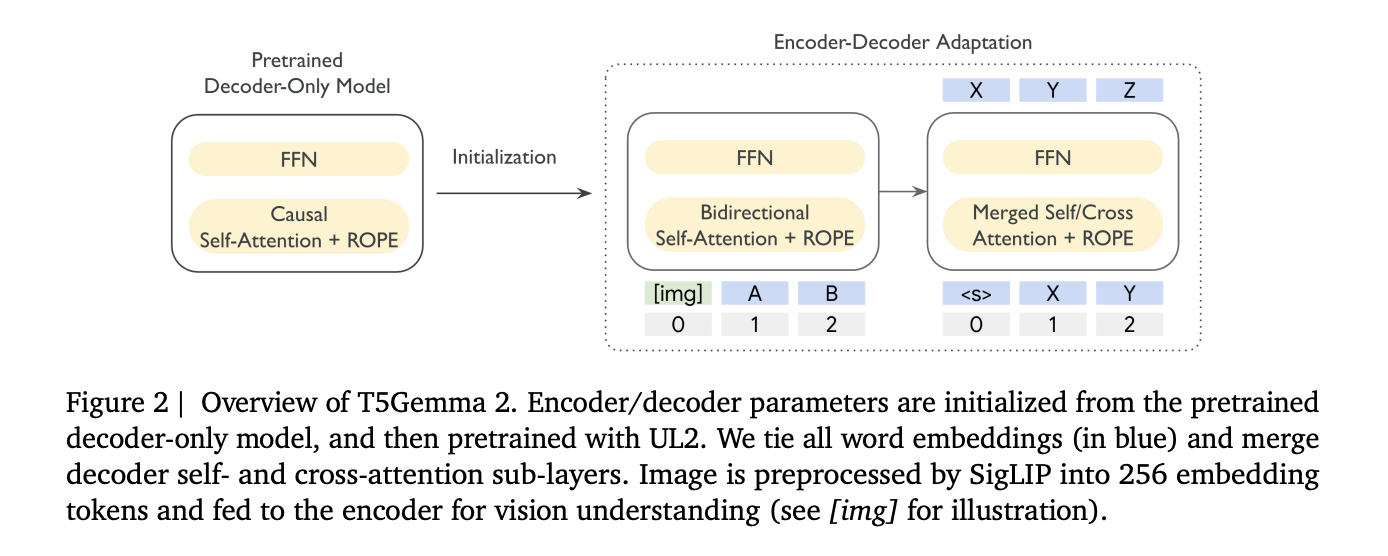

T5Gemma 2 follows the same adaptation concept introduced in T5Gemma, run the decoder model in the decoder test environment only, then adapt to UL2. In the figure above the research team shows the encoder and decoder parameters initialized from a pre-trained decoder-only model, then pre-trained with UL2, with images first converted by SigLIP into 256 tokens.

This is important because the encoder-decoder splits the work, the encoder can read full input twice, while the decoder focuses on automatic generation. The research team says this classification can help in long-term context tasks where the model has to find the right evidence for large inputs before production.

Two performance changes that are easy to miss but affect the smaller models

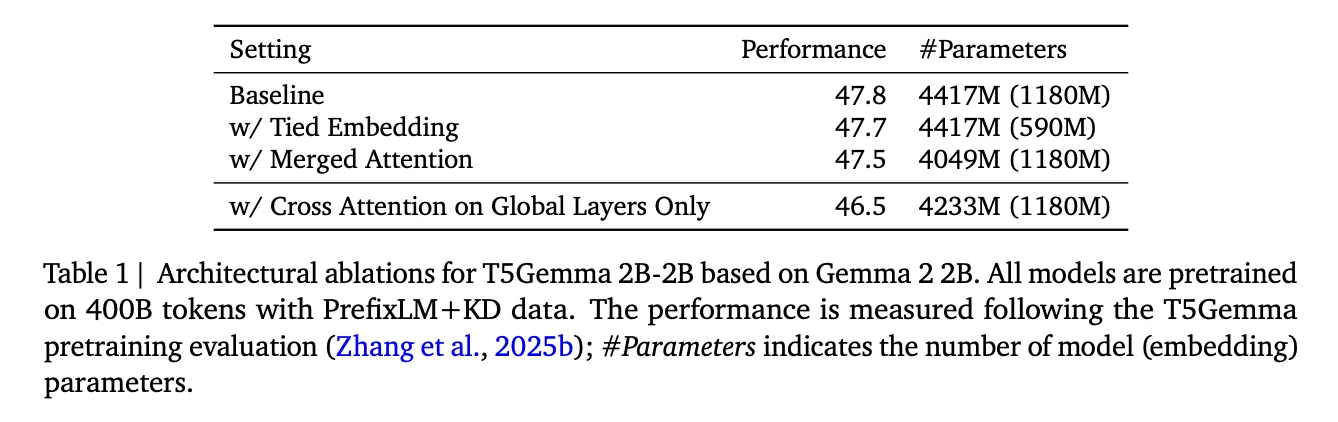

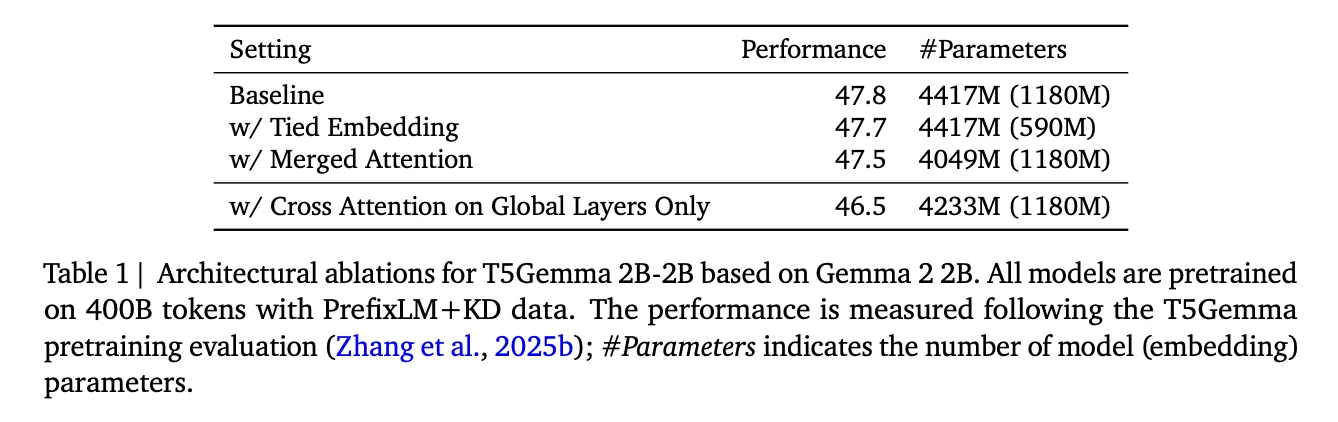

First, the T5Gemma 2 uses integrated word embedding for all encoder input embeddings, decoder input embeddings, and decoder output or softmax embeddings. This reduces the multiplicity of the parameter, and refers to the output that shows the least change in quality while reducing the embedding parameters.

Second, it presents combined attention in the video. Instead of separate sub-objects of self-attention and cross-attention, the decoder performs a single-attention task there K and V they are formed by concatenating the encoder output and decoder states, and the masking preserves the transparency of the decoder tokens. This guarantees easy implementation, because it minimizes the difference between the modified decoder and the original Gemma-style decoder stack, and reports parameter savings with less average quality degradation in their outputs.

Multimodality, image understanding is the input side, not the output side

T5Gemma 2 is multimodal by reusing Gemma 3's vision encoder and saving snow during training. Perception tokens are always provided by the encoder and encoding tokens are fully visible to each other by paying attention to yourself. This is a pragmatic encoder-decoder design, the encoder combines image tokens and text tokens into context representations, and the decoder can then take care of those representations while generating text.

On the equipment side, the T5Gemma 2 is ranked under the image-text-to-text pipeline, such as research structure, image in, text input, text output. That example pipeline is a very fast way to verify the end of a multimodal path, including dtype options like bfloat16 and default device mapping.

Long thread at 128K, what does that do

Google researchers say that the 128K context window is compatible with Gemma 3 exchanges local and global attention way. The Gemma 3 team describes a repeating pattern of 5 to 1, 5 area sliding window focus layers followed by 1 global attention layerwith a local window size of 1024. This design reduces the growth of the KV cache in relation to making all layers global, which is one of the reasons why it is possible to do it in small steps.

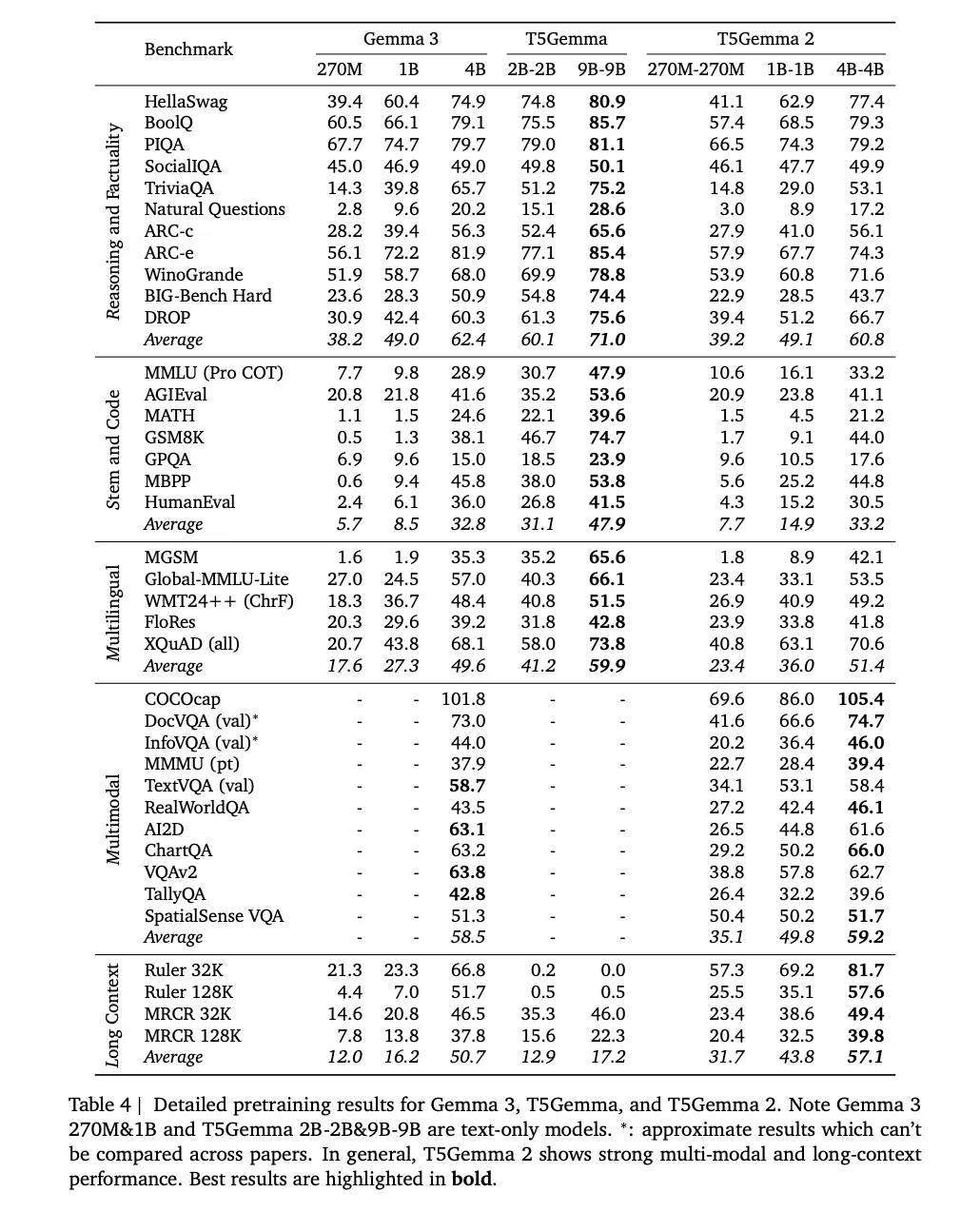

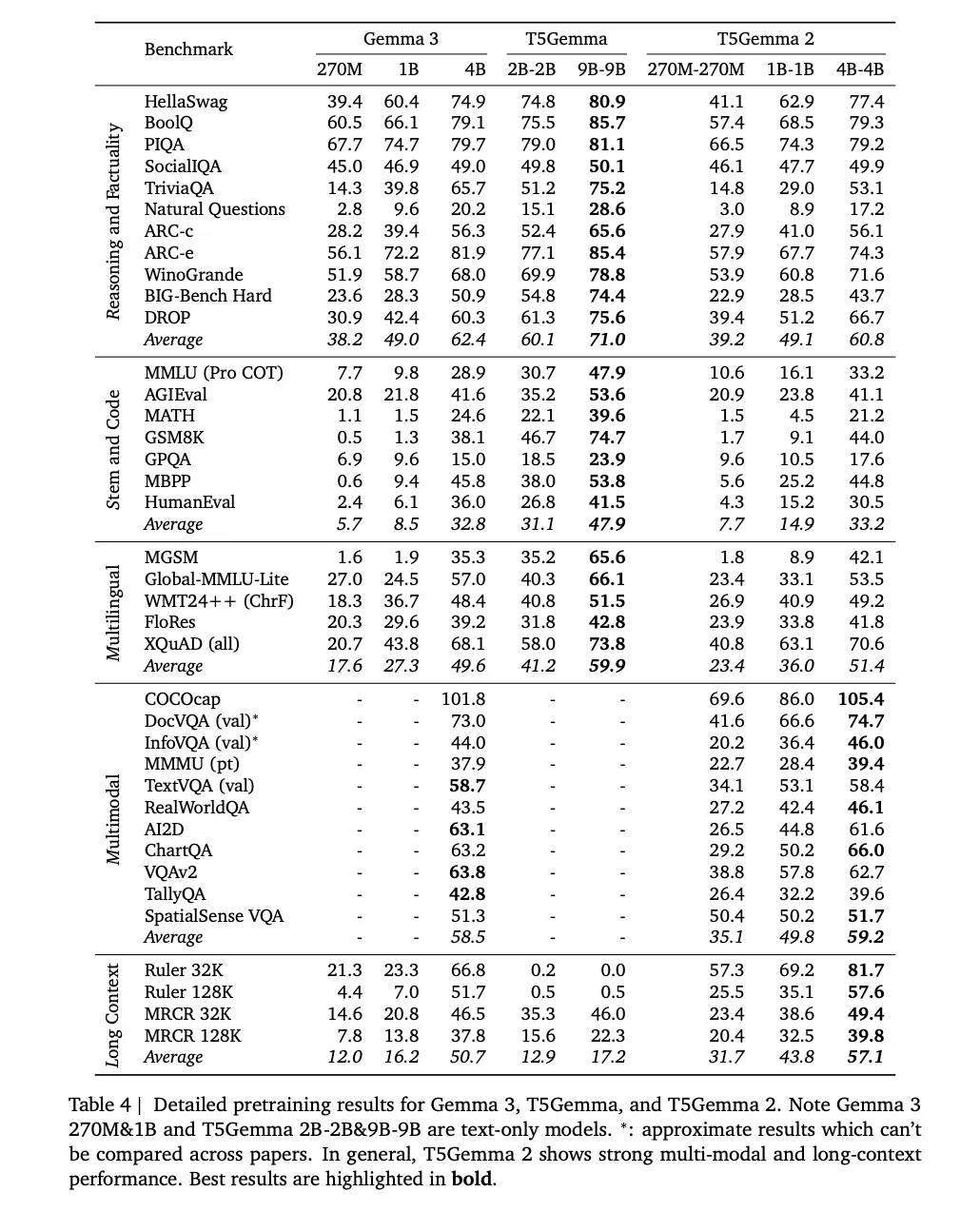

In T5Gemma 2, the research team also talks about adoption spatial translation methods in a long context, and train themselves to sequence until 16K input paired with 16K target outputand test the performance of long content up to 128K in benchmarks including THE GOVERNOR again MRCR. The detailed pre-training results table includes tests 32K and 128K, showing the deltas of the long context they are looking for with Gemma 3 at the same rate.

Training setup and “pre-trained only” means to users

The research team says the models are pre-trained 2T tokens and defines a training setup including a batch size of 4.2M tokenscosine rate learning decomposition with 100 Warmup steps, global gradient clipping at 1.0and a test area with a final rating 5 checkpoints.

Key Takeaways

- T5Gemma 2 is a decoder family derived from Gemma 3 and continued with UL2it also uses the pretrained weights of Gemma 3, and uses the same UL2-based normalization recipe used in T5Gemma.

- Google released only pre-trained checkpointsNo trained posts or different tuned instructions are included in this download, so the use of the stream requires your own training and testing of the posts.

- Multimodal input is handled by a SigLIP vision encoder that outputs 256 image tokens and remains frozen.those view tokens go into the encoder, the recorder produces the text.

- The two parameter efficiency variables are moderateintegrated word embedding shares encoder, chip, and output embedding, integrated attention combines decoder attention and attention into a single module.

- Long core up to 128K enabled by Gemma 3's integrated attention design5 iterative local smoothing window layers with window size 1024 followed by 1 global layer, and T5Gemma 2 inherits this mechanism.

Check it out Paper, Technical details again The model of the face size. Also, feel free to follow us Twitter and don't forget to join our 100k+ ML SubReddit and Subscribe to Our newspaper. Wait! are you on telegram? now you can join us on telegram too.

I am a Civil Engineering Graduate (2022) from Jamia Millia Islamia, New Delhi, and I am very interested in Data Science, especially Neural Networks and its application in various fields.