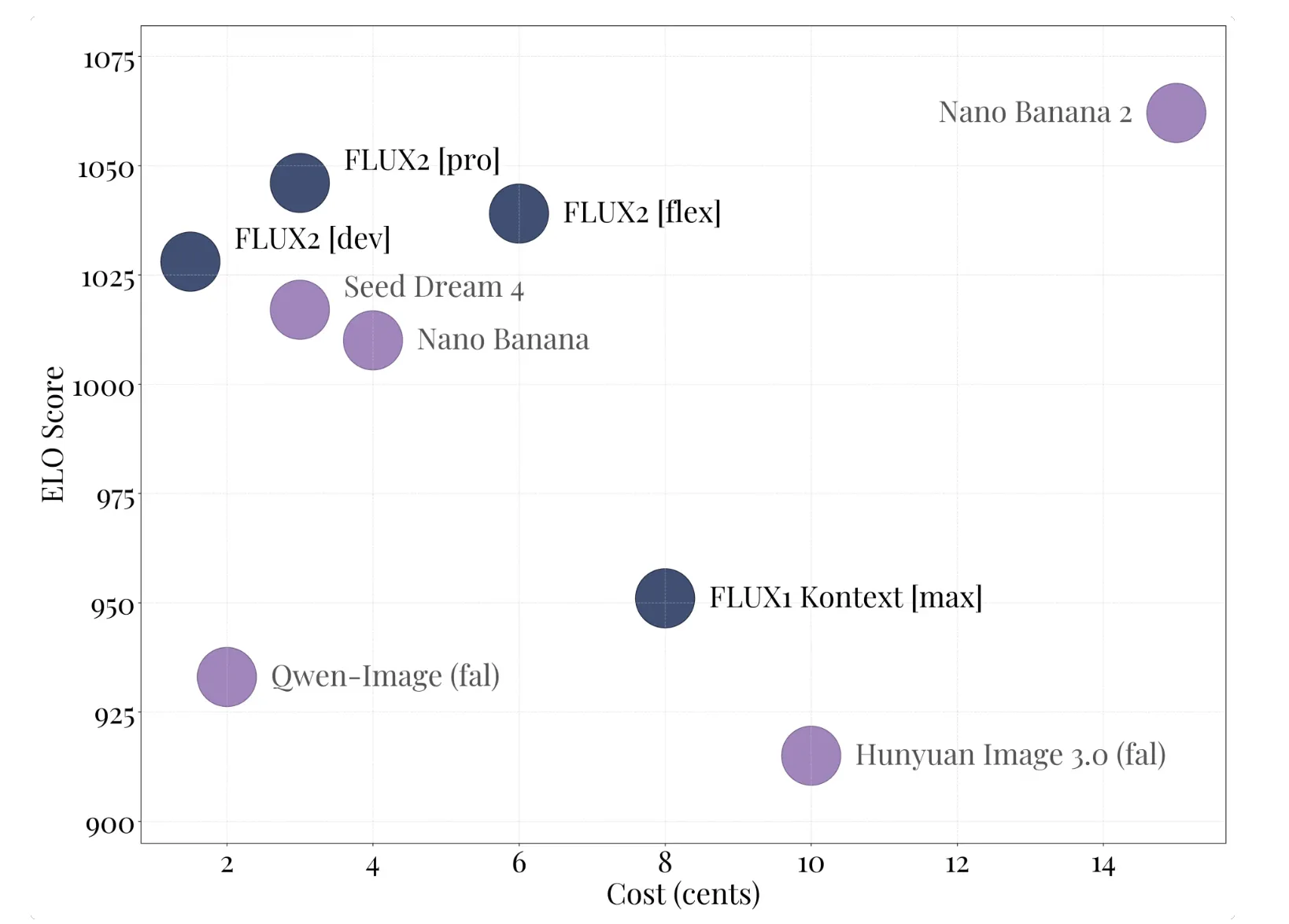

Black Forest Labs Releases FLUX.2: A 32B Flow Matching Transformer for Production Image Pipelines

Black Forest Labs has released FLUX.2, its second generation image generation and editing system. FLUX.2 targets real-world workflows such as marketing assets, product visualization, and complex design work, with editing support up to 4 megapixels and strong control over layouts, logos, and typography.

FLUX.2 product family and FLUX.2 [dev]

The FLUX.2 family spans hosted APIs and open weights:

- FLUX.2 [pro] is the managed API tier. It targets state of the art quality relative to closed models, with high prompt adherence and low inference cost, and is available in the BFL Playground, the BFL API, and partner platforms.

- FLUX.2 [flex] exposes parameters such as the number of steps and the guidance scale, so developers can trade off latency, text rendering accuracy, and visual detail.

- FLUX.2 [dev] is the open weight checkpoint, derived from the base FLUX.2 model. It is described as the most powerful open weight image generation and editing model, combining text-to-image and multi-image editing in one checkpoint, with 32 billion parameters.

- FLUX.2 [klein] is the open source Apache 2.0 variant, size-distilled from the base model for smaller setups, with many of the same capabilities.

All variants support image editing from text and multiple references in a single model, which removes the need to maintain separate checkpoints for generation and editing.

Architecture, latent flow, and the FLUX.2 VAE

FLUX.2 uses a latent flow matching architecture. The core design couples a Mistral-3 24B vision language model with a rectified flow transformer that operates on latent image representations. The vision language model provides semantic grounding and world knowledge, while the transformer backbone learns spatial structure, materials, and composition.

The model is trained to map noise latents to image latents under text conditioning, so the same architecture supports both text-driven synthesis and editing. For editing, the latents are initialized from existing images, then updated under the same flow process while preserving structure.
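
The flow process described above can be illustrated with a toy sketch. This is not the FLUX.2 implementation: it assumes the common rectified flow convention of a straight path from noise at t=0 to data at t=1, uses short Python lists in place of real latents, and substitutes an oracle velocity for the trained transformer. The names `interpolate`, `velocity_target`, and `euler_sample` are hypothetical.

```python
import random


def interpolate(noise, data, t):
    """Point on the straight path between a noise latent (t=0) and a data latent (t=1)."""
    return [(1.0 - t) * n + t * d for n, d in zip(noise, data)]


def velocity_target(noise, data):
    """Regression target for a velocity network along the straight path."""
    return [d - n for n, d in zip(noise, data)]


def euler_sample(start, velocity_fn, num_steps, t_start=0.0):
    """Integrate dx/dt = velocity_fn(x, t) from t_start to 1 with fixed Euler steps."""
    x = list(start)
    dt = (1.0 - t_start) / num_steps
    for i in range(num_steps):
        t = t_start + i * dt
        v = velocity_fn(x, t)
        x = [xi + dt * vi for xi, vi in zip(x, v)]
    return x


random.seed(0)
data = [0.5, -1.0, 2.0, 0.25]  # stand-in for an encoded image latent
noise = [random.gauss(0.0, 1.0) for _ in data]


def oracle(x, t):
    # Assumption: a perfectly trained network would predict this for the pair.
    return velocity_target(noise, data)


# Generation: start from pure noise and follow the flow to t=1.
generated = euler_sample(noise, oracle, num_steps=8)

# Editing-style start: begin partway along the path from a partially
# noised latent instead of pure noise, then follow the same flow.
edit_start = interpolate(noise, data, 0.6)
edited = euler_sample(edit_start, oracle, num_steps=4, t_start=0.6)
```

In a real model the velocity comes from the text-conditioned transformer rather than an oracle, and the number of Euler steps is the kind of knob that trades speed against quality.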

A new FLUX.2 VAE defines the latent space. It is designed to balance learnability, reconstruction quality, and compression, and it is released separately on Hugging Face under the Apache 2.0 license. This autoencoder is the backbone of all FLUX.2 flow models and can also be reused in other generative systems.

Production workflow capabilities

The FLUX.2 documentation and the Diffusers integration highlight several key capabilities:

- Multi-reference support: FLUX.2 can combine up to 10 reference images to maintain character identity, product appearance, and style across outputs.

- Photoreal detail at 4MP: The model can edit and generate images of up to 4 megapixels, with improved textures, skin, fabrics, hands, and lighting suitable for product shots and photo-like use cases.

- Robust text and layout rendering: It can render complex typography, infographics, memes, and user interface layouts with small legible text, which is a common weakness of many older models.

- World knowledge and spatial reasoning: The model is trained for more grounded lighting, perspective, and composition, which reduces artifacts and the synthetic look.
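
To make the 4 megapixel ceiling concrete, here is a small sketch that picks output dimensions for a given aspect ratio. It rests on two assumptions not stated in the source: that "4 megapixels" means 2048 x 2048 pixels, and that dimensions should be multiples of 16. The helper name `fit_resolution` is hypothetical.

```python
import math

# Assumption: "4 megapixels" is read as 2048 x 2048 = 4,194,304 pixels.
MAX_PIXELS = 4 * 1024 * 1024


def fit_resolution(aspect_w, aspect_h, max_pixels=MAX_PIXELS, multiple=16):
    """Largest (width, height) near the given aspect ratio that stays within
    the pixel budget, with both sides rounded down to a multiple of `multiple`.

    The multiple-of-16 constraint is an illustrative assumption.
    """
    scale = math.sqrt(max_pixels / (aspect_w * aspect_h))
    width = int(aspect_w * scale) // multiple * multiple
    height = int(aspect_h * scale) // multiple * multiple
    return width, height


for ratio in [(1, 1), (16, 9), (3, 2), (9, 16)]:
    w, h = fit_resolution(*ratio)
    print(f"{ratio[0]}:{ratio[1]} -> {w} x {h} ({w * h / 1e6:.2f} MP)")
```

Because both sides are rounded down, the result never exceeds the pixel budget, at the cost of a slightly inexact aspect ratio.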

Key takeaways

- FLUX.2 is a 32B latent flow matching transformer that unifies text-to-image, image editing, and multi-reference composition in a single checkpoint.

- FLUX.2 [dev] is the open weight variant, paired with the Apache 2.0 FLUX.2 VAE, while the core model weights use a non-commercial license with mandatory safety filtering.

- The system supports generation and editing at up to 4 megapixels, robust text and layout rendering, and up to 10 visual references for consistent characters, products, and styles.

- Full precision inference requires more than 80GB of VRAM, but 4-bit and FP8 quantized pipelines with offloading make FLUX.2 [dev] usable on 18GB to 24GB GPUs, and even on 8GB cards with enough system RAM.
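
The VRAM figures above can be sanity-checked with back-of-the-envelope arithmetic. The sketch below counts weight storage only and makes several assumptions: "full precision" is taken to mean 16 bits per parameter, 1 GB is 1e9 bytes, and activations, the VAE, and quantization overhead are ignored. The helper name `weight_memory_gb` is hypothetical.

```python
def weight_memory_gb(num_params, bits_per_param):
    """Memory needed to hold the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


TRANSFORMER_PARAMS = 32e9   # 32B flow transformer (figure from the article)
TEXT_ENCODER_PARAMS = 24e9  # Mistral-3 24B vision language model (figure from the article)

for label, bits in [("16-bit", 16), ("FP8", 8), ("4-bit", 4)]:
    transformer = weight_memory_gb(TRANSFORMER_PARAMS, bits)
    encoder = weight_memory_gb(TEXT_ENCODER_PARAMS, bits)
    print(
        f"{label:>6}: transformer {transformer:.0f} GB, "
        f"text encoder {encoder:.0f} GB, total {transformer + encoder:.0f} GB"
    )
```

Under these assumptions the two models together need about 112 GB at 16 bits, which is consistent with the "more than 80GB" figure, while a 4-bit transformer alone needs about 16 GB, which is roughly in line with the 18GB to 24GB range once the text encoder is offloaded.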

Editorial notes

FLUX.2 is an important step for open weight visual generation, because it combines a 32B rectified flow transformer, a Mistral-3 24B vision language model, and the FLUX.2 VAE in one high-fidelity pipeline for generation and editing. Clear VRAM profiles, quantized variants, and strong integrations with Diffusers, ComfyUI, and Cloudflare Workers make it practical for real workloads, not just benchmarks. This release pushes open image models closer to production-grade infrastructure.

Michal Sutter is a data scientist with a Master of Science in Data Science from the University of Padova. With a strong foundation in statistical analysis, machine learning, and data engineering, Michal excels at turning complex data into actionable insights.