Comparing the Top 6 Inference Runtimes for LLM Serving

Large language models are now limited less by training and more by how quickly and cheaply we can serve tokens under real traffic. That comes down to three implementation details: how the runtime batches requests, how it overlaps prefill and decode, and how it stores and reuses the KV cache. Different engines make different tradeoffs along these axes, and those tradeoffs show up directly as differences in tokens per second, P50/P99 latency, and GPU memory usage.
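
To make the metrics concrete, here is a minimal sketch of how P50/P99 latency and tokens per second could be computed from raw measurements. The function names and the sample latencies are made up for illustration; the percentile uses the simple nearest-rank definition, and benchmarking tools may interpolate differently.

```python
import math

def percentile(samples, p):
    """Nearest-rank percentile: smallest sample with at least p% of samples at or below it."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    rank = max(1, math.ceil(p / 100 * len(ordered)))
    return ordered[rank - 1]

def tokens_per_second(total_tokens, wall_seconds):
    """Aggregate throughput over a measurement window."""
    return total_tokens / wall_seconds

# Hypothetical per-request latencies in milliseconds (made-up numbers).
latencies_ms = [120, 135, 128, 140, 131, 125, 900, 133, 129, 138]

p50 = percentile(latencies_ms, 50)   # 131: the typical request
p99 = percentile(latencies_ms, 99)   # 900: a single slow request dominates the tail
print(p50, p99, tokens_per_second(2000, 4.0))
```

A single outlier barely moves P50 but sets P99, which is why the two are reported separately throughout this comparison.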

This article compares six runtimes that show up repeatedly in production stacks:

- vLLM

- TensorRT-LLM

- Hugging Face Text Generation Inference (TGI v3)

- LMDeploy

- SGLang

- DeepSpeed Inference / ZeRO Inference

1. vLLM

Design

vLLM is built around PagedAttention. Instead of storing each sequence's KV cache in one large contiguous buffer, it partitions the KV cache into fixed-size blocks and uses an indirection layer so that each sequence points to a list of blocks.

This gives:

- Very low KV fragmentation (reported <4% waste vs 60-80% in naïve allocators)

- High GPU utilization with continuous batching

- Native support for prefix sharing and KV reuse at the block level

Recent versions add KV quantization (FP8) and integrate FlashAttention-style kernels.
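
The block-table idea can be sketched in a few lines. This is a toy allocator under stated assumptions, not vLLM's implementation: the class name, the block size of 16, and the rule that only full blocks are shared on a fork are all choices made for this illustration.

```python
BLOCK_SIZE = 16  # tokens per KV block (illustrative value)

class PagedKVAllocator:
    """Toy allocator: each sequence owns a block table (list of physical block ids)."""

    def __init__(self, num_blocks):
        self.free = list(range(num_blocks))
        self.refcount = {}       # physical block id -> number of sequences using it
        self.block_tables = {}   # sequence id -> list of physical block ids
        self.lengths = {}        # sequence id -> number of tokens stored

    def append_token(self, seq_id):
        table = self.block_tables.setdefault(seq_id, [])
        length = self.lengths.get(seq_id, 0)
        if length % BLOCK_SIZE == 0:   # no block yet, or the last block is full
            if not self.free:
                raise MemoryError("out of KV blocks")
            block = self.free.pop()
            self.refcount[block] = 1
            table.append(block)
        self.lengths[seq_id] = length + 1

    def fork(self, parent_id, child_id):
        """Prefix sharing: the child reuses the parent's full blocks without copying."""
        full = self.lengths[parent_id] // BLOCK_SIZE
        shared = self.block_tables[parent_id][:full]
        for block in shared:
            self.refcount[block] += 1
        self.block_tables[child_id] = list(shared)
        self.lengths[child_id] = full * BLOCK_SIZE

    def free_sequence(self, seq_id):
        for block in self.block_tables.pop(seq_id):
            self.refcount[block] -= 1
            if self.refcount[block] == 0:   # last user gone, block returns to the pool
                del self.refcount[block]
                self.free.append(block)
        del self.lengths[seq_id]

    def wasted_slots(self):
        """Unused token slots; only the last block of a sequence can be partly empty."""
        return sum(len(t) * BLOCK_SIZE - self.lengths[s]
                   for s, t in self.block_tables.items())

alloc = PagedKVAllocator(num_blocks=8)
for _ in range(40):
    alloc.append_token("a")   # 40 tokens occupy 3 blocks, leaving 8 slots unused
alloc.fork("a", "b")          # "b" points at the 2 full blocks of "a"
alloc.append_token("b")       # the first new token of "b" gets a private block
print(alloc.block_tables, alloc.wasted_slots())
```

Because waste is bounded by one partly filled block per sequence, fragmentation stays small regardless of how long sequences grow, which is the property the reported figures above describe.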

Performance

vLLM evaluation:

- vLLM achieves 14-24× higher throughput than Hugging Face Transformers and 2.2-3.5× higher than early TGI for LLaMA models on NVIDIA GPUs.

KV and memory behavior

- PagedAttention provides a KV layout that is both GPU friendly and fragmentation resistant.

- FP8 KV quantization reduces KV size and improves decode throughput when compute is not the bottleneck.
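
A back-of-the-envelope calculation shows why KV precision matters. The formula below is the standard size of a KV cache for a decoder-only transformer; the model dimensions (32 layers, 8 KV heads, head dimension 128) are illustrative assumptions rather than figures from any benchmark cited here.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, num_tokens, bytes_per_value):
    """Size of the KV cache: one key and one value vector per layer, KV head, and token."""
    return 2 * num_layers * num_kv_heads * head_dim * num_tokens * bytes_per_value

GIB = 1024 ** 3

# Illustrative model shape (assumed for this example).
layers, kv_heads, head_dim = 32, 8, 128

fp16 = kv_cache_bytes(layers, kv_heads, head_dim, num_tokens=8192, bytes_per_value=2)
fp8 = kv_cache_bytes(layers, kv_heads, head_dim, num_tokens=8192, bytes_per_value=1)

print(fp16 / GIB)  # 1.0 GiB for a single 8192-token sequence in FP16
print(fp8 / GIB)   # 0.5 GiB for the same sequence in FP8
```

Halving the bytes per value doubles the number of tokens that fit in the same memory, and it also halves the bytes that each decode step has to read.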

Where it fits

- The default high-performance engine when you need a general LLM serving backend with good throughput, good TTFT, and hardware flexibility.

2. TensorRT-LLM

Design

TensorRT-LLM is a compilation-based engine built on top of NVIDIA TensorRT. It generates fused kernels per model and shape, and exposes an executor API used by frameworks such as Triton.

Its KV subsystem is explicit and feature rich:

- Paged KV cache

- Quantized KV cache (INT8, FP8, with some combinations still evolving)

- Circular buffer KV cache

- KV cache reuse, including offloading KV to CPU and reusing it across prompts to reduce TTFT

NVIDIA reports that CPU-based KV reuse can reduce time to first token by up to 14× on H100, and by even more on GH200, in specific scenarios.
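
To illustrate what a circular buffer KV cache does, here is a generic ring-buffer sketch. It is not TensorRT-LLM code; the class name and window size are assumptions for this example. The point is that memory stays bounded because the oldest entries are overwritten once the window is full.

```python
class CircularKVBuffer:
    """Keeps KV entries for the most recent `window` tokens only."""

    def __init__(self, window):
        self.window = window
        self.slots = [None] * window
        self.count = 0   # total number of tokens ever written

    def append(self, kv_entry):
        # Overwrite the oldest slot once the buffer has wrapped around.
        self.slots[self.count % self.window] = kv_entry
        self.count += 1

    def visible(self):
        """Entries still held, ordered from oldest to newest."""
        if self.count <= self.window:
            return self.slots[:self.count]
        start = self.count % self.window
        return self.slots[start:] + self.slots[:start]

buf = CircularKVBuffer(window=4)
for token_position in range(6):
    buf.append(token_position)   # stand-in for the (key, value) tensors of that token

print(buf.visible())  # [2, 3, 4, 5]: positions 0 and 1 were overwritten
```

The tradeoff is that attention can only see tokens inside the window, so this layout suits sliding-window attention rather than full-context decoding.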

Performance

TensorRT-LLM is highly tunable, so results vary. Common patterns from public comparisons and vendor benchmarks:

- Very low single-request latency on NVIDIA GPUs when engines are compiled for the exact model and configuration.

- At moderate concurrency, it can be tuned either for low TTFT or for high throughput; at very high concurrency, throughput-optimized profiles push P99 up because of aggressive batching.

KV and memory behavior

- Paged KV plus quantized KV gives strong control over memory use and bandwidth.

- Executor and memory APIs let you design cache-aware routing policies at the application layer.
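
One cache-aware policy an application could build is routing each request to the worker most likely to already hold its prefix. The sketch below is a hypothetical application-level router written for this article; it does not use or imitate any TensorRT-LLM API, and the worker names and token ids are made up.

```python
def shared_prefix_len(a, b):
    """Number of leading tokens that two token sequences have in common."""
    n = 0
    for x, y in zip(a, b):
        if x != y:
            break
        n += 1
    return n

def pick_worker(prompt_tokens, worker_cached_prompts):
    """Return (worker id, matched length) for the worker with the longest cached prefix."""
    best_worker, best_len = None, -1
    for worker_id, cached in worker_cached_prompts.items():
        longest = max((shared_prefix_len(prompt_tokens, c) for c in cached), default=0)
        if longest > best_len:   # ties keep the first worker seen
            best_worker, best_len = worker_id, longest
    return best_worker, best_len

# Hypothetical view of which prompts each worker has recently served.
workers = {
    "w0": [[1, 2, 3, 4]],
    "w1": [[1, 2, 9]],
    "w2": [],
}

print(pick_worker([1, 2, 3, 7], workers))  # ('w0', 3)
```

A production router would also weigh queue depth and memory pressure, since always chasing the best cache hit can overload a single worker.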

Where it fits

- Latency-critical workloads and NVIDIA-only environments, where teams can invest in engine builds and per-model tuning.

3. Hugging Face TGI v3

Design

Text Generation Inference (TGI) is a server-focused stack with:

- A Rust-based HTTP and gRPC server

- Continuous batching, streaming, and safety hooks

- Backends for PyTorch and TensorRT, and tight Hugging Face Hub integration

TGI v3 adds a new long-context pipeline:

- Chunked prefill for long inputs

- Prefix KV caching, so long conversation histories are not recomputed on each request
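
The two long-context ideas above can be sketched together. This is a simplified model, not TGI's code: the function and class names, the per-conversation cache keyed by an id, and the chunk size of 256 are assumptions chosen for illustration.

```python
def chunk_prefill(tokens, chunk_size):
    """Split a long prompt into fixed-size pieces that are prefilled one at a time."""
    return [tokens[i:i + chunk_size] for i in range(0, len(tokens), chunk_size)]

class PrefixCache:
    """Remembers, per conversation, the token sequence whose KV is already computed."""

    def __init__(self):
        self.cached = {}   # conversation id -> tokens with KV already in cache

    def tokens_to_prefill(self, conversation_id, tokens):
        old = self.cached.get(conversation_id, [])
        keep = 0
        while keep < min(len(old), len(tokens)) and old[keep] == tokens[keep]:
            keep += 1      # this token's KV can be reused as is
        self.cached[conversation_id] = list(tokens)
        return tokens[keep:]

cache = PrefixCache()

turn1 = list(range(1000))                         # first request: 1000 prompt tokens
todo1 = cache.tokens_to_prefill("chat-42", turn1)
print(len(todo1), [len(c) for c in chunk_prefill(todo1, 256)])

turn2 = turn1 + [5000, 5001, 5002]                # next turn appends 3 new tokens
todo2 = cache.tokens_to_prefill("chat-42", turn2)
print(todo2)                                      # only the 3 new tokens need prefill
```

Chunking bounds the memory and latency spike of a single long prefill, while prefix reuse removes most of the prefill work on later turns of the same conversation.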

Performance

For conventional prompts, recent third-party work shows:

- vLLM often edges out TGI on raw tokens per second at high concurrency because of PagedAttention, but the difference is not large on many setups.

- TGI v3 processes around 3× more tokens and is up to 13× faster than vLLM on long prompts, under a setup with very long histories and prefix caching enabled.

Latency profile:

- P50 for short and mid-length prompts is similar to vLLM when both are tuned with continuous batching.

- For long chat histories, prefill dominates in naïve pipelines; TGI v3's reuse of earlier tokens gives a large win in TTFT and P50.

KV and memory behavior

- TGI uses KV caching with paged-attention-style kernels and reduces its memory footprint through chunked prefill.

- It integrates quantization through bitsandbytes and GPTQ, and runs across several hardware backends.

Where it fits

- Production stacks already built on Hugging Face, especially chat-style workloads with long histories, where prefix caching gives large real-world gains.

4. LMDeploy

Design

LMDeploy is a toolkit for compression and deployment from the InternLM ecosystem. It exposes two engines:

- TurboMind: high-performance CUDA kernels for NVIDIA GPUs

- PyTorch engine: a flexible fallback

Key runtime features:

- Persistent, continuous batching

- Blocked KV cache with a manager for allocation and reuse

- Dynamic split and fuse for attention blocks

- Tensor parallelism

- Weight-only and KV quantization (including AWQ and online INT8 / INT4 KV quantization)

LMDeploy reports up to 1.8× higher request throughput than vLLM, attributing this to persistent batching, blocked KV, and optimized kernels.
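
A small simulation shows why continuous batching helps when output lengths vary. It is a deliberately simplified model and not LMDeploy's scheduler: it counts decode steps only, ignores prefill cost, and assumes every request needs at least one output token. The function names and the request mix are made up for this sketch.

```python
from collections import deque

def static_batching_steps(output_lengths, batch_size):
    """Each batch runs until its longest sequence finishes; short ones wait idle."""
    steps = 0
    for i in range(0, len(output_lengths), batch_size):
        steps += max(output_lengths[i:i + batch_size])
    return steps

def continuous_batching_steps(output_lengths, batch_size):
    """A finished sequence frees its slot immediately for the next waiting request."""
    waiting = deque(output_lengths)
    running = []   # remaining tokens for each sequence currently in the batch
    steps = 0
    while waiting or running:
        while waiting and len(running) < batch_size:
            running.append(waiting.popleft())
        steps += 1                                    # one decode step for all running sequences
        running = [r - 1 for r in running if r > 1]   # drop sequences that just finished
    return steps

# Hypothetical workload: two long generations mixed with short ones.
lengths = [8, 1, 1, 1, 8, 1, 1, 1]

print(static_batching_steps(lengths, batch_size=4))      # 16
print(continuous_batching_steps(lengths, batch_size=4))  # 9
```

In the static case the second long request cannot start until the first batch drains, while the continuous scheduler starts it as soon as a slot frees up after the first step.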

Performance

Evaluations show:

- For 4-bit Llama-style models on A100, LMDeploy can reach higher tokens per second than vLLM under comparable latency constraints, especially at high concurrency.

- It also reports that 4-bit inference is about 2.4× faster than FP16 for supported models.

Latency:

- Single-request TTFT is in the same ballpark as other optimized GPU engines when configured without extreme batch limits.

- Under heavy concurrency, persistent batching plus blocked KV lets LMDeploy sustain high throughput without a collapse in TTFT.

KV and memory behavior

- The blocked KV cache trades contiguous per-sequence buffers for a grid of KV chunks managed by the runtime, similar in spirit to vLLM's PagedAttention but with a different internal layout.

- Support for weight and KV quantization targets large models on memory-constrained GPUs.
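
As a rough picture of what INT8 KV quantization involves, the sketch below applies symmetric absmax quantization to a single vector. This is a generic textbook scheme assumed for illustration; the scheme LMDeploy actually uses may differ (for example in granularity or in using zero points), and the function names are hypothetical.

```python
def quantize_int8(values):
    """Symmetric absmax quantization: int8 codes plus one float scale per vector."""
    absmax = max(abs(v) for v in values)
    scale = absmax / 127 if absmax > 0 else 1.0
    codes = [max(-127, min(127, round(v / scale))) for v in values]
    return codes, scale

def dequantize_int8(codes, scale):
    """Recover approximate float values from the stored codes."""
    return [c * scale for c in codes]

# Made-up key vector for one token and one head.
key_vector = [0.5, -1.27, 0.03, 1.0]

codes, scale = quantize_int8(key_vector)
restored = dequantize_int8(codes, scale)

# Each value is stored in 1 byte instead of 2 (FP16), at the cost of a small rounding error.
max_error = max(abs(a - b) for a, b in zip(key_vector, restored))
print(codes, scale, max_error)
```

The rounding error per value is at most half the scale, so vectors with a few large outliers lose more precision, which is one reason real systems choose the quantization granularity carefully.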

Where it fits

- NVIDIA-centric deployments that want maximum throughput and are comfortable using TurboMind and LMDeploy-specific tooling.

5. SGLang

Design

SGLang is both:

- A DSL for building structured LLM programs such as agents, RAG workflows, and tool pipelines

- A runtime that implements RadixAttention, a KV reuse mechanism that shares prefixes using a radix tree structure rather than simple block hashes

RadixAttention:

- Stores KV for many requests in a prefix tree keyed by tokens

- Enables high KV hit rates when many calls share prefixes, such as few-shot prompts, multi-turn chat, or tool chains
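
The prefix-tree lookup can be sketched with a plain token trie. This is a simplification, not SGLang's data structure: a real radix tree also merges single-child chains and handles eviction, and the class names, token ids, and hit-rate definition here are assumptions for this example.

```python
class PrefixTreeNode:
    def __init__(self):
        self.children = {}   # token id -> PrefixTreeNode

class PrefixKVIndex:
    """Token-keyed prefix tree recording which prefixes already have KV computed."""

    def __init__(self):
        self.root = PrefixTreeNode()

    def match_len(self, tokens):
        """Length of the longest cached prefix of `tokens`."""
        node, n = self.root, 0
        for t in tokens:
            node = node.children.get(t)
            if node is None:
                break
            n += 1
        return n

    def insert(self, tokens):
        node = self.root
        for t in tokens:
            node = node.children.setdefault(t, PrefixTreeNode())

def hit_rate(requests):
    """Fraction of prompt tokens whose KV could be reused from earlier requests."""
    index = PrefixKVIndex()
    hits = total = 0
    for tokens in requests:
        hits += index.match_len(tokens)
        total += len(tokens)
        index.insert(tokens)
    return hits / total

# Hypothetical workload: four calls sharing an 8-token system prompt.
system = [1, 2, 3, 4, 5, 6, 7, 8]
requests = [system + [10], system + [11], system + [12], system + [10, 20]]

print(hit_rate(requests))  # 25 of 37 prompt tokens are cache hits
```

The longer the shared prefix is relative to the unique suffix, the closer the hit rate gets to 1, which matches the workloads where this design is reported to help most.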

Performance

Key insights:

- SGLang achieves up to 6.4× higher throughput and up to 3.7× lower latency than baseline systems such as vLLM, LMQL, and others on structured workloads.

- Improvements are largest when there is heavy prefix reuse, for example multi-turn chat or evaluation workloads with repeated context.

Reported KV cache hit rates range from roughly 50% to 99%, and cache-aware schedulers come close to the optimal hit rate on the measured benchmarks.

KV and memory behavior

- RadixAttention sits on top of paged-attention-style kernels and focuses on reuse rather than just allocation.

- SGLang integrates well with hierarchical context caching systems that move KV between GPU and CPU when sequences are long, although those systems are usually implemented as separate projects.

Where it fits

- Agentic systems, tool pipelines, and heavy RAG applications where many calls share large prompt prefixes and KV reuse matters at the application level.

6. DeepSpeed Inference / ZeRO Inference

Design

DeepSpeed provides two pieces relevant to inference:

- DeepSpeed Inference: optimized transformer kernels plus tensor and pipeline parallelism

- ZeRO Inference / ZeRO Offload: techniques that offload model weights, and in some setups the KV cache, to CPU or NVMe in order to run very large models on limited GPU memory

ZeRO Inference focuses on:

- Keeping few or no model weights resident on the GPU

- Streaming tensors from CPU or NVMe as needed

- Targeting throughput and model size rather than low latency

Performance

In the ZeRO Inference OPT 30B example on a V100 32GB:

- Full CPU offload reaches about 43 tokens per second

- Full NVMe offload reaches about 30 tokens per second

- Both are 1.3-2.4× faster than partial offload configurations, because full offload enables larger batch sizes

These numbers are small compared to GPU-resident LLM runtimes on A100 or H100, but they apply to a model that does not fit natively in 32GB.

A recent I/O characterization of DeepSpeed and FlexGen confirms that offload-based systems are dominated by small 128 KiB reads and that I/O behavior becomes the main bottleneck.
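
A simple bandwidth model helps explain why larger batches matter so much for offloaded inference. It assumes, as a deliberate simplification, that every decode step streams all model weights once over a single link and that nothing else limits speed. The link bandwidth below is a made-up number, so the results are not meant to reproduce the measured figures above.

```python
def decode_tokens_per_second(weight_bytes, link_bytes_per_second, batch_size):
    """Upper bound on throughput when each decode step must stream all weights once."""
    seconds_per_step = weight_bytes / link_bytes_per_second
    return batch_size / seconds_per_step   # every step yields one token per sequence

weight_bytes = 30e9 * 2   # a 30B-parameter model in FP16 is about 60 GB
link_bandwidth = 12e9     # hypothetical 12 GB/s effective CPU-to-GPU bandwidth

for batch_size in (8, 64):
    rate = decode_tokens_per_second(weight_bytes, link_bandwidth, batch_size)
    print(batch_size, rate)
```

Under this model the time per step is fixed by the weight transfer, so throughput scales linearly with batch size until compute or KV memory becomes the limit. That is consistent with full offload being faster because it frees GPU memory for larger batches.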

KV and memory behavior

- Model weights, and sometimes KV blocks, are offloaded to CPU or SSD to fit models beyond GPU capacity.

- TTFT and P99 are high compared to pure GPU engines, but the tradeoff is the ability to run very large models that otherwise would not fit.

Where it fits

- Offline or batch inference, or low-QPS services where model size matters more than latency and GPU count.

Comparison table

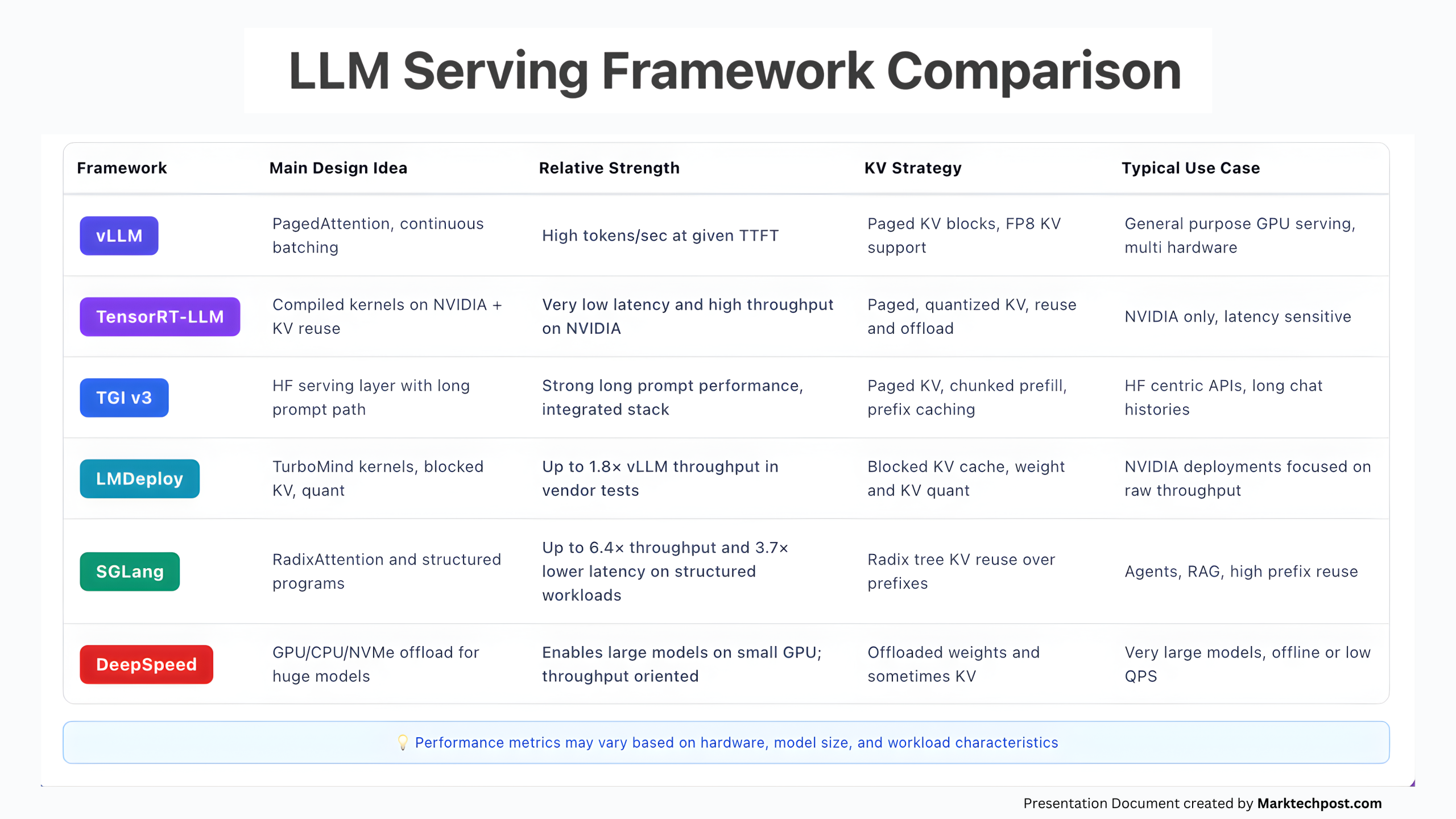

This table summarizes the main tradeoffs qualitatively:

| Runtime | Main design idea | Relative strength | KV strategy | Typical use case |
|---|---|---|---|---|
| vLLM | PagedAttention, continuous batching | High tokens per second at a given TTFT | Paged KV blocks, FP8 KV support | General-purpose GPU serving, multiple hardware targets |
| TensorRT-LLM | Compiled kernels on NVIDIA + KV reuse | Very low latency and high throughput on NVIDIA | Paged, quantized KV, reuse and offload | NVIDIA only, latency sensitive |
| TGI v3 | HF serving layer with long-prompt path | Strong long-prompt performance, integrated stack | Paged KV, chunked prefill, prefix caching | HF-centric APIs, long chat histories |
| LMDeploy | TurboMind kernels, blocked KV, quantization | Up to 1.8× vLLM throughput in vendor tests | Blocked KV cache, weight and KV quantization | NVIDIA deployments focused on raw throughput |
| SGLang | RadixAttention and structured programs | Up to 6.4× throughput and 3.7× lower latency on structured workloads | Radix tree KV reuse over prefixes | Agents, RAG, high prefix reuse |
| DeepSpeed | GPU, CPU, NVMe offload for huge models | Enables large models on small GPUs; throughput oriented | Offloaded weights and sometimes KV | Very large models, offline or low QPS |

Choosing a runtime in practice

For a production system, the choice tends to collapse into a few simple patterns:

- You want a strong default engine with minimal custom work: You can start with vLLM. It gives you good throughput, reasonable TTFT, and solid KV handling on common hardware.

- You are committed to NVIDIA and want fine-grained control over latency and KV: You can use TensorRT-LLM, likely behind Triton or TGI. Plan for model-specific engine builds and tuning.

- Your stack is already on Hugging Face and you care about long chats: You can use TGI v3. Its long-prompt pipeline and prefix caching are very effective for conversation-style traffic.

- You want maximum throughput per GPU with quantized models: You can use LMDeploy with TurboMind and blocked KV, especially for 4-bit Llama family models.

- You are building agents, tool chains, or heavy RAG systems: You can use SGLang and design prompts so that KV reuse through RadixAttention is high.

- You must run very large models on limited GPUs: You can use DeepSpeed Inference / ZeRO Inference, accept higher latency, and treat the GPU as a throughput engine with SSD in the loop.

Overall, all of these engines are converging on the same idea: the KV cache is the real bottleneck resource. The winners are the runtimes that treat KV as a first-class data structure to be paged, quantized, reused, and offloaded, not just a big tensor dropped into GPU memory.

Michal Sutter is a data science professional with a Master of Science in Data Science from the University of Padova. With a solid foundation in statistical analysis, machine learning, and data engineering, Michal excels at transforming complex datasets into actionable insights.
