Meta AI issued Mobilellm-R1: The model of the edge of less than 1b parameters and reaches 2x-5x function to increase some open AI models

Meta has released Mobilelm-R1The family of thinking models do not survive the models of the imaginary thoughts available now. The release includes the models from 140 to 950M parameters, focusing on the cost of mathematical calculations, codes, and scientific thoughts on a subline science.

Unlike normal dialogue models, MobileM-R1 is intended to be sent to the edge, which aims to bring the statutory accuracy while the efficient functional.

What Appitecture MobileCture Mobiliboboserm-R1?

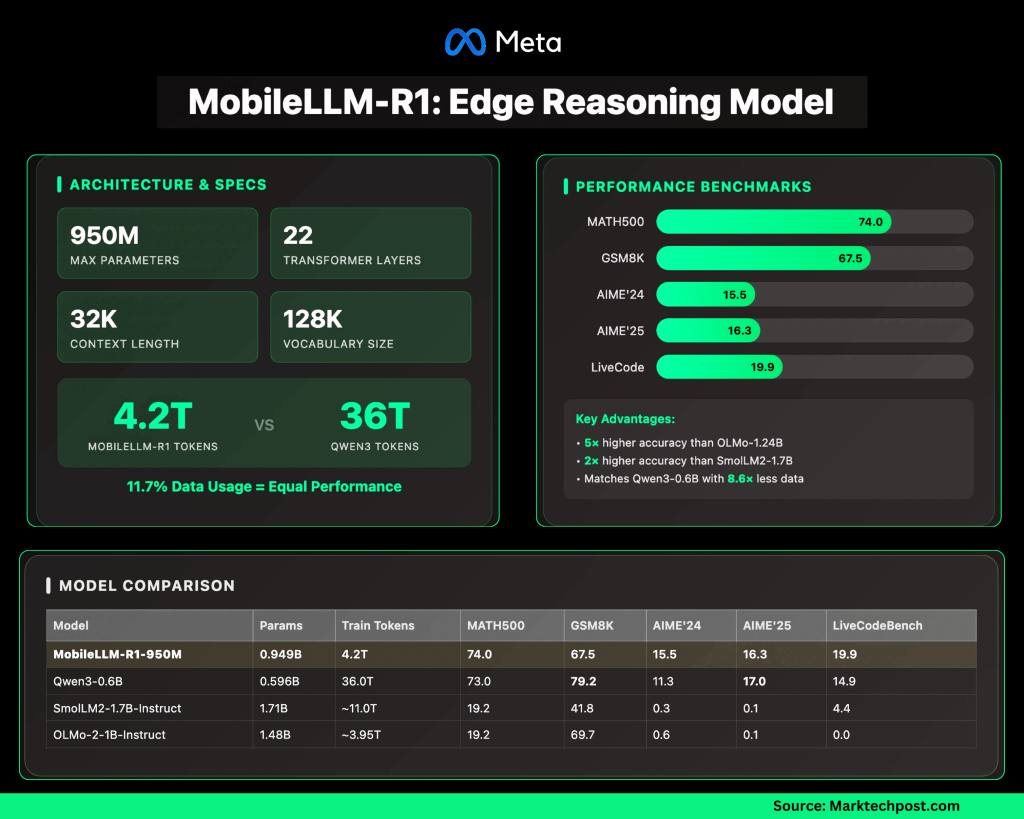

The largest model, Mobilelm-R1-950mIt includes a construction of several information:

- 22 TransformMer layers 24 heads have to give up with 6 heads collect kv heads.

- Estimates Differences: 1536; Hidden Dimensions: 6144.

- Collected Attention – Question (Gqa) Reduce compute and memory.

- Sharing a banned weight Cut a parameter count without heavy latency fines.

- Swighcu Activation Develop a small model representation.

- Boo Length: 4K of Base, 32K for trained models.

- 128k vocabulary By entering entries / output.

Emphasis for reducing computer needs and memory, making it easy to ship to pressed devices.

How does training work?

MobileBM-R1 is noteworthy for data performance:

- Trained ~ 4.2T tokens Overall.

- By comparison, Qwen3's 0.6b The model was trained 36T tokens.

- This means only for Softll-R1 use ≈11.7% of data to access or pass QWEN3's accuracy.

- Posted training training, coding, and consultation details.

This applies to the lower training costs and resources requirements.

How do you get against other open models?

On benches, Mobilell-R1-950m show important benefits:

- Math (Matt500 Dataset): ~5 × High Accuracy than OLMO-1.24B once ~2 × the highest accuracy than Smollm2-1.7b.

- Consultation and Codes (GSM8K, AIME, LIVECEBERCH): Parallels or passing QWEN3-0.6Bwithout using too few tokens.

The model brings the results associated with large buildings while keeping a small footprint.

Does MobileM-R1 fall?

The model focus creates limitations:

- Strong in Statistics, Code, and Formal Reasoning.

- Weak in General Conversations, Performance: and creative activities compared to large llms.

- Distributed underneath NC License (non-commercial)which prevents the use in production settings.

- It raises long situations (32K) KV-cache and memory requirements to Incofer.

How MobileM-R1 matches QWen3, Smollm2, and Olmo?

Summary of operation (trained models):

| Statue | Parali | Train tokens (T) | Math500 | Gsm8k | AIME'24 | AIME'25 | LiveCodebelch |

|---|---|---|---|---|---|---|---|

| Mobilelm-R1-950m | 0.949b | 4.2 | 74.0 | 67.5 | 15.5 | 16.3 | 19.9 |

| QWEN3-0.6B | 0.596b | 36.0 | 73.0 | 79.2 | 11.3 | 17.0 | 14.9 |

| Smollm2-1.7b-ordered | 1.71B | ~ 11.0 | 19.2 | 41.8 | 0.3 | 0.1 | 4.4 |

| OLMO-2-1B-Teaching | 1.48B | ~ 3.95 | 19.2 | 69.7 | 0.6 | 0.1 | 0.0 |

Important recognition:

- R1-950m matches QWEN3-0.6B In Math (74.0 vs 73.0) while required ~8.6 × Few of Token.

- Gaints VS performance Smollm2 including Mag They are beautiful in thoughtful works.

- QWEN3 keeps edge on GSM8K, but the difference is thin compared to good performance benefit.

Summary

Mobilepa's MobileSmm-R1 emphasizes the practice of small models, which are prepared by the domain that brings competable thinking without major training budgets. By finding 2 × × × × of the training sector, it does not indicate that the next rate – especially math statistics, codes, and charges of use in the edge devices.

Look The model in the kisses of face. Feel free to look our GITHUB page for tutorials, codes and letters of writing. Also, feel free to follow it Sane and don't forget to join ours 100K + ml subreddit Then sign up for Our newspaper.

Asphazzaq is a Markteach Media Inc. According to a View Business and Developer, Asifi is committed to integrating a good social intelligence. His latest attempt is launched by the launch of the chemistrylife plan for an intelligence, MarktechPost, a devastating intimate practice of a machine learning and deep learning issues that are clearly and easily understood. The platform is adhering to more than two million moon visits, indicating its popularity between the audience.